Unraveling The Mysteries Of Hallucinations In Large Language Models

Early models were highly prone to hallucinations. The problem has since improved with the introduction of various mitigation strategies: knowledge probing techniques and training the model to use web search tools have both proven effective in mitigating it. We delve into the world of AI with a focused examination of hallucinations in LLMs, exploring strategies and methods aimed at reducing their effects and improving the accuracy of language generation.
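As a rough illustration of how knowledge probing and a search fallback might fit together, here is a minimal sketch. It assumes one simple form of probing, asking the same question several times and measuring how often the answers agree, which is only one of several possible approaches and is not taken from the works excerpted here. The names `probe_knowledge` and `answer_with_fallback`, the `ask` and `search` callables, and the 0.8 threshold are all hypothetical placeholders for whatever model and search tool you actually use.

```python
from collections import Counter
from itertools import cycle
from typing import Callable, Dict, Union


def probe_knowledge(
    ask: Callable[[str], str],
    question: str,
    n_samples: int = 5,
    min_agreement: float = 0.8,
) -> Dict[str, Union[str, float, bool]]:
    """Sample the model n_samples times and report how consistent it is.

    `ask` is a hypothetical stand-in for a sampled model call. Low agreement
    is treated here as a hint that the model may not know the answer.
    """
    if n_samples < 1:
        raise ValueError("n_samples must be at least 1")
    # Normalize lightly so trivial formatting differences do not count.
    answers = [ask(question).strip().lower() for _ in range(n_samples)]
    top_answer, count = Counter(answers).most_common(1)[0]
    agreement = count / n_samples
    return {
        "answer": top_answer,
        "agreement": agreement,
        "known": agreement >= min_agreement,
    }


def answer_with_fallback(
    ask: Callable[[str], str],
    search: Callable[[str], str],
    question: str,
) -> str:
    """Answer from the model if the probe looks confident, else use search."""
    probe = probe_knowledge(ask, question)
    if probe["known"]:
        return str(probe["answer"])
    # `search` is a hypothetical stand-in for a web search tool.
    return search(question)


# Stub "models" for demonstration only: one consistent, one inconsistent.
def consistent_model(question: str) -> str:
    return "Paris"


_varying = cycle(["1912", "1915", "1921", "1912", "1930"])


def inconsistent_model(question: str) -> str:
    return next(_varying)


def stub_search(question: str) -> str:
    return "answer retrieved from search"


confident = probe_knowledge(consistent_model, "What is the capital of France?")
unsure = probe_knowledge(inconsistent_model, "When was the town hall built?")
```

Exact string matching is a deliberately crude agreement measure; free-form answers would need some semantic comparison, and high agreement alone does not guarantee that an answer is correct.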

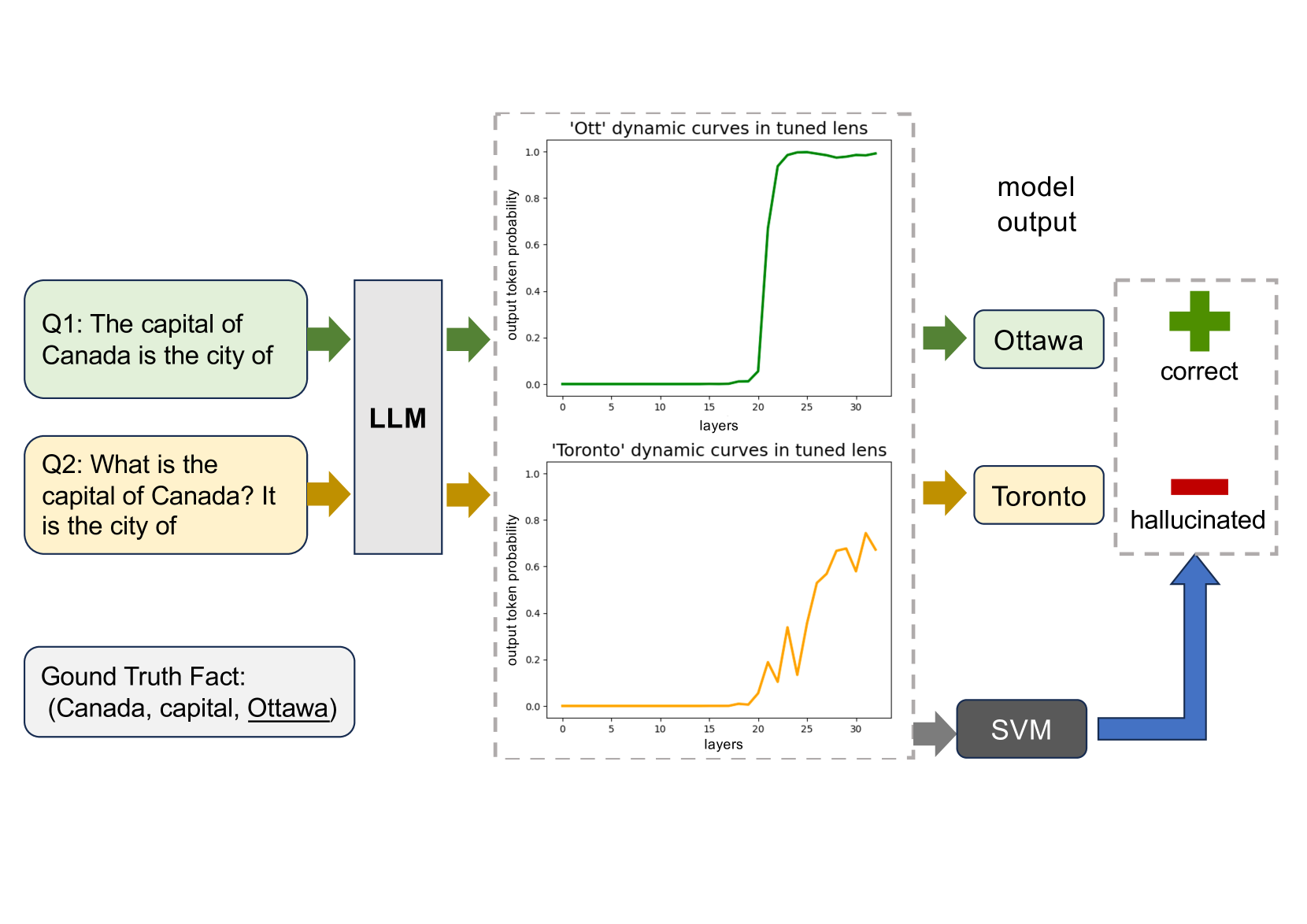

Pollmgraph Unraveling Hallucinations In Large Language Models Via

Hallucinations undermine the reliability and trustworthiness of LLMs, especially in domains requiring factual accuracy. This survey provides a comprehensive review of research on hallucination in LLMs, with a focus on causes, detection, and mitigation.

Our study sheds light on why LLMs hallucinate about facts they already know and, more importantly, on how to accurately predict when they are hallucinating.

This blog explores hallucinations in large language models (LLMs), examining their mechanisms, impacts, and mitigation strategies. It delves into advanced detection methods and corrective approaches, highlighting the challenges and opportunities in enhancing the reliability and accuracy of these AI systems.

This review systematically maps research on hallucinations in large language models using a descriptive scheme that links model outputs to four system architect.

In this work, we present a comprehensive survey and empirical analysis of hallucination attribution in LLMs, introducing a novel framework to determine whether a given hallucination stems from suboptimal prompting or from the model's intrinsic behavior.

Abstract: Large language models (LLMs) have shown exceptional capabilities in natural language processing (NLP) tasks. However, their tendency to generate inaccurate or fabricated information (commonly referred to as hallucinations) poses serious challenges to reliability and user trust.

Unraveling Large Language Model Hallucinations Towards Data Science
