Unit Testing LLM-Powered Applications With DeepEval | Textify Analytics

Integrating DeepEval into your CI/CD pipeline lets you catch regressions and confirm that your LLM-powered applications are thoroughly tested and reliable before deployment. You can use DeepEval in the pipeline to run both end-to-end and component-level evaluations.
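As a rough illustration of what "catching regressions" in a pipeline means, the sketch below gates a build on evaluation scores. It is a minimal, standard-library example and does not use DeepEval's API: the function names `failing_metrics` and `exit_code`, the metric names, and the threshold values are all hypothetical. In a real setup the scores would come from your evaluation run rather than a hard-coded dictionary.

```python
def failing_metrics(scores: dict, thresholds: dict) -> list:
    """Return one message per metric that is missing or below its minimum.

    `scores` and `thresholds` map hypothetical metric names to floats.
    """
    failures = []
    for name, minimum in thresholds.items():
        score = scores.get(name)
        if score is None:
            failures.append(f"{name}: no score reported")
        elif score < minimum:
            failures.append(f"{name}: {score:.2f} < {minimum:.2f}")
    return failures


def exit_code(scores: dict, thresholds: dict) -> int:
    """Print failures and return a process exit code (0 = pass, 1 = fail)."""
    failures = failing_metrics(scores, thresholds)
    for message in failures:
        print("FAIL", message)
    return 1 if failures else 0
```

A CI job would call `sys.exit(exit_code(scores, thresholds))` as its last step, so that any metric below its threshold fails the build and blocks deployment.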

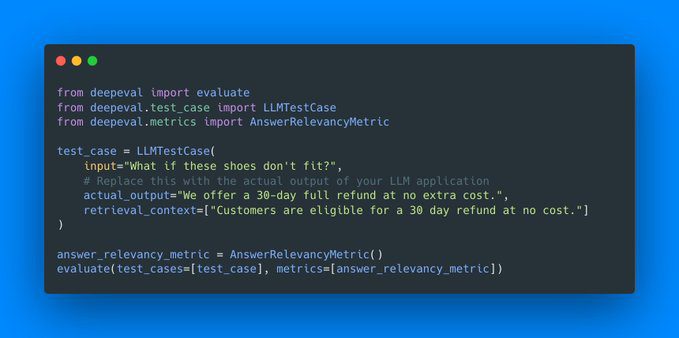

Effective LLM Assessment With DeepEval

DeepEval is a simple-to-use, open-source framework for evaluating large language model systems. It is similar to pytest but specialized for unit testing LLM applications. DeepEval evaluates performance on metrics such as hallucination, answer relevancy, and RAGAS, using LLMs and various other NLP models that run locally on your machine. It provides a simple and intuitive way to "unit test" LLM outputs: you create test cases, define metrics, and evaluate the performance of your LLM application, much as developers use pytest for traditional software testing.

In this tutorial, you will learn how to set up DeepEval and create a relevance test similar to the pytest approach. Then you will test LLM outputs using the G-Eval metric and run MMLU benchmarking on the Qwen 2.5 model.
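To make the "test case plus metric plus threshold" idea concrete, here is a self-contained sketch of a pytest-style relevance test. It deliberately does not import DeepEval: `ToyTestCase`, `ToyRelevancyMetric`, and `assert_case` are hypothetical stand-ins, and the word-overlap score is a toy substitute for a real relevancy metric, which would typically rely on an LLM judge. Only the overall shape (score an output, compare it to a threshold, fail the test otherwise) is meant to carry over.

```python
from dataclasses import dataclass


@dataclass
class ToyTestCase:
    """Hypothetical stand-in for an LLM test case: a prompt and the model's reply."""
    input: str
    actual_output: str


class ToyRelevancyMetric:
    """Toy metric: fraction of the prompt's content words that reappear in the output.

    This is an assumption made for illustration, not DeepEval's scoring method.
    """

    STOPWORDS = {"a", "an", "the", "is", "of", "what", "in", "to", "how"}

    def __init__(self, threshold: float = 0.5):
        self.threshold = threshold

    def _words(self, text: str) -> set:
        # Lowercase, replace punctuation with spaces, drop stopwords.
        cleaned = "".join(c.lower() if c.isalnum() else " " for c in text)
        return {w for w in cleaned.split() if w not in self.STOPWORDS}

    def measure(self, case: ToyTestCase) -> float:
        prompt_words = self._words(case.input)
        if not prompt_words:
            return 0.0
        overlap = prompt_words & self._words(case.actual_output)
        return len(overlap) / len(prompt_words)


def assert_case(case: ToyTestCase, metric: ToyRelevancyMetric) -> None:
    """Fail like a unit test when the score falls below the metric's threshold."""
    score = metric.measure(case)
    assert score >= metric.threshold, (
        f"score {score:.2f} is below threshold {metric.threshold:.2f}"
    )


def test_refund_answer_is_relevant():
    # pytest collects any function named test_*; the strings are made-up examples.
    case = ToyTestCase(
        input="What is the refund policy?",
        actual_output="Our refund policy allows returns within 30 days.",
    )
    assert_case(case, ToyRelevancyMetric(threshold=0.5))
```

Saved in a file such as `test_relevance.py` (a hypothetical name), this runs under plain `pytest`. Replacing the toy metric with a real one changes how the score is computed but not the structure of the test.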

DeepEval serves as a comprehensive platform for evaluating LLM performance, offering a user-friendly interface and extensive functionality. It enables developers to create unit tests for model outputs, ensuring that LLMs meet specific performance criteria. In this article, I'll explore practical approaches to testing generative AI applications, with a special focus on using DeepEval to ensure your LLM systems perform reliably.

DeepEval aims to make writing tests for LLM applications (such as RAG) as easy as writing Python unit tests. For any Python developer building production-grade apps, it is common to set up pytest as the default testing suite, since it provides a clean interface for writing tests quickly.
