Unit Testing LLM Applications With Stubidity

Unit Testing LLM-Powered Applications With DeepEval Textify Analytics

Our unit test suite uses around 40k tokens and is run roughly 10 times a day, so compared to invoking LLM APIs, Stubidity is saving us about $12 a day. That's in addition to all the engineering hours and morale saved from troubleshooting flaky LLM tests.

GEM was evaluated on three established benchmarks across Python, Java, and C, using multiple state-of-the-art LLMs, and was compared with the automated testing tool Pynguin. Experimental results reveal a persistent gap between coverage and mutation score in baseline LLM-generated tests.
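
The savings figure quoted above can be sanity-checked with a few lines of arithmetic. The sketch below only rearranges the three numbers from the text (40k tokens per run, 10 runs a day, $12 a day). The per-token price it prints is implied by those numbers and is not stated in the source, and the function name is made up for illustration.

```python
# Figures taken from the text above.
TOKENS_PER_RUN = 40_000
RUNS_PER_DAY = 10
SAVINGS_PER_DAY_USD = 12.0


def implied_price_per_million(tokens_per_run: int, runs_per_day: int,
                              savings_per_day: float) -> float:
    """Blended USD price per million tokens implied by a daily saving."""
    tokens_per_day = tokens_per_run * runs_per_day
    return savings_per_day / tokens_per_day * 1_000_000


if __name__ == "__main__":
    tokens_per_day = TOKENS_PER_RUN * RUNS_PER_DAY
    price = implied_price_per_million(
        TOKENS_PER_RUN, RUNS_PER_DAY, SAVINGS_PER_DAY_USD
    )
    print(f"tokens per day: {tokens_per_day}")
    print(f"implied price per million tokens: ${price:.2f}")
```

In other words, the suite would consume about 400k tokens a day against a live API, and $12 a day corresponds to a blended rate of roughly $30 per million tokens.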

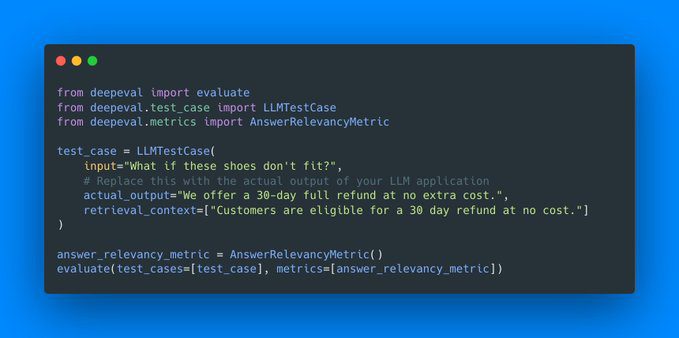

DeepEval, the LLM evaluation framework used by some of the world's leading AI companies, enables you to build reliable evaluation pipelines to test any AI system. It is a simple-to-use, open-source framework for evaluating large language model systems, similar to pytest but specialized for unit testing LLM apps. DeepEval incorporates the latest research to run evals via metrics such as G-Eval, task completion, and answer relevancy.

We first categorize existing unit testing tasks that benefit from LLMs, e.g., test generation and oracle generation. We then discuss several critical aspects of integrating LLMs into unit testing research, including model usage, adaptation strategies, and hybrid approaches.

A mock replaces the real LLM client in your test environment with a predictable stand-in. This allows you to write fast, deterministic, and cost-free unit tests that verify your application's logic without ever hitting a live API. The testing module provides a MockLLM class designed for this purpose.
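
The mocking idea described above can be sketched in plain Python. This is a minimal illustration, not the API of any particular library: the `MockLLM` class here is hand-rolled, and its `complete` method, the `summarize_ticket` function, and the prompt wording are all hypothetical names chosen for the example.

```python
from dataclasses import dataclass, field


@dataclass
class MockLLM:
    """Hypothetical stand-in for a real LLM client (not a library class)."""
    responses: dict = field(default_factory=dict)  # prompt -> canned reply
    default: str = "MOCK_RESPONSE"                 # reply for unknown prompts
    calls: list = field(default_factory=list)      # prompts seen, in order

    def complete(self, prompt: str) -> str:
        # Record the prompt, then return a canned reply. No network call.
        self.calls.append(prompt)
        return self.responses.get(prompt, self.default)


def summarize_ticket(llm, ticket_text: str) -> str:
    """Application logic under test: build a prompt, clean up the reply."""
    prompt = f"Summarize this support ticket in one sentence:\n{ticket_text}"
    summary = llm.complete(prompt).strip()
    return summary or "(no summary)"


def test_summary_is_stripped():
    ticket = "App crashes on login."
    prompt = f"Summarize this support ticket in one sentence:\n{ticket}"
    llm = MockLLM(responses={prompt: "  Login crash reported.  \n"})
    assert summarize_ticket(llm, ticket) == "Login crash reported."
    assert llm.calls == [prompt]  # exactly one call, with the expected prompt


def test_blank_reply_falls_back():
    llm = MockLLM(default="   ")
    assert summarize_ticket(llm, "anything") == "(no summary)"
```

Because `summarize_ticket` takes the client as a parameter, the test can pass the mock directly. The tests then check the application's own behavior (prompt construction, whitespace handling, fallback) rather than the model's output, which is what keeps them deterministic.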

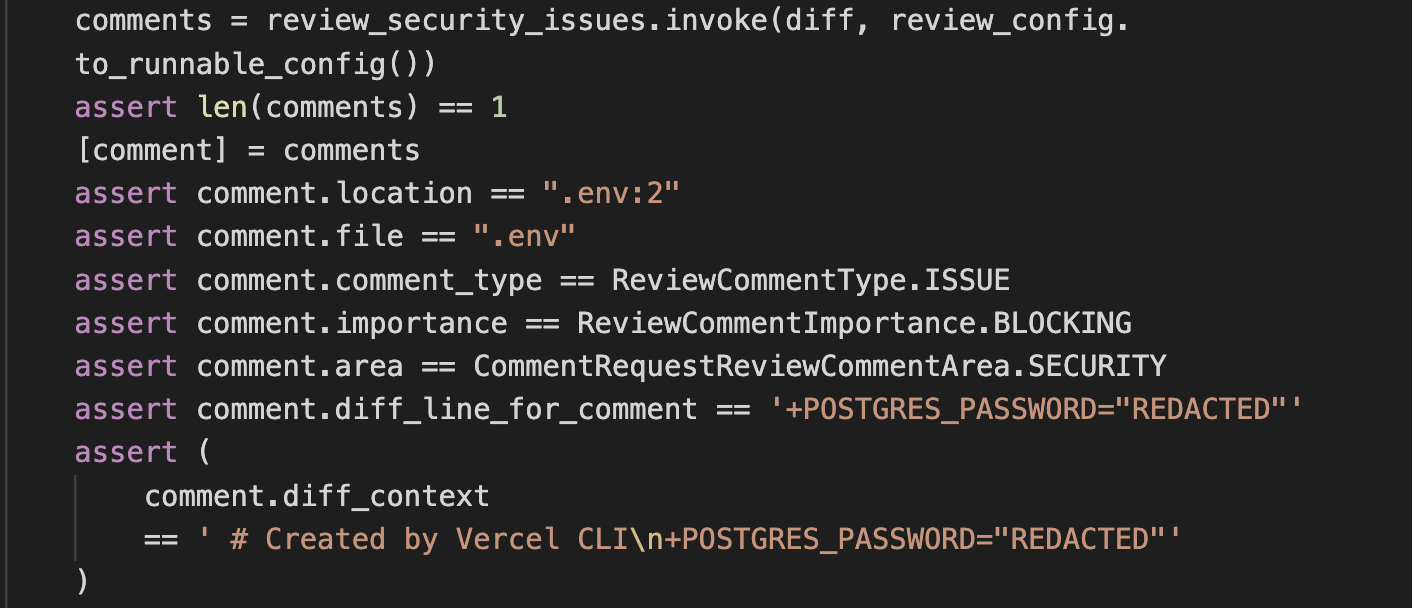

GitHub LLM Testing LLM4SoftwareTesting

Tutorial on unit testing LLM outputs, prompt behavior, and model responses with structured assertions.

TL;DR: LLM applications are in production at most engineering organizations, and most are undertested. Traditional pass-or-fail automation breaks against probabilistic outputs. This guide covers every major evaluation and observability tool in the 2026 landscape, including Langfuse, Giskard, Arize, and Confident AI, which most guides miss, and the five evaluation dimensions every test suite ...

Testing LLMs and generative AI systems is crucial for ensuring the quality and reliability of GenAI applications. Prompt testing provides a way to write meaningful tests for these systems.

We describe a generic pipeline that incorporates static analysis to guide LLMs in generating compilable and high-coverage test cases. We illustrate how the pipeline can be applied to different programming languages, specifically Java and Python, and to complex software requiring environment mocking.
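
One way to read "structured assertions" for probabilistic outputs is to assert on the shape and allowed values of a response instead of its exact wording. The sketch below assumes the model was asked to return JSON with a `sentiment` label and a `confidence` score. That schema, the allowed labels, and the function name are illustrative assumptions, not something the sources above specify.

```python
import json

# Hypothetical response schema, chosen for illustration only.
REQUIRED_KEYS = {"sentiment", "confidence"}
ALLOWED_SENTIMENTS = {"positive", "negative", "neutral"}


def check_sentiment_response(raw: str) -> list:
    """Return a list of problems found in a raw model reply (empty = pass)."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [f"not valid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return ["top-level value is not a JSON object"]

    problems = []
    missing = REQUIRED_KEYS - data.keys()
    if missing:
        problems.append(f"missing keys: {sorted(missing)}")

    if "sentiment" in data:
        sentiment = data["sentiment"]
        if not isinstance(sentiment, str) or sentiment not in ALLOWED_SENTIMENTS:
            problems.append(f"unexpected sentiment: {sentiment!r}")

    if "confidence" in data:
        conf = data["confidence"]
        is_number = isinstance(conf, (int, float)) and not isinstance(conf, bool)
        if not is_number or not 0 <= conf <= 1:
            problems.append("confidence must be a number in [0, 1]")

    return problems


def test_reply_has_expected_structure():
    # Any wording variation passes as long as the structure holds.
    reply = '{"sentiment": "positive", "confidence": 0.91}'
    assert check_sentiment_response(reply) == []
```

Returning a list of problems rather than raising on the first one makes failures easier to diagnose when a reply breaks several rules at once.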

