Unit Testing for Natural Language LLMs: LMUnit Model

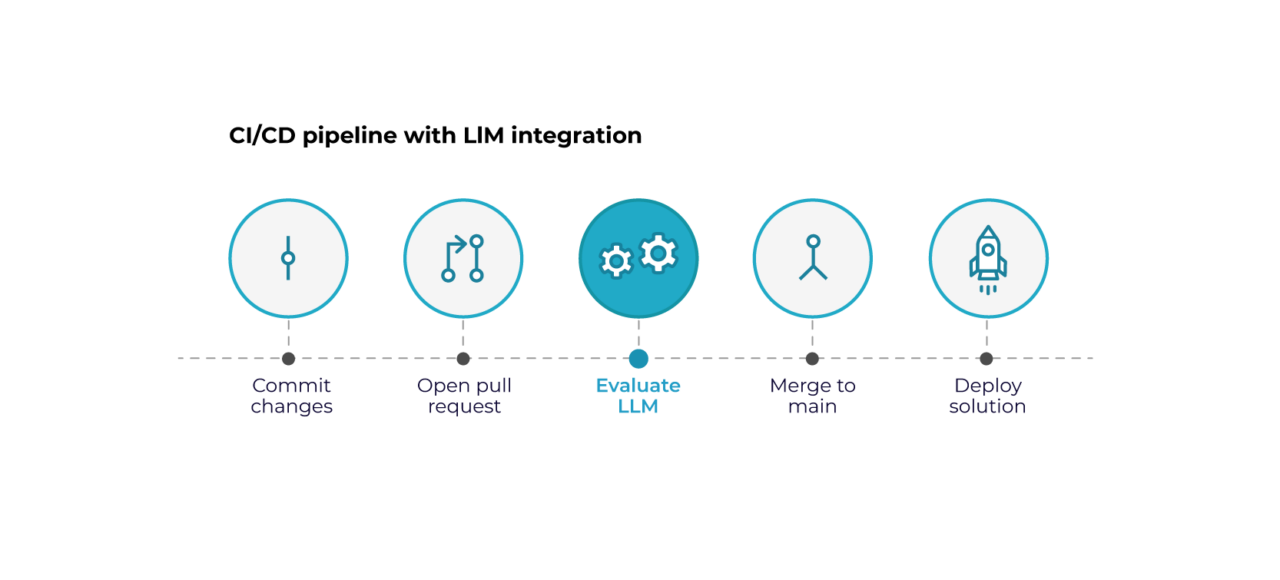

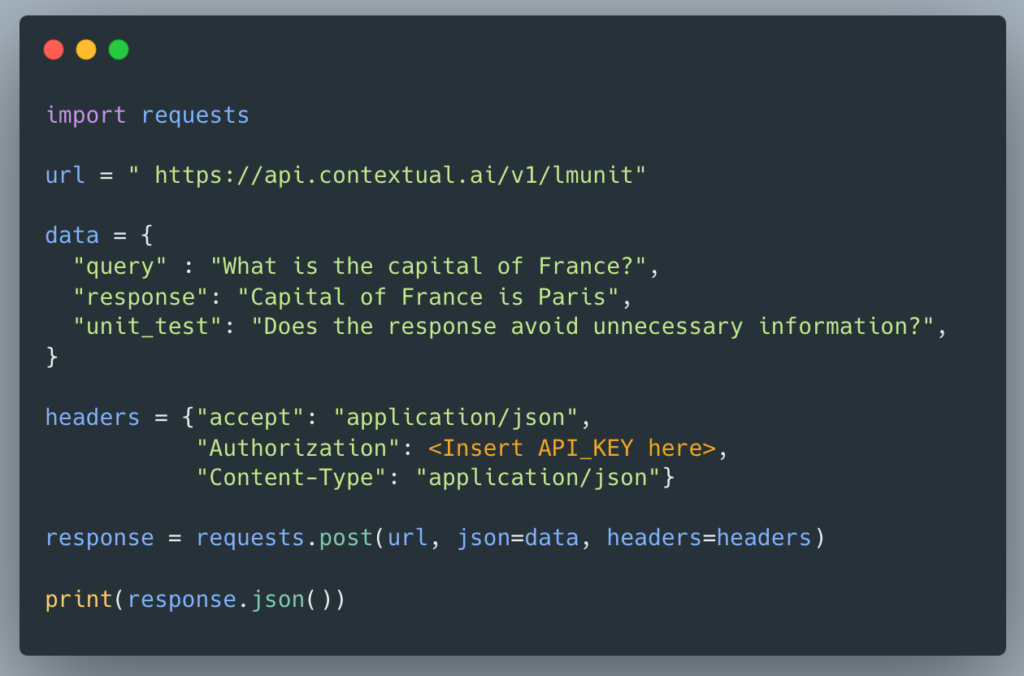

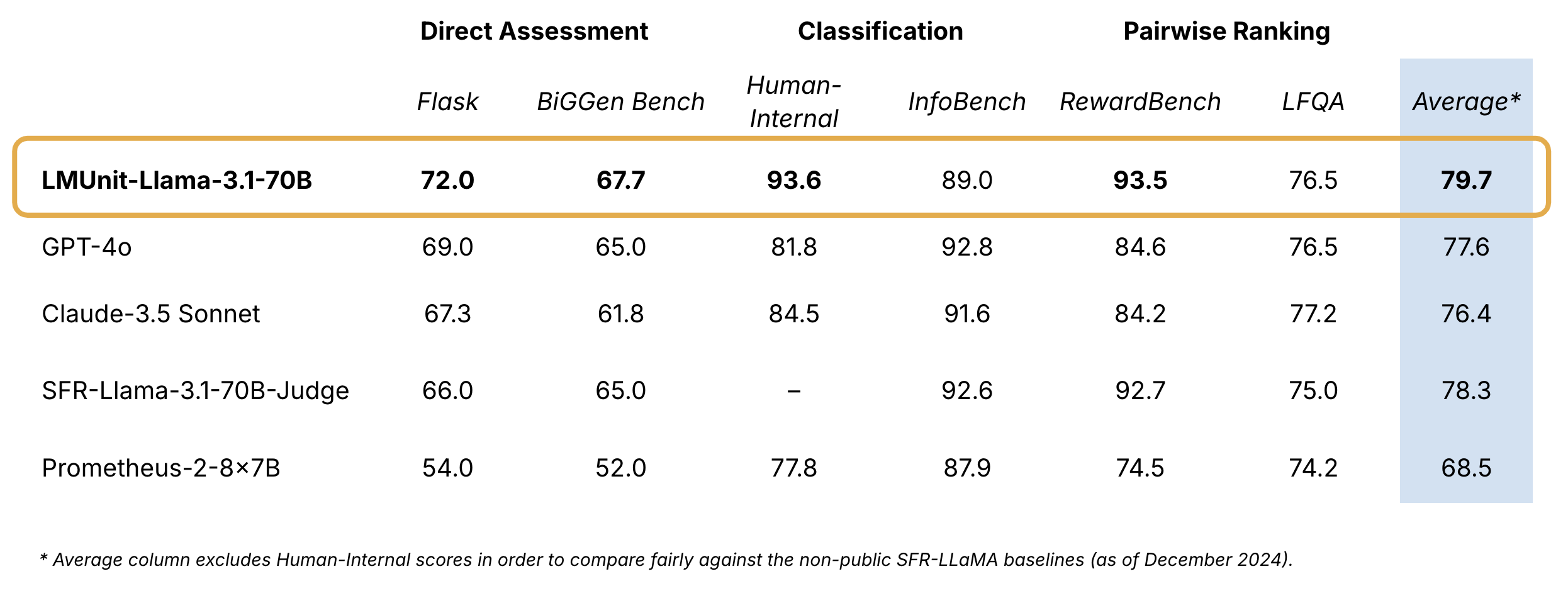

Testing LLMs: A Different Approach to QA Testing

Today, we’re excited to introduce natural language unit tests, a new paradigm that brings the rigor, familiarity, and accessibility of traditional software engineering unit testing to large language model (LLM) evaluation. The paradigm decomposes response quality into explicit, testable criteria and pairs them with a unified scoring model, LMUnit, which combines multi-objective training across preferences, direct ratings, and natural language rationales.
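To make the idea of decomposing response quality into explicit, testable criteria concrete, here is a minimal sketch in Python. It is not the LMUnit API: `toy_scorer`, `run_unit_tests`, the example criteria, and the 1 to 5 score range are all assumptions made for illustration. The scorer is a hand-written stub standing in for a real scoring model.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Callable

# Assumed interface: a scorer maps (query, response, unit_test) to a score.
# The 1.0-5.0 range is an assumption for this sketch.
Scorer = Callable[[str, str, str], float]


@dataclass
class UnitTestResult:
    unit_test: str
    score: float


def run_unit_tests(query: str, response: str,
                   unit_tests: list[str], scorer: Scorer) -> list[UnitTestResult]:
    """Score one response against each natural language criterion separately."""
    return [UnitTestResult(t, scorer(query, response, t)) for t in unit_tests]


def toy_scorer(query: str, response: str, unit_test: str) -> float:
    """Hypothetical stand-in for a scoring model (NOT LMUnit).

    Uses simple string checks keyed on the test text; a real scorer
    would be a model call.
    """
    checks = {
        "Does the response mention a refund window?": "refund" in response.lower(),
        "Is the response under 50 words?": len(response.split()) < 50,
        "Does the response cite a source?": "http" in response.lower(),
    }
    return 5.0 if checks.get(unit_test, False) else 1.0


query = "What is your refund policy?"
response = "You can request a refund within 30 days of purchase."
unit_tests = [
    "Does the response mention a refund window?",
    "Is the response under 50 words?",
    "Does the response cite a source?",
]

results = run_unit_tests(query, response, unit_tests, toy_scorer)
overall = mean(r.score for r in results)  # simple unweighted aggregate

for r in results:
    print(f"{r.score:.1f}  {r.unit_test}")
print(f"overall: {overall:.2f}")
```

Keeping one score per criterion, rather than a single overall judgment, shows which specific criterion a response fails. The unweighted mean is just one possible aggregate.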

Software Testing and Automation with Large Language Models (LLMs)

LMUnit: Fine-Grained Evaluation with Natural Language Unit Tests. This repository provides code for evaluating and reproducing the results reported in the LMUnit paper. LMUnit is a unified evaluation model: the same forward pass can be optimized with ratings, preferences, natural language rationales, and fine-grained unit testing data. Contextual AI introduced LMUnit as a framework for natural language unit testing aimed at evaluating large language models (LLMs). The initiative addresses current challenges in LLM evaluation, which many experts describe as inadequate for high-value enterprise applications.

Introducing LMUnit: Natural Language Unit Testing for LLM Evaluation

The notebook includes working code for: ⚡ batch evaluation of response quality, 🎯 polar plot visualizations of multi-dimensional scores, and 🔬 k-means clustering. The paper introduces natural language unit tests to evaluate language models more precisely by breaking down response quality into specific criteria. It pairs them with LMUnit, a scoring model trained with multiple objectives across preferences, direct ratings, and natural language rationales. Semantic Scholar extracted view of "LMUnit: Fine-Grained Evaluation with Natural Language Unit Tests" by Jon Saad-Falcon et al.
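The notebook's own code is not reproduced here. As a rough illustration of what clustering multi-dimensional scores can look like, the sketch below runs a plain k-means over per-response score vectors, with one dimension per unit test. The `kmeans` helper, the sample scores, and the deterministic initialization are assumptions for this example, not the notebook's implementation.

```python
import math


def kmeans(points: list[list[float]], k: int, iters: int = 20):
    """Plain k-means; the first k points seed the centroids (deterministic for the sketch)."""
    centroids = [list(p) for p in points[:k]]
    labels = [0] * len(points)
    for _ in range(iters):
        # Assignment step: attach each point to its nearest centroid.
        labels = [
            min(range(k), key=lambda c: math.dist(p, centroids[c]))
            for p in points
        ]
        # Update step: move each centroid to the mean of its members.
        for c in range(k):
            members = [p for p, label in zip(points, labels) if label == c]
            if members:
                centroids[c] = [sum(col) / len(members) for col in zip(*members)]
    return labels, centroids


# Hypothetical scores: one row per response, one column per unit test.
scores = [
    [5.0, 5.0, 1.0],
    [1.0, 2.0, 5.0],
    [4.5, 5.0, 1.5],
    [1.5, 1.0, 4.5],
]

labels, centroids = kmeans(scores, k=2)
print(labels)
print(centroids)
```

Responses that fall into the same cluster pass and fail similar criteria, so each centroid reads as a typical score profile for that group.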


