Stable Code 3B, Developed by Stability AI

How Stability AI's Stable Code Instruct 3B Outperforms Larger Models

Hardware: Stable Code 3B was trained on the Stability AI cluster across 256 NVIDIA A100 40GB GPUs (AWS P4d instances). The model is intended to be used as a foundational base model for application-specific fine-tuning. Developers must evaluate and fine-tune the model for safe performance in downstream applications.

Stable Code, an upgrade from Stable Code Alpha 3B, specializes in code completion and improves on its predecessor in efficiency and multi-language support. It runs on standard laptops, including those without a GPU, and adds capabilities such as fill-in-the-middle (FIM) completion and an expanded context size.
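The FIM capability mentioned above means the model is given the code before and after a gap and asked to produce the missing middle. A minimal sketch of how such a prompt is typically assembled follows. The sentinel strings `<fim_prefix>`, `<fim_suffix>`, and `<fim_middle>` are an assumption based on the common StarCoder-style convention; check the tokenizer's special tokens before relying on them. The helper names `build_fim_prompt` and `splice_completion` are hypothetical.

```python
# Sentinel tokens: assumed StarCoder-style names, not confirmed by this article.
FIM_PREFIX = "<fim_prefix>"
FIM_SUFFIX = "<fim_suffix>"
FIM_MIDDLE = "<fim_middle>"


def build_fim_prompt(prefix: str, suffix: str) -> str:
    """Arrange the code before and after the gap in prefix-suffix-middle order.

    The model is expected to continue generating after the final sentinel,
    producing the text that belongs between `prefix` and `suffix`.
    """
    return f"{FIM_PREFIX}{prefix}{FIM_SUFFIX}{suffix}{FIM_MIDDLE}"


def splice_completion(prefix: str, middle: str, suffix: str) -> str:
    """Put the generated middle back between the original prefix and suffix."""
    return prefix + middle + suffix


# Example: ask for the body between a function header and its final branch.
prefix = "def fib(n):\n"
suffix = "    else:\n        return fib(n - 2) + fib(n - 1)\n"
prompt = build_fim_prompt(prefix, suffix)
```

The resulting `prompt` string would be tokenized and passed to the model like any other completion prompt; only the ordering of the pieces is specific to FIM.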


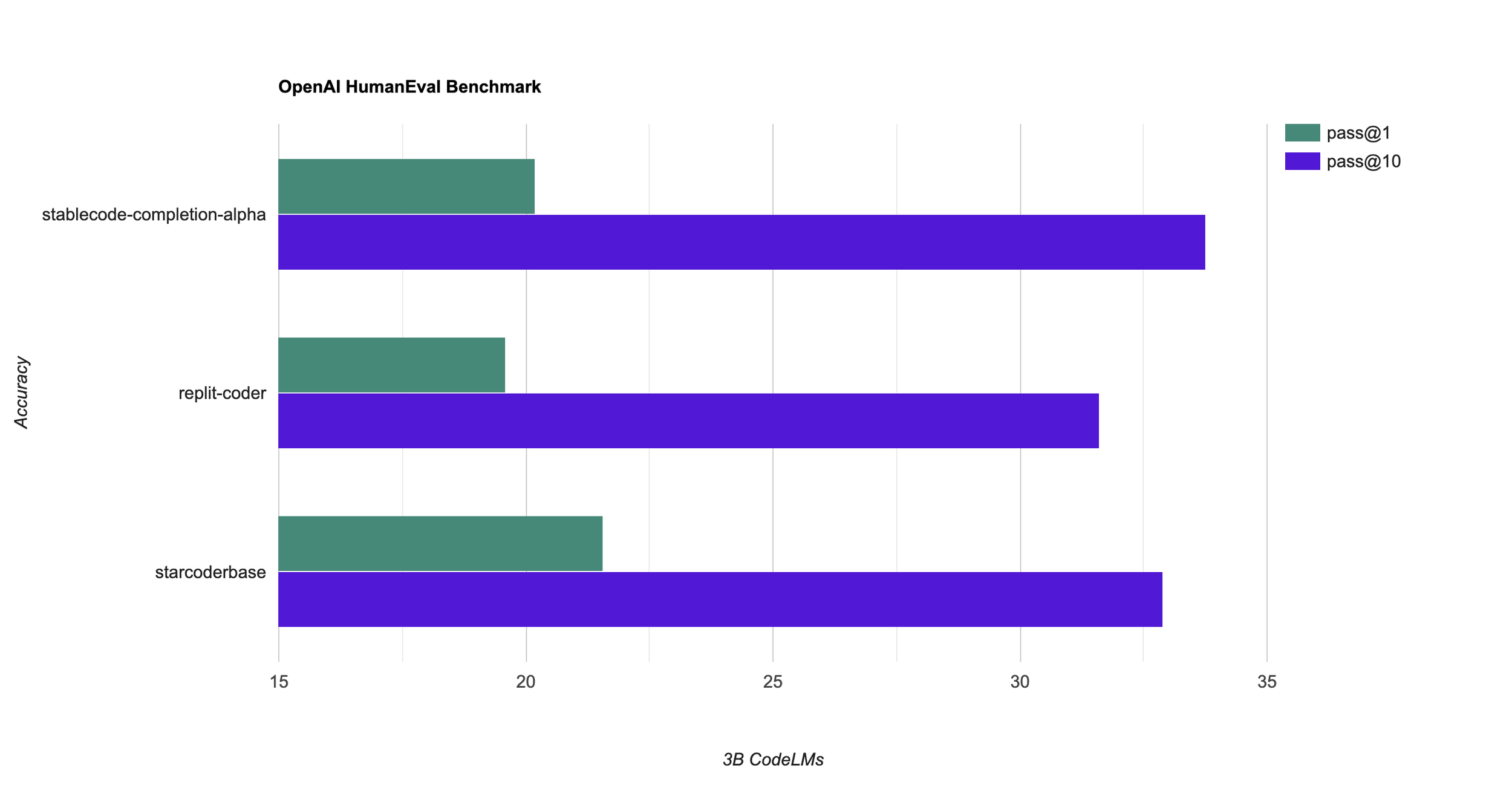

Announcing Stable Code Alpha (Stability AI)

This repository contains Stability AI's ongoing development of the StableCode series of code models and will be continuously updated with new checkpoints.

Released in January 2024 by Stability AI, Stable Code 3B is a 3 billion parameter LLM built for coding. It is the follow-up to Stable Code Alpha 3B and is designed for code completion across multiple programming languages, with several additional capabilities. It can also serve as an educational tool for novice programmers.

Stable Code 3B is aimed at software developers and engineers. It understands and generates code snippets, which makes it useful for coding tasks such as auto-completion and debugging assistance.

Stability AI Launches New StableCode AI Coding Assistant (Geeky Gadgets)

Stable Code Instruct 3B reportedly reaches 65% HumanEval pass@1 at the 3B scale, which makes it more efficient than larger models for edge computing. Fine-tuning with LoRA is said to reduce training costs by 80% while preserving 95% of base performance, which suits custom code domains.

Stable Code Instruct 3B is a 2.7 billion parameter decoder-only language model tuned from Stable Code 3B. It was trained on a mix of publicly available and synthetic datasets, using Direct Preference Optimization (DPO).
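To see why LoRA fine-tuning is cheap, it helps to count parameters. LoRA freezes a weight matrix of shape d_out x d_in and trains two low-rank factors of shapes d_out x r and r x d_in instead, so the trainable count per matrix is r * (d_in + d_out). The sketch below works through that arithmetic. The hidden size of 2560 and rank of 8 are illustrative assumptions, not figures from this article, and the calculation does not derive the 80% cost figure quoted above.

```python
def lora_trainable_params(d_out: int, d_in: int, rank: int) -> int:
    """Trainable parameters LoRA adds for one d_out x d_in weight matrix."""
    # Factor B is d_out x rank, factor A is rank x d_in.
    return rank * (d_in + d_out)


def lora_fraction(d_out: int, d_in: int, rank: int) -> float:
    """Adapter size relative to the frozen full matrix."""
    return lora_trainable_params(d_out, d_in, rank) / (d_out * d_in)


HIDDEN = 2560  # assumed hidden size, for illustration only
RANK = 8       # assumed LoRA rank, a common default

full_params = HIDDEN * HIDDEN
adapter_params = lora_trainable_params(HIDDEN, HIDDEN, RANK)
fraction = lora_fraction(HIDDEN, HIDDEN, RANK)

print(f"full matrix:  {full_params:,} parameters")
print(f"LoRA adapter: {adapter_params:,} parameters ({fraction:.3%} of full)")
```

Under these assumptions each adapted square matrix trains well under 1% of the weights it modifies, which is where the savings in optimizer state and gradient memory come from.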

Using stabilityai/stable-code-3b with Ollama

More than just impressive benchmarks, Stable Code 3B promises to give developers more efficiency and room for creativity. By automating tedious coding tasks, the model frees developers to focus on more complex challenges.
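For the Ollama route named in the heading above, a local Ollama server exposes an HTTP endpoint at `/api/generate` that accepts a JSON body with `model`, `prompt`, and `stream` fields. The sketch below builds such a request using only the standard library. The model tag `stable-code` is an assumption about how the model is named in the local Ollama library, and the default port 11434 assumes an unmodified install; `build_request` and `complete` are hypothetical helper names.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # default local endpoint
MODEL_TAG = "stable-code"  # assumed tag; verify with `ollama list`


def build_request(prompt: str, model: str = MODEL_TAG,
                  url: str = OLLAMA_URL) -> urllib.request.Request:
    """Build (but do not send) a non-streaming generate request."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def complete(prompt: str) -> str:
    """Send the request to a running Ollama server and return the text.

    Requires the server to be running and the model to be pulled already.
    """
    with urllib.request.urlopen(build_request(prompt), timeout=120) as resp:
        return json.loads(resp.read())["response"]


# Building the request has no side effects; nothing is sent here.
req = build_request("def fib(n):")
```

Calling `complete("def fib(n):")` would then return the model's continuation, provided the server is up and the model has been pulled.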
