Unicode: GCSE Computer Science Definition

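What Is Unicode?

Unicode provides a unique number, called a code point, for every character. Unicode was originally designed as a 16-bit code, and 16 bits give 2^16 = 65,536 possible values, which is where the often-quoted range of "over 65,000 characters" comes from. The current standard goes further, with code points from U+0000 to U+10FFFF, enough for over a million characters. This makes Unicode far more suitable than ASCII for storing text in many different languages.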
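The arithmetic behind these ranges is simple: an n-bit binary code can represent 2^n different values. The short Python sketch here compares 7-bit ASCII, 8-bit extended ASCII and a 16-bit code with the full Unicode code space. The helper name `code_range` is purely illustrative and not part of any library.

```python
def code_range(bits: int) -> int:
    """Return how many distinct values an n-bit binary code can represent (2**n)."""
    return 2 ** bits


# 7-bit ASCII, 8-bit extended ASCII and a 16-bit code such as early Unicode
for bits in (7, 8, 16):
    print(f"{bits:>2} bits -> {code_range(bits):,} possible characters")

# Modern Unicode code points run from U+0000 to U+10FFFF inclusive
UNICODE_CODE_POINTS = 0x10FFFF + 1
print(f"Unicode code space -> {UNICODE_CODE_POINTS:,} code points")
```

The last line reports 1,114,112 code points, which is the "over a million" figure; a 16-bit code on its own only reaches 65,536.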

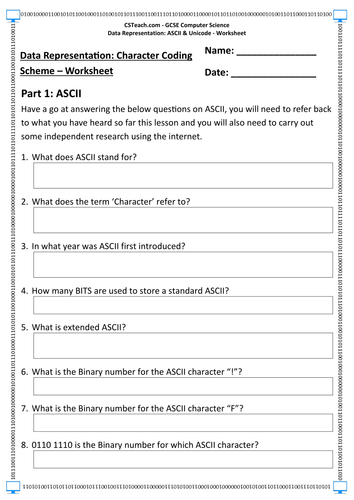

Unicode is a character set that was created because of the need to standardise character sets internationally. It aims to represent every character used in the world's writing systems, past and present. The most common encoding of Unicode is UTF-8, which uses between 8 and 32 bits (one to four bytes) to represent each character.

While ASCII is limited to 128 characters (256 in extended ASCII), Unicode uses more bits per character: up to 32 bits, depending on the character and the encoding.

For computers to communicate and exchange text efficiently, they must agree on a standard that defines which character code is used for which character. A standardised collection of characters and the bit patterns used to represent them is called a character set.

In conclusion, understanding ASCII and Unicode is important for anyone studying computer science or working with data representation. ASCII is a basic character encoding standard, whereas Unicode offers a much wider range of characters and has become the universal standard for representing text.
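To illustrate a character set as an agreed mapping between characters and numbers, here is a minimal Python sketch. It uses the built-in `ord()` function, which returns a character's Unicode code point, and `str.encode("utf-8")` to count how many bits UTF-8 needs for that character. The helper `describe` is a hypothetical name chosen for this example.

```python
from typing import Tuple


def describe(ch: str) -> Tuple[int, bool, int]:
    """Return (code point, fits in 7-bit ASCII?, bits used by UTF-8) for one character."""
    code_point = ord(ch)                     # the number Unicode assigns to ch
    in_ascii = code_point < 128              # 7-bit ASCII covers code points 0-127
    utf8_bits = len(ch.encode("utf-8")) * 8  # UTF-8 uses 1 to 4 bytes (8 to 32 bits)
    return code_point, in_ascii, utf8_bits


for ch in ("A", "é", "€", "😀"):
    code_point, in_ascii, utf8_bits = describe(ch)
    # Print only the code point so the output is plain ASCII on any console
    print(f"U+{code_point:04X}  ASCII: {in_ascii}  UTF-8: {utf8_bits} bits")

# The mapping works in both directions: chr() turns a code point back into a character
print(chr(65))
```

An ASCII character such as "A" still takes 8 bits in UTF-8, while characters beyond the ASCII range need 16, 24 or 32 bits.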

Unicode is a more extensive character encoding system that overcomes the limitations of ASCII and extended ASCII. Depending on the encoding form used (UTF-8, UTF-16 or UTF-32), each character is stored in 8, 16, 24 or 32 bits.

Unicode assigns a unique number to every character, regardless of the platform, program or language. Before Unicode, many different character encodings existed, each with its own limitations: none could cover all languages, and they often conflicted with one another, so the same code could stand for different characters on different systems.

Because its code points run from U+0000 to U+10FFFF, Unicode can represent over a million unique characters, covering most of the world's writing systems, symbols and even emojis. The larger number of bits makes Unicode more versatile, but it can also require more storage space than ASCII: in UTF-8, any character outside the ASCII range takes 16 to 32 bits instead of 8.
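The storage trade-off can be checked directly. This Python sketch encodes the same text in UTF-8, UTF-16 and UTF-32 and reports how many bits each needs, and also tests whether the text fits in ASCII at all. The function names are illustrative assumptions; the little-endian `utf-16-le` and `utf-32-le` codecs are used only so that Python does not add a byte-order mark to the count.

```python
from typing import Dict

# Unicode defines the code points; UTF-8, UTF-16 and UTF-32 are different ways
# of storing them in 8-, 16- or 32-bit code units.
ENCODINGS = ("utf-8", "utf-16-le", "utf-32-le")  # "-le" variants omit the byte-order mark


def storage_bits(text: str) -> Dict[str, int]:
    """Return the number of bits needed to store text in each encoding form."""
    return {enc: len(text.encode(enc)) * 8 for enc in ENCODINGS}


def fits_in_ascii(text: str) -> bool:
    """Return True if every character has a 7-bit ASCII code (code points 0-127)."""
    try:
        text.encode("ascii")
        return True
    except UnicodeEncodeError:
        return False


for text in ("Hello", "h\u00e9llo", "\U0001F600"):
    # ascii() escapes non-ASCII characters so the output prints on any console
    print(ascii(text), "ASCII:", fits_in_ascii(text), storage_bits(text))
```

For plain English text, UTF-8 uses the same 8 bits per character as 8-bit ASCII, while UTF-16 and UTF-32 use two and four times as much; the emoji needs 32 bits in all three forms.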



Ada Computer Science
