Understanding Synchronization in Pthreads: How Threads Access Shared Memory Safely

Learn how pthread synchronization works in multithreaded programs, why locks are cooperative (a mutex protects data only if every thread that touches the data acquires the lock first), and how threads coordinate access to shared memory without temporary buffers. This blog demystifies initializing `pthread` mutexes for inter-process use. We’ll cover the prerequisites, a step-by-step implementation, best practices, and critical pitfalls to avoid. By the end, you’ll be able to safely synchronize shared resources across processes using `pthread` mutexes.
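To make the inter-process case concrete, here is a minimal sketch of one common way to set it up. It assumes a POSIX system where the mutex can be placed in an anonymous shared mapping created with `mmap` before `fork`. The names `shared_state` and `run_shared_counter` are hypothetical and chosen only for illustration. The key step is marking the mutex attribute as `PTHREAD_PROCESS_SHARED` before calling `pthread_mutex_init`.

```c
#define _DEFAULT_SOURCE   /* exposes MAP_ANONYMOUS on glibc under strict -std modes */
#include <pthread.h>
#include <sys/mman.h>
#include <sys/types.h>
#include <sys/wait.h>
#include <unistd.h>

/* Hypothetical layout: the mutex lives in shared memory next to the data it guards. */
struct shared_state {
    pthread_mutex_t lock;
    long counter;
};

/* Parent and child each add `iterations` to a shared counter.
 * Returns the final counter value, or -1 if setup fails. */
long run_shared_counter(long iterations)
{
    struct shared_state *st = mmap(NULL, sizeof *st, PROT_READ | PROT_WRITE,
                                   MAP_SHARED | MAP_ANONYMOUS, -1, 0);
    if (st == MAP_FAILED)
        return -1;

    /* A default mutex is only valid within one process; request sharing explicitly. */
    pthread_mutexattr_t attr;
    if (pthread_mutexattr_init(&attr) != 0) {
        munmap(st, sizeof *st);
        return -1;
    }
    int rc = pthread_mutexattr_setpshared(&attr, PTHREAD_PROCESS_SHARED);
    if (rc == 0)
        rc = pthread_mutex_init(&st->lock, &attr);
    pthread_mutexattr_destroy(&attr);
    if (rc != 0) {
        munmap(st, sizeof *st);
        return -1;
    }
    st->counter = 0;

    pid_t pid = fork();
    if (pid < 0) {
        pthread_mutex_destroy(&st->lock);
        munmap(st, sizeof *st);
        return -1;
    }

    /* Both processes run this loop against the same mapping. */
    for (long i = 0; i < iterations; i++) {
        pthread_mutex_lock(&st->lock);
        st->counter++;
        pthread_mutex_unlock(&st->lock);
    }

    if (pid == 0)
        _exit(0);               /* child is done; skip the parent's cleanup */

    waitpid(pid, NULL, 0);      /* parent waits before reading the result */
    long result = st->counter;
    pthread_mutex_destroy(&st->lock);
    munmap(st, sizeof *st);
    return result;
}
```

Calling `run_shared_counter(10000)` from `main` should return 20000 when both processes complete their loops. Compile with `-pthread`. A production version would also need to decide what happens if a process dies while holding the lock, which this sketch does not handle.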

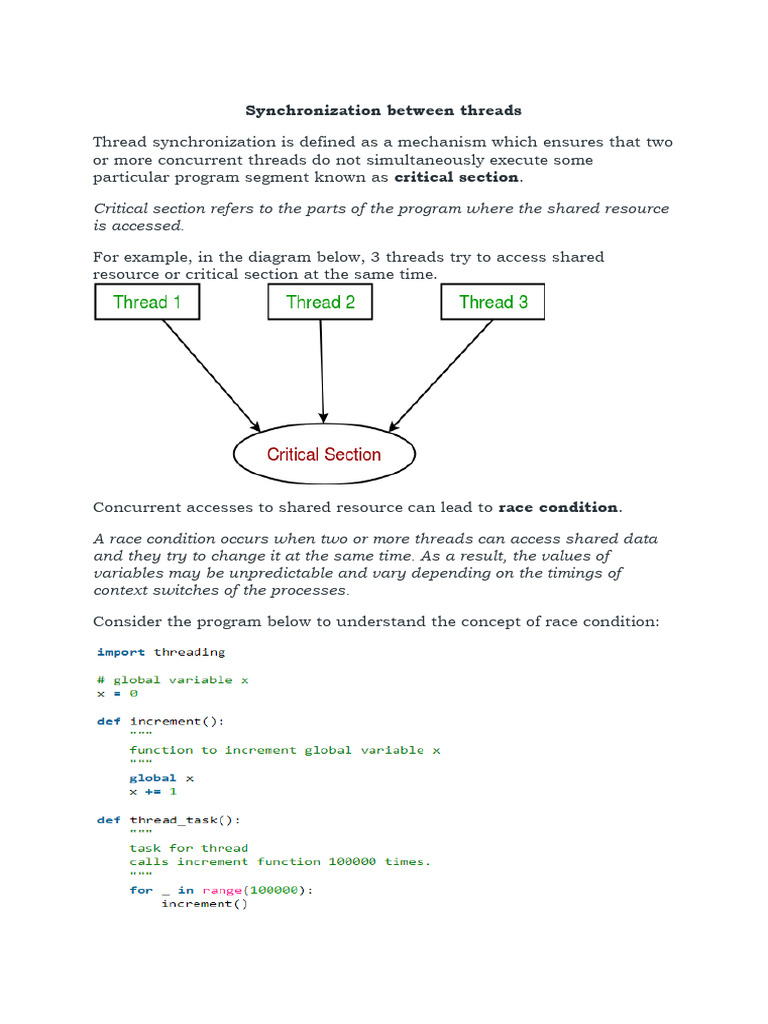

When two or more threads need access to a shared resource at the same time, they coordinate through a thread synchronization mechanism, which ensures that only one thread uses the shared resource at a time. Learn how to coordinate multiple POSIX threads safely using synchronization mechanisms such as mutexes, condition variables, semaphores, and barriers to control access to shared data and ensure correct concurrent execution.

A thread in shared-memory programming is analogous to a process in distributed-memory programming. However, a thread is often lighter weight than a full-fledged process.

Thread synchronization ensures that multiple threads or processes can safely access shared resources without conflicts. The critical section is the part of the program where a shared resource is accessed, and only one thread should execute it at a time.
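The critical-section idea can be sketched with a mutex guarding a shared counter. This is a minimal illustration rather than a definitive pattern; `shared_counter`, `counter_lock`, `worker`, and `count_with_threads` are hypothetical names introduced here.

```c
#include <pthread.h>
#include <stdlib.h>

static pthread_mutex_t counter_lock = PTHREAD_MUTEX_INITIALIZER;
static long shared_counter = 0;   /* the shared resource */

/* Each worker increments the shared counter `iterations` times. */
static void *worker(void *arg)
{
    long iterations = *(const long *)arg;
    for (long i = 0; i < iterations; i++) {
        pthread_mutex_lock(&counter_lock);
        shared_counter++;         /* critical section: one thread at a time */
        pthread_mutex_unlock(&counter_lock);
    }
    return NULL;
}

/* Starts `nthreads` workers and returns the final count, or -1 on failure. */
long count_with_threads(int nthreads, long iterations)
{
    if (nthreads < 1)
        return -1;
    pthread_t *tids = malloc((size_t)nthreads * sizeof *tids);
    if (tids == NULL)
        return -1;

    shared_counter = 0;
    int started = 0;
    while (started < nthreads &&
           pthread_create(&tids[started], NULL, worker, &iterations) == 0)
        started++;

    /* Joining every started thread makes the final read below safe. */
    for (int i = 0; i < started; i++)
        pthread_join(tids[i], NULL);
    free(tids);

    return started == nthreads ? shared_counter : -1;
}
```

With the lock in place, `count_with_threads(4, 50000)` should return 200000. The lock is cooperative: if any thread modified `shared_counter` without taking `counter_lock`, the mutex would offer no protection.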

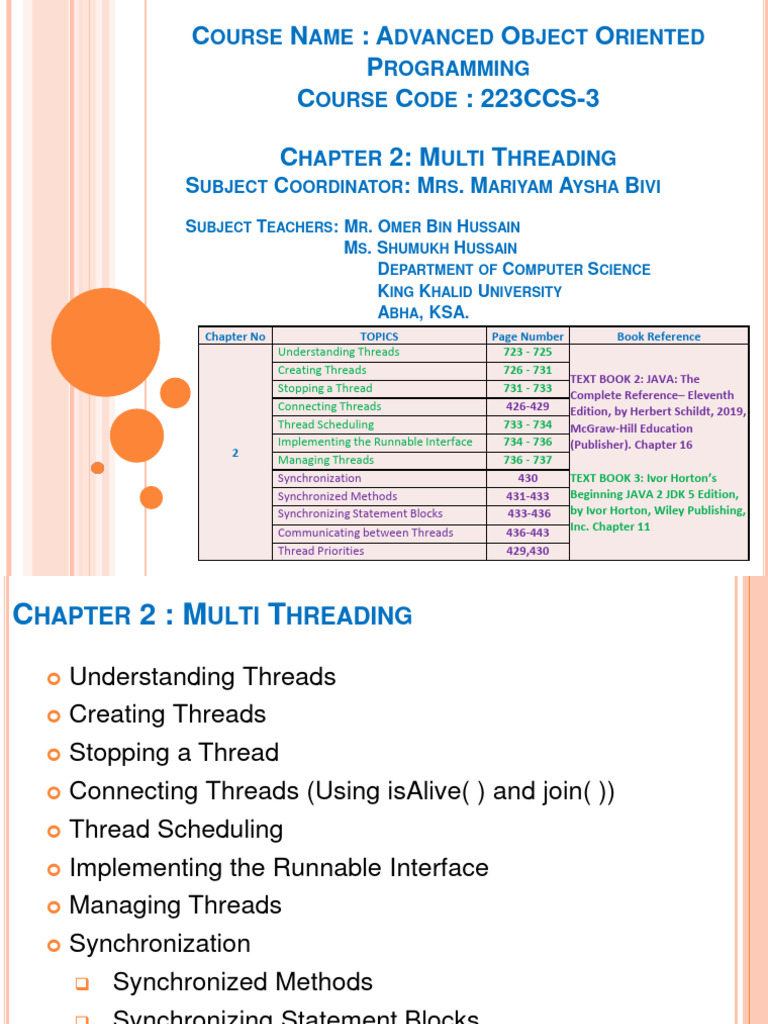

Thread synchronization is a fundamental concept in operating systems: without it, concurrent access to shared resources can cause data corruption or unpredictable behavior. A race condition occurs when non-deterministic behavior results from threads accessing shared data or resources without following a defined synchronization protocol for serializing such access.

In terms of general structure, a thread is typically a concurrent execution of a function or procedure, so your program needs to be restructured such that the parallel parts form separate procedures or functions.

The most important way in which parallel programming differs from sequential (single-threaded) programming is synchronization among the concurrently executing threads that access a common resource (in our case, shared variables).
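As a rough sketch of that structure, the example below moves the parallel part of an array sum into its own function, `sum_slice`, which each thread runs on a different slice. Each thread accumulates into a private local variable and takes the mutex only once, to add its partial result to the shared total. All names here (`slice`, `sum_slice`, `parallel_sum`, `MAX_THREADS`) are hypothetical, and the fixed thread limit is an assumption made to keep the example short.

```c
#include <pthread.h>
#include <stddef.h>

#define MAX_THREADS 16            /* arbitrary cap for this sketch */

struct slice {
    const int *data;
    size_t begin, end;            /* half-open range [begin, end) */
};

static pthread_mutex_t total_lock = PTHREAD_MUTEX_INITIALIZER;
static long total;                /* shared variable updated by all threads */

/* The parallel part, restructured as a separate function. */
static void *sum_slice(void *arg)
{
    const struct slice *s = arg;
    long partial = 0;             /* private to this thread: no lock needed */
    for (size_t i = s->begin; i < s->end; i++)
        partial += s->data[i];

    pthread_mutex_lock(&total_lock);
    total += partial;             /* the only access to shared state */
    pthread_mutex_unlock(&total_lock);
    return NULL;
}

/* Sums `n` ints using `nthreads` threads; returns -1 on failure. */
long parallel_sum(const int *data, size_t n, int nthreads)
{
    if (nthreads < 1 || nthreads > MAX_THREADS)
        return -1;

    pthread_t tids[MAX_THREADS];
    struct slice slices[MAX_THREADS];
    size_t chunk = n / (size_t)nthreads;

    total = 0;
    int started = 0;
    for (int t = 0; t < nthreads; t++) {
        slices[t].data = data;
        slices[t].begin = (size_t)t * chunk;
        /* The last thread also takes any leftover elements. */
        slices[t].end = (t == nthreads - 1) ? n : (size_t)(t + 1) * chunk;
        if (pthread_create(&tids[t], NULL, sum_slice, &slices[t]) != 0)
            break;
        started++;
    }
    for (int t = 0; t < started; t++)
        pthread_join(tids[t], NULL);

    return started == nthreads ? total : -1;
}
```

Removing the lock around `total += partial` would turn this into the race condition described above: two threads could read the same old value of `total`, and one update would be lost. Keeping the per-element work on a private variable means the threads contend for the lock only once each.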
