Understanding Sampling Methods in LLMs

Understanding LLMs: A Comprehensive Overview From Training to Inference

While simple methods like greedy decoding offer consistency, more sophisticated approaches like nucleus sampling provide a better balance between quality and creativity. Understanding these methods helps in choosing the right approach for a specific application. Learn how LLMs choose their next token using probabilities, log probabilities, greedy sampling, and random sampling, with real examples and code.
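To make the difference between greedy and random sampling concrete, here is a minimal sketch in plain Python. The four-token distribution and the names `greedy_pick` and `random_pick` are made up for illustration; they do not come from any real model or library.

```python
import math
import random

# Hypothetical next-token distribution (illustrative values, not from a real model).
probs = {"cat": 0.5, "dog": 0.3, "fish": 0.15, "bird": 0.05}

# Log probabilities carry the same information on a log scale, so that
# multiplying probabilities along a sequence becomes adding log probabilities.
log_probs = {tok: math.log(p) for tok, p in probs.items()}

def greedy_pick(probs):
    """Greedy decoding: always return the single most likely token."""
    return max(probs, key=probs.get)

def random_pick(probs, rng):
    """Random sampling: draw a token in proportion to its probability."""
    tokens = list(probs)
    weights = [probs[t] for t in tokens]
    return rng.choices(tokens, weights=weights, k=1)[0]

rng = random.Random(0)  # seeded so repeated runs draw the same sequence
greedy_token = greedy_pick(probs)
sampled_tokens = [random_pick(probs, rng) for _ in range(1000)]
```

Greedy decoding returns the same token every time for a given distribution, while random sampling mostly returns likely tokens but occasionally picks an unlikely one, which is where the extra variety comes from.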

Understanding Sampling Methods in LLMs: Temperature and Top-K Explained

This post describes some common sampling techniques available in many different LLMs, using a simple, hypothetical training set. It also shows code for doing this with a much larger training set, using Gemma. Discover how simple settings like temperature and top-k shape the creativity and precision of text generated by LLMs.

Dummy's Guide to Modern LLM Sampling is an extremely useful, detailed set of explanations by @alpindale covering the various sampling strategies used by modern LLMs.

In this chapter, our machine learning engineer, Darek Kleczek, clarifies the various sampling methods used in large language models (LLMs). Sampling techniques unveiled: dive into the core concept of sampling methods in LLMs and understand how text is generated through token probabilities.
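The temperature and top-k settings mentioned above can be sketched in a few lines of plain Python. This is an illustration under stated assumptions, not the code from any of the posts quoted here: the logits are invented, and `softmax` and `top_k_filter` are hypothetical helper names.

```python
import math

def softmax(logits, temperature=1.0):
    """Convert raw scores to probabilities, dividing by temperature first."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def top_k_filter(probs, k):
    """Keep the k most likely tokens, zero out the rest, and renormalize."""
    keep = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    total = sum(probs[i] for i in keep)
    return [probs[i] / total if i in keep else 0.0 for i in range(len(probs))]

logits = [2.0, 1.0, 0.5, -1.0]  # hypothetical scores for a 4-token vocabulary
cold = softmax(logits, temperature=0.5)     # sharper: favors the top token
neutral = softmax(logits, temperature=1.0)  # unchanged distribution
hot = softmax(logits, temperature=2.0)      # flatter: more variety
top2 = top_k_filter(neutral, k=2)           # only the 2 best tokens survive
```

Lower temperatures concentrate probability on the highest-scoring token and higher temperatures spread it out, while top-k removes the long tail entirely before sampling.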

Every LLM output is a probability distribution over the model's vocabulary, often around 50,000 tokens. The sampling strategy determines whether you get deterministic garbage or creative genius, and it is easy to get wrong. LLMs don't think in words: the final layer outputs a vector of raw scores (logits), one per token in the vocabulary, and a softmax turns those scores into probabilities.

As we'll explore throughout this blog, the strategic selection and application of sampling methodologies when fine-tuning LLMs directly impacts their performance.

In this beginner-friendly guide, we've explored four fundamental sampling methods used in large language models: beam search, top-k sampling, top-p (nucleus) sampling, and temperature.

Advanced LLM sampling methods have the potential to transform AI outputs in various domains. By selecting the right sampling technique, optimizing parameters, and combining output from multiple methods, developers can generate more diverse and coherent results.
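As a rough sketch of the path from logits to a sampled token, the following plain-Python example applies a softmax and then top-p (nucleus) filtering. The five-word vocabulary, the logits, and the helper names `top_p_filter` and `sample` are all assumptions made for illustration; real vocabularies are far larger.

```python
import math
import random

def softmax(logits):
    """Turn raw scores (logits) into probabilities that sum to 1."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def top_p_filter(probs, p):
    """Keep the smallest set of most likely tokens whose total mass reaches p."""
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    kept, cumulative = [], 0.0
    for i in order:
        kept.append(i)
        cumulative += probs[i]
        if cumulative >= p:
            break
    total = sum(probs[i] for i in kept)
    filtered = [0.0] * len(probs)
    for i in kept:
        filtered[i] = probs[i] / total  # renormalize the surviving tokens
    return filtered

def sample(probs, rng):
    """Draw one token index in proportion to its probability."""
    return rng.choices(range(len(probs)), weights=probs, k=1)[0]

vocab = ["the", "a", "cat", "zebra", "qux"]  # hypothetical toy vocabulary
logits = [3.0, 2.5, 1.0, -1.0, -3.0]         # hypothetical raw scores
probs = softmax(logits)
nucleus = top_p_filter(probs, p=0.9)
rng = random.Random(0)  # seeded for repeatable draws
draws = [vocab[sample(nucleus, rng)] for _ in range(200)]
```

Unlike top-k, the number of tokens that survive top-p depends on the shape of the distribution: a confident model keeps only a few candidates, while an uncertain one keeps many.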

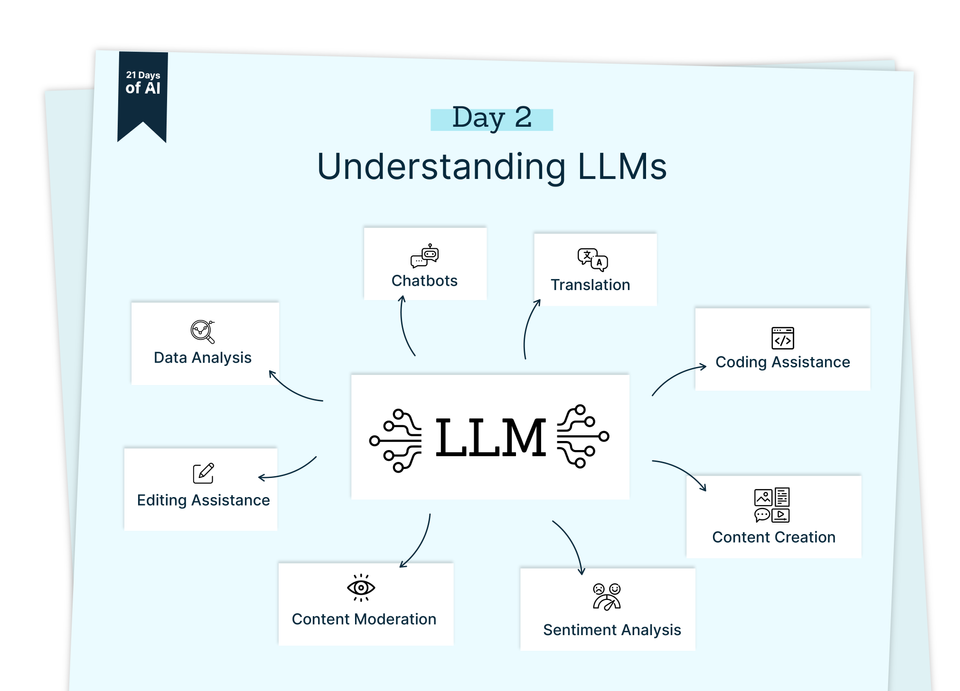


