Understanding Regularization In Statistical Learning Techniques

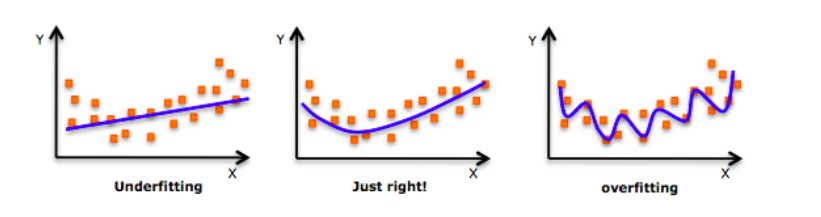

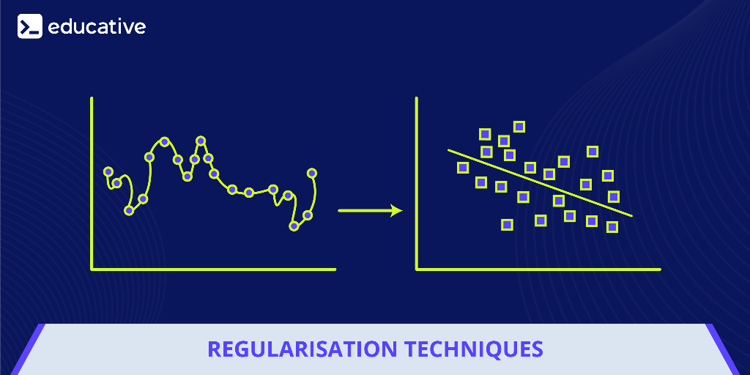

Understanding Regularization Techniques In Machine Learning

Regularization is a core technique that prevents overfitting by adding a penalty term to the model's loss function. L1 regularization (lasso) encourages sparsity in the coefficients, effectively selecting the essential features, while L2 regularization (ridge) keeps all coefficients small but generally nonzero. In this part of the book we discuss the notion of regularization (what it is, what its purpose is, and which approaches are used), all within the context of linear models.
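The contrast between the two penalties can be seen in a small sketch. It assumes the special case of an orthonormal design (X^T X = I), with the lasso objective written as (1/2)||y - Xb||^2 + lam * ||b||_1 and the ridge objective as (1/2)||y - Xb||^2 + (lam/2) * ||b||^2. Under those assumptions the lasso solution is a soft-thresholded copy of the least-squares coefficients and the ridge solution is a rescaled copy. The function names and the example coefficients are hypothetical, chosen only for illustration.

```python
def soft_threshold(beta_ols, lam):
    """L1 (lasso) solution for an orthonormal design.

    Moves the least-squares coefficient toward zero by lam and
    sets it exactly to zero when its magnitude is at most lam.
    """
    if beta_ols > lam:
        return beta_ols - lam
    if beta_ols < -lam:
        return beta_ols + lam
    return 0.0


def ridge_shrink(beta_ols, lam):
    """L2 (ridge) solution for an orthonormal design: rescale by 1 / (1 + lam)."""
    return beta_ols / (1.0 + lam)


# Hypothetical least-squares coefficients, made up for illustration.
ols_coefficients = [3.0, -0.4, 0.1, -2.0]
lam = 0.5

lasso_coefficients = [soft_threshold(b, lam) for b in ols_coefficients]
ridge_coefficients = [ridge_shrink(b, lam) for b in ols_coefficients]

print("lasso:", lasso_coefficients)
print("ridge:", ridge_coefficients)
```

In this sketch the two small coefficients are zeroed out by the L1 rule, while the L2 rule shrinks every coefficient but leaves all of them nonzero. For a general, non-orthonormal design these closed forms no longer apply and an iterative solver is needed.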

5 Regularization Techniques You Should Know

In this article, we will explore five popular regularization techniques: L1 regularization, L2 regularization, dropout, data augmentation, and early stopping. In machine learning, this paper introduces sparse regularization, low-rank regularization, and manifold regularization. In deep learning, data augmentation, dropout, early stopping, and batch normalization are seen as the common regularization strategies. Discover the power of regularization in statistical computing, from basics to advanced techniques for model optimization and improved predictive performance. Regularization is one of the techniques used to control overfitting in highly flexible models. While regularization is used with many different machine learning algorithms, including deep neural networks, in this article we use linear regression to explain regularization and its usage.
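Of the techniques listed above, early stopping is the easiest to show without any framework. The following is a minimal sketch of a patience-based stopping rule applied to a list of per-epoch validation losses. The function name, the `patience` and `min_delta` parameters, and the loss values are assumptions made for illustration; they are not taken from any particular library.

```python
def early_stopping_epoch(val_losses, patience=2, min_delta=0.0):
    """Return (stop_epoch, best_epoch) for a sequence of validation losses.

    Training is considered stopped once the loss has failed to improve
    by more than min_delta for `patience` consecutive epochs.
    """
    best_loss = float("inf")
    best_epoch = -1
    epochs_without_improvement = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss - min_delta:
            # New best validation loss: remember it and reset the counter.
            best_loss = loss
            best_epoch = epoch
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                return epoch, best_epoch
    # Ran out of epochs without triggering the stopping rule.
    return len(val_losses) - 1, best_epoch


# Hypothetical validation curve: improves at first, then starts to overfit.
val_losses = [0.90, 0.70, 0.60, 0.62, 0.65, 0.70]
stop_epoch, best_epoch = early_stopping_epoch(val_losses, patience=2)
print(stop_epoch, best_epoch)
```

In practice the model weights from `best_epoch` would be restored after stopping, so that the extra epochs spent waiting out the patience window do not degrade the final model.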

Regularization Techniques

In this blog, we will learn about the five most popular regularization techniques used in machine learning, particularly in deep neural networks with multiple layers of neurons. Potential barriers to adopting new statistical methods include low trust in new methods, limited statistical experience, an unsupportive environment that does not encourage engagement with new methods, and the perception that learning new methods is too time-consuming. This is particularly evident in the context of regularization methods. Regularization is a powerful tool in machine learning, striking a balance between simplicity and predictive power in models. By applying L1 or L2 regularization, we can create models that generalize better to new data, avoiding the pitfalls of overfitting. Regularization is a set of methods for reducing overfitting in machine learning models. Typically, regularization trades a marginal decrease in training accuracy for an increase in generalizability.
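The training-accuracy side of that trade can be illustrated with a deliberately tiny sketch: ridge regression with a single feature and no intercept, where minimizing sum((y - w*x)^2) + lam * w^2 gives the closed form w = sum(x*y) / (sum(x*x) + lam). The helper names and the data points are hypothetical and made up for illustration. The sketch only shows that the training error rises as the penalty grows; whether error on unseen data falls depends on the data and is not demonstrated here.

```python
def ridge_slope(xs, ys, lam):
    """Closed-form ridge estimate for y ~ w * x (one feature, no intercept)."""
    sxy = sum(x * y for x, y in zip(xs, ys))
    sxx = sum(x * x for x in xs)
    return sxy / (sxx + lam)


def mse(xs, ys, w):
    """Mean squared error of the fit y ~ w * x."""
    return sum((y - w * x) ** 2 for x, y in zip(xs, ys)) / len(xs)


# Hypothetical training data, made up for illustration.
train_x = [1.0, 2.0, 3.0, 4.0]
train_y = [1.2, 1.9, 3.2, 3.8]

# lam = 0.0 corresponds to ordinary least squares.
lambdas = [0.0, 1.0, 10.0]
slopes = [ridge_slope(train_x, train_y, lam) for lam in lambdas]
train_errors = [mse(train_x, train_y, w) for w in slopes]

for lam, w, err in zip(lambdas, slopes, train_errors):
    print(f"lambda={lam:5.1f}  slope={w:.3f}  train MSE={err:.4f}")
```

A larger `lam` pulls the slope toward zero and away from the least-squares fit, which is why the training error can only go up. The penalty strength is usually chosen by checking error on held-out data, for example with cross-validation.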
