Understanding LLM.int8() Quantization (Picovoice)

LLMs are highly useful, yet their runtime requirements are eye-watering. This post explains how LLM.int8() quantizes LLMs and reduces their memory requirements. Understanding the landscape of quantization methods, from simple INT8 post-training quantization to more sophisticated techniques such as GPTQ and AWQ, is essential for anyone deploying LLMs in resource-constrained environments.
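At the simple end of that spectrum, INT8 post-training quantization of a weight vector can be done with two basic schemes: absmax (symmetric) and zero-point (asymmetric). The pure-Python sketch below illustrates both under our own assumptions; the function names are hypothetical and are not taken from Picovoice or any library.

```python
# Hypothetical helpers: a minimal sketch of round-to-nearest INT8 quantization.

def absmax_quantize(xs):
    """Symmetric INT8: scale so the largest magnitude maps to 127."""
    m = max(abs(x) for x in xs)
    if m == 0:
        raise ValueError("all-zero input has no absmax scale")
    scale = 127 / m
    return [round(x * scale) for x in xs], scale


def absmax_dequantize(q, scale):
    return [v / scale for v in q]


def zeropoint_quantize(xs):
    """Asymmetric INT8: map [min, max] onto [-128, 127] with an integer offset."""
    lo, hi = min(xs), max(xs)
    if hi == lo:
        raise ValueError("constant input has no zero-point scale")
    scale = 255 / (hi - lo)
    zero_point = -round(scale * lo) - 128
    # Clamp guards against rounding pushing a value just outside the INT8 range.
    q = [max(-128, min(127, round(scale * x) + zero_point)) for x in xs]
    return q, scale, zero_point


def zeropoint_dequantize(q, scale, zero_point):
    return [(v - zero_point) / scale for v in q]


weights = [0.5, -1.2, 0.03, 2.54, -0.7]
q_abs, s_abs = absmax_quantize(weights)
q_zp, s_zp, zp = zeropoint_quantize(weights)
print(q_abs, absmax_dequantize(q_abs, s_abs))
print(q_zp, zeropoint_dequantize(q_zp, s_zp, zp))
```

Absmax keeps zero exactly at 0 but wastes part of the integer range when the data is skewed; zero-point uses the full [-128, 127] range at the cost of storing an extra offset.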

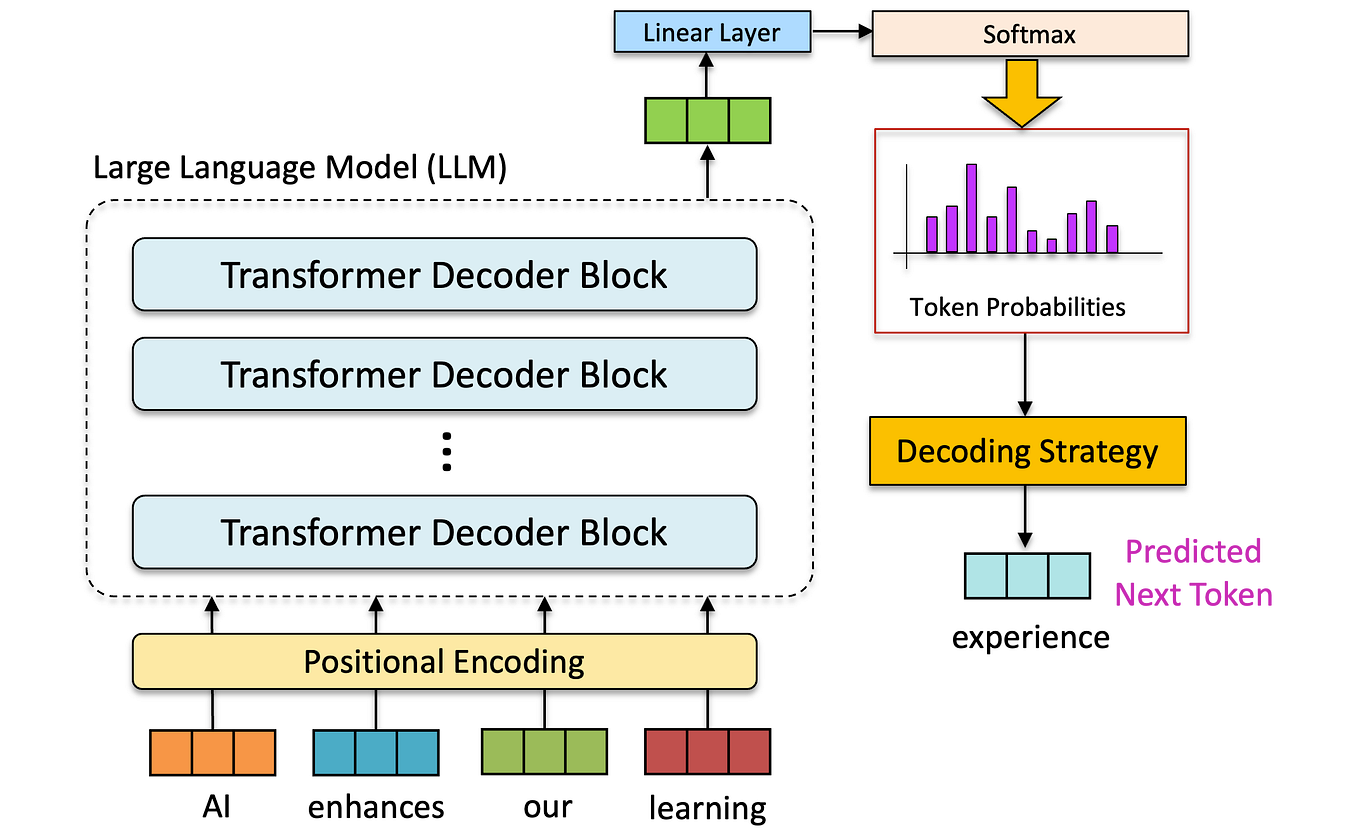

picoLLM Compression is a novel large language model (LLM) quantization algorithm developed within Picovoice. Given a task-specific cost function, picoLLM Compression automatically learns the optimal bit-allocation strategy across and within an LLM's weights.

In my previous blog, we explored different data types for representing numbers and some basic quantization techniques such as absmax and zero-point quantization. In this blog, I will introduce more nuanced techniques.

LLM.int8() targets emergent outlier features: a small number of hidden dimensions whose activations are far larger in magnitude than the rest. To cope with these features, its authors (Dettmers et al.) developed a two-part quantization procedure. First, vector-wise quantization, with separate normalization constants for each inner product in the matrix multiplication, quantizes most of the features. Second, a mixed-precision decomposition isolates the outlier feature dimensions into a 16-bit matrix multiplication, while more than 99.9% of values are still multiplied in 8-bit. Unlike naive 8-bit quantization, which can lose critical information and accuracy, LLM.int8() dynamically adapts so that the sensitive components of the computation retain higher precision when needed.
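The two-part procedure can be sketched in pure Python. This is a minimal illustration under our own assumptions, not the bitsandbytes implementation: `x` is a (tokens × hidden) activation matrix, `w` is a (hidden × output) weight matrix, a hidden dimension counts as an outlier if any activation in it reaches magnitude `threshold` (6.0, the value used in the LLM.int8() paper), and the outlier path uses ordinary Python floats in place of FP16. All function names are hypothetical.

```python
# Hypothetical helpers: a sketch of LLM.int8()-style matmul, not a real kernel.

def matmul(a, b):
    """Plain float product of list-of-lists a (n x k) and b (k x m)."""
    return [[sum(a[i][t] * b[t][j] for t in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def quantize_vector(v):
    """Absmax-quantize one vector to INT8; returns (int values, absmax constant)."""
    m = max(abs(x) for x in v) or 1.0  # avoid division by zero for all-zero vectors
    return [round(127 * x / m) for x in v], m


def int8_matmul_vectorwise(x, w):
    """One normalization constant per row of x and per column of w."""
    qx, cx = zip(*(quantize_vector(row) for row in x))
    cols = [list(col) for col in zip(*w)]
    qw, cw = zip(*(quantize_vector(col) for col in cols))
    out = []
    for i in range(len(x)):
        row = []
        for j in range(len(cols)):
            acc = sum(a * b for a, b in zip(qx[i], qw[j]))  # integer accumulation
            row.append(acc * cx[i] * cw[j] / (127 * 127))   # dequantize with both constants
        out.append(row)
    return out


def llm_int8_matmul(x, w, threshold=6.0):
    """Mixed-precision decomposition: outlier hidden dims in float, the rest in INT8."""
    k = len(w)
    outlier = [t for t in range(k) if any(abs(row[t]) >= threshold for row in x)]
    regular = [t for t in range(k) if t not in outlier]
    n, m = len(x), len(w[0])
    result = [[0.0] * m for _ in range(n)]
    parts = []
    if outlier:  # high-precision path for the few outlier columns of x / rows of w
        parts.append(matmul([[row[t] for t in outlier] for row in x],
                            [w[t] for t in outlier]))
    if regular:  # INT8 path for everything else
        parts.append(int8_matmul_vectorwise([[row[t] for t in regular] for row in x],
                                            [w[t] for t in regular]))
    for p in parts:  # sum the two partial products
        for i in range(n):
            for j in range(m):
                result[i][j] += p[i][j]
    return result


x = [[1.0, 0.5, 8.0], [-0.3, 2.0, -7.5]]  # hidden dim 2 holds outliers
w = [[0.2, -0.1], [0.4, 0.3], [-0.5, 0.6]]
print(matmul(x, w))
print(llm_int8_matmul(x, w))
```

In this example the third hidden dimension exceeds the threshold, so it bypasses quantization entirely; without the decomposition, its large values would set the absmax constants for each row and squeeze the remaining features into a few integer levels.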

Choosing a quantization scheme means trading precision against resources: formats such as FP32, INT8, NF4, and MX differ in their impact on VRAM, latency, and model accuracy. Quantization can cut model size by up to 75% while largely maintaining performance (storing 32-bit weights as 8-bit integers, for instance, needs a quarter of the memory), which is what lets capable models run on consumer hardware. Before shipping, it is worth deciding when INT8 or INT4 is appropriate, measuring how quantization affects output quality, and running a simple evaluation loop. Practical methods for navigating these precision trade-offs include GPTQ, AWQ, and SmoothQuant.
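The 75% figure follows from simple arithmetic on weight storage. The back-of-the-envelope sketch below assumes a hypothetical 7-billion-parameter model and counts weights only; it ignores activations, the KV cache, and quantization constants (block-wise formats such as NF4 and MX also store per-block scales, so their real footprint is slightly larger). The helper names are illustrative.

```python
# Hypothetical helpers: weight-only memory estimates for a given bit width.
BITS = {"FP32": 32, "FP16": 16, "INT8": 8, "INT4": 4}


def weight_memory_gb(num_params, bits):
    """Weight storage in gigabytes (10**9 bytes), ignoring scales and runtime buffers."""
    return num_params * bits / 8 / 1e9


def reduction_vs(baseline_bits, bits):
    """Fractional memory saving relative to a baseline format."""
    return 1 - bits / baseline_bits


for name, bits in BITS.items():
    print(f"{name}: {weight_memory_gb(7e9, bits):.1f} GB")
# FP32: 28.0 GB, FP16: 14.0 GB, INT8: 7.0 GB, INT4: 3.5 GB

print(reduction_vs(32, 8), reduction_vs(16, 4))  # 0.75 0.75
```

Both FP32 to INT8 and FP16 to INT4 give the same 75% saving, which is why the figure appears as an upper bound for common deployment paths.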


