Understanding Graph Attention Networks

GitHub, Nicoboou, Graph Attention Networks. To gain an intuitive understanding of how GATs operate, I will present a step-by-step progression of the computation, accompanied by a simple numeric example. Additionally, I will assess the ... Graph attention networks (GATs) have emerged as a powerful and versatile framework in this direction, inspiring numerous extensions and applications in several areas. In this review, we ...

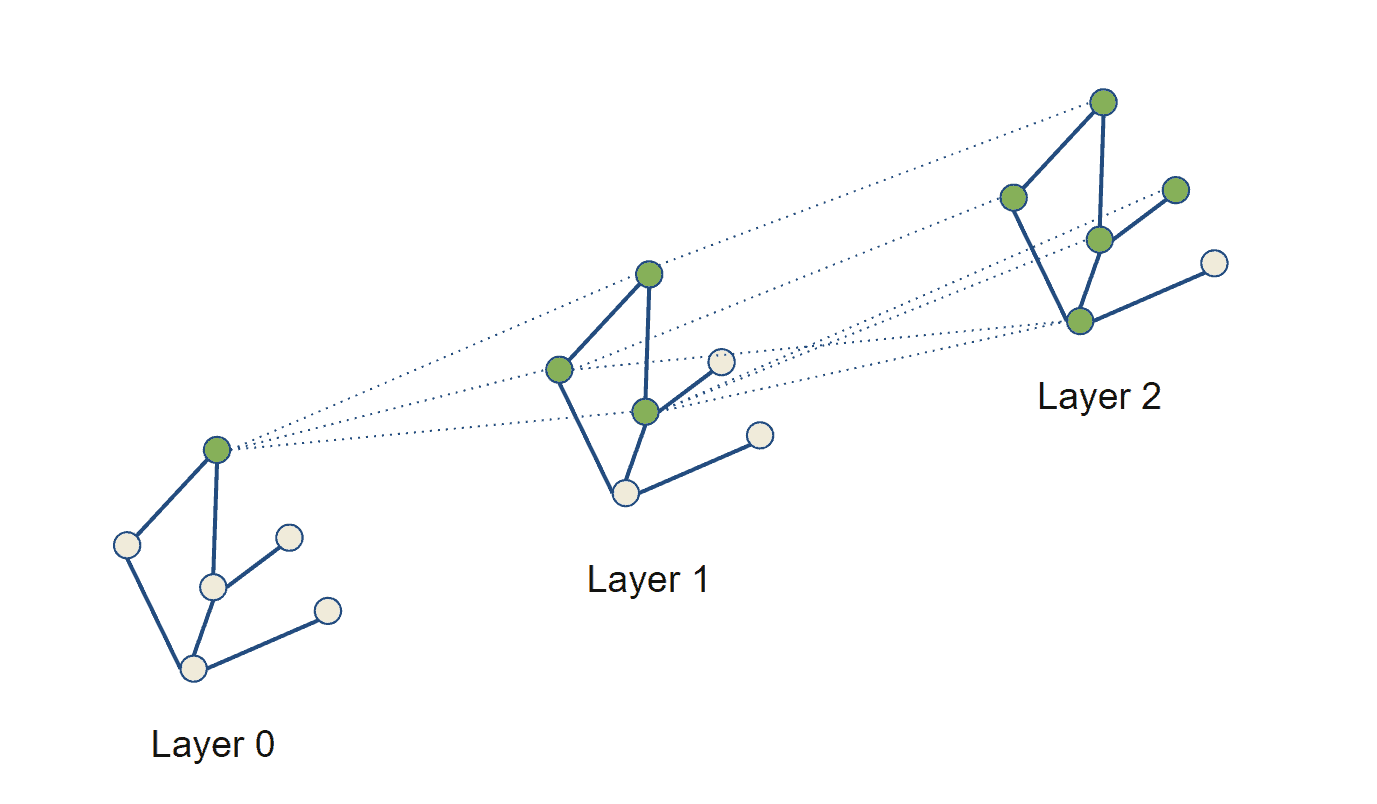

Understanding Attention in Graph Neural Networks, DeepAI. Abstract: ... identify factors influencing its effectiveness. Motivated by insights from the work on graph isomorphism networks (Xu et al., 2019), we design simple graph reasoning tasks that allow us ... A detailed look at the GAT architecture, which uses self-attention mechanisms to assign different weights to different neighbors. Graph attention networks (GATs) are neural networks designed to work with graph-structured data. We encounter such data in a variety of real-world applications, such as social networks, biological networks, and recommendation systems. GAT (graph attention network) is a novel neural network architecture that operates on graph-structured data, leveraging masked self-attentional layers to address the shortcomings of prior methods based on graph convolutions or their approximations.
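
To make "assigning different weights to different neighbors" concrete, here is a minimal sketch of a single-head attention layer in plain Python. It assumes the usual GAT formulation: a shared linear transform `W`, a score `LeakyReLU(a · [W h_i || W h_j])` with slope 0.2, and a softmax restricted to each node's neighborhood plus the node itself (the "masked" part). The graph, the features, and the values of `W` and `a` are made up for illustration, and the output nonlinearity is omitted for brevity.

```python
import math

def leaky_relu(x, slope=0.2):
    return x if x > 0 else slope * x

def matvec(W, h):
    """Multiply matrix W (list of rows) by vector h."""
    return [sum(w * x for w, x in zip(row, h)) for row in W]

def gat_layer(features, neighbors, W, a):
    """Single-head attention layer; returns (new_features, attention)."""
    z = [matvec(W, h) for h in features]  # shared linear transform
    new_features, attention = [], []
    for i, nbrs in enumerate(neighbors):
        # Masked attention: score only node i itself and its neighbors.
        scope = [i] + [j for j in nbrs if j != i]
        # z[i] + z[j] is list concatenation, i.e. [W h_i || W h_j].
        scores = [leaky_relu(sum(w * x for w, x in zip(a, z[i] + z[j])))
                  for j in scope]
        # Numerically stable softmax over the neighborhood.
        top = max(scores)
        exps = [math.exp(s - top) for s in scores]
        total = sum(exps)
        alpha = {j: e / total for j, e in zip(scope, exps)}
        # Attention-weighted sum of the transformed neighbor features.
        out = [sum(alpha[j] * z[j][k] for j in scope)
               for k in range(len(z[i]))]
        new_features.append(out)
        attention.append(alpha)
    return new_features, attention

# Illustrative (made-up) inputs: 3 nodes, node 0 linked to nodes 1 and 2.
features = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
neighbors = [[1, 2], [0], [0]]
W = [[1.0, 0.5], [-0.5, 1.0]]   # hypothetical weight matrix
a = [0.3, -0.2, 0.5, 0.1]       # hypothetical attention vector

h_new, alpha = gat_layer(features, neighbors, W, a)
for i, row in enumerate(alpha):
    print(i, {j: round(p, 3) for j, p in row.items()})
```

Each row of `alpha` sums to 1 and contains entries only for the node and its neighbors. In a trained model `W` and `a` are learned, and several such heads are typically run in parallel and combined.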

Graph Attention Networks, Baeldung on Computer Science. A detailed overview of graph attention networks (GATs), including their structure, benefits, trade-offs, and practical use cases in enhancing node feature aggregation. Get started with graph attention networks with this beginner-friendly guide that covers the basics and beyond. We explore and introduce the challenge of overwhelming propagation in deep GATs. The novel deep GAT model LSGAT is introduced to mitigate the oversmoothing problem. Extensive experiments demonstrate the superiority of our proposed model. This article provides a brief overview of the graph attention network architecture, complete with code examples in PyTorch Geometric and interactive visualizations using W&B.
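
The oversmoothing problem mentioned above can be illustrated with a toy sketch. It is not LSGAT and not a real GAT: it assumes uniform attention weights (plain averaging over each node and its neighbors) as a stand-in for learned attention, on a made-up 4-node path graph, and tracks how the gap between the largest and smallest node value shrinks as layers are stacked.

```python
def mean_aggregate(values, neighbors):
    """One propagation step: each node averages itself and its neighbors."""
    out = []
    for i, nbrs in enumerate(neighbors):
        scope = [i] + [j for j in nbrs if j != i]
        out.append(sum(values[j] for j in scope) / len(scope))
    return out

def spread(values):
    """Gap between the largest and smallest node value."""
    return max(values) - min(values)

# Hypothetical 4-node path graph 0-1-2-3 with scalar node features.
path_neighbors = [[1], [0, 2], [1, 3], [2]]
initial = [1.0, 2.0, 3.0, 10.0]

values = initial
spreads = [spread(values)]
for _ in range(50):  # 50 stacked propagation steps
    values = mean_aggregate(values, path_neighbors)
    spreads.append(spread(values))

print(spreads[0], spreads[1], spreads[-1])
```

The spread falls at every step and is close to zero after 50 steps: the node representations become nearly indistinguishable, which is the behavior deep GAT variants aim to counteract.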

