Understanding Byte Pair Encoding (BPE): A Key Tokenization Algorithm In

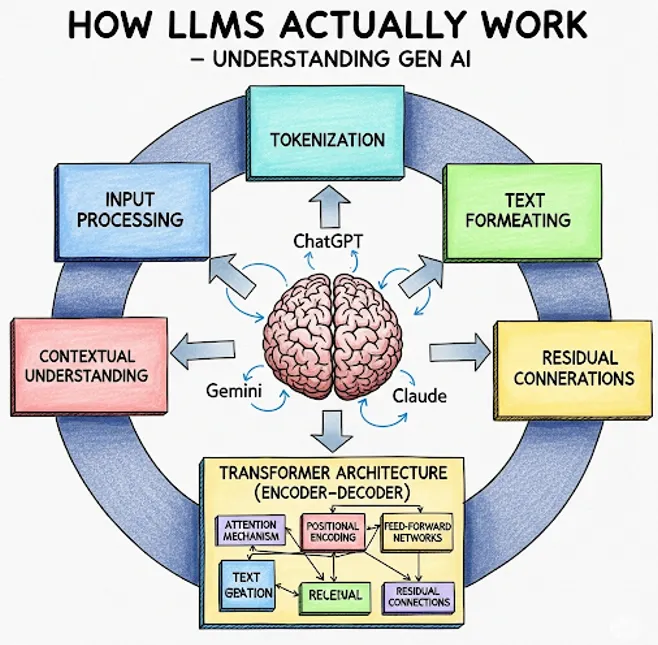

Byte pair encoding (BPE) is a text tokenization technique in natural language processing. It breaks words down into smaller units called subwords. It works by repeatedly finding the most frequent pair of adjacent symbols (initially single characters) in the training text and merging that pair into a new subword, until the vocabulary reaches a desired size.

BPE is one of the most widely used tokenization strategies in modern NLP. Originally introduced as a data compression algorithm by Phil Gage in 1994, BPE was later adapted for subword tokenization in NLP.
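The merge loop described above can be sketched in a few lines. This is a minimal sketch, assuming the corpus has already been split into words with counts; it stops after a fixed number of merges as a stand-in for a target vocabulary size (each merge typically adds one new symbol), and it omits the end-of-word marker that many implementations append. The function names `count_pairs`, `merge_pair`, and `learn_bpe` are hypothetical.

```python
from collections import Counter

Word = tuple[str, ...]  # a word as a sequence of its current symbols


def count_pairs(vocab: dict[Word, int]) -> Counter:
    """Count adjacent symbol pairs, weighted by how often each word occurs."""
    pairs: Counter = Counter()
    for symbols, freq in vocab.items():
        for a, b in zip(symbols, symbols[1:]):
            pairs[(a, b)] += freq
    return pairs


def merge_pair(pair: tuple[str, str], vocab: dict[Word, int]) -> dict[Word, int]:
    """Replace every non-overlapping occurrence of `pair` with one merged symbol."""
    new_vocab: dict[Word, int] = {}
    for symbols, freq in vocab.items():
        out: list[str] = []
        i = 0
        while i < len(symbols):
            if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
                out.append(symbols[i] + symbols[i + 1])
                i += 2
            else:
                out.append(symbols[i])
                i += 1
        key = tuple(out)
        new_vocab[key] = new_vocab.get(key, 0) + freq
    return new_vocab


def learn_bpe(word_counts: dict[str, int], num_merges: int):
    """Learn up to `num_merges` merge rules, most frequent pair first."""
    vocab = {tuple(word): freq for word, freq in word_counts.items()}
    merges: list[tuple[str, str]] = []
    for _ in range(num_merges):
        pairs = count_pairs(vocab)
        if not pairs:
            break  # every word is already a single symbol
        best = max(pairs, key=pairs.get)  # ties go to the pair counted first
        vocab = merge_pair(best, vocab)
        merges.append(best)
    return merges, vocab


merges, vocab = learn_bpe({"low": 5, "lower": 2, "newest": 6, "widest": 3}, 5)
print(merges)  # [('e', 's'), ('es', 't'), ('l', 'o'), ('lo', 'w'), ('n', 'e')]
```

The ordered list of merges is the real output of training: it is what a tokenizer replays later to split new text.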

BPE was initially developed as an algorithm to compress texts and was later used by OpenAI for tokenization when pretraining the GPT model. It is used by many Transformer models, including GPT, GPT-2, RoBERTa, BART, and DeBERTa.

In modern NLP, subword tokenization algorithms like BPE dominate because they balance the semantic integrity of tokens against efficient use of the vocabulary. In this guide, we'll demystify byte pair encoding, explore its origins, applications, and impact on modern AI, examine the algorithm in detail, and show how to work with tokenizers from the Hugging Face library and leverage BPE in your own data science projects.

Let's start with what subword-based tokenizers are, and then look at the BPE algorithm used by state-of-the-art NLP models.
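BPE's origin as a compression algorithm is easy to see in code. The following is a simplified sketch of the byte-replacement idea, assuming the whole input fits in memory and using the lowest byte value absent from the current data as the placeholder; it is not Gage's original C implementation, and the names `replace_pair`, `bpe_compress`, and `bpe_decompress` are hypothetical.

```python
from collections import Counter


def replace_pair(data: bytes, pair: tuple[int, int], new: int) -> bytes:
    """Replace non-overlapping occurrences of `pair`, scanning left to right."""
    out = bytearray()
    i = 0
    while i < len(data):
        if i + 1 < len(data) and (data[i], data[i + 1]) == pair:
            out.append(new)
            i += 2
        else:
            out.append(data[i])
            i += 1
    return bytes(out)


def bpe_compress(data: bytes):
    """Replace the most frequent adjacent byte pair with an unused byte until no pair repeats."""
    table = []  # (placeholder, original pair), in the order substitutions were made
    while True:
        pairs = Counter(zip(data, data[1:]))
        if not pairs:
            break
        pair, count = pairs.most_common(1)[0]
        unused = set(range(256)) - set(data)
        if count < 2 or not unused:
            break  # no pair repeats, or no free byte value is left as a placeholder
        placeholder = min(unused)
        data = replace_pair(data, pair, placeholder)
        table.append((placeholder, pair))
    return data, table


def bpe_decompress(data: bytes, table) -> bytes:
    """Undo the substitutions in reverse order to recover the original bytes."""
    for placeholder, (a, b) in reversed(table):
        data = data.replace(bytes([placeholder]), bytes([a, b]))
    return data


packed, table = bpe_compress(b"aaabdaaabac")
print(len(packed), bpe_decompress(packed, table))  # 5 b'aaabdaaabac'
```

Decompression works because each placeholder was absent from the data when it was chosen, so expanding the substitutions last-to-first restores every intermediate state exactly. The sketch ignores the cost of storing the substitution table.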

BPE is one of the most popular subword tokenization techniques in natural language processing (NLP), and it plays a central role in the efficiency of large language models (LLMs) such as GPT-2 and RoBERTa. (BERT, by contrast, uses the related WordPiece algorithm.)

The original BPE algorithm worked by iteratively replacing the most frequent pair of adjacent bytes in a text with a byte value that did not occur in that text, acting as a placeholder.

BPE, the subword tokenization algorithm behind GPT and many modern LLMs, builds its vocabulary through iterative merge operations. Those merges also determine how it handles unknown words (an unseen word is split into known subwords rather than mapped to a single unknown token) and how long the resulting token sequences are.

BPE is the algorithm that decides, for example, that "running" should become two tokens ["run", "ning"] while "the" stays as one; the exact split depends on the merges learned from the training corpus. It is remarkably simple: start with individual characters, repeatedly merge the most frequent adjacent pair, and stop when you reach the target vocabulary size. Let's build one from scratch.
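Once merges have been learned, a word is tokenized by starting from its characters and replaying the merges in the order they were learned. The sketch below assumes a small hypothetical merge list, chosen by hand so that it reproduces the "running" and "the" example; a real list would come from training on a corpus. The function name `apply_merges` and the `MERGES` list are hypothetical.

```python
def apply_merges(word: str, merges: list[tuple[str, str]]) -> list[str]:
    """Tokenize one word by applying merges in the order they were learned."""
    symbols = list(word)  # start from individual characters
    for a, b in merges:
        merged: list[str] = []
        i = 0
        while i < len(symbols):
            if i + 1 < len(symbols) and symbols[i] == a and symbols[i + 1] == b:
                merged.append(a + b)
                i += 2
            else:
                merged.append(symbols[i])
                i += 1
        symbols = merged
    return symbols


# Hypothetical merge list, ordered from first-learned to last-learned.
MERGES = [("t", "h"), ("th", "e"), ("r", "u"), ("ru", "n"),
          ("n", "i"), ("ni", "n"), ("nin", "g")]

print(apply_merges("running", MERGES))  # ['run', 'ning']
print(apply_merges("the", MERGES))      # ['the']
print(apply_merges("thing", MERGES))    # ['th', 'i', 'n', 'g']
```

The unseen word "thing" still tokenizes, into pieces the vocabulary already contains, because every character is part of the base vocabulary. Byte-level variants such as GPT-2's tokenizer start from bytes instead of characters, so no input symbol is ever out of vocabulary.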
