Understanding Big O Notation Dev Community

Demystifying Big O Notation in Software Engineering (Norbix Dev)

In this blog, we'll break down the core ideas behind Big O notation so that readers can clearly understand what it is and why it matters, enabling them to analyze and optimize algorithms effectively. Learn how to analyze algorithm efficiency using Big O notation, and understand time and space complexity through practical C examples that will help you write better, faster code.
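
As a minimal sketch of what "analyze and optimize" can look like in practice, here are two hypothetical helpers (`has_duplicates_quadratic` and `has_duplicates_linear`, names chosen for illustration only) that answer the same question with different time and space costs. The sketch is written in Python rather than C, and the O(n) bound for the set-based version assumes hashable items and average-case hash lookups.

```python
def has_duplicates_quadratic(items):
    """Compare every pair: O(n^2) time, O(1) extra space."""
    n = len(items)
    for i in range(n):
        for j in range(i + 1, n):  # each pair is checked exactly once
            if items[i] == items[j]:
                return True
    return False


def has_duplicates_linear(items):
    """Track seen values in a set: O(n) average time, O(n) extra space.

    Assumes the items are hashable; set membership is O(1) on average.
    """
    seen = set()
    for item in items:
        if item in seen:
            return True
        seen.add(item)
    return False


if __name__ == "__main__":
    data = [3, 1, 4, 1, 5]
    print(has_duplicates_quadratic(data))  # True
    print(has_duplicates_linear(data))     # True
```

The second version trades extra memory for speed: it avoids the nested loop by remembering what it has already seen. That time/space trade-off is exactly what Big O analysis makes visible.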

Understanding the Importance of Big O Notation in Coding Interviews

Big O notation is a mathematical shorthand used to describe how an algorithm's resource usage (time or memory) grows as the input size increases. It focuses on growth behavior, not exact execution time. This article will demystify Big O, explaining what it is, why it's crucial, and how you can apply it in your daily work with practical C# examples. Understand Big O notation and time complexity through real-world examples, visual guides, and code walkthroughs, and learn how algorithm efficiency affects performance and how to write scalable code that stands up under pressure.
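
The idea of "growth behavior, not exact execution time" has a standard formal definition. It is shown below as a short worked derivation; the constant names $c$ and $n_0$ follow common textbook convention and are not taken from the original article. Informally, $f(n)$ is $O(g(n))$ when $f$ is eventually bounded above by a constant multiple of $g$.

$$
f(n) = O\bigl(g(n)\bigr) \quad \text{if there exist constants } c > 0 \text{ and } n_0 \ge 1 \text{ such that } 0 \le f(n) \le c\, g(n) \text{ for all } n \ge n_0.
$$

For example, for all $n \ge 1$ we have $5n \le 5n^2$ and $2 \le 2n^2$, so

$$
3n^2 + 5n + 2 \le 3n^2 + 5n^2 + 2n^2 = 10n^2 \quad (n \ge 1),
$$

which shows $3n^2 + 5n + 2 = O(n^2)$ with $c = 10$ and $n_0 = 1$. Constant factors and lower-order terms drop out, which is why Big O describes growth rather than exact running time.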

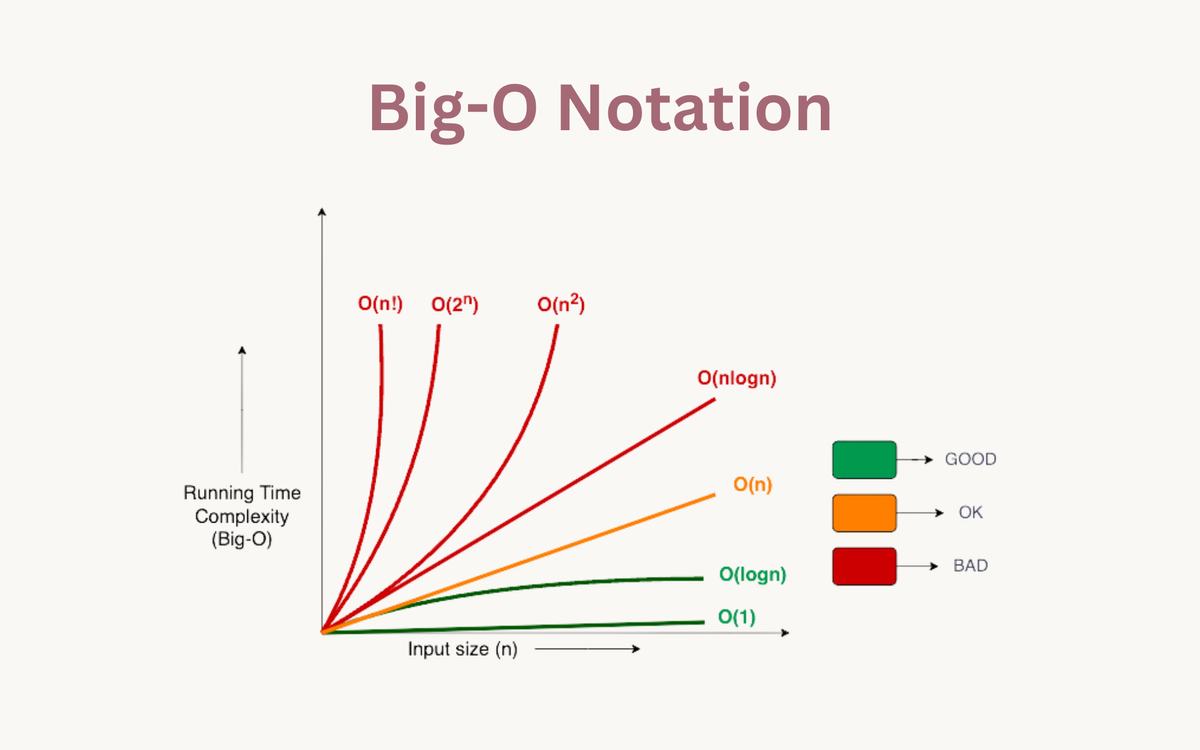

Understanding Big O Notation for Beginners

Big O notation is like the metric system for algorithms: it gives us a common way to measure how efficiently they scale. Whether you're refreshing your knowledge or learning for the first time, this guide breaks down complexity classes from O(1) (constant time) to O(n!) (factorial time) with real-world comparisons. This beginner's guide to algorithm efficiency demystifies one of computer science's fundamental concepts, breaking Big O notation into digestible chunks and explaining why it matters for programmers and how it affects real-world applications. It uses clear JavaScript examples to show how to analyze time and space complexity, compare algorithms, and improve code performance.

Big O is a mathematical way to describe how the performance of an algorithm changes as the size of the input grows. It doesn't tell you the exact time your code will take; instead, it gives you a high-level growth trend (formally, an upper bound): how fast the number of operations increases relative to the input size.
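
To make "how fast the number of operations increases" concrete, here is a minimal Python sketch (Python rather than the article's JavaScript). It tabulates a few representative growth functions from O(1) to O(n!) and counts the comparisons made by a linear search versus a binary search on sorted input. Counting comparisons instead of timing the code mirrors the point that Big O describes growth, not wall-clock time. The names `GROWTH`, `linear_search_steps`, and `binary_search_steps` are hypothetical and chosen for illustration.

```python
import math

# Representative growth functions for common complexity classes.
GROWTH = {
    "O(1)": lambda n: 1,
    "O(log n)": lambda n: math.log2(n),
    "O(n)": lambda n: n,
    "O(n log n)": lambda n: n * math.log2(n),
    "O(n^2)": lambda n: n ** 2,
    "O(2^n)": lambda n: 2 ** n,
    "O(n!)": lambda n: math.factorial(n),
}


def linear_search_steps(items, target):
    """Return how many comparisons a linear search makes: O(n) worst case."""
    steps = 0
    for item in items:
        steps += 1
        if item == target:
            break
    return steps


def binary_search_steps(sorted_items, target):
    """Return how many comparisons a binary search makes: O(log n) worst case.

    Assumes sorted_items is sorted in ascending order.
    """
    lo, hi = 0, len(sorted_items) - 1
    steps = 0
    while lo <= hi:
        steps += 1
        mid = (lo + hi) // 2
        if sorted_items[mid] == target:
            break
        if sorted_items[mid] < target:
            lo = mid + 1  # target can only be in the right half
        else:
            hi = mid - 1  # target can only be in the left half
    return steps


if __name__ == "__main__":
    # How each class grows as n doubles.
    for n in (4, 8, 16):
        row = ", ".join(f"{name}={growth(n):,.0f}" for name, growth in GROWTH.items())
        print(f"n={n}: {row}")

    # Worst case for linear search: the target is the last element.
    for n in (1_000, 1_000_000):
        data = list(range(n))
        target = n - 1
        print(
            f"n={n:,}: linear={linear_search_steps(data, target)} steps, "
            f"binary={binary_search_steps(data, target)} steps"
        )
```

Growing the input from a thousand to a million elements multiplies the linear-search count by a thousand, while the binary-search count only roughly doubles, because each binary-search step halves the remaining range.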
