Tutorial A Practical Deep Dive Into Memory Optimization For Agentic

Research Memory Optimization Lab

Learn how to optimize memory for AI agents: the difference between memory, knowledge, and tools, and why context management is crucial for production agent systems. Part 1 covered the fundamentals of agentic systems: their building blocks, how to build them, and how AI agents can act autonomously to perform structured tasks.

A curated collection of systems, benchmarks, papers, etc. on memory mechanisms for large language models (LLMs) and multimodal large language models (MLLMs), exploring how different approaches enable long-term context, retrieval, and efficient reasoning. In this article, you will learn how to design, implement, and evaluate memory systems that make agentic AI applications more reliable, personalized, and effective over time. An in-depth guide to building production-grade agentic memory for LLMs, covering architecture, real-time pipelines, and optimization strategies. By integrating long-term memory for context retention and the Model Context Protocol (MCP) for connecting to external data, you will walk away with the skills to build robust, intelligent.
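As a rough illustration of long-term memory for context retention, here is a minimal sketch in plain Python. The `AgentMemory` class, its method names, and the word-overlap scoring are all hypothetical choices made for this example; they are not taken from LangChain, MCP, or any other framework named here, and a production system would more likely use embeddings and a vector store for retrieval.

```python
from collections import deque


class AgentMemory:
    """Hypothetical two-tier memory: a bounded short-term buffer plus a long-term store."""

    def __init__(self, short_term_size=4):
        # Short-term memory: only the most recent messages stay in the prompt.
        self.short_term = deque(maxlen=short_term_size)
        # Long-term memory: messages that have aged out of the short-term buffer.
        self.long_term = []

    def add(self, message):
        # If the buffer is full, archive the oldest message before it is evicted.
        if len(self.short_term) == self.short_term.maxlen:
            self.long_term.append(self.short_term[0])
        self.short_term.append(message)

    def recall(self, query, k=2):
        # Assumption: naive word-overlap scoring stands in for semantic retrieval.
        query_words = set(query.lower().split())
        scored = []
        for index, message in enumerate(self.long_term):
            overlap = len(query_words & set(message.lower().split()))
            if overlap > 0:
                scored.append((overlap, index, message))
        # Highest overlap first; older messages win ties.
        scored.sort(key=lambda item: (-item[0], item[1]))
        return [message for _, _, message in scored[:k]]

    def build_context(self, query, k=2):
        # Context sent to the model: recalled long-term items, then recent turns.
        return self.recall(query, k) + list(self.short_term)


memory = AgentMemory(short_term_size=2)
memory.add("user likes green tea")
memory.add("user lives in Oslo")
memory.add("what is the weather")
print(memory.build_context("which tea does the user like"))
```

The design choice to archive on eviction keeps the prompt size bounded while still letting older facts resurface when a query touches them.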

Memory Optimization Techniques In Deep Learning Frameworks Peerdh

This article explores 9 powerful techniques to optimize memory in AI agents, helping developers and researchers design smarter, more scalable AI systems. Memory optimization for LLMs reduces peak and steady-state memory by combining quantization (weights and KV cache), efficient attention (paged KV cache), activation or gradient checkpointing, sharding and offloading, and context compression. A practical tutorial on implementing long-term and short-term memory systems in AI agents using the LangChain, Pydantic AI, and Agno frameworks for production applications. Memory management in agentic AI is crucial for context retention, multi-turn reasoning, and long-term learning. In this article, we'll explore why memory is vital, what types exist, and how you can implement memory strategies using popular frameworks like LangChain, LlamaIndex, and CrewAI.
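To make the effect of KV cache quantization concrete, the following back-of-the-envelope sketch estimates KV cache size for a standard transformer decoder, where each layer stores one key and one value vector per token per KV head. The function name and the model dimensions are hypothetical and chosen only to keep the arithmetic simple; they do not describe any particular model, and real runtimes add overhead (paging, quantization scales) that this ignores.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len, batch_size, bytes_per_element):
    """Estimate KV cache size: keys and values for every layer, head, and token."""
    keys_and_values = 2  # one key tensor and one value tensor per layer
    return (
        keys_and_values
        * num_layers
        * num_kv_heads
        * head_dim
        * seq_len
        * batch_size
        * bytes_per_element
    )


# Hypothetical model dimensions, for illustration only.
config = dict(num_layers=32, num_kv_heads=32, head_dim=128, seq_len=4096, batch_size=1)

fp16_bytes = kv_cache_bytes(**config, bytes_per_element=2)  # 16-bit cache
int8_bytes = kv_cache_bytes(**config, bytes_per_element=1)  # 8-bit quantized cache

gib = 1024 ** 3
print(f"fp16 KV cache: {fp16_bytes / gib:.2f} GiB")
print(f"int8 KV cache: {int8_bytes / gib:.2f} GiB")
```

Because the estimate is linear in every factor, halving the bytes per element halves the cache, and the same holds for shortening the sequence through context compression.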

Deep Dive Into Agentic AI

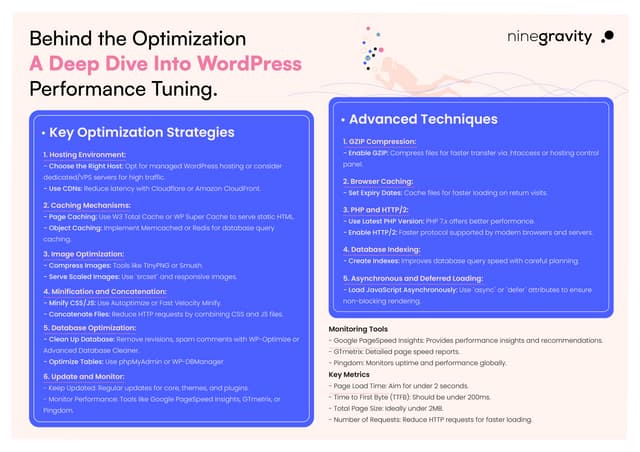
