Tutorial 01: NLP Tokenization

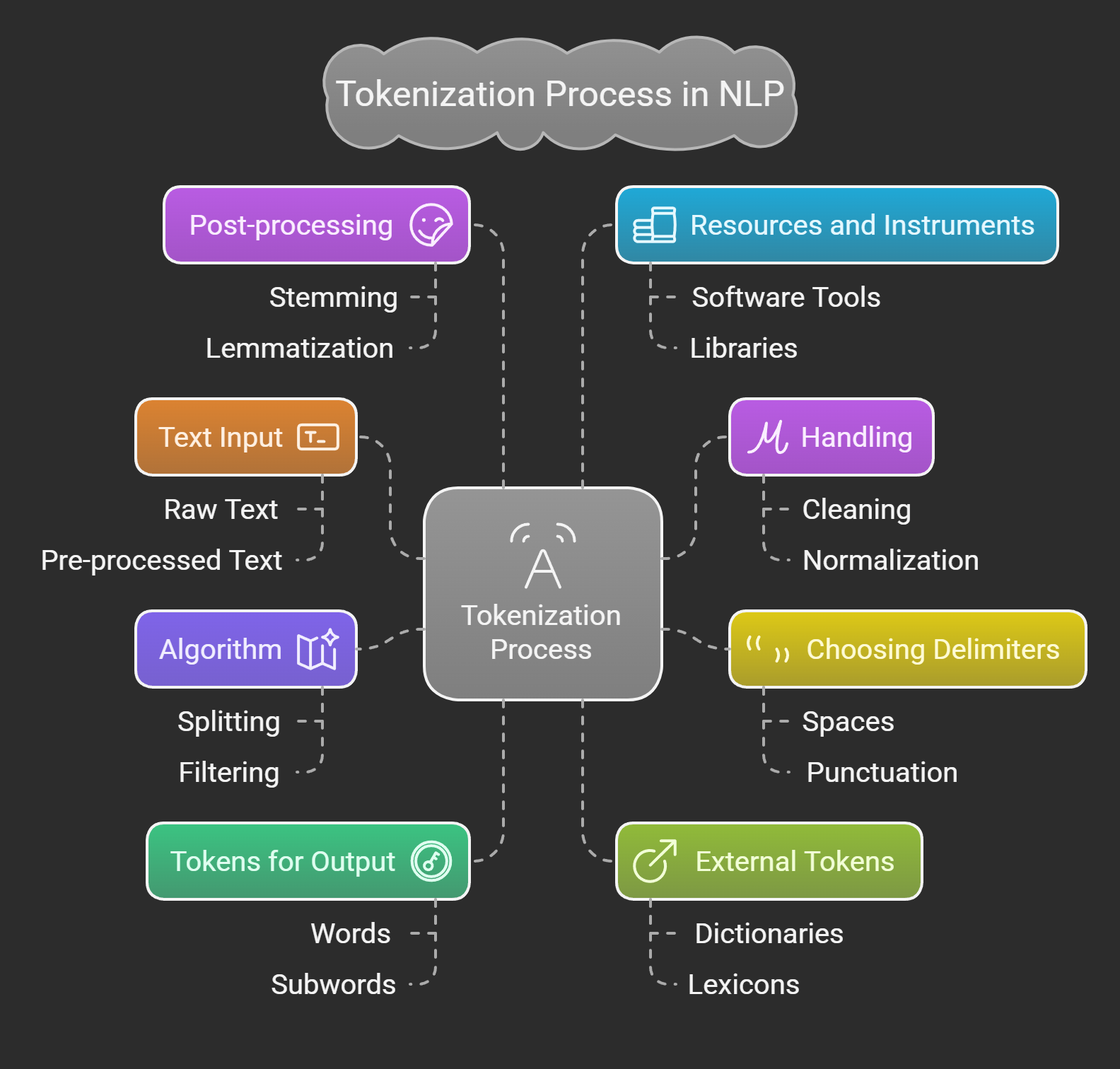

NLP Tokenization Types Comparison: Complete Guide

The goal of tokenization is to find the most meaningful representation, that is, the one that makes the most sense to the model, and, if possible, the smallest one. Let's take a look at some examples of tokenization algorithms and try to answer some of the questions you may have about tokenization. Tokenization is a foundational step in the NLP pipeline that shapes the entire workflow. It involves dividing a string or text into a list of smaller units known as tokens.

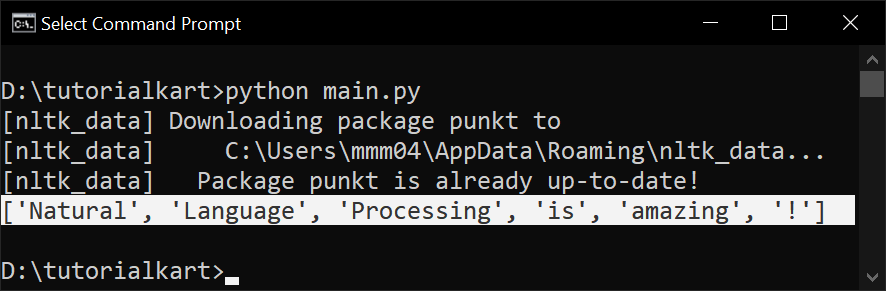

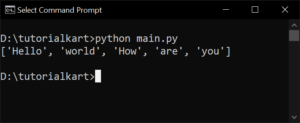

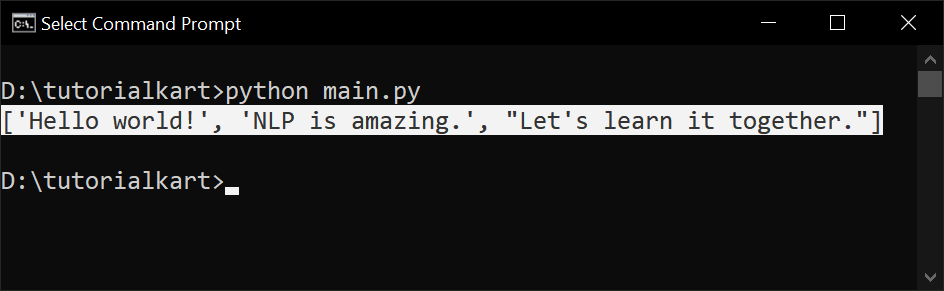

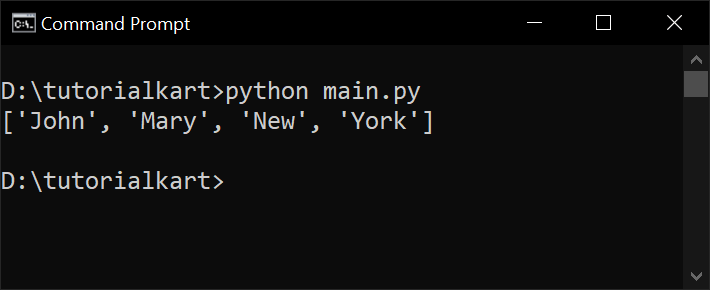

We will look at the big picture of what NLP is really about and give an overview of common tasks. Then we will take the first step in any NLP problem, which is tokenization, and explain it in a simple, beginner-friendly way using the Python library NLTK. Tokenization is a fundamental step in natural language processing (NLP): it breaks a stream of text into smaller, meaningful units called tokens. These tokens can be words, characters, or subwords. This tutorial explores various tokenization techniques with practical Python examples. Why can't we just use the Python `.split(' ')` function? Splitting on spaces leaves punctuation attached to words and produces empty strings wherever two spaces appear in a row, and it does not work at all for languages written without spaces between words.
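To make the `.split(' ')` question concrete, here is a minimal sketch that compares a plain split with a small regular-expression tokenizer. The sample sentence, the `TOKEN_PATTERN` regex, and the `regex_tokenize` helper are illustrative assumptions, not NLTK's implementation; NLTK's own tokenizers handle many more cases.

```python
import re

text = "Hello, world! Isn't tokenization fun?"

# Naive approach: split on single spaces.
# Punctuation stays glued to the neighbouring word.
naive_tokens = text.split(' ')
# ['Hello,', 'world!', "Isn't", 'tokenization', 'fun?']

# Consecutive spaces produce empty-string "tokens".
double_space_tokens = "a  b".split(' ')
# ['a', '', 'b']

# Assumed pattern for this sketch: a run of word characters, optionally
# followed by an apostrophe and more word characters (so "Isn't" stays
# whole), or a single punctuation character.
TOKEN_PATTERN = re.compile(r"\w+(?:'\w+)?|[^\w\s]")


def regex_tokenize(s):
    """Return word and punctuation tokens found in s."""
    return TOKEN_PATTERN.findall(s)


regex_tokens = regex_tokenize(text)
# ['Hello', ',', 'world', '!', "Isn't", 'tokenization', 'fun', '?']

print(naive_tokens)
print(double_space_tokens)
print(regex_tokens)
```

The regex version separates punctuation into its own tokens, which is usually what downstream steps such as counting word frequencies expect.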

In this article, we dive into practical tokenization techniques, an essential step in text preprocessing, using Python and the popular NLTK (Natural Language Toolkit) library. We cover the different types of tokenization, how they compare, and the scenarios where each one is used. While tokenization may sound simple, designing robust tokenizers can be challenging due to language variations, punctuation, and edge cases. It is nonetheless essential for preparing text data for various analytical and computational tasks.
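The three token granularities named in this guide (words, characters, and subwords) can be compared on one sentence. The sketch below is a toy illustration under stated assumptions: `TOY_VOCAB` is a hypothetical hand-written vocabulary (real subword vocabularies are learned from data), and `subword_tokens` uses a greedy longest-match-first split with a `##` continuation prefix in the style of WordPiece inference. It is not the API of any particular library.

```python
import re

# Hypothetical toy vocabulary for this sketch only.
TOY_VOCAB = {"token", "##ization", "is", "fun"}


def word_tokens(text):
    """Word-level tokens: lowercase runs of word characters."""
    return re.findall(r"\w+", text.lower())


def char_tokens(text):
    """Character-level tokens: every non-whitespace character."""
    return [ch for ch in text.lower() if not ch.isspace()]


def subword_tokens(word, vocab=TOY_VOCAB, unk="[UNK]"):
    """Greedy longest-match-first split of a single word into vocabulary pieces."""
    pieces, start = [], 0
    while start < len(word):
        end = len(word)
        piece = None
        # Shrink the candidate from the right until it is in the vocabulary.
        while end > start:
            candidate = word[start:end]
            if start > 0:
                candidate = "##" + candidate  # marks a word-internal piece
            if candidate in vocab:
                piece = candidate
                break
            end -= 1
        if piece is None:
            return [unk]  # no piece matches: the whole word is unknown
        pieces.append(piece)
        start = end
    return pieces


text = "Tokenization is fun"
words = word_tokens(text)
chars = char_tokens(text)
subwords = [p for w in words for p in subword_tokens(w)]

print(len(words), words)        # 3 ['tokenization', 'is', 'fun']
print(len(chars))               # 17
print(len(subwords), subwords)  # 4 ['token', '##ization', 'is', 'fun']
```

Word tokens give the shortest sequence but cannot represent unseen words, character tokens can represent anything at the cost of long sequences, and subword tokens sit between the two.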


Github Surge Dan Nlp Tokenization: How to Use the Maximum Matching Algorithm for Chinese Word Segmentation
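Chinese is written without spaces between words, so splitting on whitespace does not apply. Maximum matching for Chinese word segmentation, the topic named in the title above, can be sketched as follows. This is a generic forward maximum matching illustration, not the code from that repository: `DICTIONARY` is a hypothetical mini-dictionary and `forward_max_match` is a name chosen for this example.

```python
# Hypothetical mini-dictionary for this sketch; real segmenters use
# dictionaries with many thousands of entries.
DICTIONARY = {"我们", "经常", "有", "有意见", "意见", "分歧"}


def forward_max_match(text, dictionary=DICTIONARY, max_len=None):
    """Segment text left to right, always taking the longest dictionary word."""
    if max_len is None:
        max_len = max(len(word) for word in dictionary)
    tokens, i = [], 0
    while i < len(text):
        # Try the longest candidate first, then shrink it one character at a time.
        for size in range(min(max_len, len(text) - i), 0, -1):
            candidate = text[i:i + size]
            # A single character is always accepted as a fallback.
            if size == 1 or candidate in dictionary:
                tokens.append(candidate)
                i += size
                break
    return tokens


segments = forward_max_match("我们经常有意见分歧")
print(segments)  # ['我们', '经常', '有意见', '分歧']
```

The greedy choice picks the longer entry 有意见 even though 有 / 意见 is arguably the better reading in this sentence, which shows the kind of edge case that makes robust tokenizers hard to design.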

What Is Tokenization in NLP? How Does Tokenization Work?
