Transformers and Diffusion LLMs: What's the Connection?

Diffusion LLMs: A New Era of Large Language Models

Let's look more closely at how this plays out across different types of language models, focusing on the newest kid on the block: diffusion-based large language models (LLMs). The video explores the connection between transformers and diffusion-based LLMs, clarifying that the transformer is the foundational architecture behind both traditional autoregressive models like GPT and newer diffusion LLMs.

In this video, I unpack how transformers evolved from a machine translation tool into the general-purpose backbone of modern AI, powering autoregressive models like GPT and more. Among the many generative architectures, diffusion models and transformer models have emerged as two of the most prominent, each built on distinct underlying principles.

Transformers are the neural network architecture that powers modern LLMs. We'll explain how self-attention replaced older RNNs and why models like GPT are essentially stacks of transformer blocks. While LLMs and transformers are often discussed together, they serve different purposes and operate under distinct principles. This article covers the key differences between them and how each contributes to the field of AI.
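As a rough illustration of the self-attention mentioned above, here is a minimal sketch in plain Python. It assumes the input vectors serve directly as queries, keys, and values (a real transformer applies learned projections first), and the function names are illustrative rather than taken from any library. The `causal` flag shows the one switch that typically separates the two families: autoregressive models like GPT restrict each position to earlier positions, while a denoiser in a diffusion LLM can usually attend in both directions.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(queries, keys, values, causal=False):
    """Scaled dot-product attention over lists of equal-length vectors.

    Returns (outputs, weights). weights[i][j] is how much position i
    attends to position j.
    """
    dim = len(queries[0])
    outputs, all_weights = [], []
    for i, q in enumerate(queries):
        # With a causal mask, position i may only look at positions <= i.
        visible = list(range(i + 1)) if causal else list(range(len(keys)))
        scores = [
            sum(a * b for a, b in zip(q, keys[j])) / math.sqrt(dim)
            for j in visible
        ]
        row = [0.0] * len(keys)
        for j, w in zip(visible, softmax(scores)):
            row[j] = w
        # Each output is a weighted average of the value vectors.
        out = [
            sum(row[j] * values[j][k] for j in range(len(values)))
            for k in range(len(values[0]))
        ]
        outputs.append(out)
        all_weights.append(row)
    return outputs, all_weights


# Three toy token vectors, used as queries, keys, and values alike.
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
_, causal_w = self_attention(x, x, x, causal=True)
_, full_w = self_attention(x, x, x, causal=False)
```

With `causal=True` the first position can only attend to itself, whereas with `causal=False` every position places some weight on every other position. The attention computation itself is identical in both cases.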

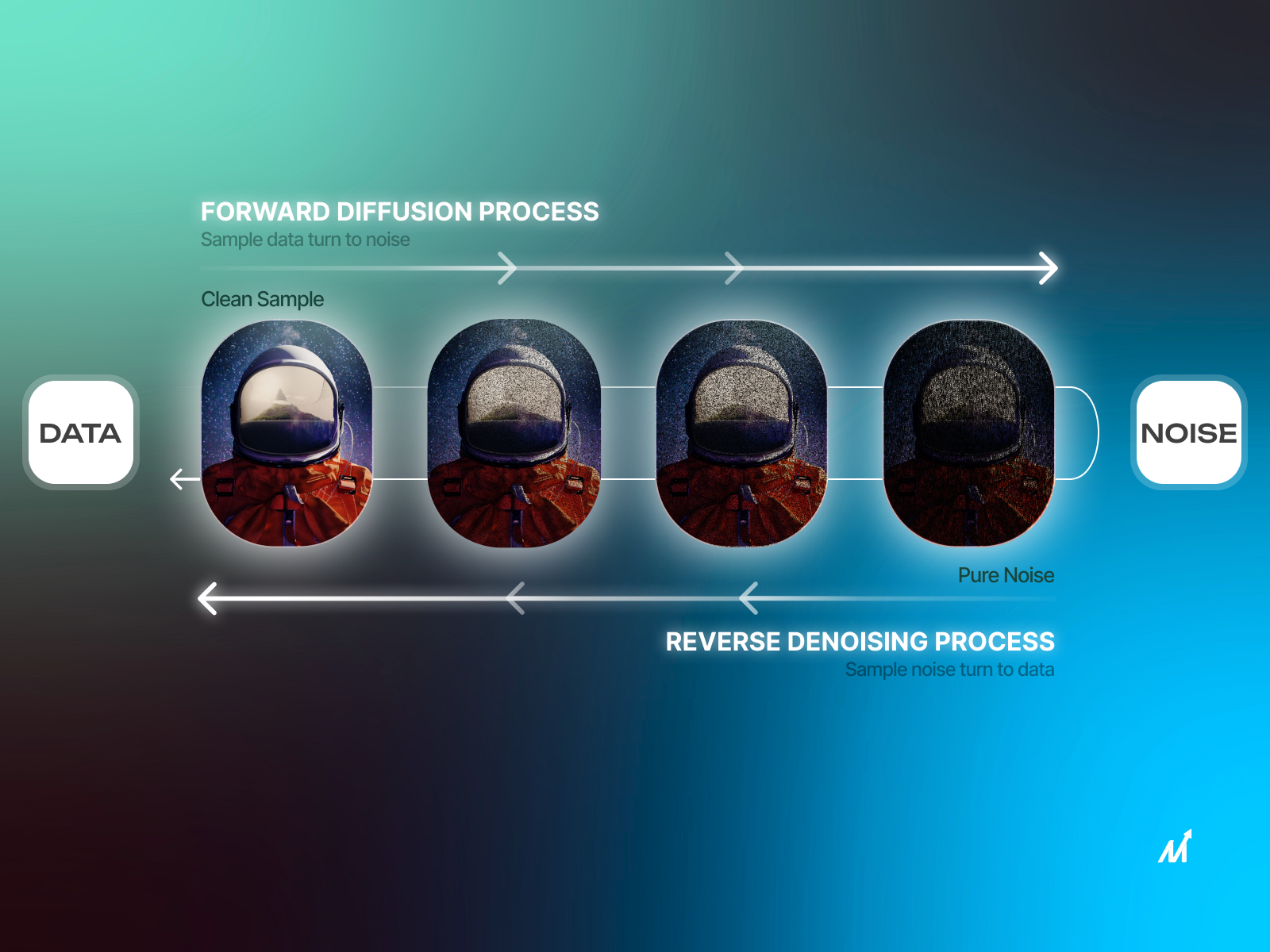

Transformers and LLMs Explained

In the rapidly evolving field of artificial intelligence (AI), two terms frequently dominate discussions: large language models (LLMs) and transformers. They are closely related, but they are not the same thing.

LLaDA illustrates the point. Its authors challenge the notion that LLMs must be autoregressive by introducing a diffusion model trained from scratch under the pre-training and supervised fine-tuning (SFT) paradigm. LLaDA employs a forward data masking process and a reverse generation process, parameterized by a transformer that predicts the masked tokens.

Diffusion transformers (DiTs) are a class of generative models that combine diffusion models with a transformer architecture. They replace the U-Net backbone commonly used in diffusion models with a transformer network, operating on latent-space representations instead of pixel space.

This is not just academic knowledge: understanding the transformer architecture will help you make better decisions about model selection, optimize your prompts, and debug issues when your LLM applications behave unexpectedly.
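The forward masking and reverse generation processes described above can be sketched in a few lines of Python. This is a simplified toy, not LLaDA's actual training or sampling code: `toy_predictor` is a hypothetical stand-in for the transformer that simply looks up the answer, and the reveal schedule (an even share of positions per step, chosen at random) is an assumption made to keep the example short.

```python
import math
import random

MASK = "[MASK]"


def forward_mask(tokens, t, rng):
    """Forward process: mask each token independently with probability t."""
    return [MASK if rng.random() < t else tok for tok in tokens]


def toy_predictor(noisy, target):
    """Hypothetical stand-in for the transformer.

    A real model would predict every masked token from the unmasked
    context in `noisy`; this toy version just returns the known answer.
    """
    return list(target)


def reverse_generate(length, steps, target, rng):
    """Reverse process: start fully masked, reveal some positions per step."""
    seq = [MASK] * length
    for step in range(steps):
        masked = [i for i, tok in enumerate(seq) if tok == MASK]
        if not masked:
            break
        guesses = toy_predictor(seq, target)
        # Reveal enough positions that the remaining steps can finish.
        n_reveal = math.ceil(len(masked) / (steps - step))
        for i in rng.sample(masked, n_reveal):
            seq[i] = guesses[i]
    return seq


rng = random.Random(0)
sentence = "transformers power both kinds of language model".split()
half_masked = forward_mask(sentence, 0.5, rng)
generated = reverse_generate(len(sentence), steps=3, target=sentence, rng=rng)
```

Unlike an autoregressive loop, which emits one token per step from left to right, this loop can fill in several positions anywhere in the sequence at each step. In both cases the predictor in the middle can be a transformer.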
