Transformers and LLMs Explained - Datatunnel

Explore transformers and LLMs in this insightful video by 3Blue1Brown. Large language models (LLMs) are deep neural networks based on the transformer architecture, trained on vast text corpora to predict the next token in a sequence.
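To make "predict the next token" concrete, here is a minimal sketch of the final step: the model's raw scores (logits) are turned into probabilities with a softmax, and one token is chosen. The vocabulary and the logit values are made up for illustration; a real model would compute the logits from the preceding context.

```python
import math

def softmax(logits):
    """Turn raw scores into a probability distribution."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical toy vocabulary and made-up scores for a context
# such as "the cat sat on the".
vocab = ["mat", "dog", "moon", "sat"]
logits = [3.0, 1.0, 0.5, -1.0]

probs = softmax(logits)

# Greedy decoding: pick the most probable token.
next_token = vocab[probs.index(max(probs))]
print(next_token, [round(p, 3) for p in probs])
```

Greedy selection is only one option; sampling from `probs` instead gives more varied text.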

Decoder-Only Transformers Explained: The Engine Behind LLMs, by Yash

An interactive visualization tool shows how transformer models work in large language models (LLMs) like GPT. Although LLMs and transformers are often discussed together, they are not the same thing: the transformer is a neural network architecture, while an LLM is a model built on that architecture and trained on text. This article delves into the key differences between LLMs and transformers, helping you understand how each contributes to the field of AI. Learn how LLMs work step by step: build an inference simulator in Python that tokenizes, embeds, computes attention, samples, and decodes, with runnable code at every stage.
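The stages named above (tokenize, embed, compute attention, sample, decode) can be sketched in a few dozen lines of plain Python. This is a toy under stated assumptions, not the simulator the snippet refers to: the vocabulary, the embedding width `D_MODEL`, the whitespace tokenizer, and the random untrained weights are all hypothetical, and the same vectors are reused as queries, keys, and values to keep the code short.

```python
import math
import random

random.seed(0)  # fixed seed so the toy run is repeatable

VOCAB = ["<unk>", "the", "cat", "sat", "on", "mat"]  # hypothetical vocabulary
TOKEN_TO_ID = {tok: i for i, tok in enumerate(VOCAB)}
D_MODEL = 4  # embedding width, chosen arbitrarily

# Random (untrained) embedding table: one vector per vocabulary entry.
EMBED = [[random.gauss(0, 1) for _ in range(D_MODEL)] for _ in VOCAB]

def tokenize(text):
    """Whitespace tokenizer; real LLMs use subword schemes such as BPE."""
    return [TOKEN_TO_ID.get(word, 0) for word in text.lower().split()]

def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def attend_last(vectors):
    """Single-head attention for the last position over all positions so far."""
    query = vectors[-1]
    scores = [dot(query, key) / math.sqrt(D_MODEL) for key in vectors]
    weights = softmax(scores)
    return [sum(w * v[i] for w, v in zip(weights, vectors))
            for i in range(D_MODEL)]

def next_token_probs(ids):
    vectors = [EMBED[i] for i in ids]           # embed
    context = attend_last(vectors)              # compute attention
    logits = [dot(context, e) for e in EMBED]   # score every vocabulary entry
    return softmax(logits)

def generate(prompt, steps=3):
    ids = tokenize(prompt)                      # tokenize
    for _ in range(steps):
        probs = next_token_probs(ids)
        ids.append(random.choices(range(len(VOCAB)), weights=probs)[0])  # sample
    return " ".join(VOCAB[i] for i in ids)      # decode

output = generate("the cat sat")
print(output)
```

Because the weights are random, the continuation is meaningless; the point is the shape of the loop, in which each generated token is appended to the input before the next prediction.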

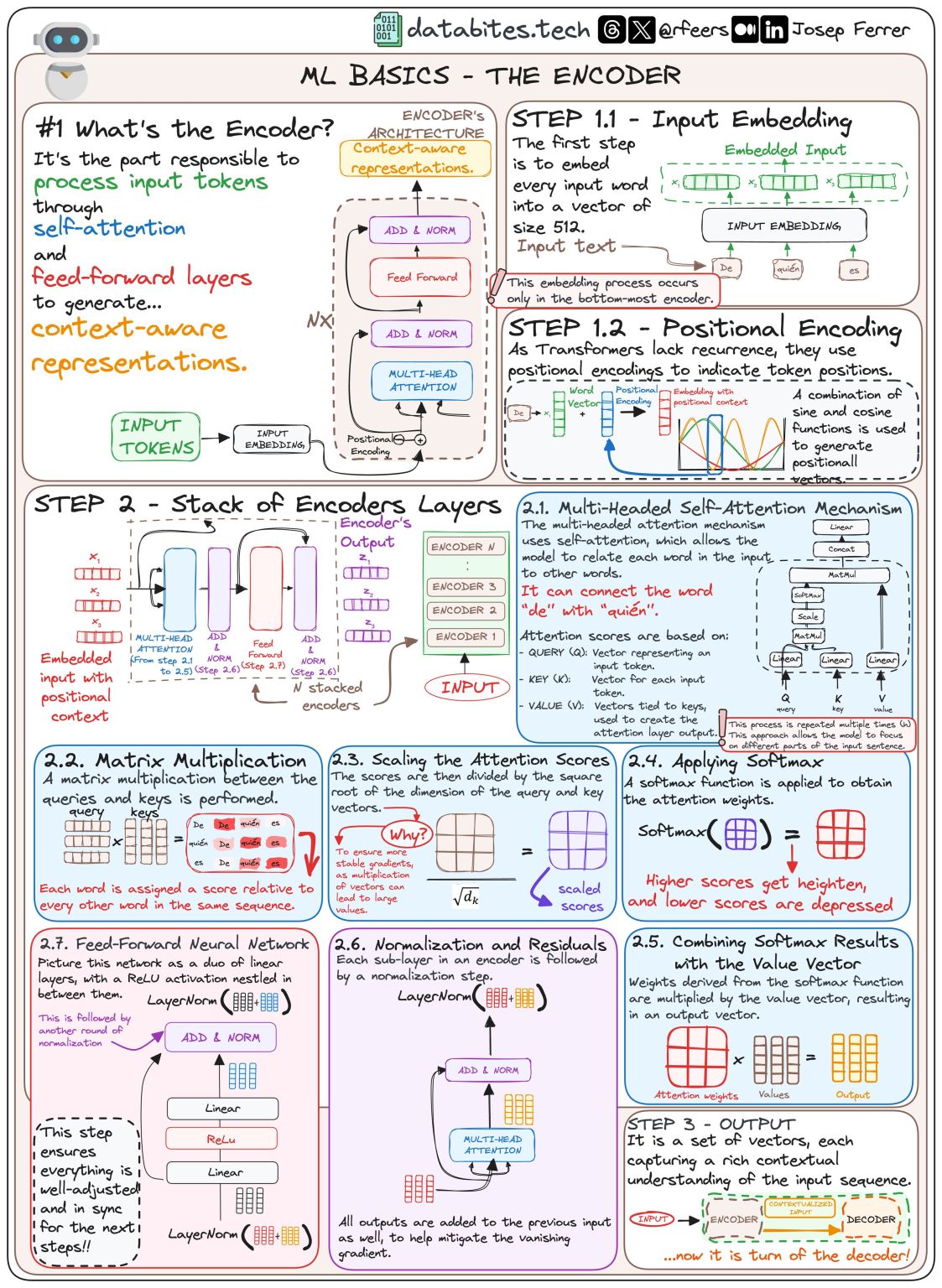

Kalyan KS on LinkedIn: Transformers, LLMs, NLProc, Deep Learning

This article is my attempt to bridge that gap: explaining transformers the way I wish someone had explained them to me. For an introduction to what a large language model (LLM) is, refer to the article I published previously. We will simplify the workings of the transformer architecture for LLMs and see in 9 steps how it shapes the way LLMs behave. Step 1. Before passing text into the model to process, ...

In this article, we break down how transformers work, from text representation to self-attention, multi-head attention, and the full transformer block, showing how these components come together to generate language effectively. Understand how large language models actually work, from tokenization and embeddings through self-attention and transformers to next-token prediction, explained for practitioners, not researchers.
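As a rough illustration of self-attention and multi-head attention, the sketch below applies causal scaled dot-product attention and then splits each vector into slices, one per head. It rests on simplifying assumptions: the learned query, key, value, and output projections of a real transformer are left out (treated as identity), and the input matrix `x` is invented for the example.

```python
import math

def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def causal_self_attention(q, k, v):
    """Scaled dot-product attention; position i sees only positions <= i."""
    d = len(q[0])
    out = []
    for i, qi in enumerate(q):
        scores = [sum(a * b for a, b in zip(qi, k[j])) / math.sqrt(d)
                  for j in range(i + 1)]
        weights = softmax(scores)
        out.append([sum(w * v[j][c] for j, w in enumerate(weights))
                    for c in range(len(v[0]))])
    return out

def multi_head(x, n_heads):
    """Split each vector into n_heads slices, attend per slice, concatenate.

    Learned projections are omitted here, which a real model would not do.
    """
    d = len(x[0])
    assert d % n_heads == 0, "width must divide evenly across heads"
    head_dim = d // n_heads
    heads = []
    for h in range(n_heads):
        part = [vec[h * head_dim:(h + 1) * head_dim] for vec in x]
        heads.append(causal_self_attention(part, part, part))
    # Concatenate the per-head outputs back into full-width vectors.
    return [sum((head[i] for head in heads), []) for i in range(len(x))]

# Made-up input: 3 positions, width 4.
x = [[1.0, 0.0, 0.0, 1.0],
     [0.0, 1.0, 1.0, 0.0],
     [1.0, 1.0, 0.0, 0.0]]
y = multi_head(x, n_heads=2)
for row in y:
    print([round(val, 3) for val in row])
```

The first output row equals the first input row, because under the causal mask the first position can attend only to itself. A full transformer block would add residual connections, layer normalization, and a feed-forward network around this step.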
