Transformers and LLMs

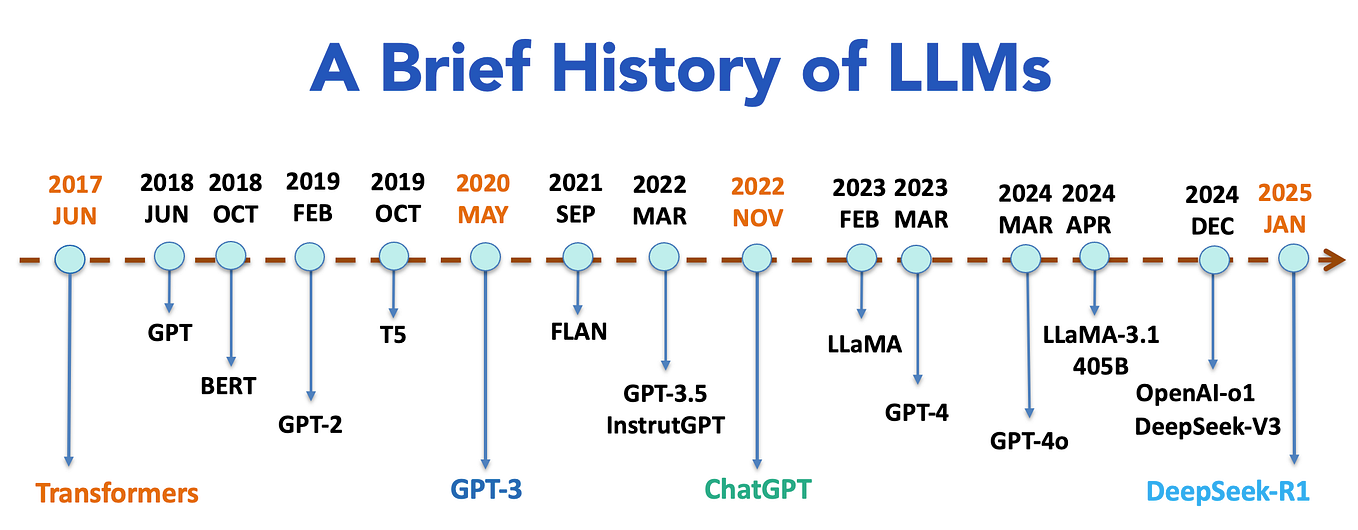

Large language models (LLMs) are deep neural networks based on the transformer architecture, trained on vast text corpora to predict the next token in a sequence. Although the two terms are often used together, they are not interchangeable: a transformer is an architecture, while an LLM is a model built on that architecture and trained at scale on text. This article covers the key differences between LLMs and transformers and how each contributes to the field of AI.
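To make "predict the next token" concrete, here is a minimal sketch of the final step of that prediction: turning a model's raw scores into probabilities and picking the most likely token. The vocabulary and the scores are made up for illustration; a real LLM produces one score per entry in a vocabulary of tens of thousands of tokens.

```python
import math


def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical vocabulary and scores for the context "the cat sat on the".
vocab = ["mat", "dog", "moon", "sofa"]
logits = [3.0, 0.5, -1.0, 2.0]

probs = softmax(logits)
# Greedy choice: take the token with the highest probability.
next_token = vocab[probs.index(max(probs))]
print(next_token, [round(p, 3) for p in probs])
```

Greedy selection is only one option. Sampling from `probs` instead gives more varied text, at the cost of occasionally picking unlikely tokens.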

By converting text to numerical representations, using attention mechanisms to capture relationships between words, and stacking many layers to learn increasingly abstract patterns, transformers enable modern LLMs to produce coherent and useful text. In this course, you'll learn how the transformer architecture that powers LLMs works. You'll build intuition for how LLMs process text and work with code examples that illustrate the key components of the transformer architecture.

After spending months studying transformer architectures and building LLM applications, I realized something: most explanations are either overwhelming or leave out important details. This article is my attempt to bridge that gap by explaining transformers the way I wish someone had explained them to me.
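As a rough illustration of how attention captures relationships between tokens, the sketch below implements scaled dot-product attention in plain Python. It is a simplification under stated assumptions: the embeddings are made up, and the same vectors are used as queries, keys, and values, whereas a real transformer first applies separate learned projections to each.

```python
import math


def softmax(xs):
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    s = sum(exps)
    return [e / s for e in exps]


def attention(Q, K, V):
    """Scaled dot-product attention over lists of vectors.

    Returns the output vectors and the attention weights per query.
    """
    d_k = len(K[0])
    outputs, all_weights = [], []
    for q in Q:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d_k) for k in K]
        w = softmax(scores)
        all_weights.append(w)
        # Output is the weighted average of the value vectors.
        outputs.append(
            [sum(wj * v[i] for wj, v in zip(w, V)) for i in range(len(V[0]))]
        )
    return outputs, all_weights


# Made-up 2-dimensional embeddings for three tokens.
X = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
out, weights = attention(X, X, X)
for row in weights:
    print([round(w, 3) for w in row])
```

Each row of `weights` sums to 1 and says how much one token draws on every other token. Stacking many such layers, each with its own learned projections, is what lets the model build up more abstract patterns.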

This section covers the transition from traditional deep learning to the modern transformer-based architectures that power large language models (LLMs). The curriculum is split into two primary tracks: the mathematical mechanics of the transformer architecture (Phase 7) and the engineering pipeline required to build and train LLMs (Phase 10).

Understanding transformer mechanics is not just academic. It changes how you think about adopting LLMs responsibly and effectively. Here are practical implications for Canadian tech and business leaders:

1) Context window limits affect real deployments. Transformers can only attend within the sequence length they were designed to handle.

Explore the reasons behind the development of the transformer architecture, including its advantages over previous models like RNNs and LSTMs in handling sequential data.

Learn how LLMs work step by step. Build an inference simulator in Python: tokenize, embed, compute attention, sample, and decode, with runnable code at every stage.
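The inference stages named above (tokenize, embed, compute attention, sample, decode) and the context window limit can be sketched together in a toy simulator. Everything here is an assumption made for illustration: the six-word vocabulary, the whitespace tokenizer, the random embeddings, the `MAX_CONTEXT` value, and the scoring function, which stands in for a trained model and produces no meaningful text.

```python
import math
import random

VOCAB = ["<unk>", "the", "cat", "sat", "on", "mat"]  # hypothetical vocabulary
TOKEN_TO_ID = {t: i for i, t in enumerate(VOCAB)}
MAX_CONTEXT = 4  # hypothetical context window, in tokens
DIM = 4          # embedding size

rng = random.Random(0)
# Random embeddings stand in for learned ones.
EMBED = [[rng.uniform(-1, 1) for _ in range(DIM)] for _ in VOCAB]


def tokenize(text):
    """Whitespace tokenizer; unknown words map to <unk> (id 0)."""
    return [TOKEN_TO_ID.get(w, 0) for w in text.lower().split()]


def decode(ids):
    return " ".join(VOCAB[i] for i in ids)


def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    s = sum(exps)
    return [e / s for e in exps]


def next_token_logits(ids):
    """Stand-in for a trained model; expects at least one token."""
    ctx = ids[-MAX_CONTEXT:]  # tokens outside the window are invisible
    vecs = [EMBED[i] for i in ctx]  # embed
    q = vecs[-1]  # the last token attends over the context
    scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(DIM) for k in vecs]
    weights = softmax(scores)
    summary = [sum(w * v[d] for w, v in zip(weights, vecs)) for d in range(DIM)]
    # Score every vocabulary entry against the context summary.
    return [sum(s * e for s, e in zip(summary, emb)) for emb in EMBED]


def generate(prompt, steps=3, temperature=1.0):
    ids = tokenize(prompt)
    for _ in range(steps):
        logits = next_token_logits(ids)
        probs = softmax([x / temperature for x in logits])
        ids.append(rng.choices(range(len(VOCAB)), weights=probs)[0])  # sample
    return decode(ids)


print(generate("the cat sat on the mat"))
```

Because `next_token_logits` only looks at the last `MAX_CONTEXT` tokens, the first two words of the six-word prompt have no influence on what is generated. Production models have far larger windows, but the same cutoff applies once an input exceeds them.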
