Transformer Aiworks

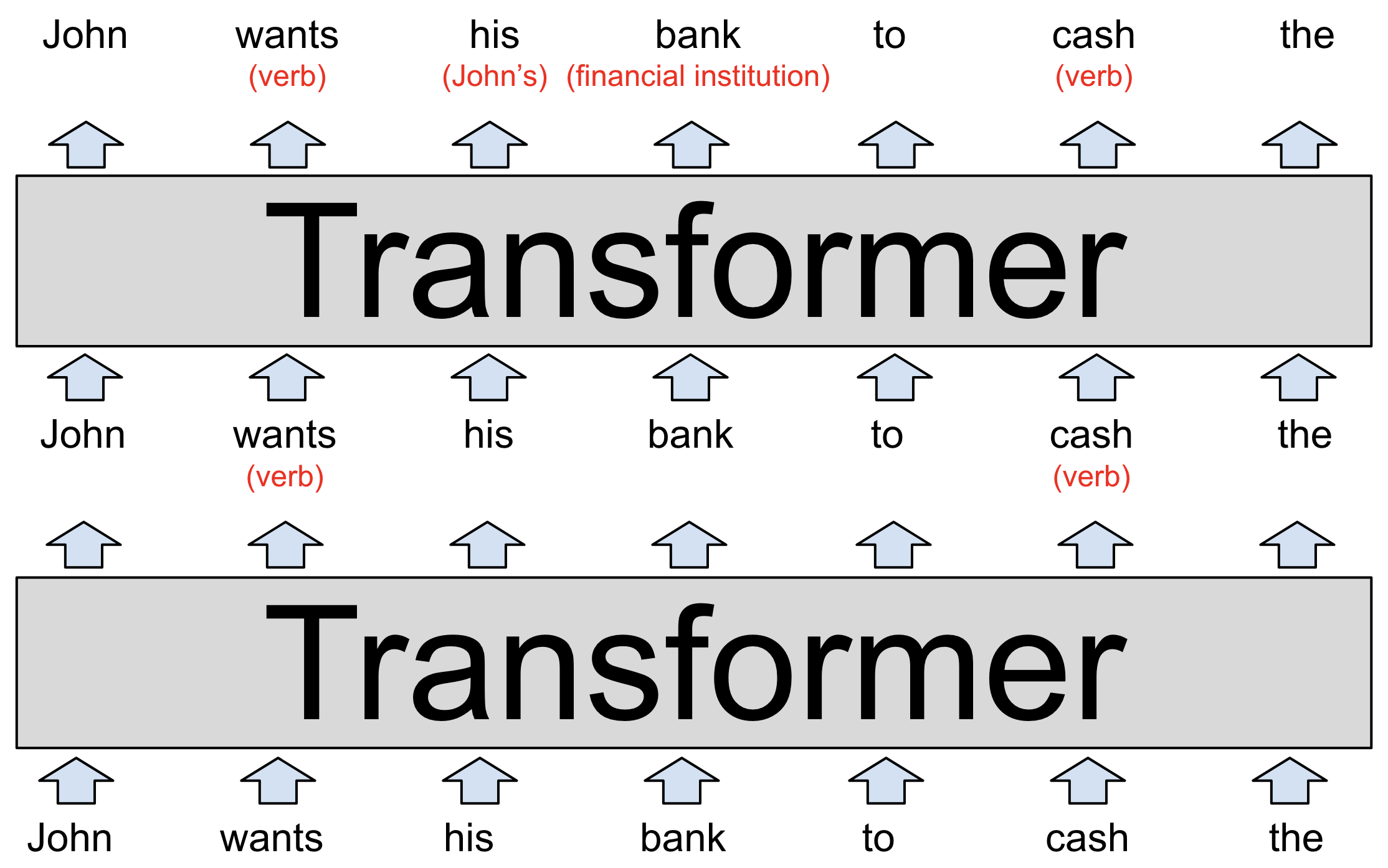

The transformer is the core architecture behind modern AI, powering models like ChatGPT and Gemini. Introduced in 2017, it revolutionized how AI processes information. The same architecture is used both for training on massive datasets and for inference to generate outputs. Transformers are a type of deep learning model that uses self-attention mechanisms to process and generate sequences of data efficiently. They capture long-range dependencies and contextual relationships, which makes them highly effective for tasks like language modeling, machine translation and text generation.
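To make the self-attention idea concrete, here is a minimal pure-Python sketch of scaled dot-product self-attention, the formulation from the 2017 transformer paper: each token's query is compared with every token's key, the scores are scaled by √d_k and normalised with a softmax, and the resulting weights mix the value vectors. The helper names (`softmax`, `matmul`, `self_attention`) and the toy weight matrices are illustrative assumptions, not code from any particular library; real models use tensor libraries and learned weights.

```python
import math

def softmax(xs):
    # Subtract the max before exponentiating for numerical stability.
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def matmul(a, b):
    # Multiply an n x k matrix by a k x m matrix (lists of rows).
    return [[sum(a[i][t] * b[t][j] for t in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]

def transpose(m):
    return [list(col) for col in zip(*m)]

def self_attention(x, w_q, w_k, w_v):
    """Scaled dot-product self-attention: softmax(Q K^T / sqrt(d_k)) V."""
    q, k, v = matmul(x, w_q), matmul(x, w_k), matmul(x, w_v)
    d_k = len(k[0])
    # Every token's query is scored against every token's key.
    scores = matmul(q, transpose(k))
    weights = [softmax([s / math.sqrt(d_k) for s in row]) for row in scores]
    # Each output row is a weighted mix of all value vectors.
    return matmul(weights, v), weights

# Toy example (hypothetical values): 3 tokens with 2-dimensional embeddings,
# identity matrices standing in for learned projections.
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
identity = [[1.0, 0.0], [0.0, 1.0]]
out, weights = self_attention(x, identity, identity, identity)
for row in weights:
    print([round(w, 3) for w in row])
```

Each row of `weights` sums to 1 and says how much that token draws on every other token, which is how the model relates distant words in one step.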

We work exclusively with CEOs and operating partners ready to commit to rapid AI transformation, bridging technology and business strategy to drive immediate ROI and long-term business defensibility.

A transformer is a type of artificial intelligence model that learns to understand and generate human-like text by analyzing patterns in large amounts of text data. It is a deep learning architecture that lets LLMs understand context by processing data in parallel, relying on the multi-head attention mechanism. In this section, we will look at the architecture of transformer models and dive deeper into the concepts of attention, the encoder-decoder architecture, and more. 🚀 We're taking things up a notch here. This section is detailed and technical, so don't worry if you don't understand everything right away.
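Multi-head attention runs several attention computations side by side on different slices of the projected queries, keys and values, then concatenates and re-projects the results. The sketch below assumes the common implementation trick of projecting once and splitting the features into `num_heads` equal chunks (equivalent to giving each head its own projection matrix); the function names and toy sizes are hypothetical. Heads are computed in a loop here for clarity; because they are independent, real implementations evaluate them in parallel as batched tensor operations.

```python
import math

def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    s = sum(exps)
    return [e / s for e in exps]

def matmul(a, b):
    return [[sum(a[i][t] * b[t][j] for t in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]

def attention(q, k, v):
    # Scaled dot-product attention for a single head.
    d_k = len(k[0])
    scores = matmul(q, [list(col) for col in zip(*k)])
    weights = [softmax([s / math.sqrt(d_k) for s in row]) for row in scores]
    return matmul(weights, v)

def split_heads(m, num_heads):
    # Slice each token's feature vector into num_heads contiguous chunks.
    d = len(m[0])
    if d % num_heads != 0:
        raise ValueError("feature size must be divisible by num_heads")
    size = d // num_heads
    return [[row[h * size:(h + 1) * size] for row in m] for h in range(num_heads)]

def multi_head_attention(x, w_q, w_k, w_v, w_o, num_heads):
    q, k, v = matmul(x, w_q), matmul(x, w_k), matmul(x, w_v)
    # Each head attends independently over its own slice of the features.
    heads = [attention(qh, kh, vh) for qh, kh, vh in
             zip(split_heads(q, num_heads), split_heads(k, num_heads), split_heads(v, num_heads))]
    # Concatenate the head outputs per token, then apply the output projection.
    concat = [[val for head in heads for val in head[i]] for i in range(len(x))]
    return matmul(concat, w_o)

# Toy usage (hypothetical values): 3 tokens, 4 features, 2 heads, identity projections.
I4 = [[1.0 if i == j else 0.0 for j in range(4)] for i in range(4)]
x = [[1.0, 0.0, 0.5, 0.0], [0.0, 1.0, 0.0, 0.5], [1.0, 1.0, 1.0, 1.0]]
print(multi_head_attention(x, I4, I4, I4, I4, num_heads=2))
```

Splitting into heads lets each one learn a different kind of relationship (for example, syntax in one head and coreference in another) at roughly the cost of one full-width attention.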

The team's goal was to give non-experts a hands-on introduction to what goes on under the hood of a transformer-based language model, which learns from large-scale data how to mimic human-generated text. The tool integrates a live GPT-2 model that runs locally in the user's web browser. Discover the real architecture behind transformer models, the foundation of ChatGPT and modern AI, and learn how multi-head attention, layers, and positional encoding work step by step. Transformers process an entire sequence at once (be it a sentence, a paragraph or an entire article), analysing all of its parts rather than just individual words. This allows the software to capture… The transformer is a neural network architecture used for various machine learning tasks, especially in natural language processing and computer vision. It focuses on understanding relationships within data to process information more effectively. Key characteristics:

- uses attention mechanisms to capture relationships between inputs
- processes entire sequences at once instead of step by step
- improves performance on…
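Because a transformer processes every token at once, it needs some other way to know word order; positional encoding supplies it. Here is a minimal sketch of the fixed sinusoidal encoding proposed in the original 2017 transformer paper, PE(pos, 2i) = sin(pos / 10000^(2i/d_model)) and PE(pos, 2i+1) = cos(pos / 10000^(2i/d_model)). This is one scheme among several (GPT-2, for example, learns its position embeddings instead), and the function names are hypothetical.

```python
import math

def sinusoidal_positional_encoding(seq_len, d_model):
    """Return a seq_len x d_model table of fixed sinusoidal position vectors."""
    table = []
    for pos in range(seq_len):
        row = []
        for i in range(d_model):
            # Dimensions 2k and 2k+1 share the frequency 1 / 10000^(2k / d_model).
            exponent = (i - i % 2) / d_model
            angle = pos / (10000 ** exponent)
            # Even dimensions use sine, odd dimensions use cosine.
            row.append(math.sin(angle) if i % 2 == 0 else math.cos(angle))
        table.append(row)
    return table

def add_positions(embeddings):
    """Add the positional encoding element-wise to a list of token embeddings."""
    pe = sinusoidal_positional_encoding(len(embeddings), len(embeddings[0]))
    return [[e + p for e, p in zip(e_row, p_row)]
            for e_row, p_row in zip(embeddings, pe)]

# Toy usage (hypothetical sizes): 4 positions, 6 dimensions.
for row in sinusoidal_positional_encoding(4, 6):
    print([round(v, 3) for v in row])
```

Adding these vectors to the token embeddings gives identical words at different positions different inputs, so attention can take order into account.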

