Towards Aligning Language Models with Textual Feedback (ACL Anthology)

ALT (Alignment with Textual Feedback) aligns the model by conditioning its generation on textual feedback. Our method relies solely on language modeling techniques and requires minimal hyperparameter tuning, while retaining the main benefits of RL-based alignment algorithms, and it can learn effectively from textual feedback. We argue that text offers greater expressiveness, enabling users to provide richer feedback than simple comparative preferences, and that this richer feedback can lead to more efficient and effective alignment.
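To make the idea of conditioning generation on textual feedback concrete, here is a minimal sketch of how training and inference inputs could be assembled. It is not the authors' implementation: the `Feedback:/Input:/Output:` template, the `FeedbackSample` type, the function names, and the choice to apply the loss only to response tokens are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class FeedbackSample:
    prompt: str    # input shown to the model
    response: str  # a response previously sampled from the model
    feedback: str  # textual feedback describing that response


def build_training_text(sample: FeedbackSample) -> Tuple[str, str]:
    """Return (context, target) for one sample.

    The context places the feedback before the input, so the model learns
    to generate the response *given* the feedback. The template itself is
    hypothetical.
    """
    context = f"Feedback: {sample.feedback}\nInput: {sample.prompt}\nOutput: "
    return context, sample.response


def loss_mask(context_tokens: List[str], target_tokens: List[str]) -> List[int]:
    """Mark which positions contribute to the language-modeling loss.

    Assumption: only response tokens (1) are trained on; feedback and
    input tokens (0) serve purely as conditioning.
    """
    return [0] * len(context_tokens) + [1] * len(target_tokens)


def build_inference_prompt(prompt: str, desired_feedback: str) -> str:
    """At inference, condition on the feedback we would like to receive."""
    return f"Feedback: {desired_feedback}\nInput: {prompt}\nOutput: "
```

Under these assumptions, training reduces to ordinary next-token prediction on the concatenated context and response, and steering at inference means writing the desired feedback (for example, "Non-toxic and on topic.") into the prompt. A real setup would replace the token lists with the output of an actual tokenizer.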

In this work, we propose a text-based feedback mechanism for aligning language models. We posit that providing models with textual feedback, rather than numerical scores, can offer a more nuanced and informative learning signal for understanding human preferences. We explore the efficacy and efficiency of textual feedback across different tasks such as toxicity reduction, summarization, and dialog response generation.

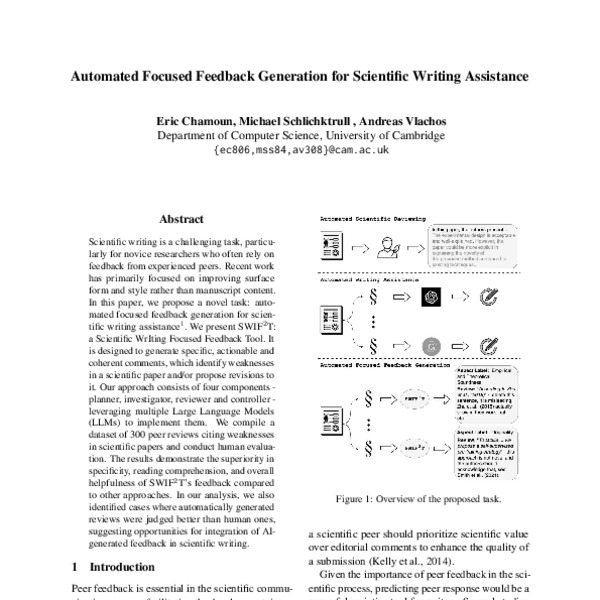

We present ALT (Alignment with Textual Feedback), an approach that aligns language models with user preferences expressed in text. Contact: Keerthiram.murugesan@ibm. Related work proposes Chain of Hindsight, a technique that is easy to optimize and can learn from any form of feedback, regardless of its polarity, and that is reported to significantly surpass previous methods in aligning language models with human preferences. The ALT for LLM alignment repository is the official implementation of Towards Aligning Language Models with Textual Feedback.
