Top Parallelization Techniques for Enhancing AI Training

Training large language models (LLMs) often runs into GPU memory and compute limitations. To overcome these limitations, parallelism techniques can significantly speed up your AI workflows. This blog explores parallelization techniques such as data, model, and tensor parallelism, which improve efficiency, speed up training, and optimize AI deployment across multiple GPUs. Here is a quick overview of the most effective strategies.
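As a rough illustration of the tensor parallelism named above, the sketch below splits a weight matrix column-wise across simulated devices, lets each "device" multiply the same input by its own slice, and concatenates the partial outputs. This is a toy in pure Python under stated assumptions: the helper names (`matmul`, `split_columns`, `tensor_parallel_matmul`) are hypothetical, the devices are simulated by a list, and the column count is assumed to divide evenly by the number of parts. Real frameworks do the same split on GPUs with collective communication.

```python
def matmul(a, b):
    """Plain matrix product of two lists-of-lists."""
    return [
        [sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
        for i in range(len(a))
    ]


def split_columns(w, parts):
    """Split weight matrix w into `parts` equal column blocks (hypothetical helper).

    Assumes the number of columns is divisible by `parts`.
    """
    size = len(w[0]) // parts
    return [[row[p * size:(p + 1) * size] for row in w] for p in range(parts)]


def tensor_parallel_matmul(x, w, parts):
    """Compute x @ w with w sharded column-wise across simulated devices."""
    shards = split_columns(w, parts)
    # Each simulated device holds one column block and sees the full input x.
    partials = [matmul(x, shard) for shard in shards]
    # Stand-in for an all-gather: concatenate the column blocks row by row.
    return [sum((p[i] for p in partials), []) for i in range(len(x))]


x = [[1.0, 2.0], [3.0, 4.0]]
w = [[1.0, 0.0, 2.0, 1.0], [0.0, 1.0, 1.0, 3.0]]
sharded = tensor_parallel_matmul(x, w, parts=2)
print(sharded)
```

Each device stores only a fraction of the weights, which is how this style of split eases the per-GPU memory limits mentioned above.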

This blog therefore summarizes some commonly used distributed parallel training and memory management techniques, in the hope of helping readers train and optimize large models. With the right parallelization strategy, you can train models that would otherwise be computationally infeasible and explore architectural innovations that require massive scale. The main families used in large-scale AI model training are data, pipeline, tensor, and expert parallelism. Parallel processing is also crucial for building high-performance LLM applications.

The increasing complexity and size of large language models (LLMs) have necessitated sophisticated parallel strategies to optimize their training and deployment and to improve the utilization of computational resources. Data, model, and hybrid parallelism are the distributed machine learning methods used to optimize the training of large generative AI models. The literature on parallel strategies for LLMs, covering both training and inference, emphasizes the need for adaptable parallel strategies.

Here are the main parallel training methods and their benefits. Data parallelism is one of the most widely used parallel training techniques in AI. It copies the model parameters to multiple GPUs and assigns different data examples to each GPU for simultaneous processing.
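The data parallelism described above can be sketched in a few lines of pure Python. This is a minimal simulation, not a real multi-GPU implementation: the "GPUs" are list entries, the model is a hypothetical one-variable linear fit `y ≈ w*x + b`, and `all_reduce_mean` is an illustrative stand-in for the gradient averaging that distributed frameworks perform with collective communication. It assumes the batch size is divisible by the number of workers.

```python
def local_gradient(w, b, shard):
    """Mean-squared-error gradient of y ≈ w*x + b over one worker's shard."""
    n = len(shard)
    grad_w = sum(2 * (w * x + b - y) * x for x, y in shard) / n
    grad_b = sum(2 * (w * x + b - y) for x, y in shard) / n
    return grad_w, grad_b


def all_reduce_mean(grads):
    """Average per-worker gradients (stand-in for a real all-reduce)."""
    k = len(grads)
    return tuple(sum(g[i] for g in grads) / k for i in range(2))


def data_parallel_step(w, b, batch, num_workers, lr=0.01):
    """One training step: same parameters everywhere, different data per worker."""
    # Assumes len(batch) is divisible by num_workers.
    shard_size = len(batch) // num_workers
    shards = [batch[i * shard_size:(i + 1) * shard_size] for i in range(num_workers)]
    # Every simulated worker uses an identical copy of (w, b) on its own shard.
    grads = [local_gradient(w, b, shard) for shard in shards]
    grad_w, grad_b = all_reduce_mean(grads)
    # Applying the same averaged gradient keeps all replicas in sync.
    return w - lr * grad_w, b - lr * grad_b


# Toy data generated from y = 3x + 1.
batch = [(float(x), 3.0 * x + 1.0) for x in range(8)]
w, b = 0.0, 0.0
for _ in range(2000):
    w, b = data_parallel_step(w, b, batch, num_workers=4)
print(round(w, 3), round(b, 3))
```

With equal-sized shards, the averaged gradient matches the gradient a single device would compute over the whole batch, so the split changes where the work happens rather than what is learned.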

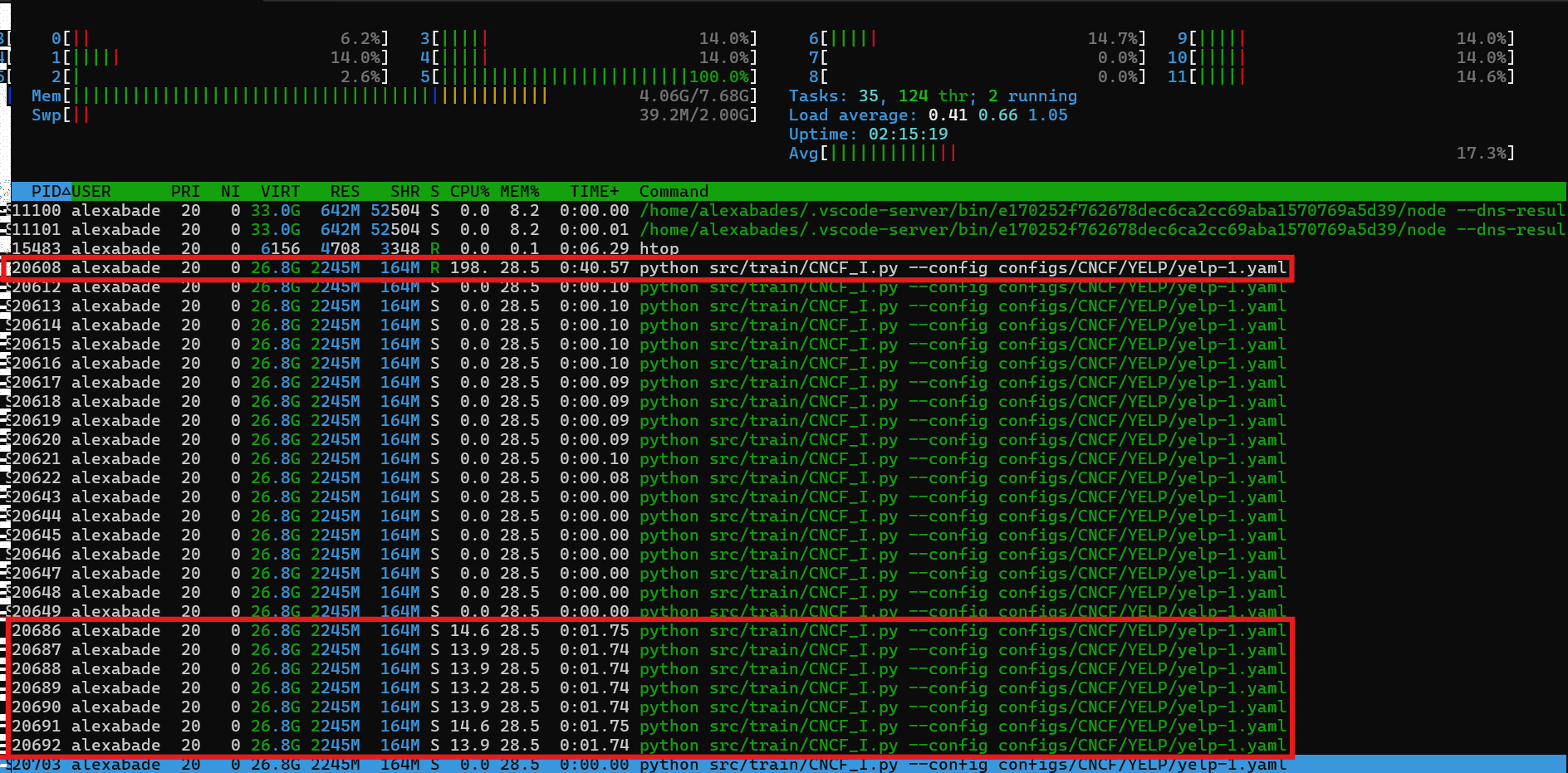
