Tokenization Techniques in NLP (PDF)

Tokenization in NLP (PDF): Word Information

We start by outlining the main tokenization techniques: word-level, subword-level, and character-level tokenization. We then cover the benefits and drawbacks of different tokenization strategies, including rule-based, statistical, and neural-network-based techniques. To tokenize new text at test time with the BPE (byte-pair encoding) algorithm, we split it into characters and apply the learned merge rules in order.
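The test-time BPE step described above can be sketched as follows. This assumes the merge rules have already been learned. The list `toy_merges` is a made-up example rather than a real vocabulary, and `bpe_encode` is a hypothetical helper name. The function splits a word into characters and then applies each merge rule in the order it was learned:

```python
from typing import List, Tuple


def bpe_encode(word: str, merges: List[Tuple[str, str]]) -> List[str]:
    """Split a word into characters, then apply BPE merge rules in learned order."""
    symbols = list(word)  # start from individual characters
    for left, right in merges:  # earlier (higher-priority) rules are applied first
        merged: List[str] = []
        i = 0
        while i < len(symbols):
            # Merge this adjacent pair if it matches the current rule
            if i + 1 < len(symbols) and symbols[i] == left and symbols[i + 1] == right:
                merged.append(left + right)
                i += 2
            else:
                merged.append(symbols[i])
                i += 1
        symbols = merged
    return symbols


# Hypothetical merge rules, listed in the order they were learned
toy_merges = [("l", "o"), ("lo", "w"), ("e", "r")]

print(bpe_encode("lower", toy_merges))
print(bpe_encode("lowest", toy_merges))
```

Applying the rules in learned order matters because later merges build on tokens created by earlier ones (here `"lo"` must exist before `("lo", "w")` can fire). A word never seen during training still decomposes into known subwords or, failing that, smaller pieces down to single characters.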

NLP Tokenization Types Comparison: Complete Guide (PDF)

In this paper, the authors address the significance and complexity of tokenization, the first step of any NLP pipeline. The document outlines a laboratory practice course focused on natural language processing (NLP), detailing experiments such as tokenization, stemming, lemmatization, and the bag-of-words approach. Multiple tokenization methods exist, including whitespace, rule-based, statistical, and subword tokenization, each with its own advantages and challenges depending on the linguistic context.

Abstract: Tokenization is a critical step in the NLP pipeline. The use of token representations is widely credited with increased model performance, but it is also the source of many undesirable behaviors, such as spurious ambiguity or inconsistency. Despite its recognized importance as a standard representation method in NLP, the theoretical underpinnings of toke…
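The "spurious ambiguity" mentioned in the abstract can be made concrete. With a subword vocabulary, a single string can often be written as several different token sequences. The sketch below enumerates every segmentation of a word under a small vocabulary. Both the vocabulary and the helper `all_segmentations` are hypothetical, invented for illustration rather than taken from any real tokenizer:

```python
from functools import lru_cache
from typing import FrozenSet, List, Tuple


def all_segmentations(text: str, vocab: FrozenSet[str]) -> List[Tuple[str, ...]]:
    """Return every way to split `text` into a sequence of tokens drawn from `vocab`."""

    @lru_cache(maxsize=None)
    def segment(start: int) -> Tuple[Tuple[str, ...], ...]:
        if start == len(text):
            return ((),)  # exactly one way to segment an empty suffix: no tokens
        results = []
        for end in range(start + 1, len(text) + 1):
            piece = text[start:end]
            if piece in vocab:
                # Prefix this token onto every segmentation of the remaining text
                for rest in segment(end):
                    results.append((piece,) + rest)
        return tuple(results)

    return list(segment(0))


# Hypothetical toy subword vocabulary
vocab = frozenset({"un", "happy", "unh", "appy"})

for seg in all_segmentations("unhappy", vocab):
    print(seg)
```

Both token sequences decode to the same string, so a model defined over token sequences can in principle assign probability to each of them even though they describe identical text. Practical tokenizers typically avoid this by committing to one canonical segmentation, for example by applying BPE merges deterministically.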

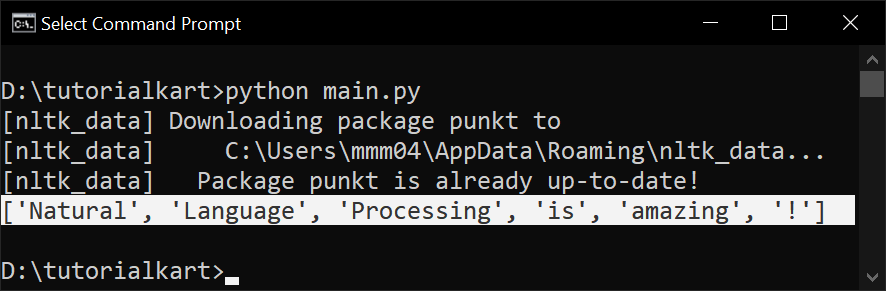

Tokenization Techniques in NLP (Comet)

Tokenization standards: any practical NLP system assumes a particular tokenization standard. Because so much NLP is built on systems trained on a few widely used corpora (text datasets), these corpora often define a de facto standard.

This section reviews the conceptual literature on tokenization in NLP, along with studies that analyze most of the techniques used for tokenizing Indian and other languages. This paper explores new tokenization methods and data-handling techniques that improve NLP model efficiency, with a focus on avoiding "CUDA out of memory" errors. Tokenization plays a pivotal role in natural language processing (NLP), shaping how textual data is segmented, interpreted, and processed by language models.
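The effect of a tokenization standard on the output can be shown with a comparison. The sketch below contrasts plain whitespace splitting with a small rule-based tokenizer whose rules loosely follow Penn Treebank conventions, such as splitting "don't" into "do" + "n't" and detaching punctuation. The regular expressions and function names are simplified assumptions for illustration. They are not the actual Penn Treebank or NLTK tokenizer:

```python
import re
from typing import List

# Simplified, hypothetical rules loosely modeled on Penn Treebank conventions
_CONTRACTION = re.compile(r"(?i)(\w+)(n't|'s|'re|'ve|'ll|'m|'d)\b")
_PUNCT = re.compile(r'([.,!?;:()"])')


def whitespace_tokenize(text: str) -> List[str]:
    """Split on runs of whitespace only; punctuation stays attached to words."""
    return text.split()


def rule_based_tokenize(text: str) -> List[str]:
    """Split off common English contractions and punctuation, then split on whitespace."""
    text = _CONTRACTION.sub(r"\1 \2", text)  # "don't" -> "do n't", "it's" -> "it 's"
    text = _PUNCT.sub(r" \1 ", text)  # "ready," -> "ready , "
    return text.split()


sentence = "I don't think it's ready, is it?"
print(whitespace_tokenize(sentence))
print(rule_based_tokenize(sentence))
```

Two systems trained under different standards would disagree on token boundaries for the same sentence (for example `"ready,"` versus `"ready"` + `","`). This is why a tokenizer generally needs to match the conventions of the corpus a model was trained on. The sketch also ignores cases such as abbreviations ("U.S."), which a production rule-based tokenizer has to treat specially.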
