Tokenization in Natural Language Processing - Marketingmind

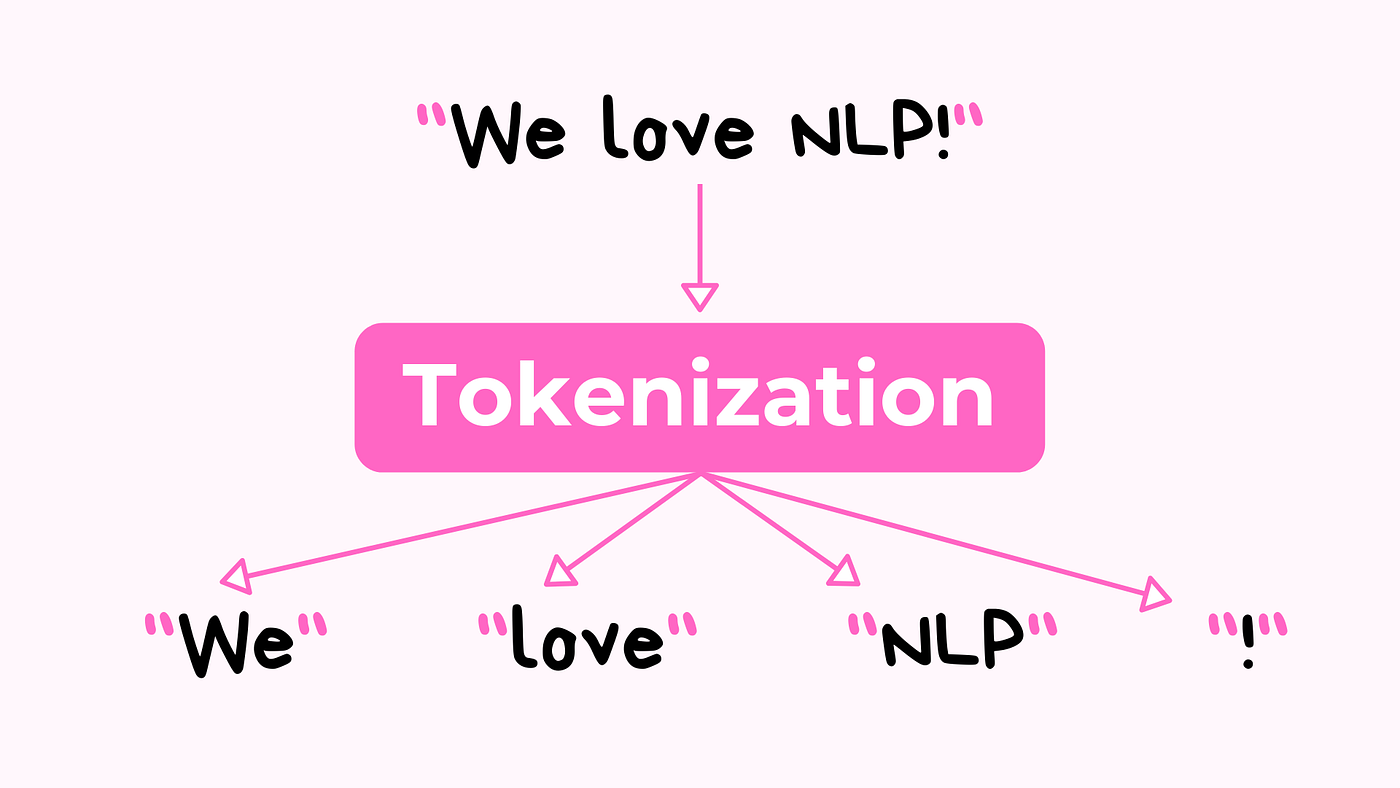

Tokenization is the process of breaking text into smaller units called tokens (words, phrases, or characters), and it is essential for meaningful natural language processing (NLP) analysis. NLP has evolved rapidly in recent years, enabling machines to understand and process human language. At the core of any NLP pipeline lies tokenization, a…
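
To make the difference between token types concrete, here is a minimal Python sketch of word-level and character-level tokenization. It is an illustrative assumption rather than any particular library's tokenizer: `split_words` and `split_chars` are hypothetical helper names, and the regular expression simply treats each run of word characters, and each individual punctuation mark, as a token.

```python
import re


def split_words(text: str) -> list[str]:
    """Hypothetical word tokenizer: word-character runs plus single punctuation marks."""
    # \w+ matches a run of letters/digits/underscores; [^\w\s] matches one
    # character that is neither a word character nor whitespace (punctuation).
    return re.findall(r"\w+|[^\w\s]", text)


def split_chars(text: str) -> list[str]:
    """Hypothetical character tokenizer: every non-whitespace character is a token."""
    return [ch for ch in text if not ch.isspace()]


print(split_words("Machines process language."))  # ['Machines', 'process', 'language', '.']
print(split_chars("NLP is fun"))  # ['N', 'L', 'P', 'i', 's', 'f', 'u', 'n']
```

Word tokens keep units of meaning intact but produce a large vocabulary; character tokens need only a tiny vocabulary but yield much longer sequences.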

Tokenization is a fundamental process in NLP, essential for preparing text data for analytical and computational tasks: it breaks a piece of text down into smaller, meaningful units called tokens.

One study analyzes tokenization in NLP at the intersection of language, technology, and political economy. Recent studies show that subword tokenization in multilingual large language models (LLMs) distributes processing costs unevenly across languages, because statistical segmentation fragments non-Latin scripts disproportionately. Taking this disparity as a…

The NLP landscape offers many tokenization tools, each tailored to specific needs and complexities. Another study evaluates different tokenization methods, including word-based, character-based, and subword-based methods.
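
Subword methods sit between the word-based and character-based extremes. The sketch below is a toy version of byte-pair encoding (BPE), one widely used subword technique, chosen here purely for illustration; it is not necessarily the method evaluated in the studies mentioned, and it omits details that production tokenizers add, such as end-of-word markers, pre-tokenization, and byte-level fallback. `merge_pair`, `learn_bpe`, and `apply_bpe` are hypothetical names.

```python
from collections import Counter


def merge_pair(symbols: tuple[str, ...], pair: tuple[str, str]) -> tuple[str, ...]:
    """Replace each adjacent occurrence of `pair` with one merged symbol."""
    merged, i = [], 0
    while i < len(symbols):
        if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
            merged.append(symbols[i] + symbols[i + 1])
            i += 2
        else:
            merged.append(symbols[i])
            i += 1
    return tuple(merged)


def learn_bpe(words: list[str], num_merges: int) -> list[tuple[str, str]]:
    """Toy BPE training: start from characters, repeatedly merge the most frequent pair."""
    vocab = Counter(tuple(w) for w in words)  # word as character tuple -> frequency
    merges: list[tuple[str, str]] = []
    for _ in range(num_merges):
        # Count adjacent symbol pairs, weighted by how often each word occurs.
        pairs: Counter = Counter()
        for symbols, freq in vocab.items():
            for pair in zip(symbols, symbols[1:]):
                pairs[pair] += freq
        if not pairs:
            break
        # Most frequent pair; on a tie, max() keeps the pair counted first.
        best = max(pairs, key=pairs.get)
        merges.append(best)
        new_vocab: Counter = Counter()
        for symbols, freq in vocab.items():
            new_vocab[merge_pair(symbols, best)] += freq
        vocab = new_vocab
    return merges


def apply_bpe(word: str, merges: list[tuple[str, str]]) -> list[str]:
    """Segment a (possibly unseen) word by replaying the merges in learned order."""
    symbols = tuple(word)
    for pair in merges:
        symbols = merge_pair(symbols, pair)
    return list(symbols)


corpus = ["low"] * 5 + ["lower"] * 2 + ["newest"] * 6 + ["widest"] * 3
merges = learn_bpe(corpus, num_merges=3)
print(merges)  # [('e', 's'), ('es', 't'), ('l', 'o')]
print(apply_bpe("lowest", merges))  # ['lo', 'w', 'est']
```

Because merges are driven by frequency, the unseen word "lowest" still splits into known pieces. The same frequency dependence is one plausible reason why text in scripts that are rare in a tokenizer's training data receives fewer merges and ends up split into more, shorter tokens.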

Tokenization methods fall into three main families: word, subword, and character tokenization. Another study compares the performance of two tokenizers, MeCab-ko and SentencePiece, for sentiment analysis.

After text standardization, the next critical step in an NLP pipeline is tokenization: the standardized text is broken down into smaller units called tokens. Python is a common choice for working through tokenization examples in practice.
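
A minimal sketch of the standardize-then-tokenize order, using only the Python standard library. What "standardization" includes is not specified here, so the steps below (Unicode NFKC normalization, lowercasing, whitespace collapsing) are assumptions, and the regex tokenizer is a simple stand-in, not MeCab-ko or SentencePiece. `standardize` and `tokenize` are hypothetical names.

```python
import re
import unicodedata


def standardize(text: str) -> str:
    """Assumed standardization: NFKC normalization, lowercasing, whitespace collapsing."""
    # NFKC folds compatibility forms, e.g. full-width "Ｈ" becomes ASCII "H".
    text = unicodedata.normalize("NFKC", text)
    text = text.lower()
    # Collapse runs of whitespace into single spaces and trim both ends.
    return re.sub(r"\s+", " ", text).strip()


def tokenize(text: str) -> list[str]:
    """Stand-in tokenizer: word-character runs and single punctuation marks."""
    return re.findall(r"\w+|[^\w\s]", text)


raw = "  Ｈｅｌｌｏ,   WORLD!  "
clean = standardize(raw)
print(clean)  # hello, world!
print(tokenize(clean))  # ['hello', ',', 'world', '!']
```

Running standardization first means that "Ｈｅｌｌｏ", "HELLO", and "hello" all map to the same token, which keeps the vocabulary smaller.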
