Tokenization For Improved Data Security Tokenization Process Activities

Tokenization offers many advantages for securing sensitive data, which has made it a cornerstone of modern data security strategies. By replacing sensitive data with non-sensitive tokens, organizations can significantly reduce their risk exposure and improve their overall security posture. If a system that stores only tokens is breached, the attacker obtains values that cannot be turned back into the original data without access to the tokenization system.

Tokenization enhances data security by replacing sensitive information with tokens, which reduces the risk of breaches and supports robust cybersecurity strategies. In modern engineering, tokenization is the process of replacing sensitive data with a non-sensitive equivalent called a token. Examples of such data include credit card primary account numbers (PANs), Social Security numbers, and internal IDs. Unlike encryption, tokenization does not use a reversible mathematical process. An encrypted value can be decrypted by anyone who holds the key. A token, by contrast, can only be traced back to its original value through a mapping system (often called a token vault) that records which token stands for which value. This is not just a "nice to have" for … Tokenization also improves data management across many applications.

Tokenization has emerged as a powerful solution, offering enhanced security, efficiency, and adaptability across industries. But what exactly is tokenization, and how can it be leveraged effectively? This guide explores the tokenization process, covering its core concepts, benefits, challenges, and applications.
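The difference between a mapping and a reversible computation can be shown with a minimal sketch. It assumes a single in-memory token vault. The class `TokenVault`, its methods `tokenize` and `detokenize`, and the `tok_` prefix are hypothetical names chosen for illustration, not any product's API. A production vault would keep the mapping in hardened, access-controlled storage.

```python
import secrets


class TokenVault:
    """Hypothetical in-memory token vault, for illustration only.

    The dictionary below stands in for the secure, access-controlled
    storage a real tokenization system would use.
    """

    def __init__(self) -> None:
        self._token_to_value: dict[str, str] = {}

    def tokenize(self, value: str) -> str:
        # The token is random, so it has no mathematical relationship to
        # the original value; there is nothing to "decrypt".
        while True:
            token = "tok_" + secrets.token_hex(16)
            if token not in self._token_to_value:  # guard against reuse
                break
        self._token_to_value[token] = value
        return token

    def detokenize(self, token: str) -> str:
        # The original value can only be recovered by a lookup in the vault.
        # Raises KeyError for unknown tokens.
        return self._token_to_value[token]


vault = TokenVault()
pan = "4111111111111111"  # a widely used test card number, not a real account
token = vault.tokenize(pan)
print(token)                    # prints "tok_" followed by 32 random hex characters
print(vault.detokenize(token))  # 4111111111111111
```

Downstream systems would store and pass around only `token`. Only the component that holds the vault can map it back to `pan`.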

Tokenization provides a powerful way to protect sensitive information while still allowing essential data operations. It does this by replacing sensitive data with non-sensitive substitutes called tokens. In a tokenization process, sensitive data is sent to a secure system that generates a surrogate value, known as a token. Tokens can be completely random, or they can be generated deterministically so that the same original value always results in the same token. Deterministic tokens let systems join, search, or deduplicate records on the token alone, at the cost of revealing which records share the same underlying value. Random tokens reveal nothing of that kind. Industries subject to financial, data security, regulatory, or privacy compliance standards are increasingly turning to tokenization. They use it to minimize the distribution of sensitive data, reduce the risk of exposure, improve their security posture, and ease their compliance obligations.
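The sketch below compares random and deterministic token generation. It assumes a keyed hash (HMAC-SHA256) as the deterministic method. That is one common approach, not necessarily what any particular tokenization system uses. The names `SECRET_KEY`, `random_token`, and `deterministic_token` are hypothetical.

```python
import hashlib
import hmac
import secrets

# Hypothetical secret key. In practice it would live in a key management
# system, never in source code, and would be stable across runs.
SECRET_KEY = secrets.token_bytes(32)


def random_token() -> str:
    # A fresh random token on every call: equal inputs get unrelated tokens.
    return "tok_" + secrets.token_hex(16)


def deterministic_token(value: str) -> str:
    # Keyed hash: the same value and key always yield the same token, but
    # without the key the token can be neither recomputed nor reversed.
    digest = hmac.new(SECRET_KEY, value.encode("utf-8"), hashlib.sha256).hexdigest()
    return "tok_" + digest[:32]


ssn = "123-45-6789"
print(random_token() == random_token())                      # False
print(deterministic_token(ssn) == deterministic_token(ssn))  # True

# Deterministic tokens allow two datasets to be joined on the token alone.
orders = {deterministic_token(ssn): "order-1"}
customers = {deterministic_token(ssn): "customer-A"}
print(len(orders.keys() & customers.keys()))                 # 1
```

HMAC is one-way, so a deterministic scheme like this still needs a vault mapping (or equivalent) if the original values must ever be recovered. The token only removes the need to expose those values to the systems that join on them.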

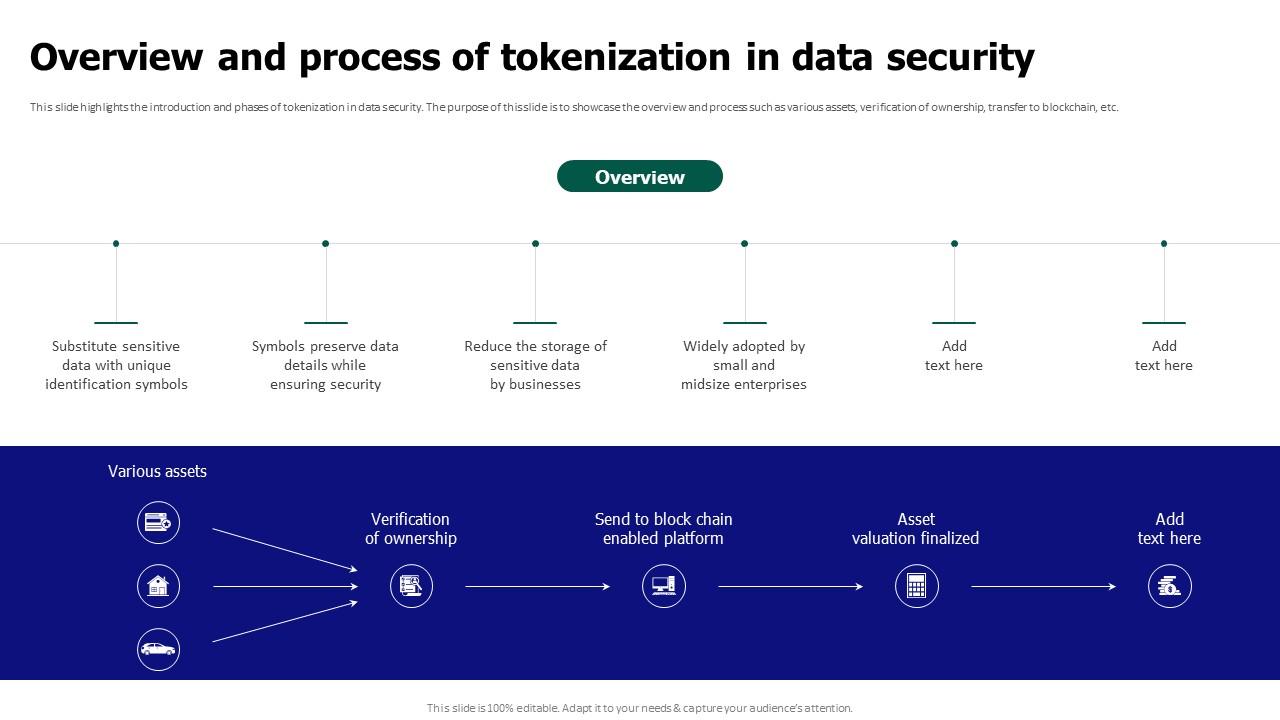

