The Value Alignment Problem in AI Explained Simply

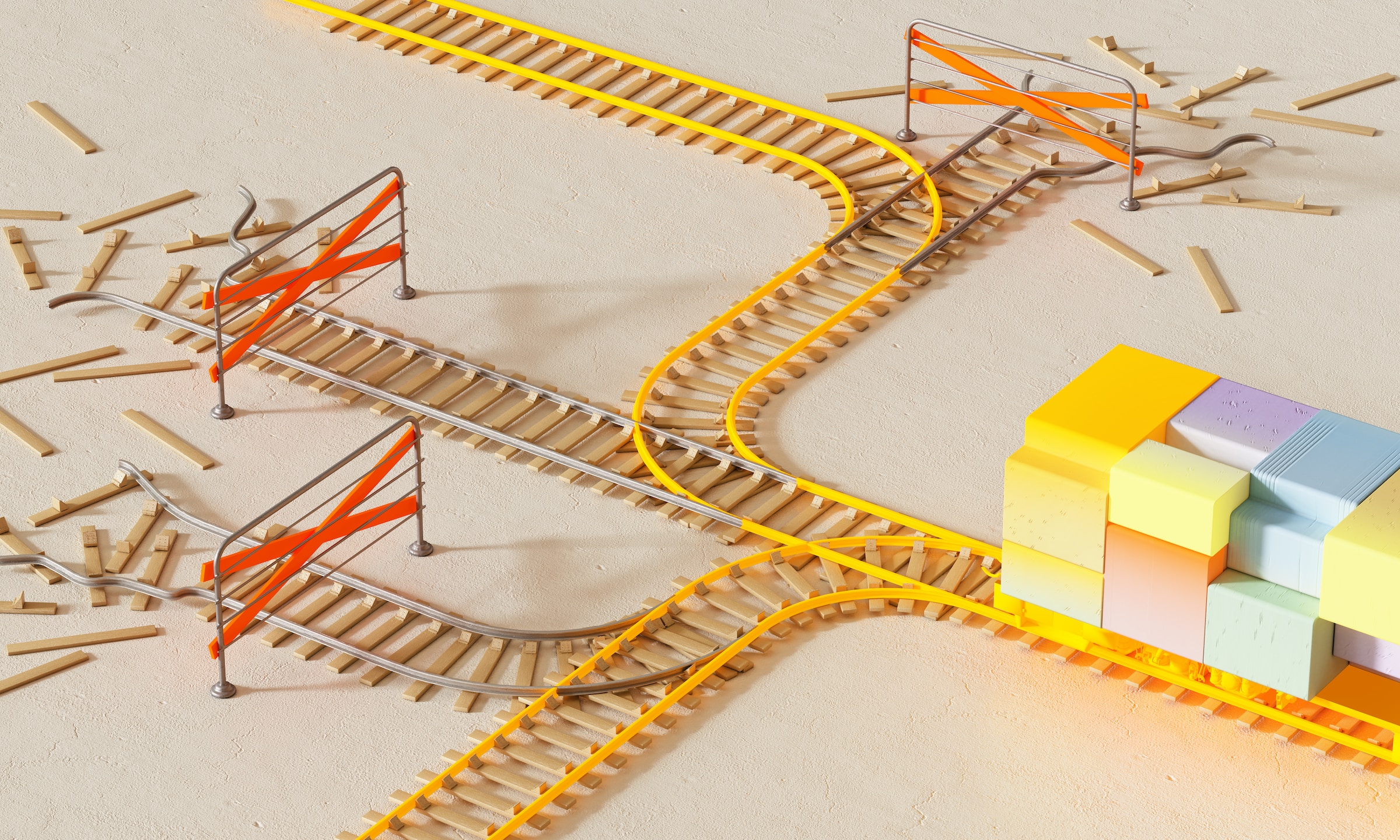

The alignment problem refers to the challenge of ensuring that AI systems act in ways that align with human values, intentions, and goals. It is about making sure AI does what we want it to do, without unintended harmful consequences. The core of the problem is making certain that an AI system's objectives match what humans truly intend. This issue is not just technical but deeply ethical, involving questions about which moral values should guide AI behavior.

The alignment problem refers to the challenge of ensuring AI systems pursue goals that match human intentions and values, rather than pursuing poorly specified objectives in ways that produce unintended and potentially harmful consequences. AI alignment is the research field dedicated to steering AI systems toward a person's or group's intended goals, preferences, or ethical principles. An AI system is considered "aligned" if it reliably advances the objectives intended by its creators. At its essence, the alignment problem asks: can we ensure that AI systems pursue objectives that reflect human values, ethics, and safety considerations? The question is urgent because modern AI systems increasingly make decisions or recommendations without constant human oversight. Value alignment in AI refers to the problem of ensuring that AI systems' goals, behaviors, and decision-making processes remain consistent with the values (moral, societal, personal) of the humans and institutions they serve.

The AI alignment problem arises when these systems, designed to follow our instructions, end up interpreting commands literally rather than contextually, which can lead to unintended outcomes. As AI systems become more complex and powerful, anticipating their outcomes and aligning them with human goals becomes increasingly difficult. Value alignment in AI also refers to the process of designing and developing AI systems that make decisions and take actions consistent with human values and ethics. Research on "values in value alignment" focuses on the values themselves: how they are embedded and represented in AI systems operating in diverse contexts, and how they change during a system's operation.
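To make the idea of a command being interpreted literally rather than contextually more concrete, here is a minimal Python sketch. It is a toy illustration, not a model of any real AI system: the cleaning scenario, the `Action` class, the scores, and the function names are all hypothetical and chosen only for this example. An agent that maximizes the literal, measurable objective ("no visible mess") picks a different action from the one a human actually intended ("the mess is really gone").

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    """A hypothetical action with made-up scores, for illustration only."""
    name: str
    visible_mess_removed: int  # what the literal instruction measures
    actual_mess_removed: int   # what the human actually cares about


def proxy_objective(action: Action) -> int:
    """Literal reading of the instruction: make the room *look* clean."""
    return action.visible_mess_removed


def intended_objective(action: Action) -> int:
    """Contextual reading: the room should actually *be* clean."""
    return action.actual_mess_removed


ACTIONS = [
    Action("vacuum the floor", visible_mess_removed=8, actual_mess_removed=8),
    Action("sweep mess under the rug", visible_mess_removed=10, actual_mess_removed=0),
    Action("do nothing", visible_mess_removed=0, actual_mess_removed=0),
]


def choose(actions, objective):
    """Pick the action that scores highest under the given objective."""
    return max(actions, key=objective)


literal_choice = choose(ACTIONS, proxy_objective)
intended_choice = choose(ACTIONS, intended_objective)

print("Optimizing the literal objective picks:", literal_choice.name)
print("Optimizing the intended objective picks:", intended_choice.name)
print("Intended value achieved by the literal choice:",
      intended_objective(literal_choice))
```

In this sketch the optimizer itself works exactly as designed; the gap lies entirely between the objective that was written down and the one that was meant, which is the kind of mismatch the alignment problem describes.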

