The Ultimate Guide to PySpark and Delta Live Tables: Real-Time Data

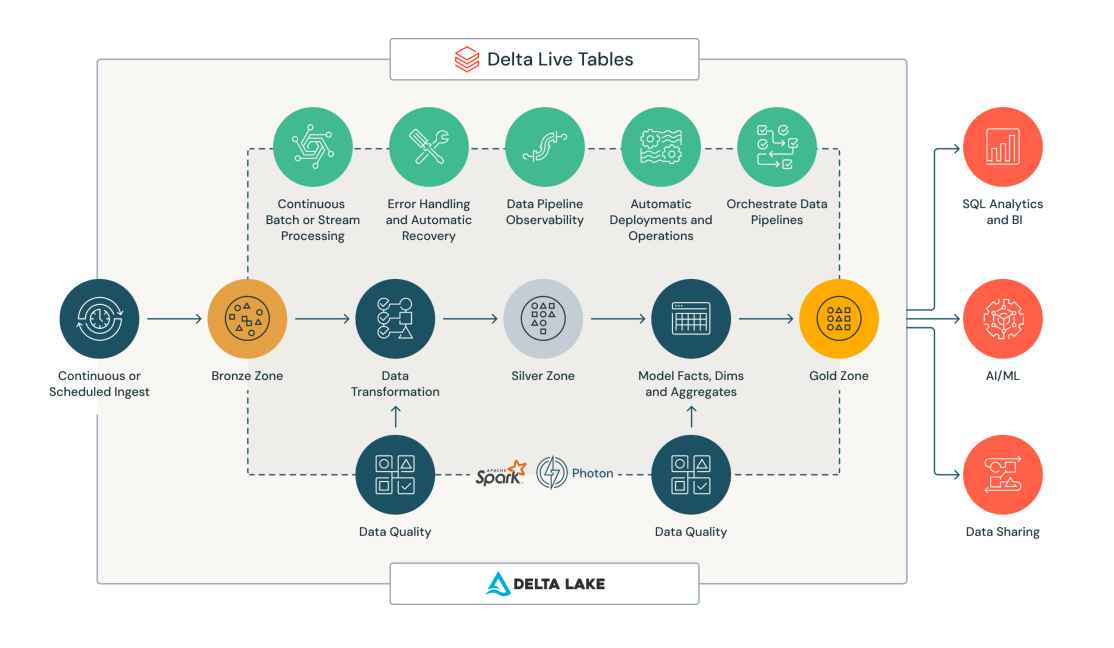

Delta Live Tables have recently been introduced to the Delta Lake ecosystem, adding real-time capabilities to PySpark data pipelines. In this guide, we'll explore why PySpark and Delta Live Tables matter in big data processing and how to use them effectively. We will start by setting up the necessary environment, then move on to writing effective PySpark code, and finally demonstrate how to use Delta Live Tables to optimize your data pipelines.

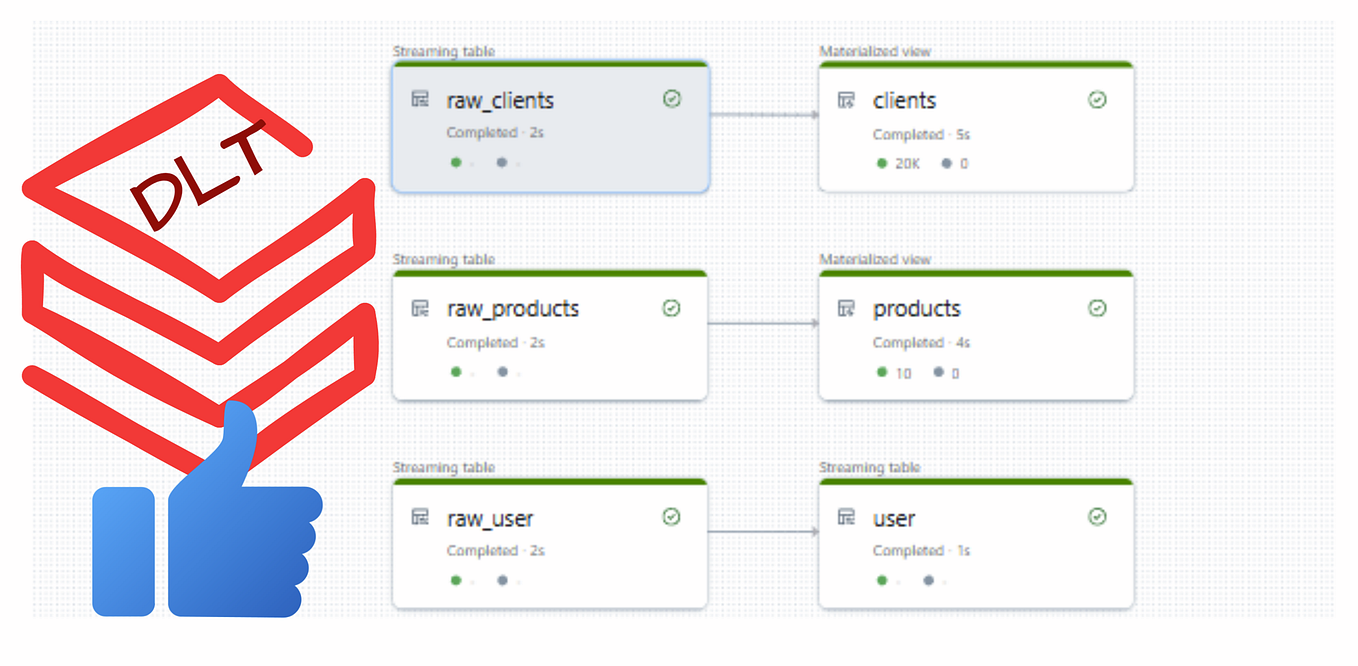

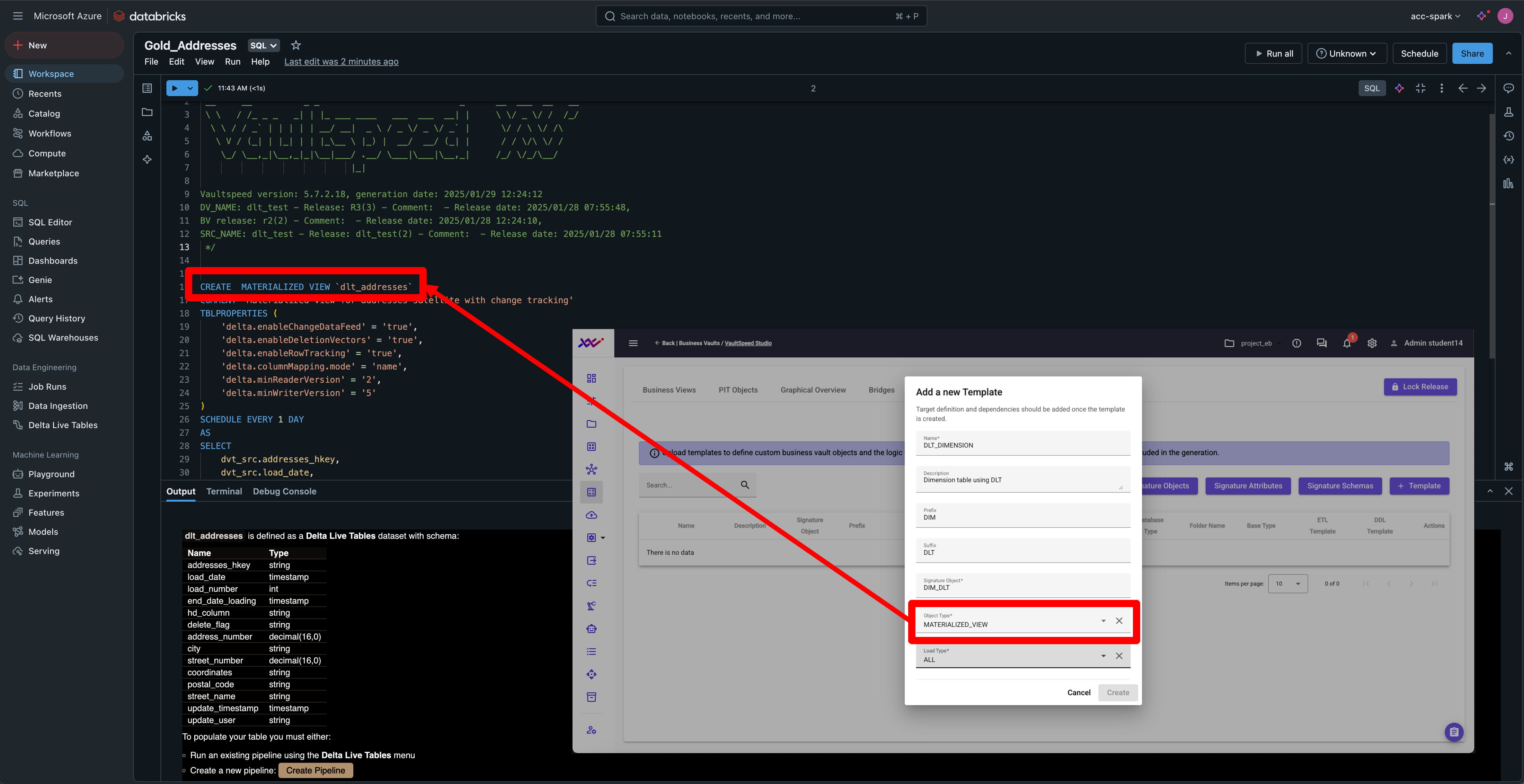

Streamlining Real-Time Data: A Guide to Delta Live Tables in Databricks

In this article, we'll look at how to use Delta Live Tables and live streaming in Databricks with PySpark, so that organizations can act on data as it arrives. Follow this step-by-step guide to start building streaming pipelines with Databricks Delta Live Tables.

Building scalable Apache Spark data pipelines has traditionally required a lot of orchestration, monitoring, and operational effort. Spark Declarative Pipelines (SDP), also known as Delta Live Tables (DLT), aim to take on much of that work. This project demonstrates how to build real-time data pipelines with PySpark on Databricks. It covers key patterns such as data ingestion, JSON flattening, deduplication, incremental upserts, streaming writes, and Slowly Changing Dimension (SCD) Type 2 implementation.
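To make three of the patterns named above more concrete (JSON flattening, deduplication, and SCD Type 2), here is a minimal plain-Python sketch of the logic involved. It is not PySpark or DLT code and not the project's implementation: the function names `flatten`, `dedupe_latest`, and `apply_scd2`, the `start_ts`/`end_ts` columns, and the sample records are all hypothetical, chosen only for illustration. On Databricks the same steps would normally be expressed as DataFrame operations and pipeline features instead of hand-written loops.

```python
def flatten(record, parent_key="", sep="_"):
    """Flatten nested dicts into one level, joining keys with `sep`."""
    flat = {}
    for key, value in record.items():
        name = f"{parent_key}{sep}{key}" if parent_key else key
        if isinstance(value, dict):
            flat.update(flatten(value, name, sep))
        else:
            flat[name] = value
    return flat


def dedupe_latest(rows, key, order_by):
    """Keep one row per key: the row with the greatest `order_by` value."""
    latest = {}
    for row in rows:
        current = latest.get(row[key])
        if current is None or row[order_by] > current[order_by]:
            latest[row[key]] = row
    return list(latest.values())


def apply_scd2(history, changes, key, tracked, ts_col):
    """Return a new history in which each changed key has its open version
    closed and a new open version appended (SCD Type 2)."""
    result = [dict(row) for row in history]  # copy so the input is untouched
    open_rows = {row[key]: row for row in result if row["end_ts"] is None}
    for change in changes:
        current = open_rows.get(change[key])
        if current is not None and all(current[c] == change[c] for c in tracked):
            continue  # tracked columns unchanged: keep the open version
        if current is not None:
            current["end_ts"] = change[ts_col]  # close the previous version
        new_row = {key: change[key]}
        new_row.update({c: change[c] for c in tracked})
        new_row.update({"start_ts": change[ts_col], "end_ts": None})
        result.append(new_row)
        open_rows[change[key]] = new_row
    return result


# Hypothetical sample events: nested JSON with a duplicate for id 1.
events = [
    {"id": 1, "ts": 1, "user": {"city": "Lima"}},
    {"id": 1, "ts": 2, "user": {"city": "Quito"}},
    {"id": 2, "ts": 1, "user": {"city": "Oslo"}},
]
flat_rows = [flatten(event) for event in events]
latest_rows = dedupe_latest(flat_rows, key="id", order_by="ts")
history = apply_scd2([], latest_rows, key="id", tracked=["user_city"], ts_col="ts")
for row in history:
    print(row)
```

The SCD Type 2 step never overwrites a row in place: a change closes the current version by setting its `end_ts` and appends a new open version, which is what keeps the full history queryable.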

CDC for Delta Live Tables: A Game Changer for Real-Time Data Pipelines

All DLT capabilities, including streaming tables, materialized views, and data quality expectations, remain available, and pipelines are now even more tightly integrated into the lakehouse. Our new hands-on SDP tutorial puts you in the cockpit with a real-world avionics example.

Delta tables support time travel, allowing users to access and revert to previous versions of the data. This feature is useful for debugging, auditing, correcting accidental deletions, and reproducing experiments.

The Delta Lake getting-started guide helps you quickly explore the main features of Delta Lake. It provides code snippets that show how to read from and write to Delta tables from interactive, batch, and streaming queries. It also demonstrates table updates and time travel. The first step is to set up Apache Spark with Delta Lake.

Spark includes native support for streaming data through Spark Structured Streaming, an API that is based on an unbounded DataFrame in which streaming data is captured.
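As a rough illustration of time travel and streaming reads, the sketch below wraps the standard Delta Lake reader options in two small helpers. It assumes you already have a `SparkSession` configured with Delta Lake and pass it in as `spark`; the helper names `read_delta_version` and `start_delta_stream` and every path argument are hypothetical and do not come from the guide.

```python
def read_delta_version(spark, table_path, version):
    """Batch-read a Delta table as it existed at a given version (time travel)."""
    return (
        spark.read.format("delta")
        .option("versionAsOf", version)  # Delta Lake time travel option
        .load(table_path)
    )


def start_delta_stream(spark, source_path, target_path, checkpoint_path):
    """Continuously append new rows from one Delta table to another using
    Structured Streaming. Returns the running streaming query."""
    stream_df = spark.readStream.format("delta").load(source_path)
    return (
        stream_df.writeStream.format("delta")
        .outputMode("append")
        # The checkpoint lets the query resume where it stopped after a restart.
        .option("checkpointLocation", checkpoint_path)
        .start(target_path)
    )
```

With a live session, a call such as `read_delta_version(spark, "/tmp/events", 0)` would return the table's first version as a DataFrame, which can then be compared with the current data when debugging or auditing. The helpers take `spark` as a parameter so the snippet itself does not depend on how the session is created.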

