The Other Ai Alignment Problem Mesa Optimizers And Inner Alignment

The Other Ai Alignment Problem Mesa Optimizers And Inner Alignment Humans are therefore mesa optimizers of natural selection. in the context of ai alignment, the concern is that a base optimizer (e.g., a gradient descent process) may produce a learned model that is itself an optimizer, and that has unexpected and undesirable properties. In this deep dive, we will unpack the complex relationship between inner and outer alignment, demystify the concept of mesa optimization, and explore why teaching an ai to "want" the right things is harder than it looks.

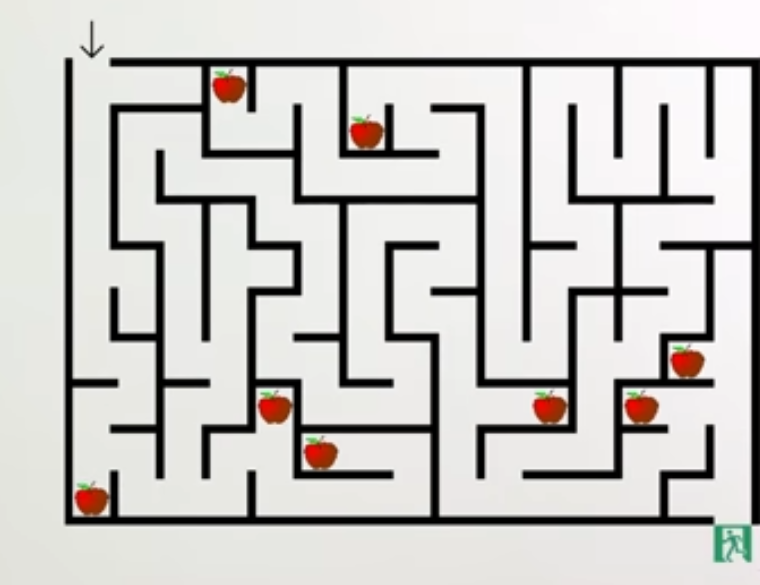

Formal Solution To The Inner Alignment Problem Ai Alignment Forum Hi so this channel is about ai safety a lot of people when they hear ai safety the first thing that comes to mind is they think it’s about how to keep potentially dangerous ai systems under control that we’re trying to answer questions like what can you do if your ai turns out to be malicious and that is part of it but actually the field has tended not to focus on that kind of thing so much because it doesn’t scale well by which i mean it seems like ai has the potential to become enormously more capable than humans sooner or later and if you have ai research being done by ai probably sooner you’re going to get super intelligence you’ll get ai systems vastly more capable than humans at all sorts of tasks just massively outperforming us across the board what can you do if a super intelligence is trying to kill you well you could die [music] or hello darkness yeah no that’s that’s pretty much your options realistically if the ai system is enormously more competent than you and it really wants to do something it’s. We refer to this problem of aligning mesa optimizers with the base objective as the inner alignment problem. this is distinct from the outer alignment problem, which is the traditional problem of ensuring that the base objective captures the intended goal of the programmers. Mesa optimizers are noteworthy because they can introduce unexpected behaviors into models. for example: inner alignment problem: the mesa optimizer may develop goals that are misaligned with the intended goals set by the base optimizer, potentially leading to undesirable outcomes. This guide dives into python implementations for detecting and mitigating mesa optimizers and inner alignment failures, empowering developers to build safer ai for edge computing, generative ai, and beyond.

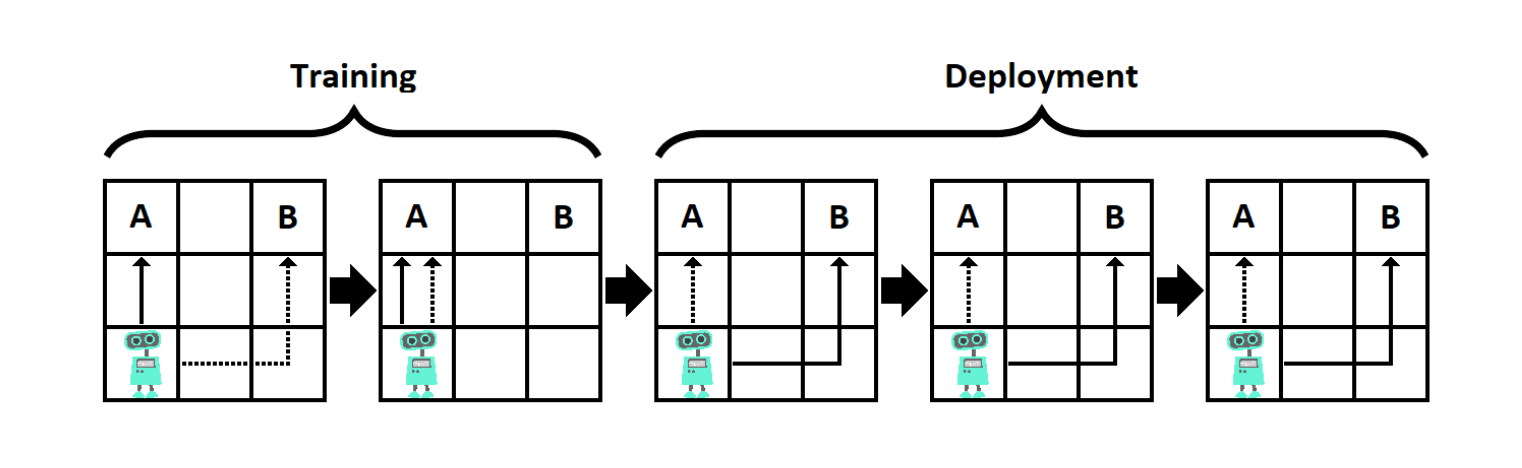

Leading Ai Scientists Without Urgent Action Advanced Ai Will Cause Mesa optimizers are noteworthy because they can introduce unexpected behaviors into models. for example: inner alignment problem: the mesa optimizer may develop goals that are misaligned with the intended goals set by the base optimizer, potentially leading to undesirable outcomes. This guide dives into python implementations for detecting and mitigating mesa optimizers and inner alignment failures, empowering developers to build safer ai for edge computing, generative ai, and beyond. If we have two optimizers, we now have two problems with getting ai to behave well (two 'alignment problems'). specifically, we have an 'outer' and an 'inner' alignment problem, relating to gradient descent and the mesa optimizer respectively. Some organizations invest heavily in alignment research divisions dedicated to understanding inner alignment and deceptive alignment, treating it as a core technical challenge equal in importance to capability scaling. We refer to this problem of aligning mesa optimizers with the base objective as the inner alignment problem. this is distinct from the outer alignment problem, which is the traditional problem of ensuring that the base objective captures the intended goal of the programmers. Pseudo alignment: a mesa optimizer is pseudo aligned with the base objective if it appears aligned on the training data but is not robustly aligned. robust alignment: a mesa optimizer is robustly aligned with the base objective if it robustly optimizes for the base objective across distributions.

Deception As The Optimal Mesa Optimizers And Inner Alignment Ea Forum If we have two optimizers, we now have two problems with getting ai to behave well (two 'alignment problems'). specifically, we have an 'outer' and an 'inner' alignment problem, relating to gradient descent and the mesa optimizer respectively. Some organizations invest heavily in alignment research divisions dedicated to understanding inner alignment and deceptive alignment, treating it as a core technical challenge equal in importance to capability scaling. We refer to this problem of aligning mesa optimizers with the base objective as the inner alignment problem. this is distinct from the outer alignment problem, which is the traditional problem of ensuring that the base objective captures the intended goal of the programmers. Pseudo alignment: a mesa optimizer is pseudo aligned with the base objective if it appears aligned on the training data but is not robustly aligned. robust alignment: a mesa optimizer is robustly aligned with the base objective if it robustly optimizes for the base objective across distributions.

Deception As The Optimal Mesa Optimizers And Inner Alignment Ea Forum We refer to this problem of aligning mesa optimizers with the base objective as the inner alignment problem. this is distinct from the outer alignment problem, which is the traditional problem of ensuring that the base objective captures the intended goal of the programmers. Pseudo alignment: a mesa optimizer is pseudo aligned with the base objective if it appears aligned on the training data but is not robustly aligned. robust alignment: a mesa optimizer is robustly aligned with the base objective if it robustly optimizes for the base objective across distributions.

Comments are closed.