The Most Complex Model We Actually Understand

There are a couple of ways the network can compute products like cos(kx)cos(ky). One option is to approximate the product using ReLU activation functions (see Nanda's notebooks for more). When we dig into how far modern AI exceeds human comprehension, we can say that a single-layer transformer learning modular arithmetic is the most complex AI model we have fully understood.
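As a rough illustration of the ReLU option, the sketch below approximates a product with ReLUs by combining the identity xy = ((x + y)^2 - (x - y)^2) / 4 with a piecewise-linear (sum of ReLUs) approximation of the square function. This is one possible construction, not necessarily what the trained network does. The modulus `p = 113`, the frequency `k = 5`, the 2πk/p scaling, and the helper names `relu_square` and `relu_product` are all assumptions made for the example.

```python
import numpy as np

def relu(z):
    return np.maximum(z, 0.0)

def relu_square(u, lo=-2.0, hi=2.0, n_knots=65):
    """Piecewise-linear approximation of u**2 on [lo, hi], written as a sum of ReLUs."""
    knots = np.linspace(lo, hi, n_knots)
    vals = knots ** 2
    slopes = np.diff(vals) / np.diff(knots)   # slope on each interval
    u = np.asarray(u, dtype=float)
    # Start with the first linear piece, then add one ReLU per interior knot
    # whose weight is the change in slope at that knot.
    out = vals[0] + slopes[0] * relu(u - knots[0])
    for t, slope_change in zip(knots[1:-1], np.diff(slopes)):
        out = out + slope_change * relu(u - t)
    return out

def relu_product(x, y):
    """Approximate x * y for x, y in [-1, 1] via the polarization identity."""
    return (relu_square(x + y) - relu_square(x - y)) / 4.0

# Hypothetical setup: modulus p and a single frequency k (both assumed).
p, k = 113, 5
w = 2 * np.pi * k / p
tokens = np.arange(p)
cos_vals = np.cos(w * tokens)

exact = np.outer(cos_vals, cos_vals)                      # cos(w x) * cos(w y)
approx = relu_product(cos_vals[:, None], cos_vals[None, :])
max_err = np.max(np.abs(exact - approx))
print(f"max abs error: {max_err:.2e}")
```

Adding knots tightens the approximation at the cost of more ReLU units, which is the same trade-off a small MLP layer faces.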

The video “The Most Complex Model We Actually Understand” dives into this challenge, exploring how researchers are pushing machine learning toward systems that are not only accurate but also interpretable. It discusses the complexity of modern AI, focusing on how models like ChatGPT generate text through billions of calculations, and raises questions about the nature of intelligence in AI, the learning process, and the emergence of specific skills in large models.

Interestingly, the modular arithmetic model develops representations that align with trigonometric identities for computing (a + b) mod p, and researchers apply discrete Fourier transforms (DFTs) to the weights to analyze how this arises.
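A minimal sketch of both ideas, under stated assumptions: the first function computes (a + b) mod p using only angle-addition identities over a handful of frequencies, and the second part applies a DFT to a synthetic embedding matrix to recover which frequencies it uses. The modulus `p = 113`, the frequencies in `freqs`, and the matrix `W_E` are hypothetical stand-ins built for the example, not weights from a trained model.

```python
import numpy as np

p = 113                 # assumed prime modulus
freqs = [14, 35, 41]    # hypothetical "key" frequencies, chosen for illustration

def mod_add_via_trig(a, b, p=p, freqs=freqs):
    """Compute (a + b) mod p using only trigonometric identities."""
    c = np.arange(p)
    logits = np.zeros(p)
    for k in freqs:
        w = 2 * np.pi * k / p
        # Angle-addition identities give cos(w(a+b)) and sin(w(a+b))
        # from products of single-input terms.
        cos_ab = np.cos(w * a) * np.cos(w * b) - np.sin(w * a) * np.sin(w * b)
        sin_ab = np.sin(w * a) * np.cos(w * b) + np.cos(w * a) * np.sin(w * b)
        # cos(w(a+b-c)) peaks at 1 exactly when c == (a + b) mod p.
        logits += cos_ab * np.cos(w * c) + sin_ab * np.sin(w * c)
    return int(np.argmax(logits))

# Synthetic embedding: each column mixes cos/sin waves at the key frequencies,
# plus a little noise. A real analysis would load trained weights instead.
rng = np.random.default_rng(0)
d_model = 16
x = np.arange(p)[:, None]
W_E = 0.01 * rng.normal(size=(p, d_model))
for k in freqs:
    w = 2 * np.pi * k / p
    W_E += np.cos(w * x) * rng.normal(size=(1, d_model))
    W_E += np.sin(w * x) * rng.normal(size=(1, d_model))

# DFT along the token axis; the norm across columns shows which
# frequencies carry most of the embedding's energy.
spectrum = np.fft.rfft(W_E, axis=0)
energy = np.linalg.norm(spectrum, axis=1)
recovered = sorted(int(i) for i in np.argsort(energy)[-len(freqs):])

print(mod_add_via_trig(100, 50), (100 + 50) % p)
print("dominant frequencies:", recovered)
```

In this toy setting the spectrum is sparse by construction; the interesting empirical finding is that trained weights show a similarly sparse spectrum.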

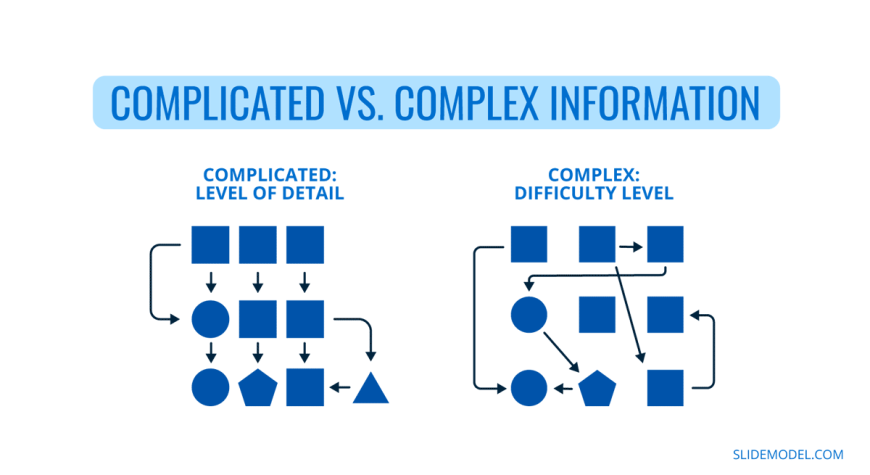

We're talking true autonomy in complex, real-world environments: real-time anomaly detection, thermal tracking, and multi-hazard safety protocols that operate without constant human oversight. This isn't a demo robot that follows a scripted path. This is a system that can perceive, reason, and act safely in dynamic, high-stakes settings.

The drive for, and discussion around, model explainability is only increasing. With AI being used in language modelling, facial recognition, and driverless cars, having models that can give the reasoning behind their decisions is more essential than ever. Give one of these explainable models a try in your next project and let me know how it turns out.

As artists, academics, practitioners, or journalists, dataset investigation is one of the few tools we have available to gain insight into and understanding of the most complex systems ever conceived by humans.

We have identified how millions of concepts are represented inside Claude Sonnet, one of our deployed large language models. This is the first ever detailed look inside a modern, production-grade large language model. This interpretability discovery could, in future, help us make AI models safer.
