The Math Behind Transformers

Understand How Transformers Work by Demystifying the Math Behind Them

This article delves into the math behind transformers, covering their architecture and how they work. Transformers play a central role in the inner workings of large language models. One line of research develops a mathematical framework for analyzing transformers based on their interpretation as interacting particle systems, with a particular emphasis on long-time clustering behavior.
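To make the particle-system interpretation a little more concrete, here is one simplified form such dynamics can take. Each token is treated as a point x_i(t) on the unit sphere, and layer depth plays the role of time t. The notation (the inverse temperature β, the projection P_x, and the omission of learned weight matrices) is an assumption made for illustration and does not come from the text above.

$$
\dot{x}_i(t) = P_{x_i(t)}\!\left( \frac{1}{Z_{\beta,i}(t)} \sum_{j=1}^{n} e^{\beta \langle x_i(t),\, x_j(t) \rangle}\, x_j(t) \right),
\qquad
Z_{\beta,i}(t) = \sum_{k=1}^{n} e^{\beta \langle x_i(t),\, x_k(t) \rangle},
\qquad
P_x\, y = y - \langle x, y \rangle\, x .
$$

The softmax-weighted average pulls each particle toward the particles it is already most aligned with, which gives some intuition for why clusters might form as t grows.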

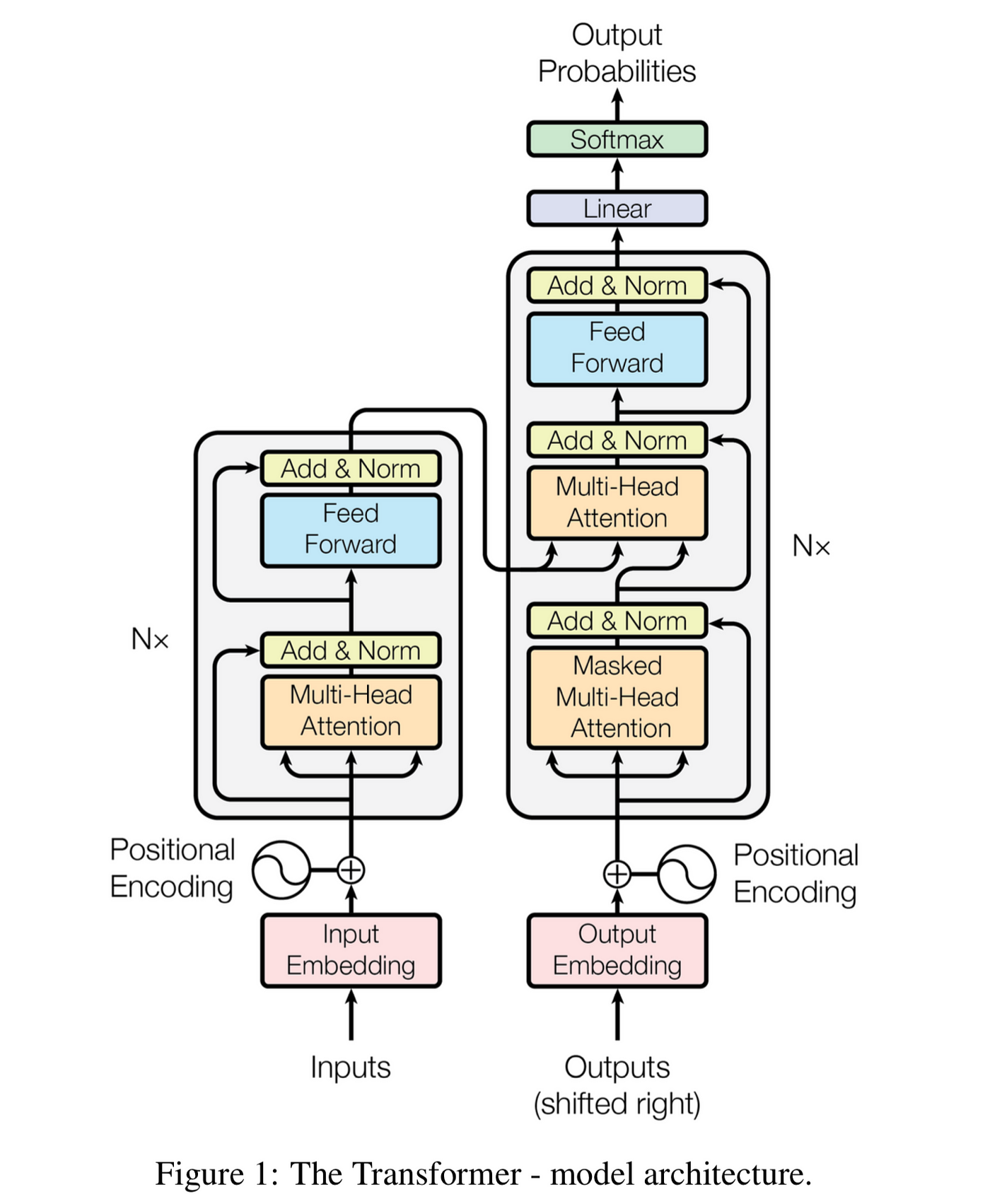

This guide covers transformers from their historical development to the mathematics that governs their operation. It touches on the sequence transduction literature leading up to the transformer and on the key concepts of attention, the encoder-decoder structure, and multi-head attention. Analyzing transformers as interacting particle systems reveals that clusters emerge in the long-time limit. Transformers use a self-attention mechanism that processes all positions of an input sequence at once. Because no position has to wait for the previous one, the computation parallelizes well, typically making training faster than with recurrent models that process tokens one step at a time. Every position can also attend directly to every other position, which helps with long-range dependencies within the data.
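The self-attention step described above can be sketched in a few lines. This is a minimal, single-head illustration in plain Python rather than an optimized implementation. The helper names (`matmul`, `transpose`, `softmax`, `self_attention`) and the toy inputs are assumptions chosen for this example, and the scaling by the square root of the key dimension follows the usual scaled dot-product formulation.

```python
import math

def matmul(A, B):
    """Multiply two matrices given as lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]

def transpose(A):
    """Swap rows and columns."""
    return [list(col) for col in zip(*A)]

def softmax(row):
    """Turn a list of scores into weights that sum to 1."""
    m = max(row)  # subtract the max for numerical stability
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(X, W_q, W_k, W_v):
    """Single-head scaled dot-product self-attention.

    X has one row per token; W_q, W_k, W_v project tokens to
    queries, keys, and values.
    """
    Q, K, V = matmul(X, W_q), matmul(X, W_k), matmul(X, W_v)
    d_k = len(Q[0])
    # Every token is scored against every other token in one matrix product.
    scores = matmul(Q, transpose(K))
    weights = [softmax([s / math.sqrt(d_k) for s in row]) for row in scores]
    # Each output row is a weighted average of the value rows.
    return matmul(weights, V), weights

# Toy input: three tokens with two features each (hypothetical values).
X = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
identity = [[1.0, 0.0], [0.0, 1.0]]  # identity projections keep the example readable
output, weights = self_attention(X, identity, identity, identity)

for row in weights:
    print([round(w, 3) for w in row])
```

Each row of `weights` sums to 1 and tells you how much one token draws from every other token. Nothing in the computation depends on processing tokens in order, which is why the whole sequence can be handled at once.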

Here, we cover in detail the computations involved in transformers. We do not discuss the high-level setup or use cases; good articles on those topics are available elsewhere. The starting point is the transformer model introduced by Vaswani et al. [2017], for which a precise mathematical definition can be given alongside the terminology and intuitions commonly associated with it. A very basic way to see how transformers work mathematically is through matrix operations: positional encoding, softmax, the feedforward network, and, most importantly, multi-head attention.

We also present basic math related to computation and memory usage for transformers. A lot of basic, important information about transformer language models can be computed quite simply. Unfortunately, the equations for this are not widely known in the NLP community.
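As a rough illustration of how simply some of these quantities can be estimated, the sketch below uses a few widely quoted rules of thumb: about 12 · d_model² weights per layer (4 · d_model² for the attention projections plus 8 · d_model² for a feedforward block with a 4x hidden size), about 6 FLOPs per parameter per training token, and 2 bytes per parameter for 16-bit weights. The function names and the model sizes are assumptions for this example. The estimates ignore biases, layer norms, and positional embeddings, so treat the results as ballpark figures.

```python
def approx_layer_params(d_model, d_ff=None):
    """Approximate weight count of one transformer layer (biases and norms ignored)."""
    if d_ff is None:
        d_ff = 4 * d_model  # common choice for the feedforward hidden size
    attention = 4 * d_model * d_model  # query, key, value, and output projections
    feedforward = 2 * d_model * d_ff   # two linear maps: up and down
    return attention + feedforward

def approx_model_params(n_layers, d_model, vocab_size):
    """Approximate total: all layers plus the token embedding matrix."""
    return n_layers * approx_layer_params(d_model) + vocab_size * d_model

def approx_training_flops(n_params, n_tokens):
    """Rule of thumb: about 6 FLOPs per parameter per training token."""
    return 6 * n_params * n_tokens

def weight_memory_bytes(n_params, bytes_per_param=2):
    """Memory for the weights alone; 2 bytes per parameter assumes 16-bit storage."""
    return n_params * bytes_per_param

# Illustrative sizes (hypothetical small model).
n_params = approx_model_params(n_layers=12, d_model=768, vocab_size=50257)
print(f"parameters:     {n_params:,}")
print(f"training FLOPs: {approx_training_flops(n_params, 10**9):.3e} for 1e9 tokens")
print(f"weight memory:  {weight_memory_bytes(n_params) / 1e6:.0f} MB at 16 bits")
```

For these sizes the estimate comes out at roughly 124 million parameters. Training needs considerably more memory than the weights alone, since gradients, optimizer state, and activations are not counted here.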

