The Joint Probability Mass Function

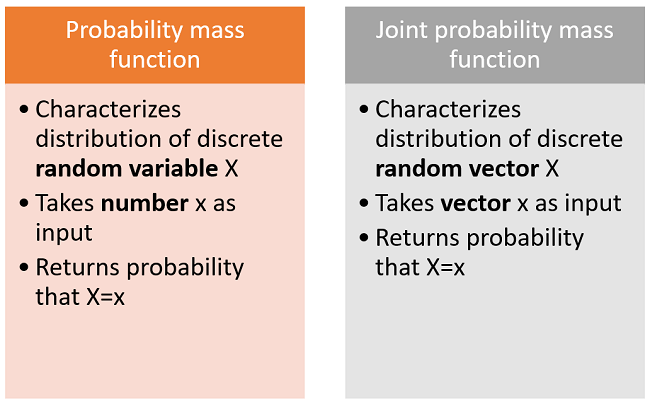

The joint probability mass function (pmf) is a fundamental concept in probability theory and statistics. It describes the probability that two discrete random variables take specific values simultaneously, and so provides a way to calculate the probability of several events occurring together. The joint pmf contains all the information about the distributions of $X$ and $Y$. This means that, for example, we can obtain the pmf of $X$ from its joint pmf with $Y$.

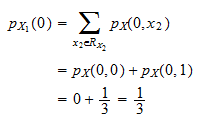

A joint probability mass function specifies the probability that two or more discrete random variables take on specific values simultaneously. A joint pmf must satisfy the basic probability axioms: it is non-negative, and it sums to 1 over the entire support. The function gives the probability of every combination of values, written $P(X = x, Y = y)$, where the “,” means “and”. If you want to back-calculate the probability of an event for one variable only, you can calculate a “marginal” from the joint probability mass function by summing over the other variable: $p_X(x) = \sum_y p_{X,Y}(x, y)$.

In this chapter we consider two or more random variables defined on the same sample space and discuss how to model their probability distribution jointly. We begin with the discrete case by looking at the joint probability mass function for two discrete random variables.

If $X$ and $Y$ assume values in $\{1, 2, \ldots, n\}$, then we can view $a_{i,j} = P\{X = i, Y = j\}$ as the entries of an $n \times n$ matrix. Let’s say I don’t care about $Y$; I just want to know $P\{X = i\}$. How do I figure that out from the matrix? Sum across row $i$: $P\{X = i\} = \sum_{j=1}^{n} a_{i,j}$, and likewise $P\{Y = j\} = \sum_{i=1}^{n} a_{i,j}$. In other words, the probability mass functions for $X$ and $Y$ are the row and column sums of $a_{i,j}$.
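The matrix view above can be sketched in a few lines of Python. This is only an illustration: the matrix `a` and the helper names `is_valid_joint_pmf`, `marginal_x`, and `marginal_y` are hypothetical, and the probabilities are made up so that they sum to 1. Exact fractions are used to avoid floating-point rounding when checking the axioms.

```python
from fractions import Fraction

# Hypothetical joint pmf for X, Y in {1, 2, 3}:
# a[i][j] holds P{X = i + 1, Y = j + 1}. The numbers are invented for illustration.
a = [
    [Fraction(2, 12), Fraction(1, 12), Fraction(1, 12)],
    [Fraction(3, 12), Fraction(2, 12), Fraction(0, 12)],
    [Fraction(0, 12), Fraction(1, 12), Fraction(2, 12)],
]


def is_valid_joint_pmf(matrix):
    """Check the two axioms: every entry is non-negative and all entries sum to 1."""
    entries = [p for row in matrix for p in row]
    return all(p >= 0 for p in entries) and sum(entries) == 1


def marginal_x(matrix):
    """P{X = i}: sum each row over all values of Y."""
    return [sum(row) for row in matrix]


def marginal_y(matrix):
    """P{Y = j}: sum each column over all values of X."""
    return [sum(col) for col in zip(*matrix)]


p_x = marginal_x(a)
p_y = marginal_y(a)
print("valid joint pmf:", is_valid_joint_pmf(a))
print("marginal of X:", [str(p) for p in p_x])
print("marginal of Y:", [str(p) for p in p_y])
```

Each marginal is itself a pmf, so its entries should also sum to 1, which is a quick sanity check on any joint table.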

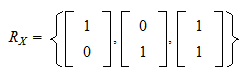

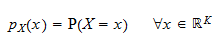

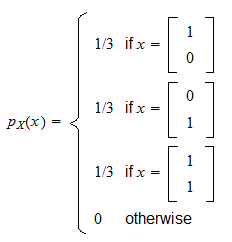

It is straightforward to extend the concept of the probability mass function to a pair of random variables.

Definition 5.3: The joint probability mass function for a pair of discrete random variables $X$ and $Y$ is given by $p_{X,Y}(x, y) = \Pr(\{X = x\} \cap \{Y = y\})$.

The joint probability distribution can be expressed in terms of a joint cumulative distribution function, and either in terms of a joint probability density function (in the case of continuous variables) or a joint probability mass function (in the case of discrete variables).

The joint probability mass function completely characterizes the distribution of a discrete random vector. When evaluated at a given point, it gives the probability that the realization of the random vector will be equal to that point. Both the marginal and the conditional probability distributions of $X$ and $Y$ can be calculated from the joint pmf.
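As a rough illustration of the definition, the sketch below builds a joint pmf by enumerating a small sample space and then derives a conditional pmf and a joint CDF value from it. The example itself is an assumption, not taken from the text: two fair coin tosses, with $X$ the result of the first toss and $Y$ the total number of heads. The helper names `conditional_y_given_x` and `joint_cdf` are hypothetical, and the conditional pmf uses the standard formula $p_{Y|X}(y \mid x) = p_{X,Y}(x, y) / p_X(x)$.

```python
from collections import defaultdict
from fractions import Fraction
from itertools import product

# Assumed sample space: two fair coin tosses (1 = heads, 0 = tails),
# with every outcome equally likely.
outcomes = list(product([0, 1], repeat=2))
prob = Fraction(1, len(outcomes))


def X(w):
    """Result of the first toss."""
    return w[0]


def Y(w):
    """Total number of heads in both tosses."""
    return w[0] + w[1]


# p_{X,Y}(x, y) = Pr({X = x} ∩ {Y = y}): add up the outcomes where both events hold.
joint = defaultdict(Fraction)
for w in outcomes:
    joint[(X(w), Y(w))] += prob


def conditional_y_given_x(joint_pmf, x):
    """p_{Y|X}(y | x) = p_{X,Y}(x, y) / p_X(x), defined only when p_X(x) > 0."""
    p_x = sum(p for (xi, _), p in joint_pmf.items() if xi == x)
    if p_x == 0:
        raise ValueError("conditioning on an event with probability zero")
    return {y: p / p_x for (xi, y), p in joint_pmf.items() if xi == x}


def joint_cdf(joint_pmf, x, y):
    """F_{X,Y}(x, y) = P(X <= x, Y <= y): sum the pmf over all points at or below (x, y)."""
    return sum(p for (xi, yi), p in joint_pmf.items() if xi <= x and yi <= y)


for point, p in sorted(joint.items()):
    print(point, p)
print("P(Y = y | X = 1):", conditional_y_given_x(joint, 1))
print("F(0, 1):", joint_cdf(joint, 0, 1))
```

Storing the pmf as a dictionary keyed by `(x, y)` keeps only the points in the support, which is convenient when most combinations have probability zero.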

