The Intuitive Transformer

Join us for a guided tour through the intuition behind transformers and attention mechanisms. This talk will attempt to describe how transformers and attention ... In this post, we will look at the Transformer, a model that uses attention to boost the speed with which these models can be trained. The Transformer outperforms the Google Neural Machine Translation model in specific tasks.

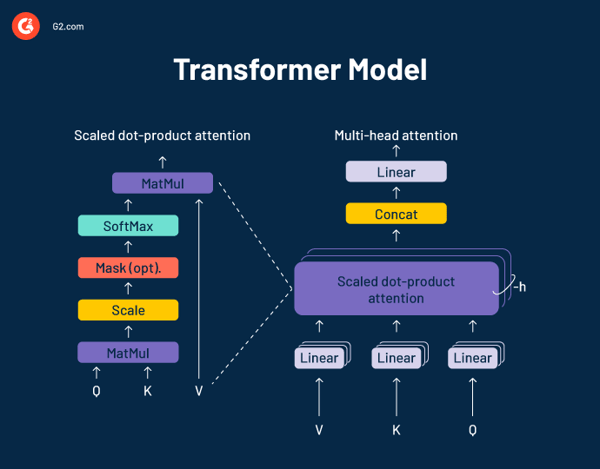

A transformer is a type of neural network designed for language tasks (like translation, summarization, chat). It replaces older models like RNNs (recurrent neural networks) and LSTMs. The Illustrated Transformer by Jay Alammar provides a visual and simplified explanation of the Transformer model, which uses attention mechanisms to improve the speed and performance of machine translation. This article provides a visual and intuitive explanation of the transformer architecture, which has revolutionized natural language processing (NLP) by enabling efficient handling of sequential data through self-attention mechanisms. Transformers are built on the concept of self-attention, which lets the model weigh the importance of different words in a sentence based on their relevance to each other.
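
To make the idea of "weighing words by relevance" concrete, here is a minimal sketch of self-attention in plain Python. It is a simplification, not the full Transformer layer: the queries, keys, and values are assumed to be the raw input vectors themselves (a real model applies learned projections first), and the two-dimensional toy embeddings are made up for illustration.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))

def self_attention(vectors):
    """Simplified self-attention over a list of word vectors.

    Assumption: queries, keys, and values are all the input vectors
    themselves; a trained model would use learned projections instead.
    """
    dim = len(vectors[0])
    scale = math.sqrt(dim)  # scaling used in scaled dot-product attention
    outputs, all_weights = [], []
    for query in vectors:
        # Relevance of every word to the current word.
        scores = [dot(query, key) / scale for key in vectors]
        weights = softmax(scores)
        # Weighted sum of all word vectors: the context-aware output.
        context = [
            sum(w * v[i] for w, v in zip(weights, vectors))
            for i in range(dim)
        ]
        outputs.append(context)
        all_weights.append(weights)
    return outputs, all_weights

# Hypothetical toy embeddings: "cat" and "sat" point in similar directions.
tokens = ["the", "cat", "sat"]
embeddings = [[1.0, 0.0], [0.0, 1.0], [0.0, 0.9]]

outputs, weights = self_attention(embeddings)
for token, row in zip(tokens, weights):
    print(token, [round(w, 3) for w in row])
```

Each printed row shows how much one word attends to every word in the sentence. With these toy vectors, "cat" places more weight on "sat" than on "the", because their vectors are more similar.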

The transformer may well be the simplest machine learning architecture to dominate the field in decades. There are good reasons to start paying attention to it if you haven't been already. Now that we've covered the entire forward pass through a trained transformer, it would be useful to glance at the intuition of training the model. During training, an untrained model goes through the exact same forward pass. We have now unpacked a transformer, a building block for large language models. However, there were a number of details we glossed over, the most important of which is how to perform a weighted sum to add context to each word.
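
The training intuition can be sketched in a few lines. The forward pass is the same whether or not the model is trained. What changes is that, during training, the output scores are compared with the correct next word to produce a loss. The example below assumes a cross-entropy loss, which is the usual choice for this kind of model but is not stated in the text above. The four-word vocabulary and the score values are hypothetical.

```python
import math

def cross_entropy(logits, target_index):
    """Negative log-probability the model assigns to the correct token."""
    peak = max(logits)  # log-sum-exp trick for numerical stability
    log_sum = peak + math.log(sum(math.exp(x - peak) for x in logits))
    return log_sum - logits[target_index]

# Hypothetical vocabulary and target word for one prediction step.
vocab = ["the", "cat", "sat", "mat"]
target = vocab.index("sat")

# An untrained model has no preference, so all scores are roughly equal.
untrained_logits = [0.0, 0.0, 0.0, 0.0]
# After training, the score of the correct word should stand out.
trained_logits = [0.1, 0.2, 3.0, 0.1]

untrained_loss = cross_entropy(untrained_logits, target)
trained_loss = cross_entropy(trained_logits, target)

print(f"untrained loss: {untrained_loss:.3f}")
print(f"trained loss:   {trained_loss:.3f}")
```

With equal scores, the loss equals the logarithm of the vocabulary size, which is the cost of guessing uniformly. Training adjusts the model's weights so that this loss falls.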
