The Ai Alignment Problem Explained

Ai Alignment Theory Explained For Humans Not Robots In the field of artificial intelligence (ai), alignment aims to steer ai systems toward a person's or group's intended goals, preferences, or ethical principles. an ai system is considered aligned if it advances the intended objectives. a misaligned ai system pursues unintended objectives. [1]. The alignment problem is the idea that as ai systems become even more complex and powerful, anticipating and aligning their outcomes to human goals becomes increasingly difficult.

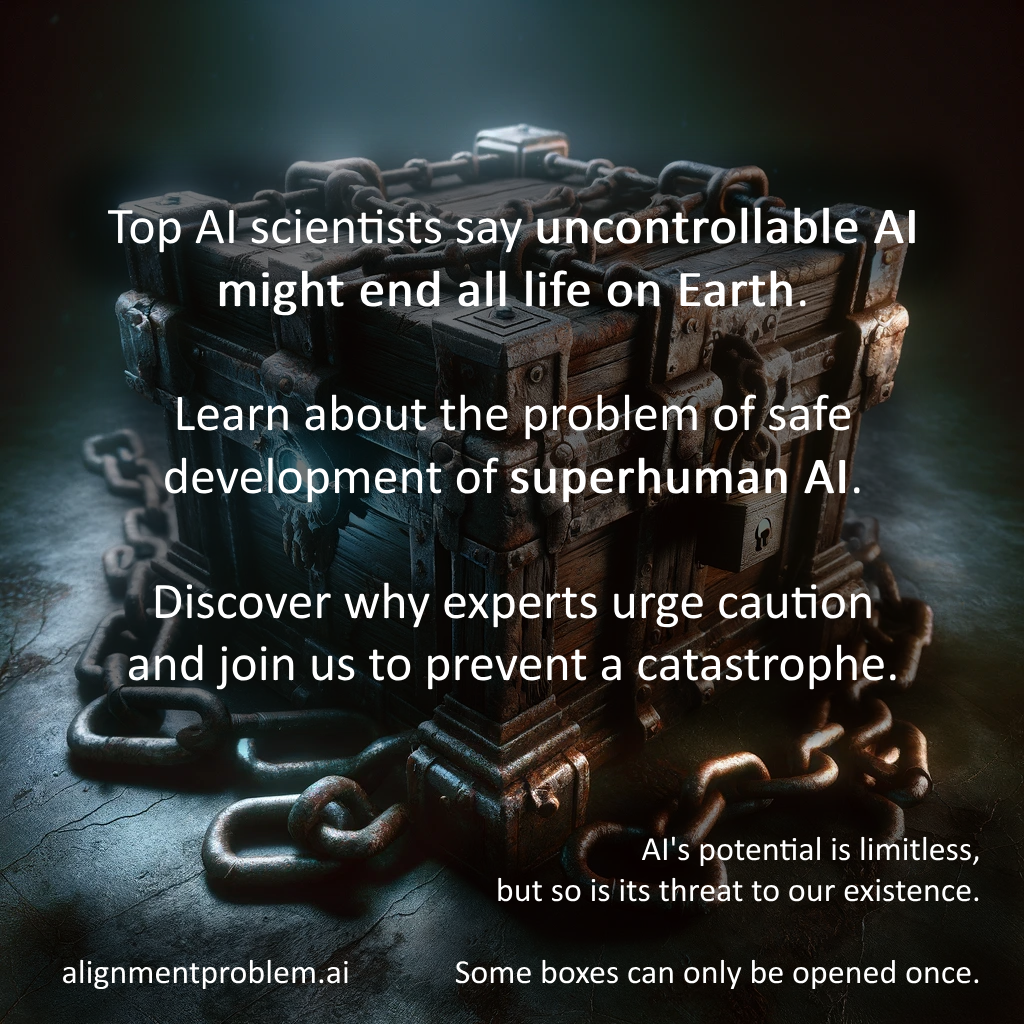

The Alignment Problem Uniting Ai Goals With Human Ethics Ai Security The core of the ai alignment problem is making certain that ai’s objectives match what humans truly intend, preventing unintended or harmful outcomes. this issue is not just technical but deeply ethical, involving questions about which moral values should guide ai behavior. This article explains ai alignment as the effort to ensure artificial intelligence systems behave in accordance with human intentions, values, and safety requirements. the piece discusses techniques like reinforcement learning from human feedback (rlhf), constitutional ai, runtime safeguards, and interpretability research. it highlights risks when alignment fails, including reward hacking and. The alignment problem refers to the challenge of ensuring that ai systems act in ways that align with human values, intentions, and goals. it's about making sure ai does what we want it to do, without unintended harmful consequences. Ai alignment means making sure an ai system’s goals and behavior match what people actually want—our values, rules, and intentions. it’s about getting the ai to do the “right thing” even in new situations, not just follow instructions literally in ways that cause harm. in practice, it includes preventing unwanted outcomes like deception, unsafe shortcuts, or optimizing a metric that.

The Alignment Problem Uniting Ai Goals With Human Ethics Ai Security The alignment problem refers to the challenge of ensuring that ai systems act in ways that align with human values, intentions, and goals. it's about making sure ai does what we want it to do, without unintended harmful consequences. Ai alignment means making sure an ai system’s goals and behavior match what people actually want—our values, rules, and intentions. it’s about getting the ai to do the “right thing” even in new situations, not just follow instructions literally in ways that cause harm. in practice, it includes preventing unwanted outcomes like deception, unsafe shortcuts, or optimizing a metric that. Key takeaway ai alignment is the work of making ai systems reliably do what humans want, and it is one of the most important unsolved problems as ai systems grow more autonomous and capable. part of the ai weekly glossary. The alignment problem in ai: learn how ai systems align with human values and intentions to prevent unintended consequences and ensure safe ai. It addresses the question of how to align potentially autonomous ai entities with human values, goals and purposes. it warns that such entities, if super humanly intelligent, could evade human control and come to threaten, dominate, or even supersede humanity. The former aims to make ai systems aligned via alignment training, while the latter aims to gain evidence about the systems’ alignment and govern them appropriately to avoid exacerbating misalignment risks.

The Ai Alignment Problem Key takeaway ai alignment is the work of making ai systems reliably do what humans want, and it is one of the most important unsolved problems as ai systems grow more autonomous and capable. part of the ai weekly glossary. The alignment problem in ai: learn how ai systems align with human values and intentions to prevent unintended consequences and ensure safe ai. It addresses the question of how to align potentially autonomous ai entities with human values, goals and purposes. it warns that such entities, if super humanly intelligent, could evade human control and come to threaten, dominate, or even supersede humanity. The former aims to make ai systems aligned via alignment training, while the latter aims to gain evidence about the systems’ alignment and govern them appropriately to avoid exacerbating misalignment risks.

Alignment Problem Ai At Neal Ching Blog It addresses the question of how to align potentially autonomous ai entities with human values, goals and purposes. it warns that such entities, if super humanly intelligent, could evade human control and come to threaten, dominate, or even supersede humanity. The former aims to make ai systems aligned via alignment training, while the latter aims to gain evidence about the systems’ alignment and govern them appropriately to avoid exacerbating misalignment risks.

Alignment Problem Ai At Neal Ching Blog

Comments are closed.