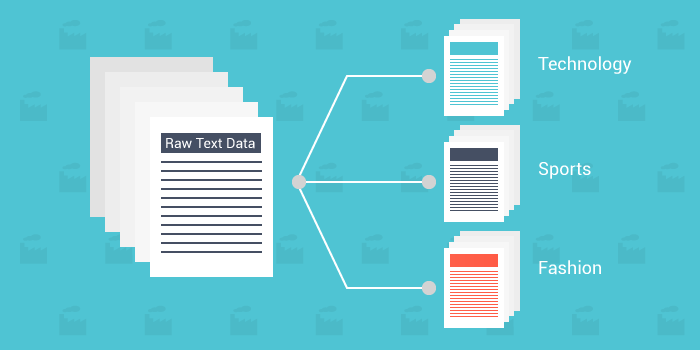

Text Vectorization: Converting Text into Vectors for Machine Learning Document Categorization

Vectorization is the process of transforming words, phrases, or entire documents into numerical vectors that machine learning models can understand and process. This blog post explores why vectorizing language matters, what word and document embeddings are, and how you can implement and visualize embeddings with MATLAB.
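To make the idea of a word becoming a vector concrete, here is a minimal one-hot encoding sketch. It is written in Python rather than MATLAB and does not reproduce the post's own implementation. It assumes a toy corpus, lowercase whitespace tokenization, and two hypothetical helper names, `build_vocabulary` and `one_hot`.

```python
# Minimal one-hot encoding sketch (hypothetical helpers, toy data).
# Each vocabulary word maps to a vector with a single 1 at its index.

def build_vocabulary(documents):
    """Collect the sorted set of lowercase, whitespace-separated tokens."""
    tokens = {word for doc in documents for word in doc.lower().split()}
    return sorted(tokens)


def one_hot(word, vocabulary):
    """Return a one-hot list for `word`; raises ValueError if it is unknown."""
    index = vocabulary.index(word)
    vector = [0] * len(vocabulary)
    vector[index] = 1
    return vector


docs = ["the cat sat", "the dog sat"]
vocab = build_vocabulary(docs)  # ['cat', 'dog', 'sat', 'the']
print(one_hot("dog", vocab))    # [0, 1, 0, 0]
```

One-hot vectors are as long as the vocabulary and treat every pair of words as equally unrelated, which is one reason dense embeddings are used instead.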

The main purpose of document vectorization is to represent words as a series of vectors that express the semantics of documents. Whether in Chinese or English, words are the most basic units of text processing. By combining doc2vec embeddings with a text classification model, you can classify documents based on their content, which makes this a valuable approach for tasks such as sentiment analysis, topic classification, and document categorization. In this post, I've shared how different vectorization methods, such as one-hot encoding, count vectorization, n-grams, and TF-IDF, transform documents into vectors using Python. We can use these vectors to recommend the top skills for each document. There are other techniques for vectorizing documents with a machine learning model; I will discuss them in the embedding section.
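The count-vectorization and n-gram methods mentioned above can be sketched in plain Python as follows. This is a minimal illustration, not the post's code: the helper names `ngrams` and `count_vectorize` are hypothetical, tokenization is a simple lowercase whitespace split, and the documents are toy examples. scikit-learn's `CountVectorizer(ngram_range=(1, 2))` offers a library version, though its default tokenizer differs (for example, it ignores single-character tokens).

```python
from collections import Counter

# Hypothetical helpers: a sketch of bag-of-n-grams counting, not a library API.


def ngrams(tokens, n):
    """Return the contiguous n-grams of a token list, joined by spaces."""
    return [" ".join(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]


def count_vectorize(documents, ngram_range=(1, 1)):
    """Build a sorted n-gram vocabulary and a document-by-term count matrix."""
    low, high = ngram_range
    per_doc = []
    for doc in documents:
        tokens = doc.lower().split()
        grams = []
        for n in range(low, high + 1):
            grams.extend(ngrams(tokens, n))
        per_doc.append(Counter(grams))
    vocabulary = sorted(set().union(*per_doc))
    # One row per document, one column per vocabulary term.
    matrix = [[counts[term] for term in vocabulary] for counts in per_doc]
    return vocabulary, matrix


docs = ["the cat sat", "the cat ate the fish"]
vocab, X = count_vectorize(docs, ngram_range=(1, 2))
print(X[1][vocab.index("the")])  # 2
```

Each row of `X` is one document and each column is one unigram or bigram; in the second document, the column for `the` holds 2 because the word appears twice.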

Text vectorization is the process of converting text data into a numerical representation that machine learning algorithms can work with. At its core, it's about mapping words, … In every NLP project, text needs to be vectorized before machine learning algorithms can process it. Common vectorization methods include one-hot encoding, count encoding, frequency encoding, and word vectors (word embeddings). Several of these methods are also available in scikit-learn.

When we think of an NLP pipeline, feature engineering (also known as feature extraction, text representation, or text vectorization) is an integral step. It involves techniques for representing text as numbers (feature vectors). In the following lines, we'll look at the most important techniques for representing a text sequence as a vector. To follow this tutorial, you should be familiar with common deep learning techniques such as RNNs, CNNs, and transformers.
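Frequency-based encoding is often done with TF-IDF, which down-weights terms that appear in many documents. The sketch below assumes one textbook variant, tf = count / document length and idf = ln(N / df). scikit-learn's `TfidfVectorizer` instead defaults to a smoothed idf and L2-normalized rows, so its numbers will differ. The helpers `tfidf` and `top_terms` and the skill-list documents are hypothetical, chosen to echo the idea of picking the top skills for each document.

```python
import math
from collections import Counter

# Hypothetical helpers: one simple TF-IDF variant, not scikit-learn's formula.


def tfidf(documents):
    """Return (vocabulary, matrix) with tf = count / doc length, idf = ln(N / df)."""
    tokenized = [doc.lower().split() for doc in documents]
    counts = [Counter(tokens) for tokens in tokenized]
    vocabulary = sorted(set().union(*counts))
    n_docs = len(documents)
    # Document frequency: number of documents containing each term.
    df = {term: sum(1 for c in counts if term in c) for term in vocabulary}
    matrix = []
    for tokens, c in zip(tokenized, counts):
        length = len(tokens) or 1  # avoid division by zero for empty documents
        matrix.append(
            [(c[term] / length) * math.log(n_docs / df[term]) for term in vocabulary]
        )
    return vocabulary, matrix


def top_terms(vocabulary, row, k=2):
    """Return up to k terms with the highest positive TF-IDF weight in one row."""
    ranked = sorted(zip(vocabulary, row), key=lambda pair: pair[1], reverse=True)
    return [term for term, weight in ranked[:k] if weight > 0]


# Hypothetical skill lists, one "document" per candidate.
docs = ["python sql python", "python excel", "excel sql tableau"]
vocab, X = tfidf(docs)
print(top_terms(vocab, X[0], k=1))  # ['python']
print(top_terms(vocab, X[2], k=1))  # ['tableau']
```

Here `tableau` ranks first for the third document because it occurs in only one document, so its idf (ln 3) is larger than that of terms shared by two documents (ln 1.5).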

