Text Mining Document Embeddings

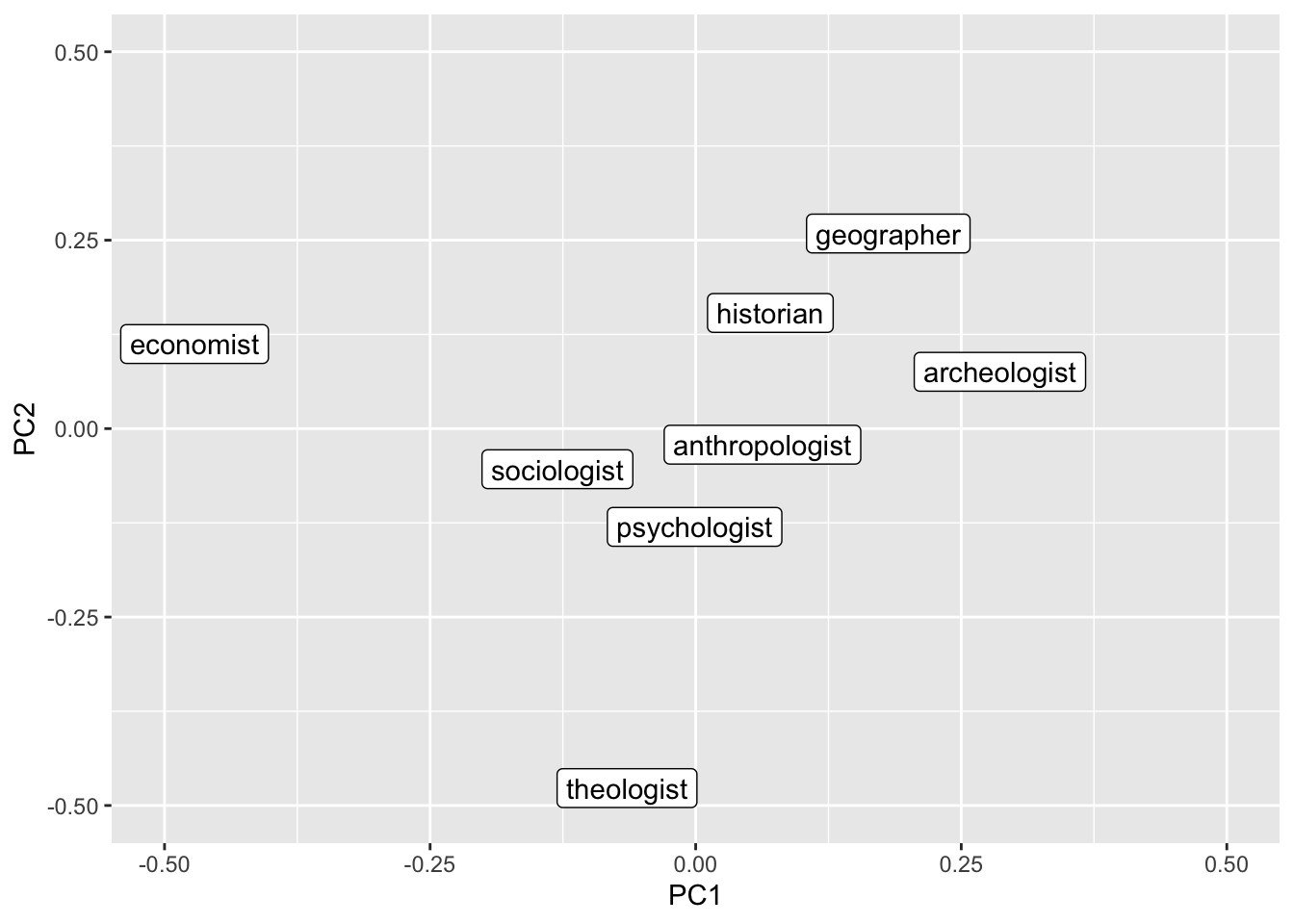

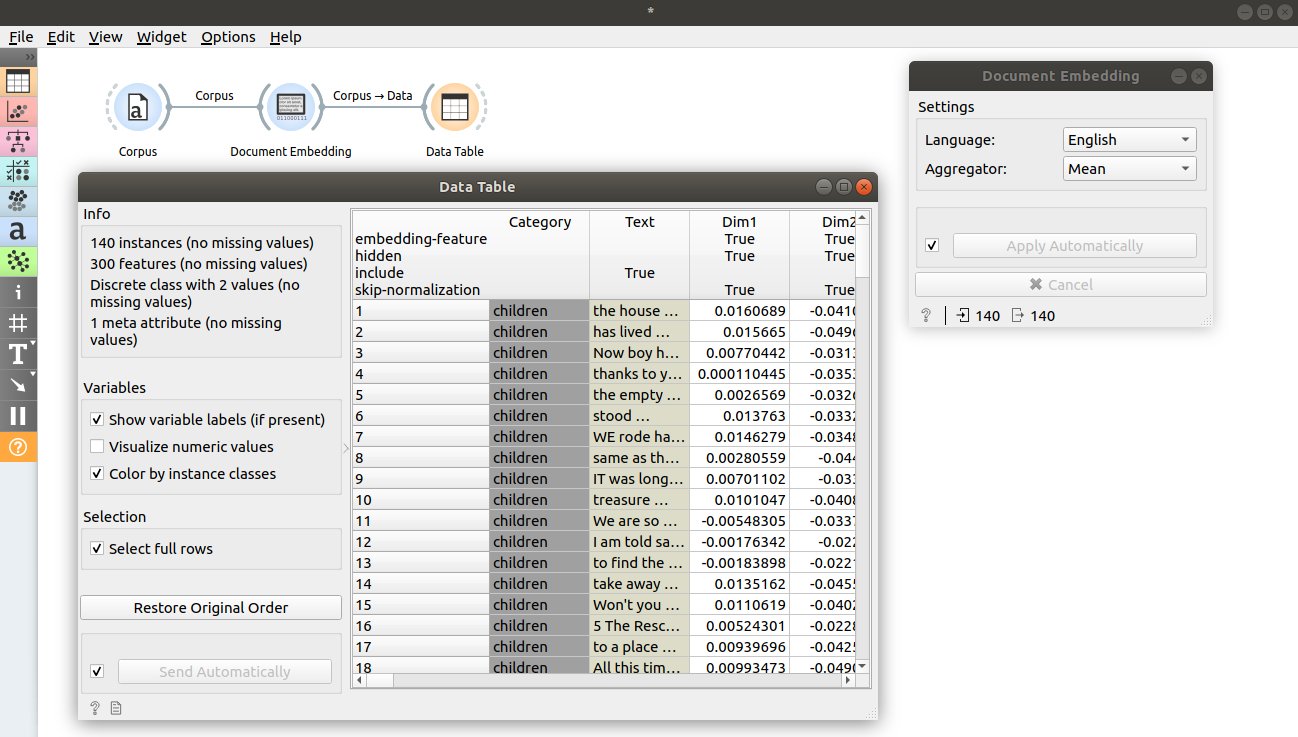

Text Mining For Social Sciences Summer 2024 10 Word Embeddings

Document Embedding takes n-grams (tokens), usually produced by the Preprocess Text widget. The tokens can be inspected in the Corpus Viewer widget by selecting Show Tokens and Tags, or in Word Cloud. The widget parses the n-grams of each document in the corpus, obtains an embedding for each n-gram using a pre-trained model for the chosen language, and produces one vector per document by aggregating the n-gram embeddings with one of the offered aggregators.
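The aggregation step described above can be sketched in a few lines of plain Python. This is only an illustration, not the widget's implementation: the lookup table `TOY_EMBEDDINGS`, the function `embed_document`, and the set of aggregators (mean, sum, max, min) are assumptions made for the example, and a real pre-trained model would supply vectors with hundreds of dimensions.

```python
from statistics import fmean

# Hypothetical 3-dimensional token embeddings standing in for a
# pre-trained model (values are made up for illustration).
TOY_EMBEDDINGS = {
    "text": [0.9, 0.1, 0.0],
    "mining": [0.7, 0.3, 0.1],
    "cats": [0.0, 0.2, 0.9],
    "purr": [0.1, 0.1, 0.8],
}

# Assumed aggregators; each one reduces the values of a single dimension.
AGGREGATORS = {"mean": fmean, "sum": sum, "max": max, "min": min}


def embed_document(tokens, aggregator="mean"):
    """Combine token vectors, dimension by dimension, into one document vector."""
    # Tokens without a known embedding are skipped.
    vectors = [TOY_EMBEDDINGS[t] for t in tokens if t in TOY_EMBEDDINGS]
    if not vectors:
        return None  # no known tokens, so no document embedding
    aggregate = AGGREGATORS[aggregator]
    # zip(*vectors) groups the values of each dimension together.
    return [aggregate(dim) for dim in zip(*vectors)]


doc_vector = embed_document(["text", "mining", "rocks"])
print(doc_vector)
```

Whatever the number of tokens, the result has the same length as a single token vector, which is what makes documents of different lengths comparable.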

Enhance Neural Retrieval With Contextual Document Embeddings

In this article, you will learn how to cluster a collection of text documents using large language model embeddings and standard clustering algorithms in scikit-learn. We bring together the power of word sense disambiguation and the semantic richness of word and word-sense embedding vectors to construct embedded representations of document collections. Our approach results in semantically enhanced, low-dimensional representations. Below, we’ll give an overview of what word embeddings are, demonstrate how to build and use them, discuss important considerations regarding bias, and apply all of this to a document clustering task. This study extends traditional text clustering frameworks by integrating embeddings from LLMs, offering improved methodologies and suggesting new avenues for future research in various types of textual analysis.
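As a rough sketch of the clustering task mentioned above, the snippet below runs scikit-learn's `KMeans` on a handful of document vectors. The 2-D vectors are invented for the example; in practice they would come from an embedding model and have many more dimensions, and the number of clusters would have to be chosen for the corpus at hand.

```python
import numpy as np
from sklearn.cluster import KMeans

# Toy 2-D "document embeddings" (assumed values, not real model output).
embeddings = np.array([
    [0.90, 0.10],
    [0.80, 0.20],
    [0.85, 0.15],
    [0.10, 0.90],
    [0.20, 0.80],
    [0.15, 0.85],
])

# Two clusters are assumed here because the toy data has two obvious groups.
kmeans = KMeans(n_clusters=2, n_init=10, random_state=0)
labels = kmeans.fit_predict(embeddings)

# Documents with the same label were assigned to the same cluster.
print(labels)
```

The cluster ids themselves are arbitrary; only which documents share an id is meaningful.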

Orange Data Mining Document Embedding

In this video, we take a look at document embeddings in text analysis. We learn how to load datasets, embed text, classify documents, and interpret results using techniques such as … Embeddings can be used for semantic search and even to work with documents in different languages. In this article, I would like to dive deeper into the embedding topic and discuss all the … In this chapter, we present the classical vector space model with an example from biomedical text. The limitations of the model are also highlighted. This is followed by an overview of language modeling and a description of word embeddings, including word2vec, GloVe, fastText, and BioWordVec.
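To make the classical vector space model and one of its limitations concrete, here is a minimal sketch using raw term counts and cosine similarity. The three sentences and the helper names (`term_vector`, `cosine`, `search`) are hypothetical and chosen only for illustration; real systems typically add weighting such as TF-IDF.

```python
import math
from collections import Counter


def term_vector(text):
    """Represent a text as a bag of lower-cased word counts."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def search(query, docs):
    """Rank documents by similarity to the query, best match first."""
    q = term_vector(query)
    scored = [(cosine(q, term_vector(d)), d) for d in docs]
    return sorted(scored, key=lambda pair: pair[0], reverse=True)


# Made-up sentences in a biomedical flavour.
documents = [
    "the patient received aspirin for pain",
    "aspirin reduces fever and pain",
    "the clinical trial enrolled adult volunteers",
]

for score, doc in search("aspirin pain", documents):
    print(f"{score:.3f}  {doc}")

# A related query that shares no words with any document scores zero
# everywhere, which is the gap that embeddings are meant to close.
print(search("analgesic", documents))
```

Because the model only matches exact terms, a synonym in the query finds nothing, whereas embedding vectors can place related words close together.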
