Testing Data In Apache Spark

Testing Your PySpark Application

Now let's test our PySpark transformation function. One option is to simply eyeball the resulting DataFrame, but this quickly becomes impractical for large DataFrames or input sizes. A better way is to write automated tests. We'll discuss common pitfalls, share actionable tips, and highlight best practices to help you build a robust testing culture that keeps your Spark code reliable, maintainable, and production-ready, even within the unique context of Databricks.

As mentioned, eyeballing results is not ideal: there is limited control, and unexpected behavior can happen. Unit testing PySpark code offers a scalable, disciplined alternative for ensuring reliable big data applications. A good testing practice is to make tests reproducible and idempotent, which means that running them any number of times should always produce the same results.

Testing data in Apache Spark: how to efficiently test large-scale Spark jobs using open-source libraries. In this post, we will go over why tests are necessary, the main types of tests for data pipelines, and how to effectively use pytest to test your PySpark application.

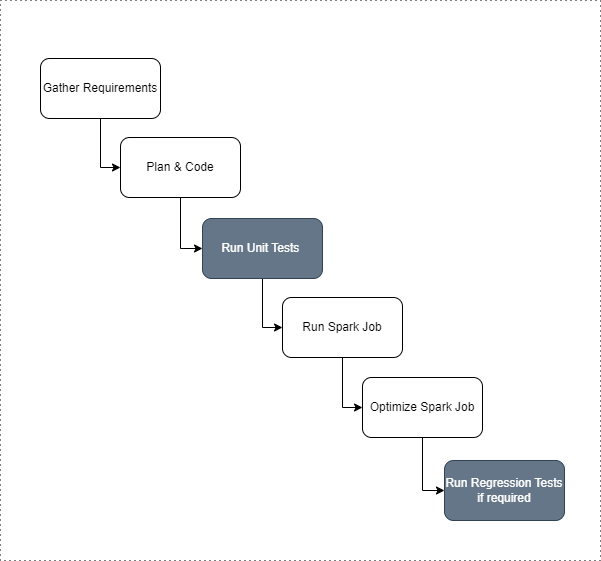

To test your Spark code, you must divide it into at least two parts: domain computation and input/output. In this post, we'll look at one way to unit test Spark applications and prepare test datasets.

Integration tests for Apache Spark apps follow a similar pattern: create input data, run the ETL job with spark-submit, and validate the outputs in a production-like setup.

Test-driven development (TDD) is a software development process in which software requirements are converted into test cases before the software is fully developed, and all development is tracked by repeatedly testing the software against all test cases.
