Test Time Compute (TTC): Enhancing Real Time AI Inference And Adaptive

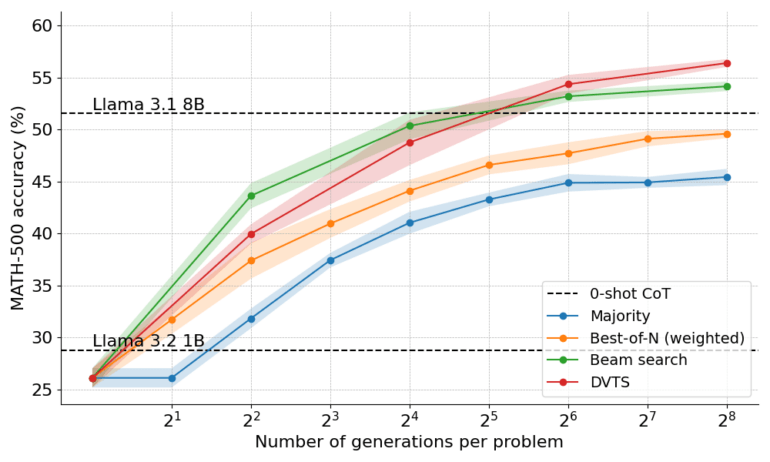

Test Time Compute Scaling in AI (Datatunnel). Test-time compute (TTC) changes how AI systems work by dynamically allocating computational resources during inference, which enables deeper reasoning and greater adaptability. A related survey gives a comprehensive review of efficient TTC strategies, which aim to improve the computational efficiency of LLM reasoning.

The New AI Frontier: Exploring Test Time Compute (Learn AI With Kesse). Recent breakthroughs in AI reasoning, enabled by test-time compute (TTC) on compact large language models (LLMs), offer great potential for edge devices to execute complex reasoning tasks effectively. Generative AI models are shifting from bigger training runs to smarter inference, using reasoning and adaptive compute at test time to deliver better answers without inflating latency or cost. TTC is defined as the computational effort allocated during inference rather than during model training, with the specific objective of improving or adapting the output of a pretrained model for a given prompt. At its core, TTC poses the challenge of balancing three competing priorities in modern LLMs: maximising system throughput, optimising response speed, and enhancing reasoning quality.
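The balance between response speed and reasoning quality can be illustrated with a minimal sketch of adaptive sampling with majority voting. It is one possible TTC strategy, not a method described by the sources above. The function `adaptive_majority_vote`, its parameters, and the toy samplers `easy_prompt` and `hard_prompt` are hypothetical stand-ins for real model calls.

```python
from collections import Counter
from typing import Callable, Tuple


def adaptive_majority_vote(
    sample_answer: Callable[[int], str],
    max_samples: int = 16,
    min_samples: int = 3,
    agreement: float = 0.6,
) -> Tuple[str, int]:
    """Draw answers one at a time and stop early once they agree.

    Returns the leading answer and the number of samples spent, so easy
    prompts cost little compute and hard prompts get more of the budget.
    """
    if max_samples < 1:
        raise ValueError("max_samples must be at least 1")
    counts: Counter = Counter()
    for drawn in range(1, max_samples + 1):
        counts[sample_answer(drawn - 1)] += 1
        leader, votes = counts.most_common(1)[0]
        # Stop once enough samples exist and the leader's share is high enough.
        if drawn >= min_samples and votes / drawn >= agreement:
            return leader, drawn
    # Budget exhausted: fall back to the current leader.
    return leader, max_samples


# Hypothetical samplers standing in for a model queried with temperature > 0.
def easy_prompt(i: int) -> str:
    return "42"  # the model always agrees with itself


HARD_DRAWS = ["7", "9", "8", "7", "9", "7", "7", "7"]


def hard_prompt(i: int) -> str:
    return HARD_DRAWS[i % len(HARD_DRAWS)]  # answers disagree at first


easy_result = adaptive_majority_vote(easy_prompt)  # ("42", 3)
hard_result = adaptive_majority_vote(hard_prompt)  # ("7", 8)
```

In this toy run the easy prompt stops after three samples while the hard prompt spends eight, which is the kind of per-prompt compute allocation the definition above describes. The thresholds are arbitrary; a real system would tune them against its latency and throughput targets.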

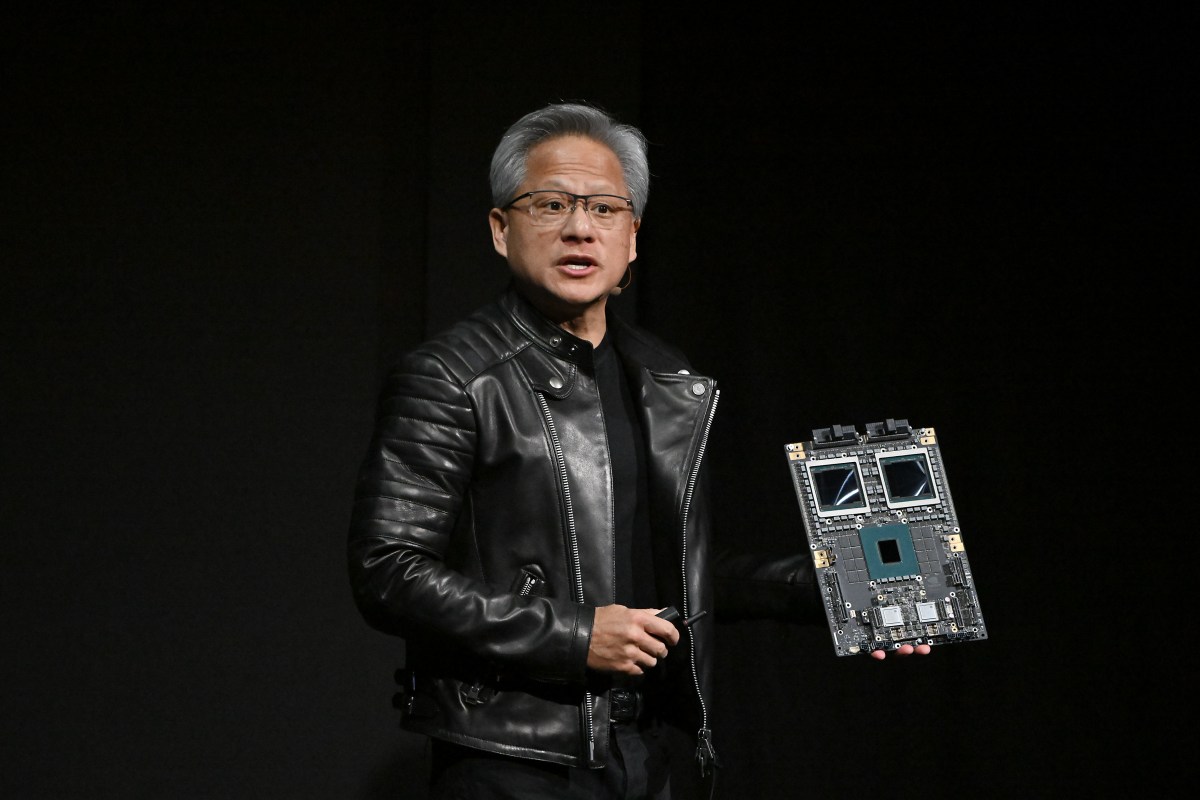

Test Time Compute Scaling Is a Path to Better AI Systems (Meta AI Labs). TTC is also a bottleneck for modern AI, with a direct impact on user experience and cost, and techniques such as "slow thinking" and meta reinforcement fine-tuning are unlocking new levels of AI reasoning. As the gains from scaling up training begin to plateau, the industry is pivoting toward a new frontier: test-time compute. Giving models extra "think time" during inference opens up new avenues for performance improvements, smarter reasoning, and real-time adaptability. One line of work analyzes two primary mechanisms for scaling test-time computation: (1) searching against dense, process-based verifier reward models (PRMs); and (2) updating the model's distribution over a response adaptively, given the prompt at test time. Test-time compute (also called inference-time compute scaling or test-time scaling) refers to the practice of allocating additional computational resources during the inference phase of a large language model (LLM), rather than relying solely on computation invested during training.
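Mechanism (1) can be sketched in its simplest form as best-of-N selection against a step-level verifier. The sketch below is a minimal illustration under stated assumptions: `toy_step_scorer` is a hypothetical keyword rule standing in for a trained PRM, and aggregating step scores with the minimum is one common choice rather than the approach of any particular paper.

```python
from typing import Callable, List, Sequence, Tuple

Solution = List[str]  # a candidate solution as a list of reasoning steps


def solution_score(steps: Sequence[str], step_scorer: Callable[[str], float]) -> float:
    """Aggregate per-step scores; a solution is only as good as its weakest step."""
    if not steps:
        return 0.0
    return min(step_scorer(step) for step in steps)


def best_of_n(
    candidates: Sequence[Solution],
    step_scorer: Callable[[str], float],
) -> Tuple[Solution, float]:
    """Return the candidate with the highest aggregated verifier score."""
    if not candidates:
        raise ValueError("need at least one candidate")
    scored = [(solution_score(c, step_scorer), i) for i, c in enumerate(candidates)]
    best_score, best_index = max(scored, key=lambda pair: pair[0])
    return candidates[best_index], best_score


def toy_step_scorer(step: str) -> float:
    """Hypothetical stand-in for a PRM: distrust steps that admit to guessing."""
    return 0.2 if "guess" in step.lower() else 0.9


# Two sampled solutions to "solve 2x + 1 = 7" (made-up model outputs).
candidates = [
    ["Guess that x = 5", "Answer: 5"],
    ["2x + 1 = 7, so 2x = 6", "x = 3", "Answer: 3"],
]
best, score = best_of_n(candidates, toy_step_scorer)
```

Here `best` is the second candidate, because its weakest step still scores 0.9 while the first candidate contains a step scored 0.2. Sampling more candidates increases the chance that at least one is fully sound, at the cost of more inference compute.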
