TensorFlow Model Optimization: Quantization and Pruning (TF World '19)

TensorFlow Model Optimization is a suite of tools for optimizing ML models for deployment and execution. It aims to improve performance and efficiency and to reduce inference latency at the edge. This 41-minute conference talk from TF World '19, presented by Raziel Alvarez, a TensorFlow performance expert, covers quantization and pruning methods.

Inside TensorFlow: TF Model Optimization Toolkit

In "TensorFlow Model Optimization: Quantization and Pruning" (TF World '19), TensorFlow performance experts cover topics including optimization. The TensorFlow Model Optimization Toolkit is a suite of tools that both novice and advanced users can use to optimize machine learning models for deployment and execution. Supported techniques include quantization and pruning for sparse weights, and there are APIs built specifically for Keras.

Techniques like quantization and pruning reduce model size and improve inference speed. It's the difference between a model that runs on actual devices and one that only works in your cozy cloud environment. We're talking models up to 8x smaller, inference up to 4x faster, and batteries that don't drain faster than your will to live. Let me show you how to make your models actually deployable.
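To make the idea of quantization concrete, here is a minimal sketch of 8-bit affine quantization in plain Python. It is not the toolkit's implementation: the function names `quantize_int8` and `dequantize` are hypothetical, and the choice of a single per-tensor scale and zero point is an assumption made for illustration.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float, int]:
    """Map floats to the int8 range [-128, 127] with one scale and zero point."""
    # The representable range is widened to include 0.0 so that zero maps cleanly.
    lo, hi = min(weights + [0.0]), max(weights + [0.0])
    # 255 steps span the range; fall back to 1.0 when every weight is zero.
    scale = (hi - lo) / 255.0 or 1.0
    zero_point = round(-128 - lo / scale)
    # Round to the nearest step, shift by the zero point, and clamp to int8.
    q = [max(-128, min(127, round(w / scale) + zero_point)) for w in weights]
    return q, scale, zero_point


def dequantize(q: List[int], scale: float, zero_point: int) -> List[float]:
    """Recover approximate float values from the quantized integers."""
    return [(v - zero_point) * scale for v in q]


weights = [-1.0, 0.0, 0.5, 1.0]
q, scale, zero_point = quantize_int8(weights)
restored = dequantize(q, scale, zero_point)
```

Each restored value differs from the original by at most about one quantization step (`scale`), which is the accuracy cost paid for storing each weight in a single byte.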

TensorFlow Model Optimization Toolkit: Pruning API (The TensorFlow Blog)

In this tutorial, you saw how to create sparse models with the TensorFlow Model Optimization Toolkit API for both TensorFlow and TFLite. You then combined pruning with post-training quantization.

Optimizing TensorFlow models for inference speed is a complex yet rewarding endeavor. By combining quantization, sparsity and pruning, clustering, and collaborative optimization, we can significantly enhance the performance and efficiency of machine learning models. Following our exploration of quantization and its impact on model efficiency and size, we now turn to another crucial technique for optimizing machine learning models: pruning.
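As a rough illustration of what pruning for sparse weights means, the sketch below zeroes out the smallest-magnitude entries of a weight list in plain Python. The helper `prune_by_magnitude` and its `sparsity` parameter are hypothetical names chosen for this example. It applies the mask once, whereas a real training workflow would typically increase sparsity gradually while the model continues to train.

```python
from typing import List


def prune_by_magnitude(weights: List[float], sparsity: float) -> List[float]:
    """Zero out the `sparsity` fraction of weights with the smallest magnitude."""
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be in [0, 1]")
    n_prune = int(len(weights) * sparsity)
    # Indices ordered by absolute value, smallest first.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    to_zero = set(order[:n_prune])
    return [0.0 if i in to_zero else w for i, w in enumerate(weights)]


weights = [0.9, -0.05, 0.3, 0.01, -0.7, 0.2]
pruned = prune_by_magnitude(weights, sparsity=0.5)
# pruned == [0.9, 0.0, 0.3, 0.0, -0.7, 0.0]
```

The zeros themselves do not shrink a dense array; the size benefit appears once the sparse weights are compressed or stored in a sparse format.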


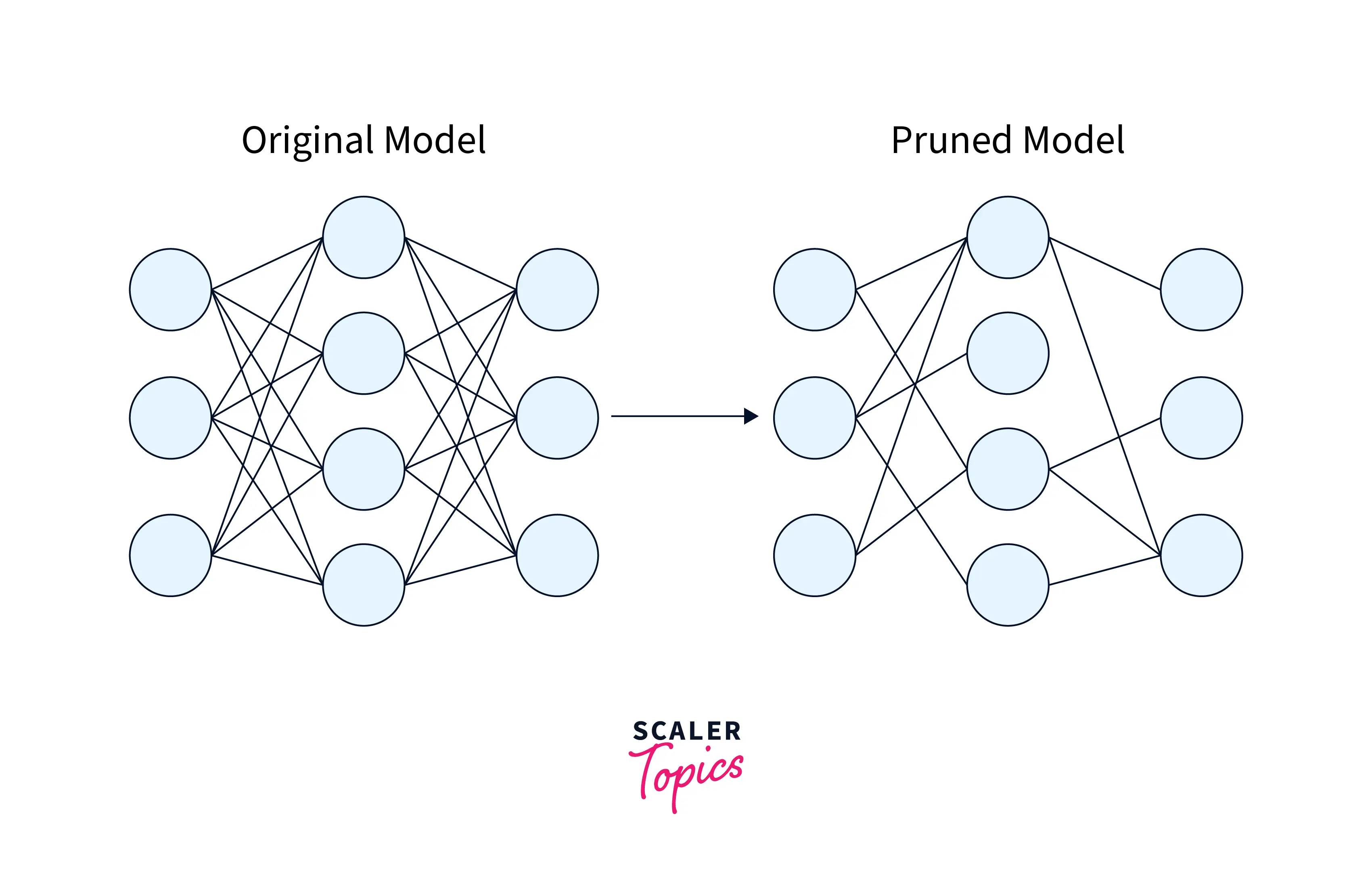

