TensorFlow Lite for Mobile Developers (Google I/O '18)

On-Device Large Language Models with Keras and TensorFlow Lite (Google)

Train and deploy your own large language model (LLM) on Android using Keras and TensorFlow Lite. LLMs have revolutionized tasks like text generation and language... TensorFlow Lite enables developers to deploy custom machine learning models to mobile devices. This technical session describes in detail how to take a trained TensorFlow model and use...
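Taking a trained model to TensorFlow Lite, the step the session description refers to, might look roughly like the following in Python. This is a minimal sketch, not the session's own code: it assumes TensorFlow 2.x is installed, and `convert_keras_to_tflite` and `build_toy_model` are hypothetical helper names introduced only for illustration. The toy model stands in for whatever model you have actually trained.

```python
def build_toy_model():
    """Hypothetical stand-in for a trained model: 4 features in, 2 scores out."""
    # TensorFlow is imported lazily so this file loads even where it is absent.
    import tensorflow as tf

    return tf.keras.Sequential([
        tf.keras.Input(shape=(4,)),
        tf.keras.layers.Dense(8, activation="relu"),
        tf.keras.layers.Dense(2),
    ])


def convert_keras_to_tflite(model, optimize=False):
    """Convert a Keras model to a TensorFlow Lite flatbuffer (returned as bytes)."""
    import tensorflow as tf

    converter = tf.lite.TFLiteConverter.from_keras_model(model)
    if optimize:
        # Assumption: default optimizations (post-training quantization)
        # are acceptable for the model's accuracy budget.
        converter.optimizations = [tf.lite.Optimize.DEFAULT]
    return converter.convert()
```

The returned bytes can be written to a `.tflite` file (for example with `open("model.tflite", "wb")`) and bundled with a mobile app. Whether to enable `optimize` depends on how much accuracy loss the application can tolerate, so it is left off by default here.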

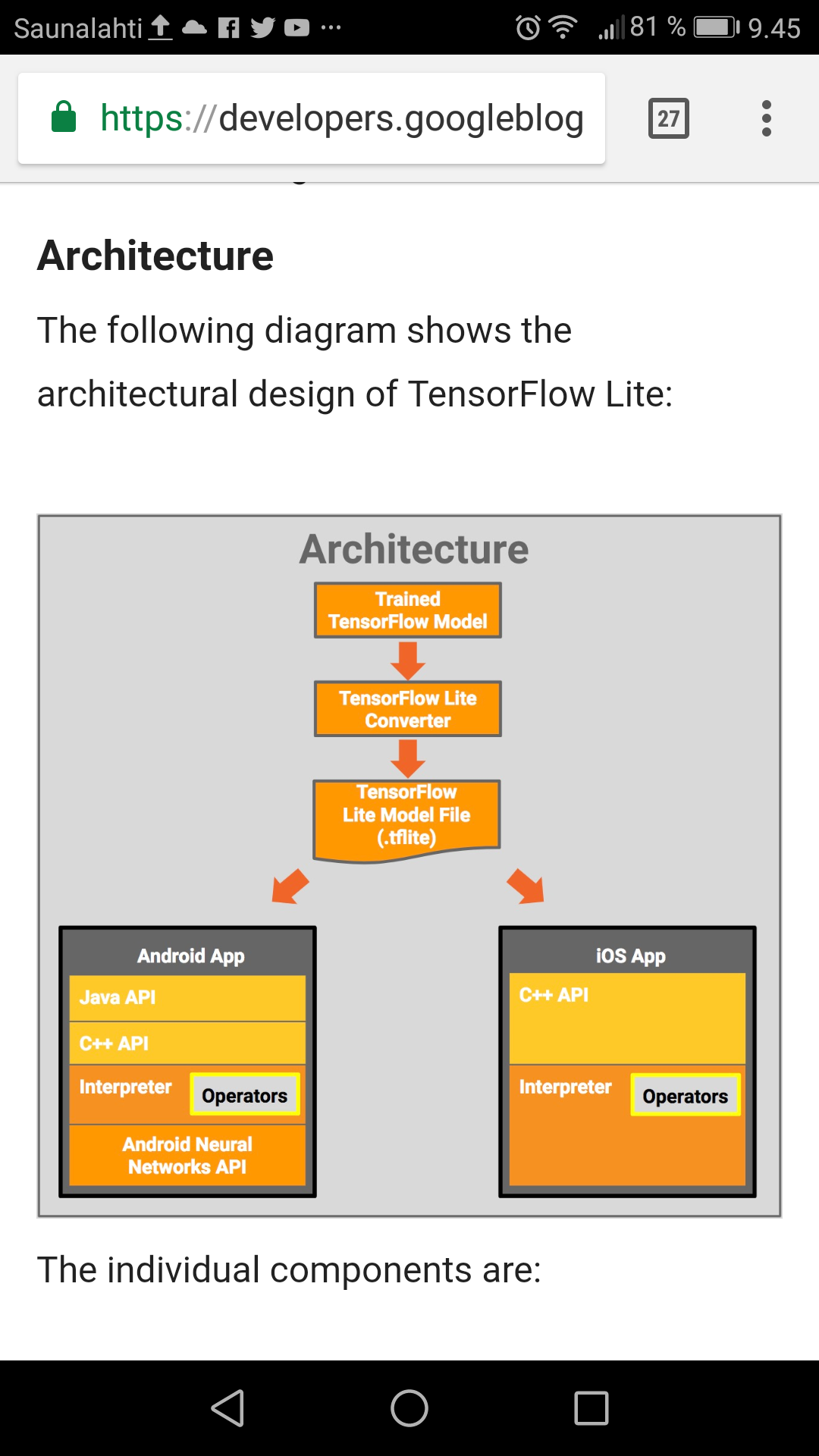

Google Developers Blog: Announcing TensorFlow Lite

TensorFlow Lite, now named LiteRT, is still the same high-performance runtime for on-device AI, but with an expanded vision to support models authored in PyTorch, JAX, and Keras. The LiteRT runtime APIs available for Android development include the CompiledModel API, the modern standard for high-performance inference, which streamlines hardware acceleration across CPU, GPU, and NPU. LiteRT on Android provides the essentials for deploying high-performance, custom ML features in your Android app. Use LiteRT with Google Play services, Android's official ML inference runtime, to run high-performance ML inference in your app. Train a neural network to recognize gestures caught on your webcam using TensorFlow.js, then use TensorFlow Lite to convert the model to run inference on your device.

Mobile AI Development Using TensorFlow Lite: Practical Guide (MoldStud)

TensorFlow Lite (TFLite) is Google's solution for deploying machine learning models on mobile and edge devices. It enables developers to run pre-trained AI models directly on Android devices, offering benefits like improved privacy (data stays on the device), faster inference (no network latency), and offline functionality. TensorFlow Lite lets you run TensorFlow machine learning (ML) models in your Android apps. The TensorFlow Lite system provides prebuilt and customizable execution environments for running models on Android quickly and efficiently, including options for hardware acceleration. In this chapter, we lay out a battle-tested playbook for deploying TensorFlow Lite apps on Android and iOS: it builds on the fundamentals of converting a model to TensorFlow Lite, follows the TensorFlow Lite tutorial, and uses the TensorFlow Lite GPU delegate where the hardware allows. TensorFlow Lite is an open-source deep learning framework designed for on-device inference, an approach commonly referred to as edge computing. It enables developers to deploy trained machine learning models directly on edge devices such as mobile phones, embedded systems, and IoT devices without relying on cloud resources.
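Before a converted model is bundled into an Android or iOS app, it can be useful to run it once on a workstation with the Python interpreter API. The sketch below assumes TensorFlow 2.x and NumPy are installed and that the model has a single input and a single output; `run_tflite_inference` is a hypothetical helper name, not part of any library. Newer LiteRT releases may expose the interpreter through a separate package, so treat `tf.lite.Interpreter` as one option to verify against the current documentation.

```python
def run_tflite_inference(model_bytes, inputs):
    """Run one inference pass over a TensorFlow Lite flatbuffer held in memory."""
    # Heavy dependencies are imported lazily so this file loads without them.
    import numpy as np
    import tensorflow as tf

    interpreter = tf.lite.Interpreter(model_content=model_bytes)
    interpreter.allocate_tensors()

    # Assumption: the model has exactly one input and one output tensor.
    input_info = interpreter.get_input_details()[0]
    output_info = interpreter.get_output_details()[0]

    # Cast the inputs to the dtype the model expects (often float32).
    batch = np.asarray(inputs, dtype=input_info["dtype"])
    interpreter.set_tensor(input_info["index"], batch)
    interpreter.invoke()
    return interpreter.get_tensor(output_info["index"])
```

Given the bytes of a converted model that takes four features, a call such as `run_tflite_inference(model_bytes, [[0.1, 0.2, 0.3, 0.4]])` returns a NumPy array of outputs. This runs on the CPU only; GPU delegates and other hardware acceleration are configured in the mobile runtime and are not shown here.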

Google Launches TensorFlow Lite Developer Preview for Machine Learning

Google Launches TensorFlow Lite 1.0 for Mobile and Embedded Devices

Announcing TensorFlow Lite in Google Play Services: General Availability
