Temporal Difference (TD) Learning in RL

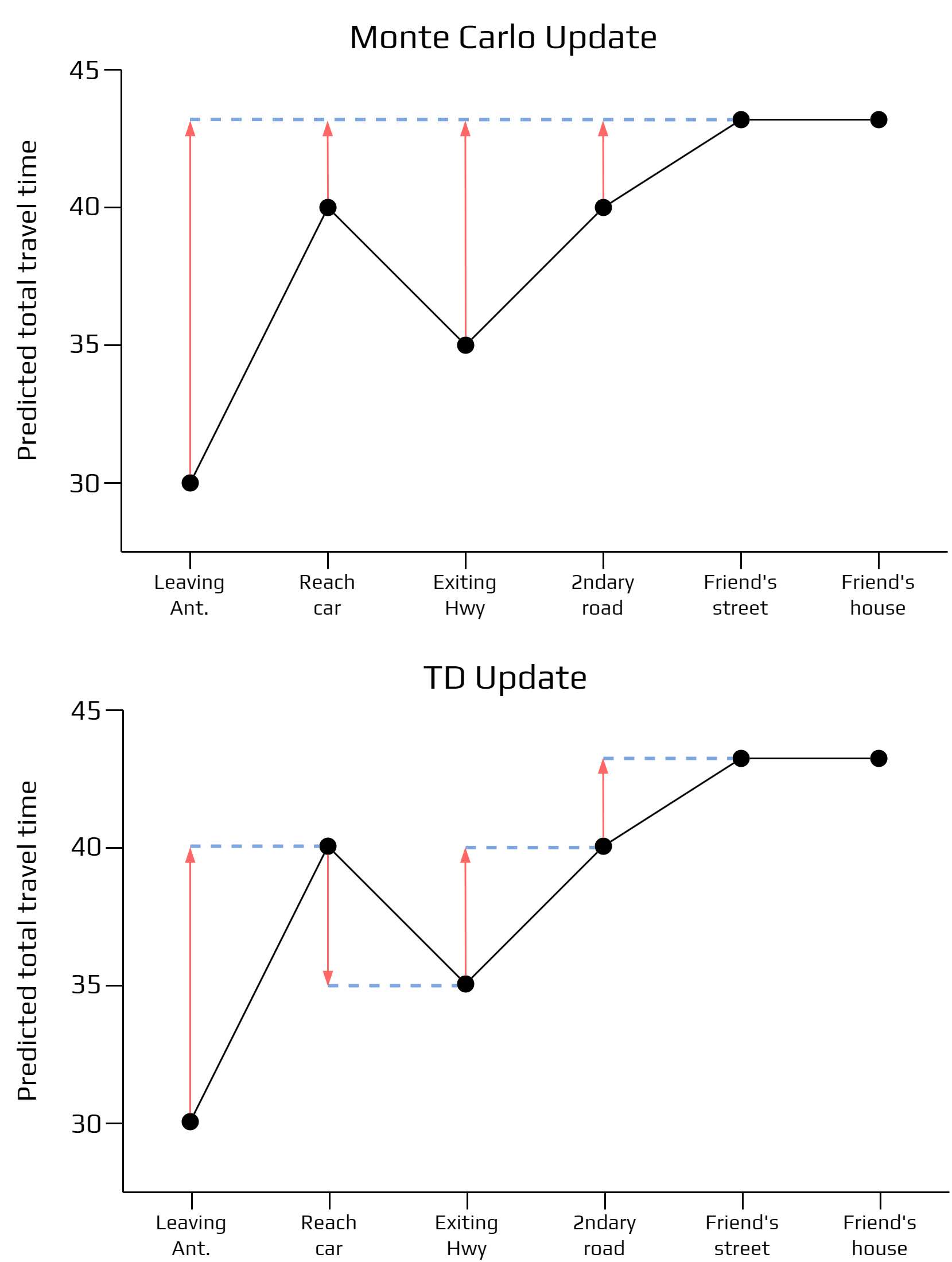

Unlike Monte Carlo methods, which require complete episodes, TD learning updates value estimates after each time step using the Bellman equation and the temporal difference error. So what exactly is temporal difference learning? It updates the value function (the predicted long-term reward) based on the difference between the current estimate and a target built from the observed reward plus the estimated value of the next state.
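The per-step update described above can be sketched in a few lines. This is a minimal illustration of tabular TD(0), not code from any of the quoted sources: the function name `td0_update`, the dictionary-based value table, and the values of `alpha` (step size) and `gamma` (discount factor) are all assumptions chosen for the example.

```python
def td0_update(V, s, r, s_next, alpha=0.1, gamma=0.9, done=False):
    """Apply one tabular TD(0) update to V[s] in place and return the TD error.

    V is a hypothetical value table (dict: state -> estimated value).
    """
    # Bootstrapped target: observed reward plus the discounted estimate
    # of the next state (zero if the episode ended on this step).
    target = r + (0.0 if done else gamma * V[s_next])
    # Temporal difference error: how far the current estimate is from the target.
    td_error = target - V[s]
    # Move the estimate a fraction alpha of the way toward the target.
    V[s] += alpha * td_error
    return td_error


# Illustrative two-state table; the numbers are made up for the example.
V = {"A": 0.0, "B": 1.0}
delta = td0_update(V, "A", r=0.5, s_next="B")
# The target is 0.5 + 0.9 * 1.0, so the TD error is about 1.4
# and V["A"] moves from 0.0 to about 0.14.
print(delta, V["A"])
```

The update needs only one transition `(s, r, s_next)`, which is why it can run after every time step rather than at the end of an episode.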

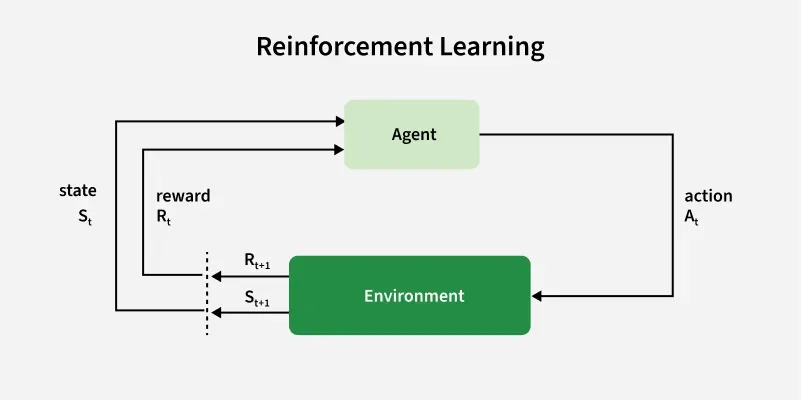

What is temporal difference learning? Temporal difference (TD) learning is a core idea in reinforcement learning (RL), where an agent learns to make better decisions by interacting with its environment and improving its predictions over time. It offers an efficient way to learn value functions and optimal policies: by bootstrapping and updating estimates from experience, TD methods can solve a wide range of control problems. Two widely used TD algorithms are SARSA and Q-learning. While there are a variety of techniques for unsupervised learning in prediction problems, we will focus specifically on the method of temporal difference (TD) learning (Sutton, 1988).
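SARSA and Q-learning differ only in how they build the bootstrapped target for an action value. The sketch below contrasts the two, assuming a hypothetical nested-dict table `Q[state][action]`. The function names, `alpha`, `gamma`, and the example numbers are illustrative assumptions, not taken from the sources quoted here.

```python
def sarsa_target(Q, r, s_next, a_next, gamma=0.9):
    """On-policy target: bootstrap from the action actually taken next."""
    return r + gamma * Q[s_next][a_next]


def q_learning_target(Q, r, s_next, gamma=0.9):
    """Off-policy target: bootstrap from the greedy (highest-valued) next action."""
    return r + gamma * max(Q[s_next].values())


def td_control_update(Q, s, a, target, alpha=0.1):
    """Shared update rule: move Q[s][a] a fraction alpha toward the target."""
    Q[s][a] += alpha * (target - Q[s][a])


# Hypothetical action-value table with made-up numbers.
Q = {
    "s0": {"left": 0.0, "right": 0.0},
    "s1": {"left": 1.0, "right": 2.0},
}

# Suppose the behaviour policy picked "left" in s1 (an exploratory move).
sarsa = sarsa_target(Q, r=0.0, s_next="s1", a_next="left")
qlearn = q_learning_target(Q, r=0.0, s_next="s1")
print(sarsa, qlearn)  # SARSA uses Q[s1][left]; Q-learning uses the max over s1

td_control_update(Q, "s0", "right", qlearn)
```

When the next action is exploratory, the SARSA target reflects that choice while the Q-learning target ignores it, which is the usual on-policy versus off-policy distinction.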

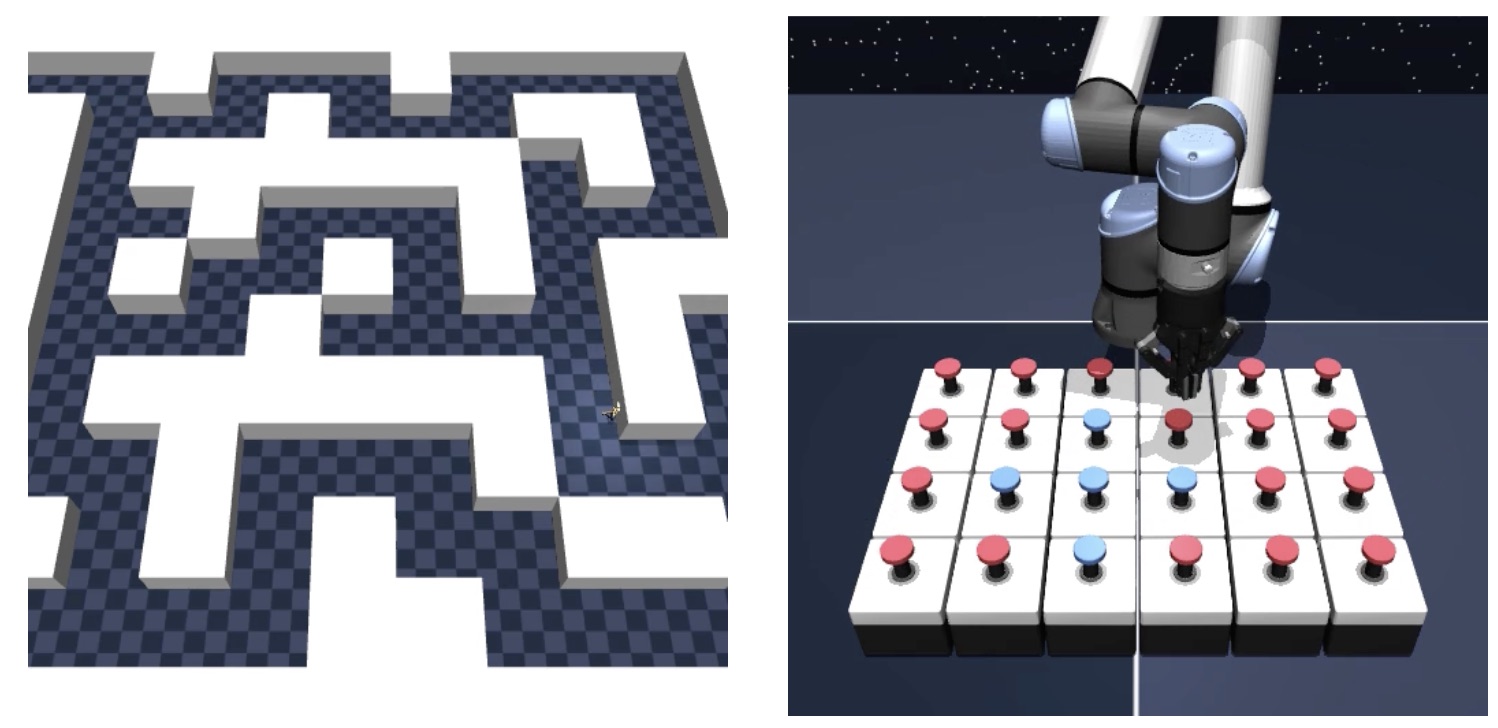

One excerpted paper claims to resolve the decades-old puzzle of why temporal difference (TD) learning can solve complex reinforcement learning (RL) tasks that gradient descent cannot.

Online: in RL, samples are generated on the fly, in a non-i.i.d. fashion. Hence the chosen learning algorithm should be suited to online learning, that is, training incrementally on one sample at a time. TD learning is a powerful, model-free RL method that updates value estimates in real time. It serves as the foundation for modern reinforcement learning algorithms such as Q-learning, SARSA, and deep Q-networks (DQN). TD methods are a popular subset of RL algorithms: they combine key aspects of Monte Carlo and dynamic programming methods to accelerate learning without requiring a perfect model of the environment dynamics.
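To make the online, model-free character concrete, here is a small sketch of TD(0) prediction on a five-state random walk. The environment is an assumption introduced for illustration (it does not appear in the quoted sources): the agent starts in the middle state, steps left or right with equal probability, and receives reward 1 only when it exits on the right. The function name, step size, episode count, and seed are likewise arbitrary choices.

```python
import random


def random_walk_td0(episodes=5000, alpha=0.02, gamma=1.0, seed=0):
    """Estimate state values of a 5-state random walk with online TD(0)."""
    rng = random.Random(seed)   # local generator so runs are repeatable
    n = 5
    V = [0.5] * n               # initial guess for every non-terminal state
    for _ in range(episodes):
        s = n // 2              # every episode starts in the middle state
        while True:
            s_next = s + rng.choice((-1, 1))
            if s_next < 0:      # fell off the left end: reward 0, episode ends
                r, v_next, done = 0.0, 0.0, True
            elif s_next >= n:   # fell off the right end: reward 1, episode ends
                r, v_next, done = 1.0, 0.0, True
            else:
                r, v_next, done = 0.0, V[s_next], False
            # Update immediately from this single transition. No transition
            # model is consulted and no full return is stored.
            V[s] += alpha * (r + gamma * v_next - V[s])
            if done:
                break
            s = s_next
    return V


values = random_walk_td0()
# For this walk the exact values are 1/6, 2/6, ..., 5/6 (left to right).
true_values = [i / 6 for i in range(1, 6)]
for v, t in zip(values, true_values):
    print(f"estimate={v:.3f}  true={t:.3f}")
```

Each update uses a single sampled transition as it arrives, which matches the incremental, one-sample-at-a-time requirement. The bootstrapped target borrows from dynamic programming, while the sampling borrows from Monte Carlo.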

