TCGBench: Better LLM Code Testing

This paper critically re-evaluates LLM-based test case generation (TCG), highlighting the limitations of current verifiers and formalizing key quality metrics alongside TCGBench, a foundational dataset for TCG research. In this AI Research Roundup episode, Alex discusses the paper "Rethinking Verification for LLM Code Generation: From Generation to Testing". This paper highlig...

TCG Bench is a contamination-proof benchmark designed to evaluate large language models on strategic decision-making tasks. It uses a custom-designed trading card game that does not exist in training data, so results are not biased by memorized material.

LLM Coding Benchmark Suite is a rigorous evaluation framework for assessing the code generation capabilities of large language models. It is a curated collection of algorithmically complex coding problems designed to stress-test LLM reasoning, code generation accuracy, and edge-case handling.

The definitive LLM leaderboard ranks the best AI models, including Claude, GPT, Gemini, DeepSeek, Llama, and more, across coding, reasoning, math, agentic, and chat benchmarks. Compare LLM rankings, tier lists, and pricing. We ranked every major LLM by BenchLM's current coding formula: SWE-rebench, SWE-bench Pro, LiveCodeBench, and SWE-bench Verified. Here's which models actually come out on top and why.

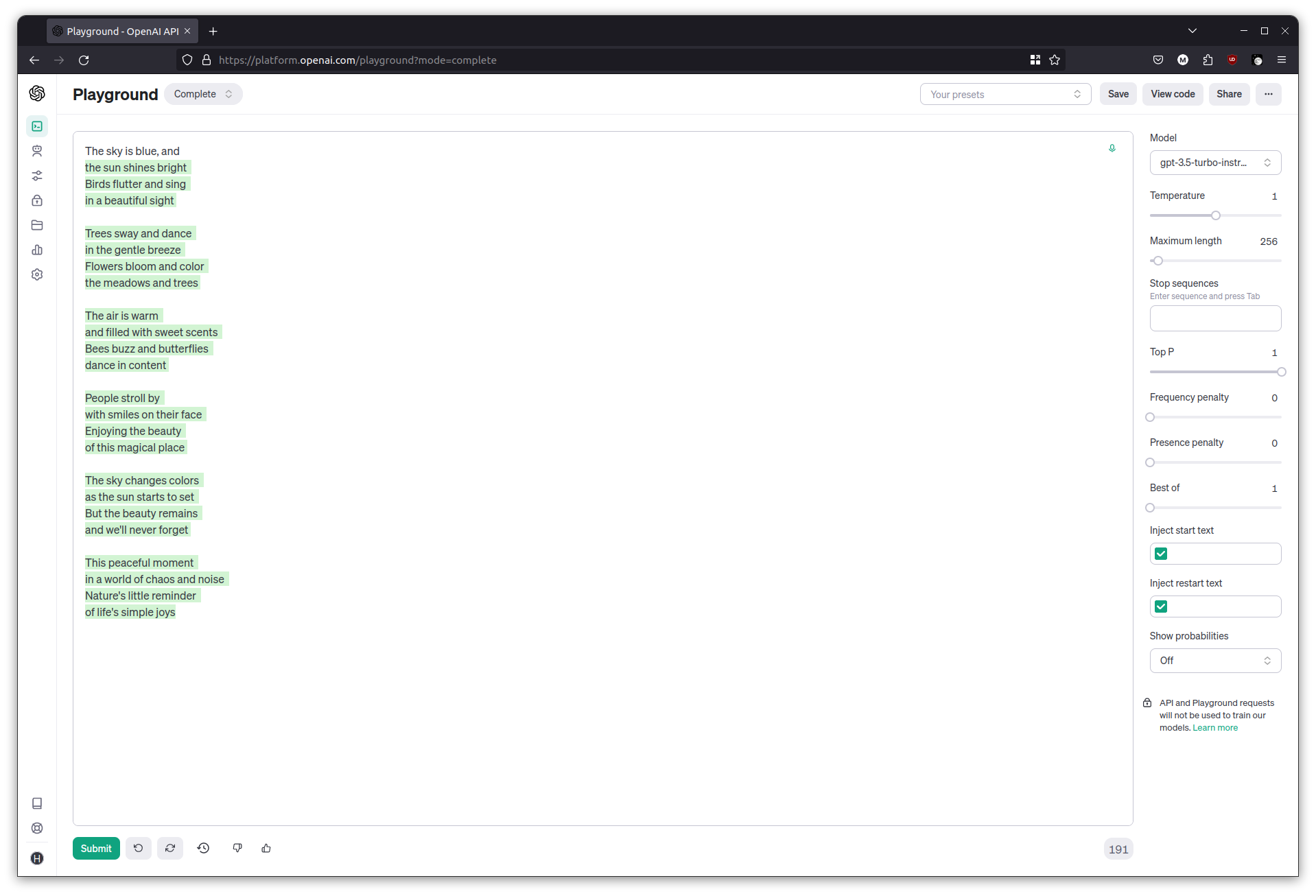

One paper investigates how LLMs adapt their code generation strategies when exposed to test cases under different prompting conditions. It identifies four recurring adaptation strategies, with test-driven refinement emerging as the most frequent.

Another paper proposes a human-LLM collaborative method (SAGA) and a new benchmark (TCGBench) to improve the verification and evaluation of LLM code generation by generating more thorough, higher-quality test cases.

In this post, we'll delve into the world of LLM benchmarks, exploring the key metrics that matter and comparing the most popular benchmarks used to rank LLMs.

A further paper investigates the problem from the perspective of competition-level programming (CP) and proposes TCGBench, a benchmark for LLM generation of test case generators.
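To make the idea of a "test case generator" concrete, here is a minimal Python sketch. It is not the method of any paper mentioned above. The toy problem (maximum subarray sum), the function names, and the mix of hand-picked and random cases are all assumptions chosen for illustration. The sketch shows a generator producing inputs and a check that compares a candidate solution against a trusted reference to find an input that exposes a bug.

```python
import random
from typing import Callable, Iterable, Iterator, List, Optional


def reference_max_subarray(a: List[int]) -> int:
    """Trusted solution: Kadane's algorithm over non-empty subarrays."""
    best = cur = a[0]
    for x in a[1:]:
        cur = max(x, cur + x)
        best = max(best, cur)
    return best


def buggy_max_subarray(a: List[int]) -> int:
    """Faulty candidate: starts from 0, so it is wrong when all elements are negative."""
    best = cur = 0
    for x in a:
        cur = max(0, cur + x)
        best = max(best, cur)
    return best


def generate_cases(seed: int, count: int) -> Iterator[List[int]]:
    """Hypothetical generator: hand-picked edge cases first, then random arrays."""
    yield [-5]          # single negative element
    yield [-3, -1, -2]  # every element negative
    rng = random.Random(seed)  # seeded so the run is reproducible
    for _ in range(count):
        n = rng.randint(1, 8)
        yield [rng.randint(-10, 10) for _ in range(n)]


def first_failing_case(
    candidate: Callable[[List[int]], int],
    reference: Callable[[List[int]], int],
    cases: Iterable[List[int]],
) -> Optional[List[int]]:
    """Return the first input on which candidate and reference disagree, else None."""
    for case in cases:
        if candidate(case) != reference(case):
            return case
    return None


if __name__ == "__main__":
    print(first_failing_case(buggy_max_subarray, reference_max_subarray,
                             generate_cases(seed=0, count=50)))  # [-5]
```

The edge cases are yielded before the random ones because a purely random generator can take many trials to hit a narrow failure condition such as "every element is negative". A weak test suite that lacks such inputs would wrongly accept the faulty candidate.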

