Table 5 from Executable Code Actions Elicit Better LLM Agents

The authors' extensive analysis of 17 LLMs on API-Bank and a newly curated benchmark shows that CodeAct outperforms widely used alternatives (up to 20% higher success rate). Table 5: Evaluation results for CodeActAgent. The best results among all open-source LLMs are bolded, and the second-best results are underlined. ID and OD stand for in-domain and out-of-domain evaluation, respectively.

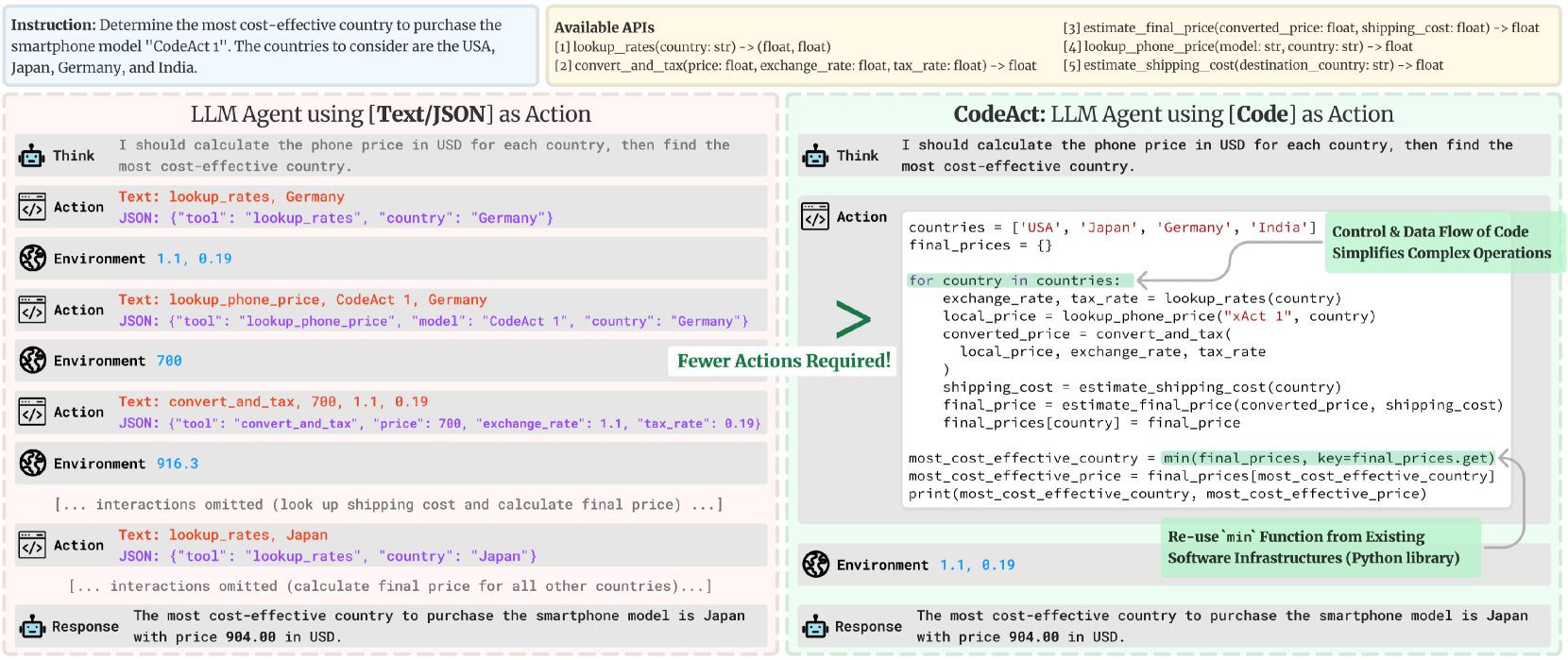

On average, the paper shows that code actions require 30% fewer steps than JSON actions, which amounts to an equivalent reduction in the tokens generated. Since LLM calls are often the dominant cost of agent systems, agent runs become roughly 30% cheaper.

Abstract: Large language model (LLM) agents, capable of performing a broad range of actions, such as invoking tools and controlling robots, show great potential in tackling real-world challenges. This work introduces CodeAct, which uses executable Python code as the LLM agent's action format. This is advantageous over text or JSON actions, especially in complex scenarios.
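To make the step-count argument concrete, here is a minimal sketch contrasting the two action formats. It is an illustration under stated assumptions, not the paper's implementation: the `get_price` tool, the JSON schema, and the helper names `run_json_action` and `run_code_action` are all hypothetical. A JSON action invokes one tool per agent step, while a single code action can loop over inputs and combine the results.

```python
import json


# Hypothetical tool, invented for this illustration.
def get_price(item: str) -> float:
    prices = {"apple": 0.5, "pear": 0.75}
    return prices[item]


TOOLS = {"get_price": get_price}


def run_json_action(action: str) -> float:
    """Execute one JSON action: exactly one tool call per agent step."""
    call = json.loads(action)
    return TOOLS[call["tool"]](**call["args"])


def run_code_action(code: str) -> dict:
    """Execute one code action: arbitrary Python that may compose tools."""
    namespace = dict(TOOLS)  # expose the tools as plain callable functions
    exec(code, namespace)
    return namespace


# JSON format (assumed schema): one step per tool call. The model would
# still need a further step to add the two results together.
json_steps = [
    '{"tool": "get_price", "args": {"item": "apple"}}',
    '{"tool": "get_price", "args": {"item": "pear"}}',
]
json_results = [run_json_action(step) for step in json_steps]

# Code format: one step calls the tool twice and aggregates the results.
code_step = "total = sum(get_price(item) for item in ['apple', 'pear'])"
code_total = run_code_action(code_step)["total"]

print(len(json_steps), json_results)  # two steps, two separate results
print(1, code_total)                  # one step, aggregated result
```

Because every step is a separate LLM call, collapsing several tool calls into one code action reduces both the number of calls and the tokens generated. Note that `exec` runs arbitrary code, so a real agent would need a sandboxed interpreter.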

Integrated with a Python interpreter, CodeAct can execute code actions and dynamically revise prior actions or emit new actions upon new observations (e.g., code execution results) through multi-turn interactions. Published in ICML, 2024. Recommended citation: Xingyao Wang, Yangyi Chen, Lifan Yuan, Yizhe Zhang, Yunzhu Li, Hao Peng, Heng Ji. https://arxiv.org/abs/2402.01030

In related work, TaskWeaver is proposed as a code-first framework for building LLM-powered autonomous agents; it converts user requests into executable code and treats user-defined plugins as callable functions.
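The multi-turn interaction described above (emit a code action, execute it, observe the result, revise) can be sketched as a small loop. This is a simplified illustration, not the CodeAct codebase: `scripted_model` is a hypothetical stand-in for an LLM, and `execute` and `run_agent` are names invented here. The first action fails, the error message comes back as the observation, and the next action corrects it.

```python
import contextlib
import io
import traceback


def execute(code: str, namespace: dict) -> str:
    """Run a code action; return captured stdout or the error as the observation."""
    buffer = io.StringIO()
    try:
        with contextlib.redirect_stdout(buffer):
            exec(code, namespace)
    except Exception:
        # Keep only the final traceback line, e.g. "NameError: name ...".
        buffer.write(traceback.format_exc().strip().splitlines()[-1])
    return buffer.getvalue().strip()


def scripted_model(history: list) -> str:
    """Hypothetical stand-in for an LLM: picks the next action from past observations."""
    if not history:
        return "print(total / count)"  # first attempt uses undefined names
    last_observation = history[-1][1]
    if "NameError" in last_observation:
        # Revise the prior action in response to the execution error.
        return "total, count = 10, 4\nprint(total / count)"
    return ""  # an empty action means the task is finished


def run_agent(max_turns: int = 5) -> list:
    namespace = {}  # interpreter state persists across turns
    history = []    # list of (action, observation) pairs
    for _ in range(max_turns):
        action = scripted_model(history)
        if not action:
            break
        observation = execute(action, namespace)
        history.append((action, observation))
    return history


history = run_agent()
for action, observation in history:
    print(repr(action), "->", observation)
```

The shared `namespace` is what lets later actions build on earlier ones, and feeding the error text back as an observation is what lets the agent self-correct. A production system would run the interpreter in an isolated sandbox instead of calling `exec` in-process.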

