Table 1 From Interpreting And Improving Diffusion Models Using The

Interpreting And Improving Diffusion Models Using The Euclidean

In this paper, we use the observation that denoising approximates projection to interpret denoising diffusion models as approximate gradient descent applied to the Euclidean distance function. We then provide a straightforward convergence analysis of the DDIM sampler under simple assumptions on the projection error of the denoiser. This paper provides the first convergence results for diffusion models in this more general setting by providing quantitative bounds on the Wasserstein distance of order one between the target data distribution and the generative distribution of the diffusion model.
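The interpretation of denoising as approximate gradient descent on the Euclidean distance function rests on a standard identity. As a sketch, write $K$ for the set of clean data, $\mathrm{dist}_K$ for the Euclidean distance to $K$, and $\mathrm{proj}_K$ for the projection onto $K$. This notation, and the noise-prediction form $\epsilon_\theta(x, \sigma)$ of the denoiser, are assumptions made for illustration and are not taken from the snippet above. Wherever the projection is unique:

$$
\mathrm{dist}_K(x) = \min_{x_0 \in K} \lVert x - x_0 \rVert, \qquad
\nabla\, \tfrac{1}{2}\,\mathrm{dist}_K(x)^2 = x - \mathrm{proj}_K(x).
$$

So if a denoiser approximately projects, in the sense that $x - \sigma\,\epsilon_\theta(x, \sigma) \approx \mathrm{proj}_K(x)$, then $\sigma\,\epsilon_\theta(x, \sigma) \approx \nabla\, \tfrac{1}{2}\,\mathrm{dist}_K(x)^2$, and a step against the predicted noise is an approximate gradient step on the squared distance.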

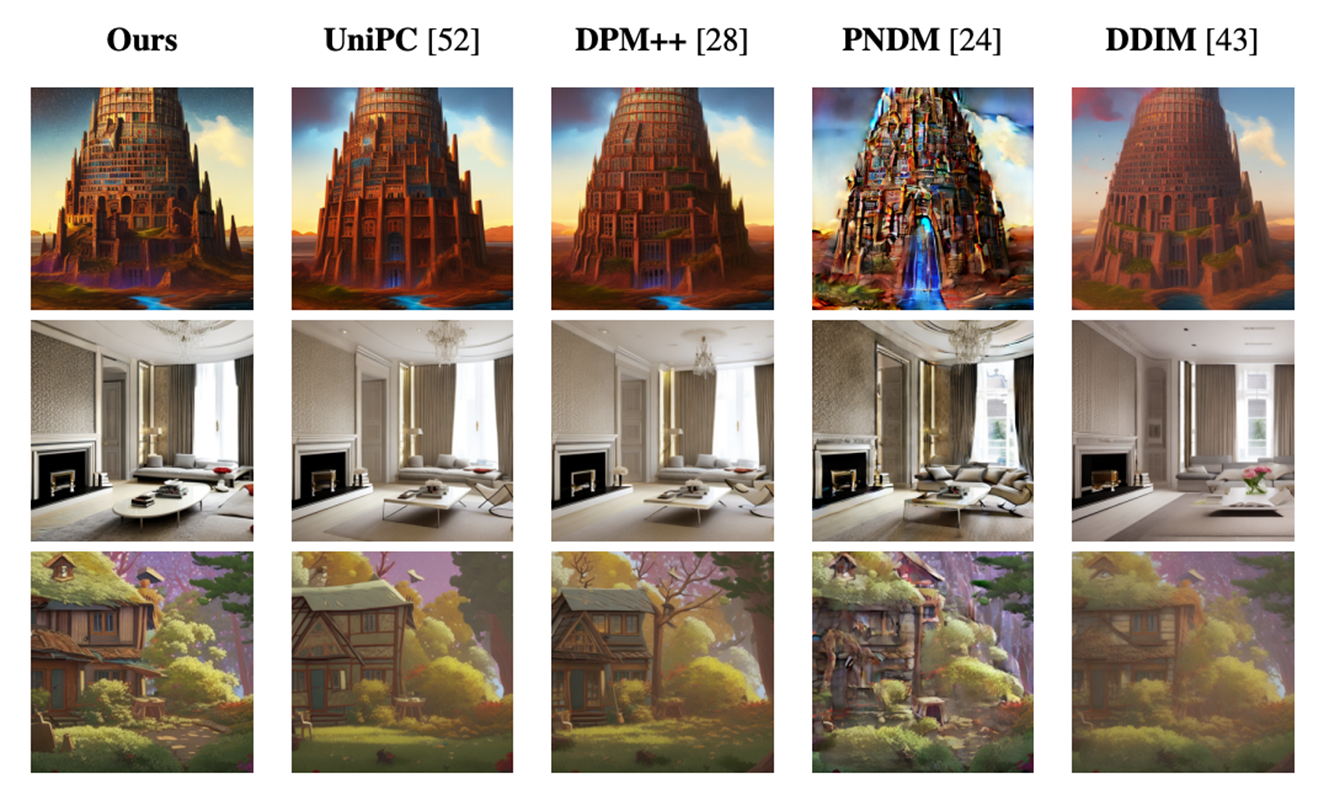

Interpreting And Improving Diffusion Models From An Optimization

In this paper, the authors offer a novel perspective by interpreting denoising diffusion models as an approximation of gradient descent applied to the Euclidean distance function. Motivation: given commonly used diffusion training and sampling algorithms, is there a deterministic model that motivates the same algorithms? Can we make reasonable assumptions on the learned neural network model to analyze the performance of the sampling algorithm?

Sampling from diffusion models: samplers for diffusion models started with probabilistic methods (e.g. [13]) that formed the reverse process by conditioning on the denoiser output at each step. This paper interprets denoising diffusion models as gradient descent on Euclidean distance, analyzes DDIM's convergence, and introduces a new gradient estimation sampler that achieves state-of-the-art FID scores with fewer function evaluations.

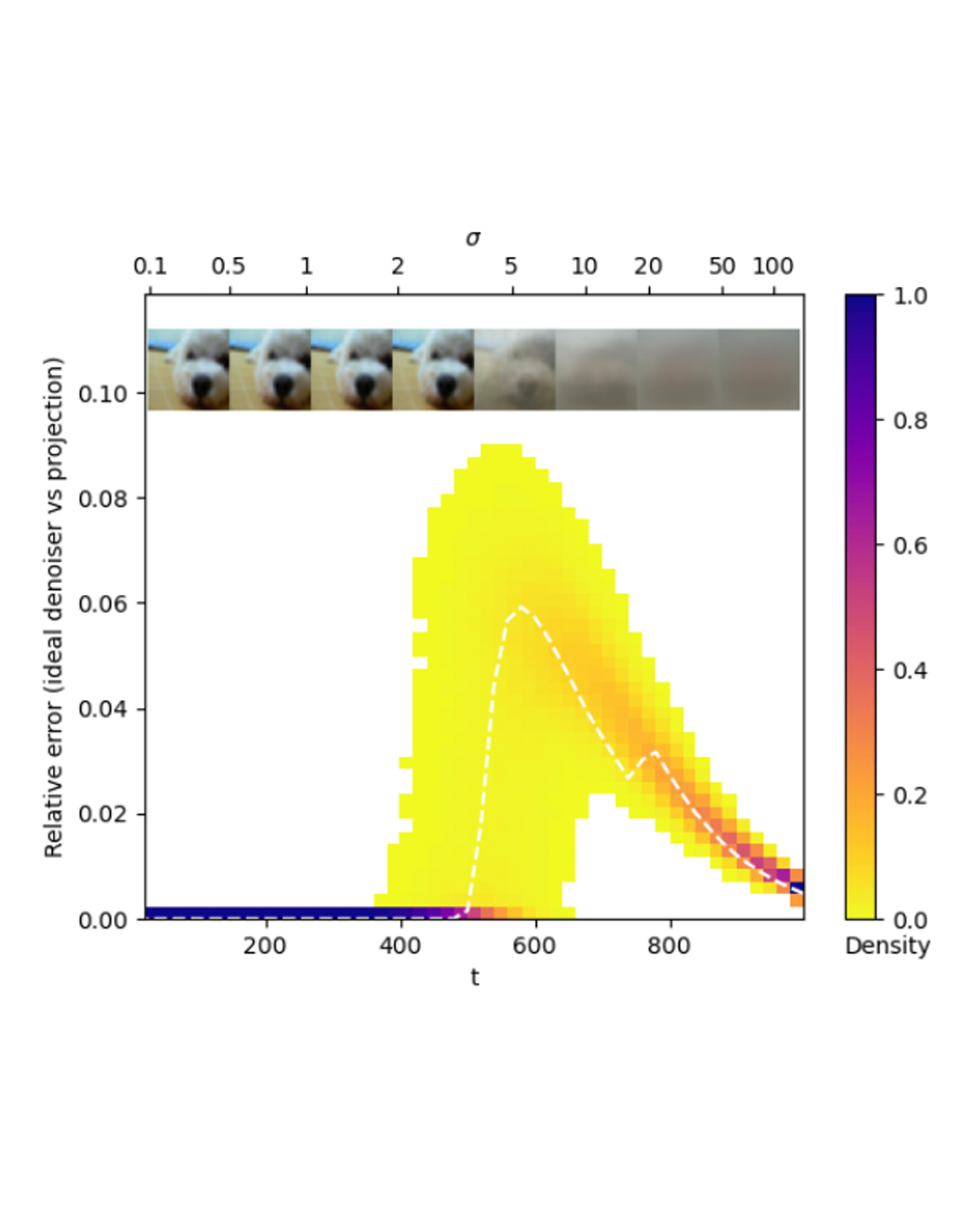

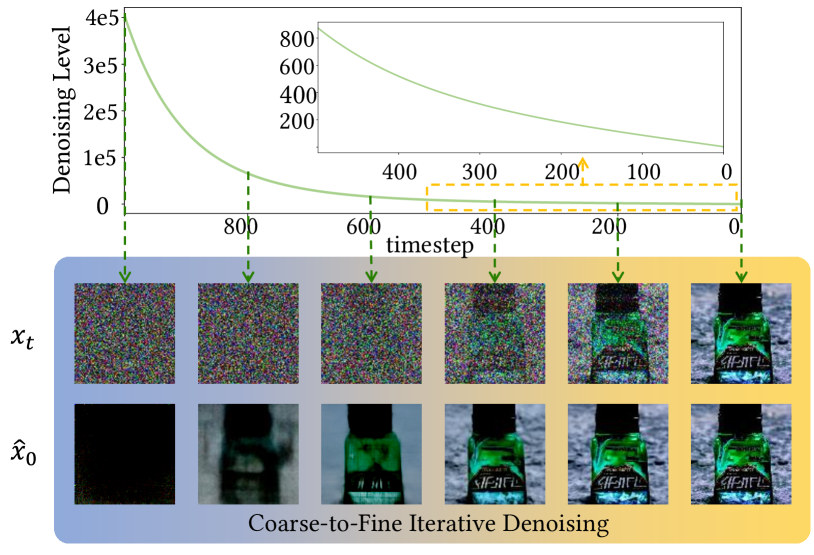

Interpreting And Improving Diffusion Models From An Optimization

Outline of "Interpreting and improving diffusion models from an optimization perspective": 1 Introduction (denoising approximates projection; diffusion as distance minimization). 2 Background: 2.1 Denoising diffusion models (denoisers; ideal denoiser; sampling); 2.2 Distance and projection. 3 Denoising as approximate projection.

Diffusion models are presented as the reversal of a stochastic process that corrupts clean data with increasing levels of random noise (Sohl-Dickstein et al., 2015; Ho et al., 2020).
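The idea of sampling as distance minimization can be tried numerically on a toy problem. The sketch below is not the authors' code. It assumes a four-point dataset, the noise model `x = x0 + sigma * eps`, the closed-form ideal denoiser for a finite dataset (a softmax-weighted average of the data points), and a deterministic DDIM-style update `x <- x - (sigma_cur - sigma_next) * eps`. The function names, the schedule, and the starting point are all hypothetical choices made for illustration.

```python
import numpy as np


def ideal_denoiser(x, sigma, data):
    """Noise estimate for a finite dataset, assuming x = x0 + sigma * eps."""
    sq_dists = np.sum((data - x) ** 2, axis=1)
    logits = -sq_dists / (2.0 * sigma ** 2)
    weights = np.exp(logits - logits.max())  # numerically stable softmax
    weights /= weights.sum()
    x0_hat = weights @ data                  # posterior mean of the clean point
    return (x - x0_hat) / sigma


def dist_to_data(x, data):
    """Euclidean distance from x to the nearest data point."""
    return float(np.sqrt(np.min(np.sum((data - x) ** 2, axis=1))))


def ddim_sample(x, sigmas, data):
    """Deterministic DDIM-style loop; records the distance after every step."""
    dists = [dist_to_data(x, data)]
    for sigma_cur, sigma_next in zip(sigmas[:-1], sigmas[1:]):
        eps = ideal_denoiser(x, sigma_cur, data)
        # Step against the predicted noise, scaled by the drop in noise level.
        x = x - (sigma_cur - sigma_next) * eps
        dists.append(dist_to_data(x, data))
    return x, dists


# Hypothetical toy setup: four data points at the corners of a square.
data = np.array([[1.0, 1.0], [1.0, -1.0], [-1.0, 1.0], [-1.0, -1.0]])
sigmas = np.geomspace(10.0, 0.01, 30)  # decreasing noise levels
x_init = np.array([8.0, 5.0])          # starting point far from the data

x_final, dists = ddim_sample(x_init, sigmas, data)
print("initial distance:", dists[0])
print("final distance:  ", dists[-1])
```

With a learned denoiser the same loop applies, with `ideal_denoiser` swapped for the network's noise prediction; the recorded distances then give a direct way to see how projection error in the denoiser affects the final sample.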

Figure 5 From Interpreting And Improving Diffusion Models Using The
