System Design: What Is a Cache?

Caching means storing frequently accessed data in a location that is quick and easy to reach. Its purpose is to improve a system's performance and efficiency by cutting the time it takes to access that data. In practice, a copy of the data is kept in a temporary storage location so that future requests for it can be served more quickly. Think of it as a high-speed ...
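As a small illustration of serving repeated requests from a stored copy, the sketch below uses Python's standard `functools.lru_cache`. The function `load_profile` and the `calls` counter are hypothetical names invented for this example; they stand in for any expensive data access.

```python
from functools import lru_cache

# Hypothetical counter: tracks how often the slow path actually runs.
calls = {"count": 0}


@lru_cache(maxsize=128)
def load_profile(user_id: int) -> dict:
    """Hypothetical slow lookup; stands in for any expensive data access."""
    calls["count"] += 1
    return {"id": user_id, "name": f"user-{user_id}"}


load_profile(1)  # miss: the function body runs and the result is stored
load_profile(1)  # hit: the stored copy is returned, the body does not run
load_profile(2)  # miss: a different key

info = load_profile.cache_info()
print(info.hits, info.misses, calls["count"])
```

The second call for `user_id=1` never reaches the function body, which is the whole point of a cache: the repeated request is answered from the stored copy.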

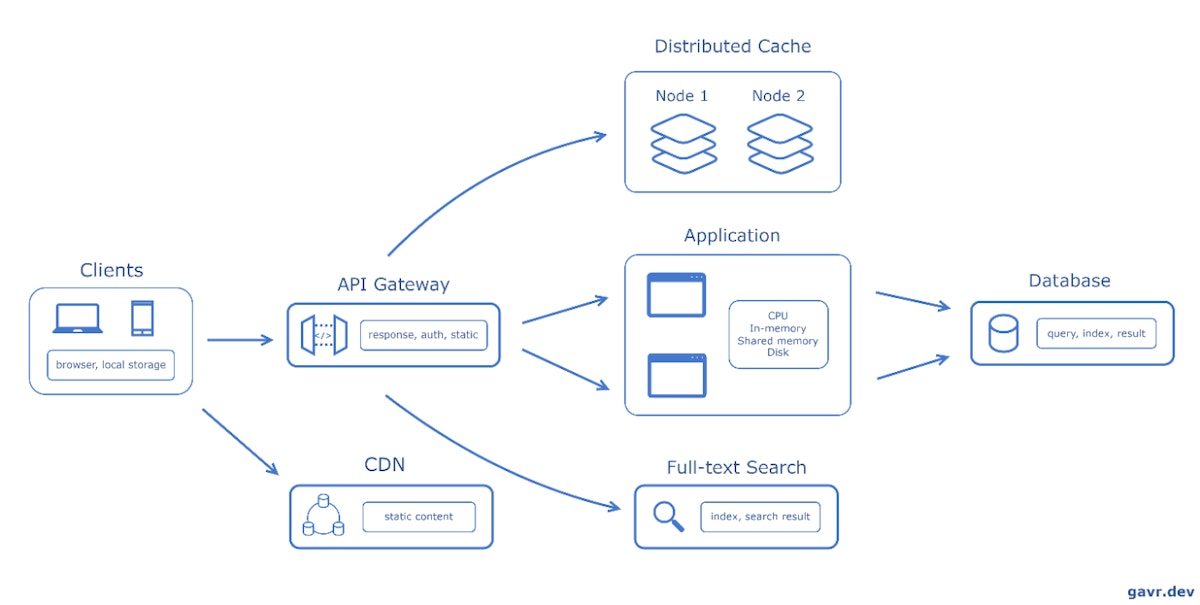

Caching is the process of storing copies of data in a high-speed storage layer (the cache) to reduce the time it takes to access that data compared with fetching it directly from primary storage (the database). The illustration below depicts how a cache operates on an abstract level.

Caching is one of the most fundamental techniques in system design for improving application performance, reducing latency, and managing system load. The idea is simple: instead of fetching data from a slower backend system (e.g., a database) every time it is needed, you store frequently accessed data in a faster medium called a cache. In a distributed system, this reduces latency and makes data retrieval more efficient. Because the cache is typically faster to access than the original data source, it can quickly serve future requests for the same data.
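The read path described above (check the cache first, fall back to the slower backend only on a miss) might be sketched as follows. This is a minimal sketch under stated assumptions, not a production design: `TTLCache`, `query_database`, `get_with_cache`, and the `DATABASE` dictionary are all hypothetical names, and the expiry time is an added assumption that reflects the cache being temporary storage.

```python
import time
from typing import Any, Callable, Dict, List, Optional, Tuple


class TTLCache:
    """Tiny in-memory cache whose entries expire after ttl_seconds."""

    def __init__(self, ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._ttl = ttl_seconds
        self._clock = clock  # injectable so expiry can be tested without sleeping
        self._entries: Dict[str, Tuple[float, Any]] = {}

    def get(self, key: str) -> Optional[Any]:
        entry = self._entries.get(key)
        if entry is None:
            return None
        expires_at, value = entry
        if self._clock() >= expires_at:
            del self._entries[key]  # stale copy: drop it and report a miss
            return None
        return value

    def set(self, key: str, value: Any) -> None:
        self._entries[key] = (self._clock() + self._ttl, value)


# Hypothetical backend: a dict standing in for a slower database.
DATABASE: Dict[str, str] = {"user:1": "Ada", "user:2": "Linus"}
db_reads: List[str] = []  # records every trip to the "database"


def query_database(key: str) -> Optional[str]:
    db_reads.append(key)
    return DATABASE.get(key)


def get_with_cache(cache: TTLCache, key: str) -> Optional[str]:
    value = cache.get(key)
    if value is not None:
        return value  # cache hit: the backend is not touched
    value = query_database(key)  # cache miss: go to the slower source
    if value is not None:
        cache.set(key, value)  # keep a copy for future requests
    return value
```

In this sketch a missing key is never cached, so every lookup for it reaches the backend. Whether to cache such negative results is a design choice that depends on the workload.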

In simple terms, a cache is a shortcut: it sits between the client (or application) and the main data source (such as a database) and keeps copies of frequently used data, so repeated requests are served quickly without hitting slower systems like databases or external APIs every time.

In system design interviews, caching comes up almost every time you need to handle high read traffic. Your database becomes the bottleneck, latency starts creeping up, and the interviewer is waiting for you to say the word: cache.

A shared (external) cache is a separate service (or a cluster) that caches data independently of any application instance, e.g. ElastiCache (Memcached, Redis). A local cache is quicker to access because it lives on the same machine as the application, so a lookup needs no network round trip.
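To make the local versus shared distinction concrete, here is a rough sketch in which each application instance checks its own local cache first, then a shared cache, and only then the data source. Everything here is hypothetical: `SharedCache` is an in-memory stand-in for an external service such as Redis or Memcached (no real client library is used), and `AppInstance` is an invented name for one running copy of the application.

```python
from typing import Dict, Optional


class SharedCache:
    """In-memory stand-in for an external cache service (assumption:
    a real deployment would reach this over the network)."""

    def __init__(self) -> None:
        self._data: Dict[str, str] = {}
        self.lookups = 0  # counts simulated network round trips

    def get(self, key: str) -> Optional[str]:
        self.lookups += 1
        return self._data.get(key)

    def set(self, key: str, value: str) -> None:
        self._data[key] = value


class AppInstance:
    """One application instance with its own private local cache."""

    def __init__(self, shared: SharedCache, source: Dict[str, str]) -> None:
        self._local: Dict[str, str] = {}  # visible to this instance only
        self._shared = shared             # visible to every instance
        self._source = source             # stands in for the database
        self.source_reads = 0

    def get(self, key: str) -> Optional[str]:
        if key in self._local:
            return self._local[key]       # fastest path: same process
        value = self._shared.get(key)     # next: the shared cache
        if value is None:
            value = self._source.get(key)  # last resort: the data source
            self.source_reads += 1
            if value is not None:
                self._shared.set(key, value)
        if value is not None:
            self._local[key] = value
        return value
```

With two instances sharing one `SharedCache`, the first instance to request a key pays for the source read, and the second instance finds the value in the shared cache even though its own local cache started empty. The sketch ignores invalidation, which is where local caches get harder: each instance may hold a stale copy after the source changes.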


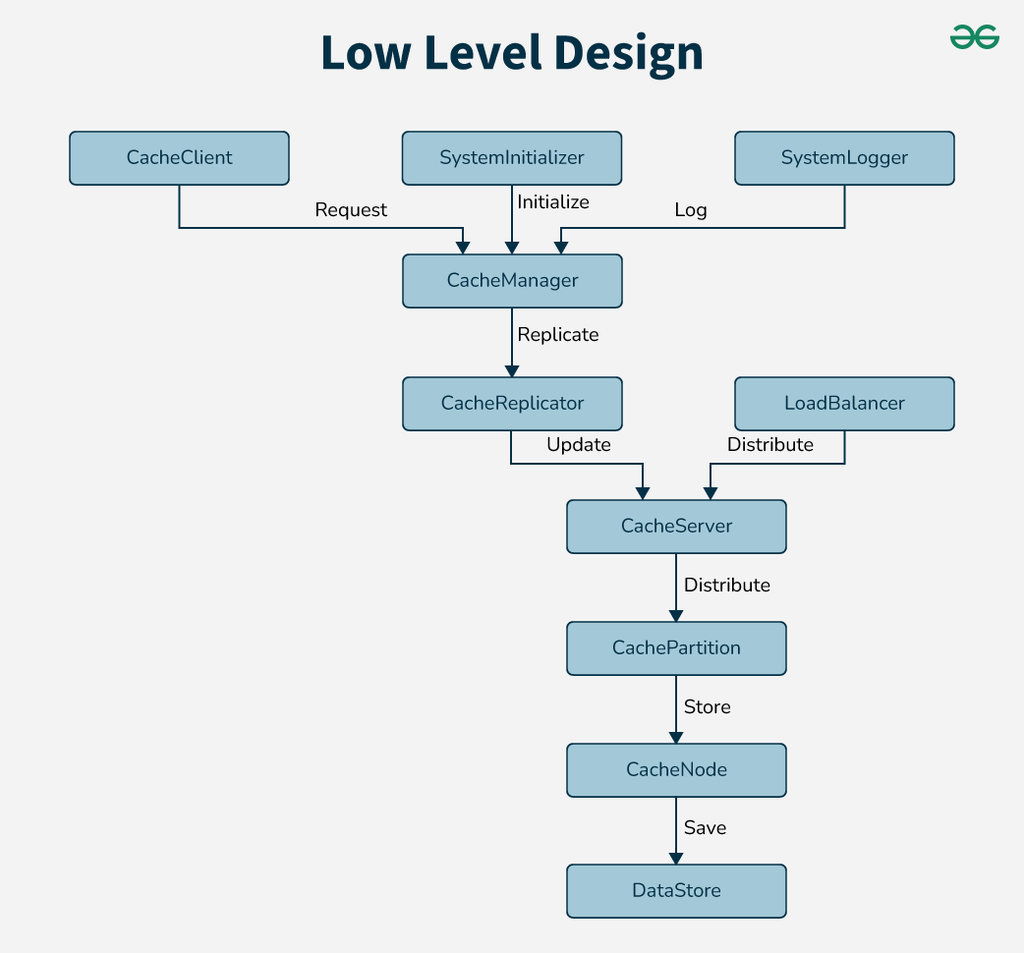

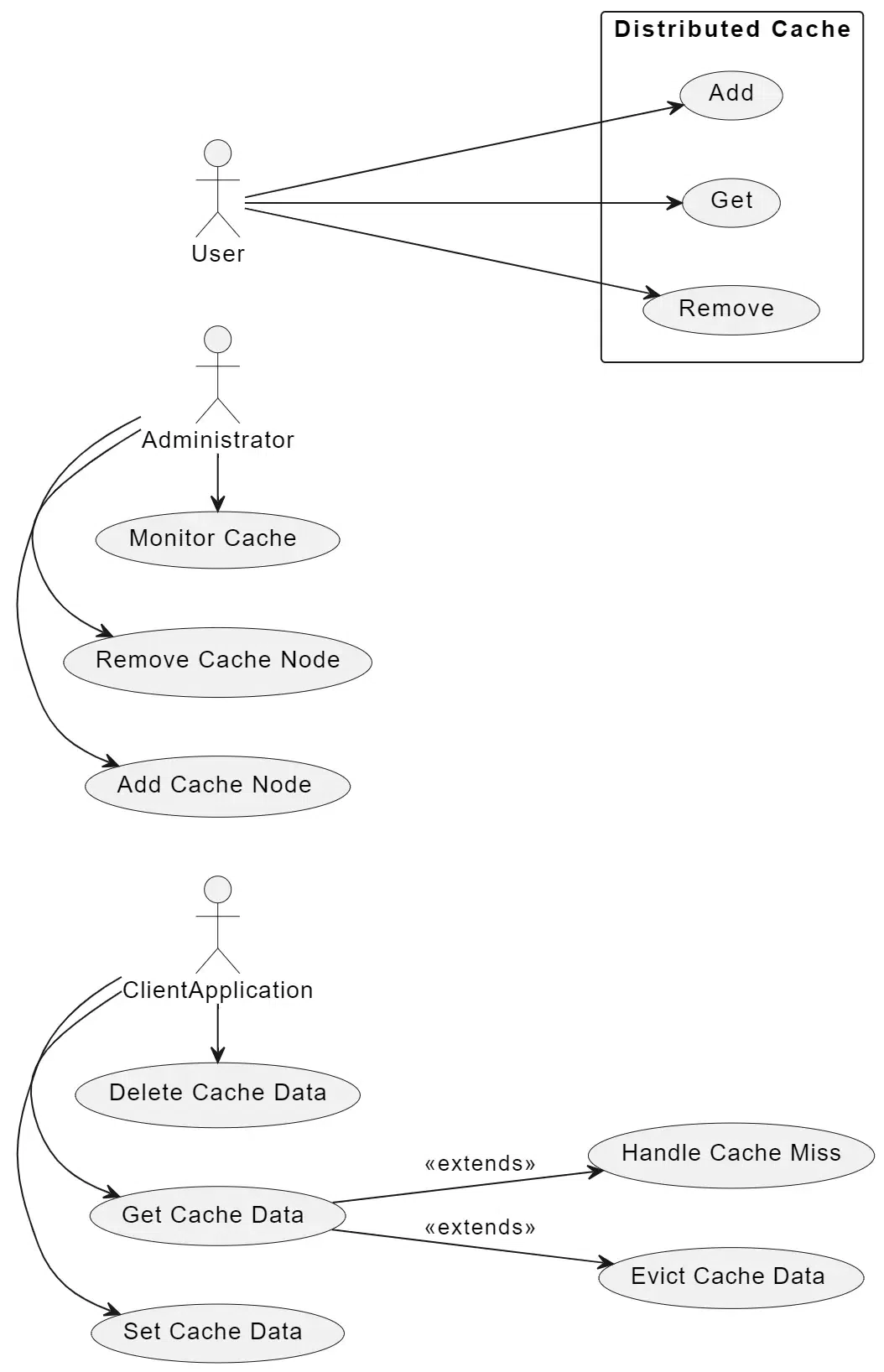
