System Design Caching Eric S Note

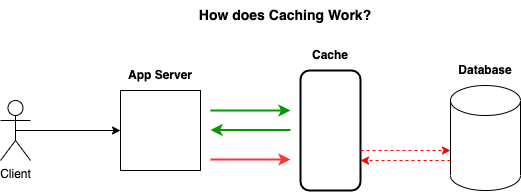

To increase the number of writes the database can handle, the cache is updated before the database. This helps maintain high consistency between your cache and your database.

Caching is a concept that involves storing frequently accessed data in a location that is easily and quickly accessible. The purpose of caching is to improve the performance and efficiency of a system by reducing the time it takes to access that data.
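The write ordering described here can be sketched in a few lines of Python. This is only an illustration under stated assumptions: `WriteFirstStore`, its two dictionaries, and the key `"user:1"` are hypothetical stand-ins for a real cache and database, and the write to the database is done synchronously, which the original text does not specify.

```python
class WriteFirstStore:
    """Hypothetical store that updates the cache first, then the database."""

    def __init__(self):
        self.cache = {}     # stands in for a fast in-memory cache
        self.database = {}  # stands in for a slower persistent store

    def write(self, key, value):
        self.cache[key] = value     # 1. update the cache first
        self.database[key] = value  # 2. then persist to the database (assumed synchronous)

    def read(self, key):
        # Serve from the cache when possible; fall back to the database.
        if key in self.cache:
            return self.cache[key]
        value = self.database.get(key)
        if value is not None:
            self.cache[key] = value
        return value


store = WriteFirstStore()
store.write("user:1", {"name": "Ada"})
print(store.read("user:1"))
```

Because every write touches both stores in the same call, a read that follows a write sees the same value in the cache as in the database. A variant that defers the database write would trade some of that consistency for write throughput.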

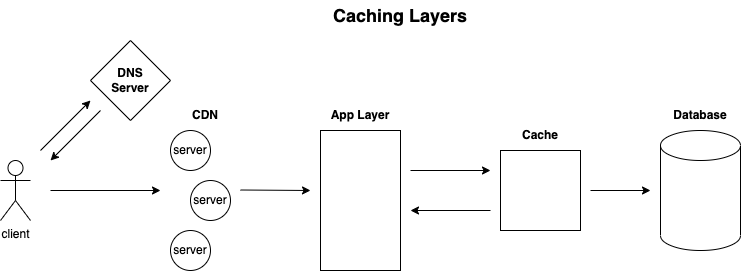

In this post, I'll break down the core caching concepts, architectural choices, and management policies every engineer should understand when designing scalable systems. It takes a step-by-step approach to applying caching at different layers of the system to enhance scalability, reliability, and availability.

Why caching? It improves application performance and saves money in the long term. On speed and performance: reading from memory is much faster than reading from disk, roughly 50~200x faster. On cost: you can serve the same amount of traffic with fewer resources, or, put the other way, serve many more requests per second with the same resources.

Note: this approach keeps a cache directly on the application server. Each time a request is made to the service, the node quickly returns local, cached data if it exists. If not, the...
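A minimal sketch of a cache kept directly on the application server might look like the following. The source text is cut off before it describes what happens on a miss, so fetching from a backing function and storing the result locally is an assumption here. `LocalCacheNode` and `slow_origin` are hypothetical names used only for illustration.

```python
class LocalCacheNode:
    """Hypothetical application node with an in-process cache."""

    def __init__(self, fetch_from_origin):
        self._fetch = fetch_from_origin  # assumed fallback used on a cache miss
        self._local = {}                 # cache held in this node's own memory
        self.hits = 0
        self.misses = 0

    def get(self, key):
        if key in self._local:
            self.hits += 1
            return self._local[key]      # fast path: local, cached data
        self.misses += 1
        value = self._fetch(key)         # assumed miss path: go to the origin
        self._local[key] = value
        return value


def slow_origin(key):
    # Stand-in for a database or downstream service call.
    return key.upper()


node = LocalCacheNode(slow_origin)
first = node.get("profile")   # miss: fetched from the origin
second = node.get("profile")  # hit: served from local memory
print(first, second, node.hits, node.misses)
```

Each node holds its own copy in this design, so the hit rate depends on the same node seeing repeated requests for the same keys.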

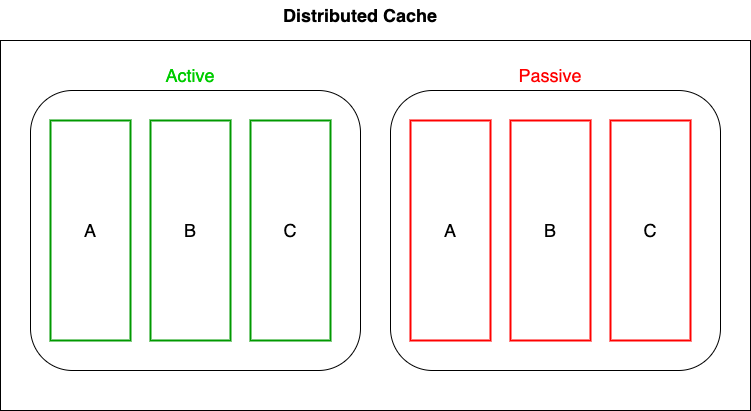

Caching is the process of storing things closer to you, or in a faster storage system, so that you can get them very quickly. Caching happens at different places.

With a cache-aside pattern, the application code maintains the cache using a caching strategy like read-through and write-through. A side cache adds complexity because the application has to manage the lifetime of cached data, while an inline cache pulls this logic out of the application code.

Caching is a vital system design strategy that helps improve application performance, reduce latency, and minimize the load on backend systems. Understanding different caching strategies, policies, and distributed caching solutions is essential when designing scalable, high-performance systems.

In system design interviews, caching comes up almost every time you need to handle high read traffic. Your database becomes the bottleneck, latency starts creeping up, and the interviewer is waiting for you to say the word: cache.
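To make the cache-aside idea concrete, here is a small sketch in which the application itself manages the lifetime of cached data. Everything in it is an assumption for illustration: the `CacheAside` class, the time-to-live (TTL) expiry policy, the invalidate-on-update choice, and the plain dictionary standing in for a database are not taken from the original text.

```python
import time


class CacheAside:
    """Hypothetical cache-aside helper: the application owns cache lifetime."""

    def __init__(self, database, ttl_seconds=60.0, clock=time.monotonic):
        self.database = database  # dict standing in for a real database
        self.ttl = ttl_seconds    # assumed expiry policy (time to live)
        self.clock = clock        # injectable clock, handy for testing
        self._entries = {}        # key -> (value, expires_at)

    def get(self, key):
        entry = self._entries.get(key)
        if entry is not None:
            value, expires_at = entry
            if self.clock() < expires_at:
                return value            # fresh hit
            del self._entries[key]      # expired: drop and reload below
        value = self.database.get(key)  # miss: the application reads the database
        if value is not None:
            self._entries[key] = (value, self.clock() + self.ttl)
        return value

    def update(self, key, value):
        self.database[key] = value      # write to the database
        self._entries.pop(key, None)    # invalidate the cached copy


db = {"greeting": "hello"}
cache = CacheAside(db, ttl_seconds=30.0)
print(cache.get("greeting"))  # loaded from the database, then cached
cache.update("greeting", "hi")
print(cache.get("greeting"))  # reloaded after invalidation
```

The expiry and invalidation logic lives in application code here, which is the extra complexity a side cache brings. An inline cache would hide the same steps behind the cache's own interface.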

