Swish: The Activation Function That Might Replace ReLU

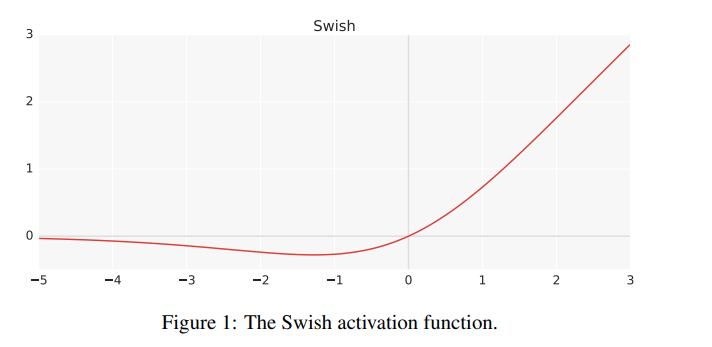

As the machine learning community keeps working on identifying complex patterns in datasets for better results, Google researchers proposed the Swish activation function as an alternative to the popular ReLU activation function. In the words of the paper: "In this work, we propose a new activation function, named Swish, which is simply f(x) = x · sigmoid(x). Our experiments show that Swish tends to work better than ReLU on deeper models across a number of challenging datasets."
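To make the formula concrete, here is a minimal scalar sketch in Python comparing ReLU with Swish. The optional `beta` parameter reflects the later x · sigmoid(βx) form of Swish and reduces to the formula above when beta = 1; the helper names and the closed-form derivative `swish_grad` are illustrative choices, not code from the paper.

```python
import math


def sigmoid(x: float) -> float:
    """Logistic sigmoid, written to avoid overflow in math.exp for large |x|."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)  # safe: x < 0, so exp(x) lies in (0, 1)
    return z / (1.0 + z)


def relu(x: float) -> float:
    """ReLU: passes positive inputs through and outputs zero otherwise."""
    return max(0.0, x)


def swish(x: float, beta: float = 1.0) -> float:
    """Swish: f(x) = x * sigmoid(beta * x); beta = 1 gives x * sigmoid(x)."""
    return x * sigmoid(beta * x)


def swish_grad(x: float, beta: float = 1.0) -> float:
    """Analytic derivative: sigmoid(bx) + b*x*sigmoid(bx)*(1 - sigmoid(bx))."""
    s = sigmoid(beta * x)
    return s + beta * x * s * (1.0 - s)


if __name__ == "__main__":
    # Print ReLU and Swish side by side for a few sample inputs.
    for x in (-4.0, -2.0, -1.0, 0.0, 1.0, 2.0, 4.0):
        print(f"x={x:+.1f}  relu={relu(x):.4f}  swish={swish(x):+.4f}  "
              f"swish'={swish_grad(x):+.4f}")
```

In the printed table, Swish stays slightly negative for negative inputs where ReLU outputs exactly zero, and its derivative is non-zero there instead of dropping to zero.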

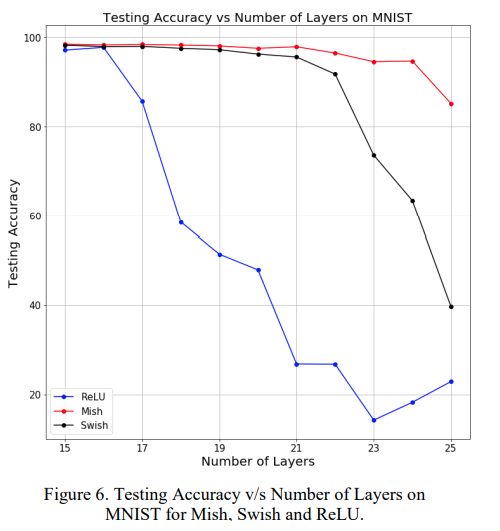

Comparison of ReLU, GELU, Swish and Mish (Chadrick Blog).

The paper proposes Swish as a novel activation function discovered with a neural architecture search (NAS) style approach, and reports clear performance gains over standard activation functions such as ReLU and Leaky ReLU: for example, simply replacing ReLU units with Swish improves top-1 ImageNet classification accuracy by 0.9% for Mobile NASNet-A and 0.6% for Inception-ResNet-v2. Developed by Google researchers to address the limitations of ReLU, Swish is also described as offering a smoother optimization landscape. SiLU (Swish with β = 1) can be used in transformers, though it is less common there than GELU (Gaussian Error Linear Unit), the activation widely used in models like BERT and GPT.
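The activations named in this paragraph can be compared side by side with a small scalar sketch. It assumes plain Python floats rather than tensors; the Leaky ReLU slope of 0.01 is an assumed default, and `gelu_tanh` is the commonly used tanh approximation of GELU, included only for comparison with the exact erf-based form.

```python
import math


def sigmoid(x: float) -> float:
    # Numerically stable logistic function.
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


def relu(x: float) -> float:
    return max(0.0, x)


def leaky_relu(x: float, slope: float = 0.01) -> float:
    # Small non-zero slope for negative inputs; 0.01 is an assumed default.
    return x if x >= 0 else slope * x


def gelu(x: float) -> float:
    # Exact GELU: x * Phi(x), where Phi is the standard normal CDF.
    return 0.5 * x * (1.0 + math.erf(x / math.sqrt(2.0)))


def gelu_tanh(x: float) -> float:
    # Widely used tanh approximation of GELU.
    c = math.sqrt(2.0 / math.pi)
    return 0.5 * x * (1.0 + math.tanh(c * (x + 0.044715 * x ** 3)))


def silu(x: float) -> float:
    # SiLU is Swish with beta = 1: x * sigmoid(x).
    return x * sigmoid(x)


ACTIVATIONS = {
    "relu": relu,
    "leaky_relu": leaky_relu,
    "gelu": gelu,
    "gelu_tanh": gelu_tanh,
    "silu": silu,
}

if __name__ == "__main__":
    xs = [-3.0, -1.0, -0.5, 0.0, 0.5, 1.0, 3.0]
    for name, fn in ACTIVATIONS.items():
        print(f"{name:>10}: " + "  ".join(f"{fn(x):+.3f}" for x in xs))
```

The exact and approximate GELU agree closely on moderate inputs, and both, like SiLU, dip slightly below zero for negative inputs, whereas ReLU clamps them to zero and Leaky ReLU scales them linearly.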

In 2017, after performing analysis on ImageNet data, researchers from Google reported that using this function as an activation function in artificial neural networks improves performance compared to ReLU and sigmoid functions [1].

In this post, we'll explore the most important activation functions in modern deep learning, understand why certain choices dominate in specific architectures, and examine empirical data on their performance, sparsity patterns, and computational costs.

To let neural networks model nonlinear data, researchers introduced the activation function: a function that takes a scalar as its input and maps it to another numerical value.

Activation function redesign: aims and experimental framing. At first glance, the Mish paper centers on proposing a new activation, and it appears to be driven by both theoretical intuition and broad empirical tests. One detail that stood out to me is the dual focus on mathematical behavior and benchmarking: the paper frames Mish as a non-monotonic activation that leverages softplus and…
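As a rough illustration of the non-monotonic behavior and the sparsity contrast mentioned above, here is a minimal scalar sketch. Mish is written in its standard form x · tanh(softplus(x)); the brute-force grid search, the hypothetical `grid_minimum` and `sparsity` helpers, and the choice to measure sparsity as the fraction of exactly-zero outputs are illustrative assumptions, not anything taken from the papers.

```python
import math


def softplus(x: float) -> float:
    # log(1 + e^x), rearranged as max(x, 0) + log1p(e^-|x|) to avoid overflow.
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def sigmoid(x: float) -> float:
    # Numerically stable logistic function.
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


def relu(x: float) -> float:
    return max(0.0, x)


def swish(x: float) -> float:
    return x * sigmoid(x)


def mish(x: float) -> float:
    # Mish: x * tanh(softplus(x)).
    return x * math.tanh(softplus(x))


def grid_minimum(fn, lo: float = -5.0, hi: float = 0.0, steps: int = 50_000):
    """Hypothetical helper: brute-force minimum of fn on [lo, hi] as (x, f(x))."""
    best_x, best_f = lo, fn(lo)
    for i in range(1, steps + 1):
        x = lo + (hi - lo) * i / steps
        f = fn(x)
        if f < best_f:
            best_x, best_f = x, f
    return best_x, best_f


def sparsity(fn, xs) -> float:
    """Hypothetical helper: fraction of outputs that are exactly zero."""
    return sum(1 for x in xs if fn(x) == 0.0) / len(xs)


if __name__ == "__main__":
    # A negative minimum means the function first falls, then rises again.
    for name, fn in (("swish", swish), ("mish", mish)):
        x_min, f_min = grid_minimum(fn)
        print(f"{name}: minimum {f_min:.4f} at x = {x_min:.3f}")
    xs = [i / 100 for i in range(-500, 501)]
    for name, fn in (("relu", relu), ("swish", swish), ("mish", mish)):
        print(f"{name}: {sparsity(fn, xs):.1%} exact zeros")
```

Both Swish and Mish dip to a small negative minimum just left of zero and then climb back toward zero, which is what non-monotonic means here. On a symmetric input grid, ReLU zeroes out roughly half of the inputs, while Swish and Mish return exactly zero only at x = 0.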
