Swin Transformer V2 Paper Explained

Swin Transformer V2 explores large-scale models in computer vision. The paper tackles three major issues in training and applying large vision models: training instability, resolution gaps between pre-training and fine-tuning, and hunger for labelled data.

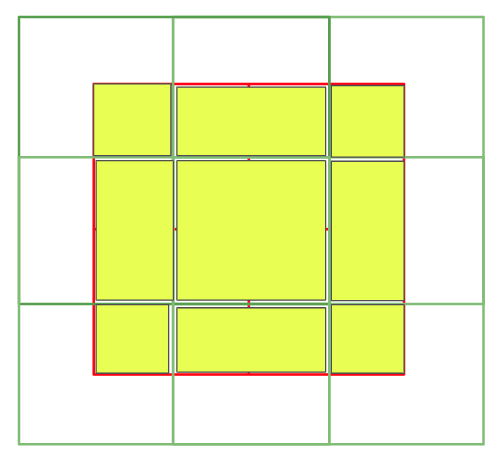

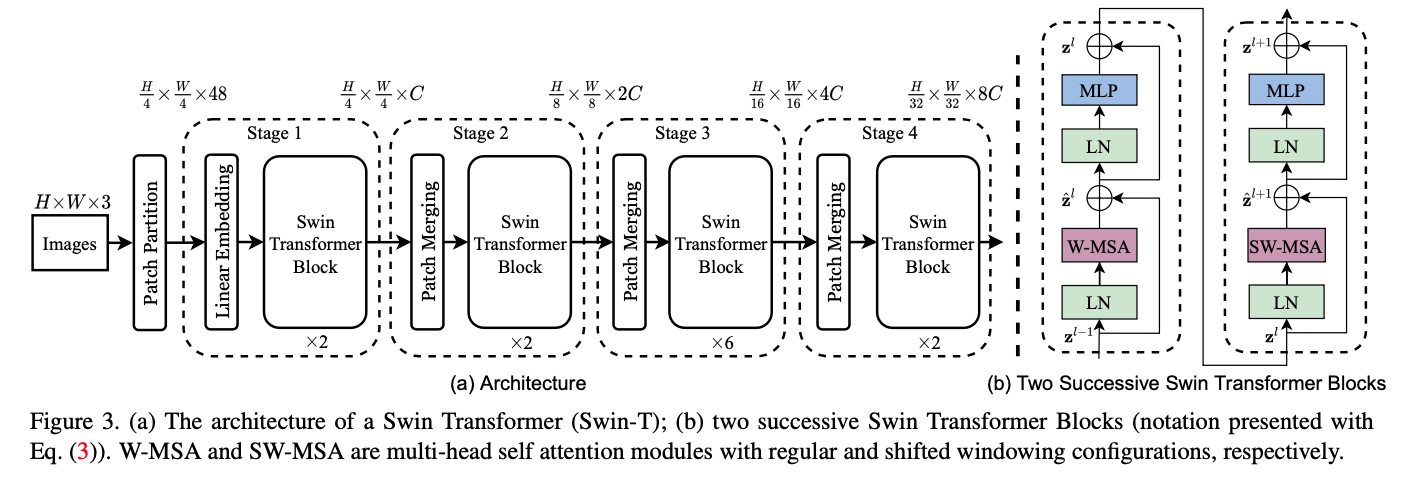

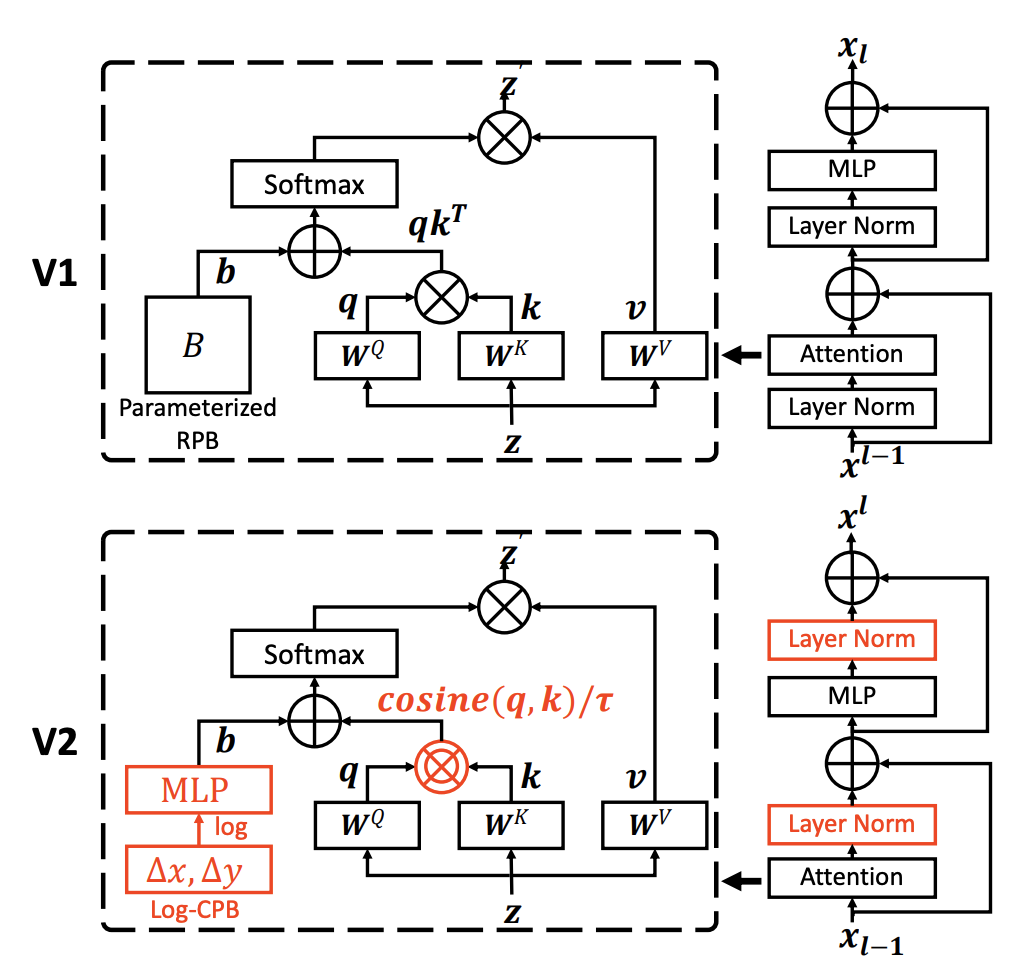

Introduced in the 2021 paper "Swin Transformer: Hierarchical Vision Transformer using Shifted Windows", the Swin Transformer architecture optimizes for latency and performance using a shifted-window (as opposed to sliding-window) approach. Because self-attention is computed only within local windows, this reduces the number of operations required. Swin Transformer V2 modifies this architecture to make it more scalable and achieve better performance: the V2 paper presents techniques for scaling Swin Transformer up to 3 billion parameters and making it capable of training with images of up to 1,536×1,536 resolution.

One example applies a Swin Transformer model to image classification on the CIFAR-10 dataset without fine-tuning. The model accurately predicted common classes such as "cat", "ship", "frog" and "automobile", but made some wrong predictions, such as confusing "airplane" with "bird".
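The shifted-window idea described above can be illustrated with a small NumPy sketch. This is not the paper's implementation: the function names `window_partition`, `cyclic_shift`, and `attention_pairs` are chosen here for illustration, the feature map is a toy 8×8 grid, and the attention masking that real implementations apply to shifted windows is left out.

```python
import numpy as np

def window_partition(x, window_size):
    """Split an (H, W, C) feature map into non-overlapping square windows.

    Returns an array of shape (num_windows, window_size, window_size, C).
    """
    H, W, C = x.shape
    assert H % window_size == 0 and W % window_size == 0
    x = x.reshape(H // window_size, window_size, W // window_size, window_size, C)
    # Move the two window-grid axes next to each other, then flatten them.
    return x.transpose(0, 2, 1, 3, 4).reshape(-1, window_size, window_size, C)

def cyclic_shift(x, shift):
    """Roll the feature map up and to the left by `shift` positions."""
    return np.roll(x, shift=(-shift, -shift), axis=(0, 1))

def attention_pairs(H, W, window_size=None):
    """Count query-key pairs for global attention or windowed attention."""
    tokens = H * W
    if window_size is None:
        return tokens * tokens              # every token attends to every token
    per_window = window_size * window_size
    num_windows = tokens // per_window
    return num_windows * per_window ** 2    # attention stays inside each window

# Toy 8x8 single-channel map where each value is its own flat index.
feature_map = np.arange(8 * 8).reshape(8, 8, 1)
window_size = 4

# Regular windows, as used in one block.
regular = window_partition(feature_map, window_size)
# Shifted windows, as used in the next block: shift by half a window first.
shifted = window_partition(cyclic_shift(feature_map, window_size // 2), window_size)

print(regular.shape)                        # (4, 4, 4, 1)
print(regular[0, :, :, 0])                  # top-left 4x4 block of the map
print(shifted[0, :, :, 0])                  # block that straddles four regular windows
print(attention_pairs(8, 8), attention_pairs(8, 8, window_size))
```

In this toy case the first shifted window covers rows and columns 2 to 5 of the original map, so it mixes tokens from all four regular windows. That is how alternating regular and shifted blocks lets information flow across window boundaries while each attention call stays local.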

The Swin Transformer addresses these challenges through several key innovations. The model constructs a hierarchical feature map, allowing it to process visual information at multiple scales. Using the V2 techniques together with self-supervised pre-training, the authors successfully train a strong 3-billion-parameter Swin Transformer model and effectively transfer it to various vision tasks involving high-resolution images or windows, achieving state-of-the-art accuracy on a variety of benchmarks. This showcases the effectiveness of the Swin Transformer and SwinV2 models in object detection tasks, demonstrating their superior performance on high-resolution images and dense prediction tasks.
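The hierarchical feature map mentioned above is built by repeatedly merging neighbouring patches, so each stage has half the spatial resolution and twice the channels of the one before. The following is a minimal NumPy sketch under stated assumptions: `patch_merging` is an illustrative name, the projection weights are random stand-ins for learned parameters, the input size is a toy 16×16×8 map, and the normalization layer used in real implementations is omitted.

```python
import numpy as np

def patch_merging(x, weight):
    """Merge each 2x2 group of neighbouring patches into one patch.

    x:      feature map of shape (H, W, C) with even H and W
    weight: projection matrix of shape (4C, 2C), a stand-in for a learned layer
    Returns a feature map of shape (H/2, W/2, 2C).
    """
    H, W, C = x.shape
    assert H % 2 == 0 and W % 2 == 0
    assert weight.shape == (4 * C, 2 * C)
    # Gather the four members of every 2x2 neighbourhood.
    x0 = x[0::2, 0::2]
    x1 = x[1::2, 0::2]
    x2 = x[0::2, 1::2]
    x3 = x[1::2, 1::2]
    merged = np.concatenate([x0, x1, x2, x3], axis=-1)   # (H/2, W/2, 4C)
    return merged @ weight                                # (H/2, W/2, 2C)

rng = np.random.default_rng(0)
x = rng.standard_normal((16, 16, 8))     # toy stage-1 feature map

stage_shapes = [x.shape]
for _ in range(3):                        # three merges give four stages
    C = x.shape[-1]
    weight = rng.standard_normal((4 * C, 2 * C)) / np.sqrt(4 * C)
    x = patch_merging(x, weight)
    stage_shapes.append(x.shape)

print(stage_shapes)
```

The resulting pyramid of progressively coarser maps is what dense prediction heads, such as object detectors, typically consume, which is one reason a hierarchical backbone suits those tasks.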
