SWE-bench Verified AI Benchmark

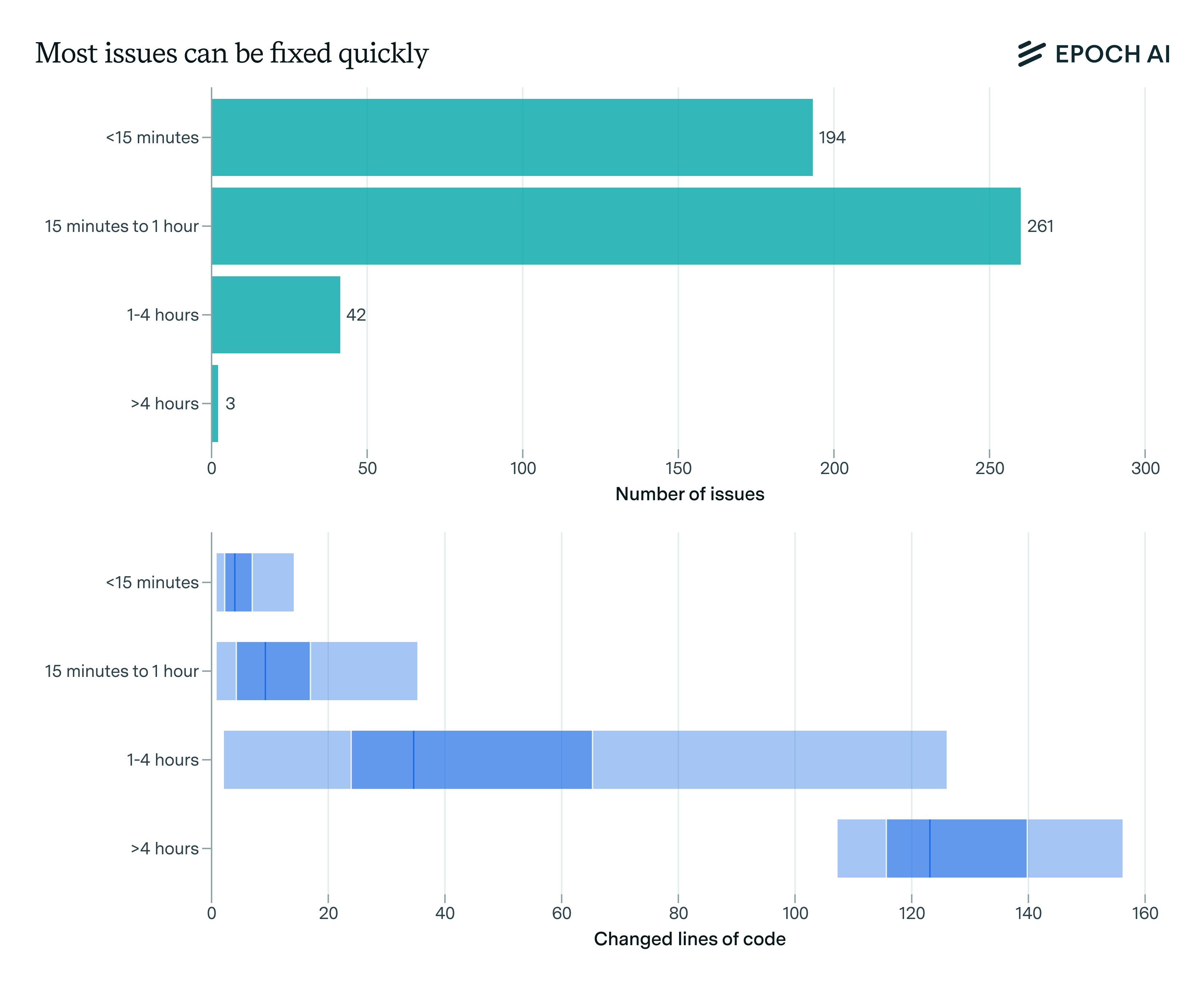

SWE-bench Verified (Epoch AI)

SWE-bench Verified is a human-filtered subset of 500 instances from SWE-bench, created in collaboration with OpenAI. Human annotators reviewed each instance to ensure that the problem description is clear, the test patch is correct, and the task is solvable given the available information.

In short, SWE-bench Verified is a set of 500 software engineering problems drawn from real GitHub issues and validated by human annotators. It evaluates how well language models resolve real-world coding issues by generating patches for Python codebases.
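
A model's answer to a SWE-bench task is a patch. The sketch below uses Python's standard `difflib` module to turn a hypothetical before/after edit of one file into a unified diff, the same textual format that `git diff` produces. The file name `stats.py` and its contents are invented for illustration. We are assuming, rather than quoting the official harness, that predictions are submitted as plain git-style diff text.

```python
import difflib

# Hypothetical "before" and "after" versions of one file in a repository.
# In SWE-bench the model sees the real repository and the issue text; here
# we fake the model's edit just to show what the resulting patch looks like.
before = [
    "def mean(values):\n",
    "    return sum(values) / len(values)\n",
]
after = [
    "def mean(values):\n",
    "    if not values:\n",
    "        raise ValueError(\"mean() of empty sequence\")\n",
    "    return sum(values) / len(values)\n",
]

# unified_diff yields the lines of a unified diff: file headers, a hunk
# header, then context (' '), removed ('-') and added ('+') lines.
patch = "".join(
    difflib.unified_diff(before, after, fromfile="a/stats.py", tofile="b/stats.py")
)
print(patch)
```

A diff like this is compact and can be applied mechanically to the original checkout, which is what makes automated test-based grading possible.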

What Does SWE-bench Verified Actually Measure?

OpenAI released SWE-bench Verified in August 2024 as a human-validated subset of the original SWE-bench dataset, intended to evaluate more reliably how well AI models solve real-world software issues. Human experts reviewed and validated every task in the split, yielding a curated set of 500 high-quality test cases. Each task requires the model to understand an existing codebase, write a patch, and pass the project's test suite. The benchmark is often described as the gold standard for evaluating AI coding agents on real-world software engineering tasks.

Epoch AI's evaluations of this benchmark use 484 of the 500 samples, namely those it has validated on its own infrastructure.
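
"Passing the test suite" can be made concrete with a small sketch. As we understand the SWE-bench convention, each task carries two lists of tests: FAIL_TO_PASS tests that the fix must make pass, and PASS_TO_PASS tests that must keep passing. The `TaskResult` class, `is_resolved`, `resolve_rate`, and the instance IDs below are hypothetical names for illustration, not the official harness API. The optional `subset` argument shows why a score computed on Epoch AI's 484 validated samples need not equal one computed on all 500.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Set


@dataclass
class TaskResult:
    """Hypothetical record of one task's test outcomes after applying a model patch."""
    instance_id: str
    fail_to_pass: List[str]  # tests the fix is supposed to make pass
    pass_to_pass: List[str]  # tests that passed before and must still pass
    passed: Set[str] = field(default_factory=set)  # test IDs that passed in this run


def is_resolved(r: TaskResult) -> bool:
    # Resolved only if every target test now passes AND nothing regressed.
    return all(t in r.passed for t in r.fail_to_pass) and all(
        t in r.passed for t in r.pass_to_pass
    )


def resolve_rate(results: List[TaskResult], subset: Optional[Set[str]] = None) -> float:
    # Fraction of tasks resolved; `subset` restricts the denominator to a chosen
    # set of instance IDs (e.g. a validated 484-task subset instead of all 500).
    pool = [r for r in results if subset is None or r.instance_id in subset]
    if not pool:
        raise ValueError("no results in the selected subset")
    return sum(is_resolved(r) for r in pool) / len(pool)


# Usage with made-up instance IDs and test names.
results = [
    TaskResult("demo__repo-1", ["test_fix"], ["test_old"], {"test_fix", "test_old"}),
    TaskResult("demo__repo-2", ["test_fix"], ["test_old"], {"test_fix"}),  # regression
    TaskResult("demo__repo-3", ["test_fix"], [], set()),  # fix not achieved
]
print(resolve_rate(results))                                          # 0.333...
print(resolve_rate(results, subset={"demo__repo-1", "demo__repo-2"}))  # 0.5
```

Requiring both lists to pass is what separates a genuine fix from a patch that makes the target test green while breaking something else.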

GitHub openagentsinc SWE-bench Verified: Let's Pwn Everyone On SWE

SWE-bench is a benchmark for evaluating large language models on real-world software issues collected from GitHub. Given a codebase and an issue, a language model must generate a patch that resolves the described problem. It is the most widely cited benchmark for AI coding agents; it measures whether a model can resolve real GitHub issues by producing working patches.

The SWE-bench team also maintains SWE-agent, an open-source, easily extensible agent that it describes as the state of the art on SWE-bench.

A 2026 comparison of SWE-bench Verified results covers three models from two organizations in the coding category. The top performers are Claude Opus 4.5 (80.9%), Claude Opus 4.6 (80.8%), and Gemini 3.1 Pro (80.6%).
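
Because the top three SWE-bench Verified scores are so close, a quick calculation helps put them in context. The sketch treats each task as an independent pass/fail trial and computes the binomial standard error of a proportion, `sqrt(p * (1 - p) / n)`. It assumes n = 500 tasks. Note that 80.9% of 500 is 404.5, not a whole number, so at least that figure was probably averaged over runs or computed on a different denominator; treat n as an assumption.

```python
import math

# Top SWE-bench Verified scores quoted above, as fractions of tasks resolved.
scores = {"Claude Opus 4.5": 0.809, "Claude Opus 4.6": 0.808, "Gemini 3.1 Pro": 0.806}
n = 500  # assumption: each score is a proportion over all 500 tasks


def binomial_se(p: float, n: int) -> float:
    # Standard error of a proportion when each task is an independent pass/fail trial.
    return math.sqrt(p * (1 - p) / n)


spread = max(scores.values()) - min(scores.values())
se = binomial_se(max(scores.values()), n)
print(f"spread = {spread * 100:.1f} pp, standard error = {se * 100:.2f} pp")
```

Under these assumptions the 0.3-percentage-point spread is far smaller than one standard error (roughly 1.8 points), so the three results are hard to tell apart statistically.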
