Supervised Fine-Tuning (SFT) Learn Code Camp

Understanding and Using Supervised Fine-Tuning (SFT) for Language Models

Supervised fine-tuning is a training strategy in which a pre-trained language model is further refined on a carefully curated dataset of prompt-response pairs. The primary goal is to “teach” the model to generate appropriate, contextually relevant, and human-aligned responses.

Supervised fine-tuning (SFT) lets you train an OpenAI model with examples for your specific use case. The result is a customized model that more reliably produces your desired style and content. You provide examples of correct responses to prompts to guide the model’s behavior.
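To make the idea of prompt-response pairs concrete, here is a minimal sketch of how such a dataset might be assembled. It assumes the chat-style JSONL layout (one JSON object per line, each with a `messages` list of `role`/`content` entries) that hosted fine-tuning services commonly accept. The helper names (`to_chat_example`, `validate_example`, `to_jsonl`) and the sample pairs are hypothetical and exist only for illustration.

```python
import json

ALLOWED_ROLES = {"system", "user", "assistant"}


def to_chat_example(prompt, response, system=None):
    """Build one training record in the chat-style 'messages' layout."""
    messages = []
    if system:
        messages.append({"role": "system", "content": system})
    messages.append({"role": "user", "content": prompt})
    # The assistant message is the labeled target the model should learn.
    messages.append({"role": "assistant", "content": response})
    return {"messages": messages}


def validate_example(record):
    """Return a list of problems found in one record (empty if none)."""
    messages = record.get("messages")
    if not isinstance(messages, list) or not messages:
        return ["record has no 'messages' list"]
    problems = []
    for i, message in enumerate(messages):
        if message.get("role") not in ALLOWED_ROLES:
            problems.append(f"message {i}: unexpected role {message.get('role')!r}")
        content = message.get("content")
        if not isinstance(content, str) or not content.strip():
            problems.append(f"message {i}: empty or non-string content")
    if messages[-1].get("role") != "assistant":
        problems.append("last message should be the assistant's target response")
    return problems


def to_jsonl(records):
    """Serialize records as JSON Lines: one JSON object per line."""
    return "\n".join(json.dumps(r, ensure_ascii=False) for r in records)


# Hypothetical prompt-response pairs for a support-style use case.
PAIRS = [
    ("How do I reset my password?",
     "Open Settings, choose Security, then select Reset password."),
    ("Can I change my billing date?",
     "Yes. Go to Billing, select your plan, and pick a new billing date."),
]

records = [
    to_chat_example(p, r, system="You are a concise support assistant.")
    for p, r in PAIRS
]
jsonl_text = to_jsonl(records)
print(jsonl_text)
```

Validating each record before upload is a cheap way to catch empty targets or a missing assistant turn, both of which would leave the model with nothing to imitate for that example.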

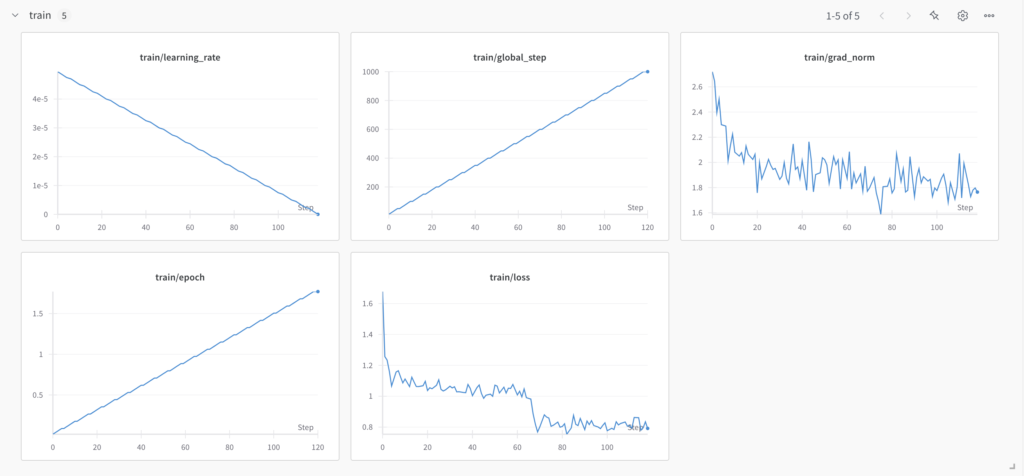

The term "supervised" refers to the use of labeled training data to guide the fine-tuning process. In SFT, the model learns to map specific inputs to desired outputs by minimizing prediction errors on a labeled dataset.

Nowadays, it is far more common to fine-tune language models on a broad range of tasks simultaneously, a method known as supervised fine-tuning (SFT). This process helps models become more versatile and capable of handling diverse use cases.

This object specifies hyperparameters and settings for the fine-tuning process. It is tailored to supervised fine-tuning tasks, often used for adapting language models to specific tasks or ...

In this post, I will discuss supervised fine-tuning (SFT), one of the main post-training methods for large language models (LLMs). I will cover the basic idea of SFT, how it works, when ...
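The notion of "minimizing prediction errors on a labeled dataset" mentioned above is usually realized as a token-level cross-entropy loss. The sketch below computes that loss in plain Python for a toy three-token vocabulary. It assumes the common convention of masking out prompt tokens so that only response tokens contribute to the loss; the source does not state this detail, and the function names (`log_softmax`, `sft_loss`) and numbers are illustrative only.

```python
import math


def log_softmax(logits):
    """Numerically stable log-softmax over one vector of logits."""
    m = max(logits)
    log_z = m + math.log(sum(math.exp(x - m) for x in logits))
    return [x - log_z for x in logits]


def sft_loss(logits_per_position, target_ids, loss_mask):
    """Mean negative log-likelihood over positions where loss_mask is 1.

    Assumption: positions with mask 0 (for example, prompt tokens) are
    skipped, so only the labeled response tokens drive the loss.
    """
    total, count = 0.0, 0
    for logits, target, keep in zip(logits_per_position, target_ids, loss_mask):
        if not keep:
            continue
        total += -log_softmax(logits)[target]
        count += 1
    if count == 0:
        raise ValueError("loss_mask selects no tokens")
    return total / count


# Toy example with a vocabulary of three tokens (ids 0, 1, 2).
logits = [
    [2.0, 0.1, 0.1],  # prompt position, masked out of the loss
    [0.0, 0.0, 0.0],  # response position, uniform prediction
    [0.0, 5.0, 0.0],  # response position, confident in token 1
]
targets = [0, 2, 1]
mask = [0, 1, 1]

loss = sft_loss(logits, targets, mask)
print(f"masked SFT loss: {loss:.4f}")
```

A training loop would compute this quantity from the model's actual logits and update the weights to reduce it; real frameworks do the same thing with tensors and automatic differentiation.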

Supervised fine-tuning (SFT) is a critical process for adapting pre-trained language models to specific tasks. It involves training the model on a task-specific dataset with labeled examples. For a detailed guide on SFT, including key steps and best practices, see the supervised fine-tuning section of the TRL documentation.

In this paper, we present a comprehensive empirical study on supervised fine-tuning of small-size LLMs and compare our findings with existing research on this topic.

SFT stabilizes the model’s output format, enabling subsequent reinforcement learning (RL) to achieve its performance gains. They show that SFT is necessary for LLM training and will benefit the RL stage.

In this article, we explore the crucial process of supervised fine-tuning (SFT) for large language models (LLMs).
