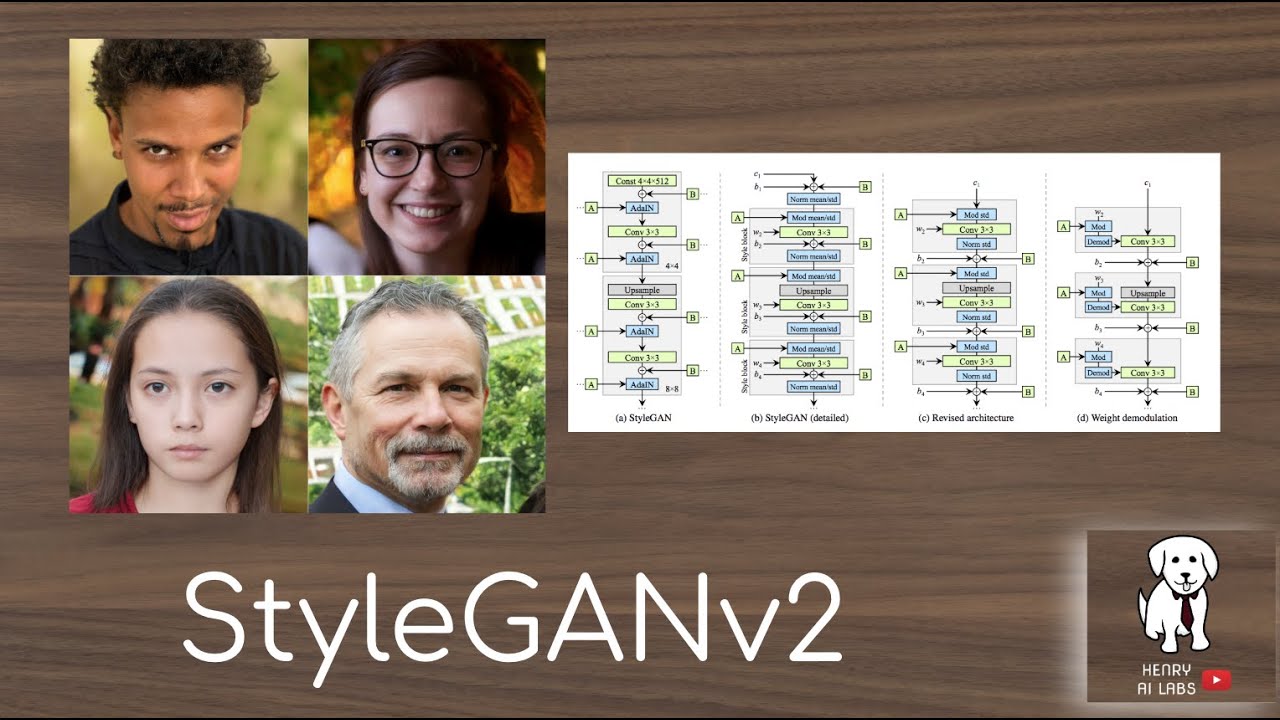

StyleGANv2 Explained

StyleGAN3 Clearly Explained: Alias-Free StyleGAN3

Abstract (from the StyleGAN2 paper, "Analyzing and Improving the Image Quality of StyleGAN"): The style-based GAN architecture (StyleGAN) yields state-of-the-art results in data-driven unconditional generative image modeling. We expose and analyze several of its characteristic artifacts, and propose changes in both model architecture and training methods to address them.

In this post we implement StyleGAN, and in the third and final post we will implement StyleGAN2. You can find the StyleGAN paper here. Note: when I refer to "the authors", I mean Karras et al., the authors of the StyleGAN paper.

StyleGANv2 Explained (YouTube)

StyleGAN is a generative model that produces highly realistic images by controlling image features at multiple levels, from overall structure to fine details like texture and lighting. Below you can see both the traditional generator and the style-based generator (the new StyleGAN network).

StyleGAN is based on ProGAN, from the paper "Progressive Growing of GANs for Improved Quality, Stability, and Variation". All three papers are from the same authors at NVIDIA AI. We will go through the StyleGAN2 project, look at its goals, loss function, and results, then break down its components and explain each one.

Shown in this new demo, the resulting model allows the user to create and fluidly explore portraits. This is done by separately controlling the content, identity, expression, and pose of the subject. Users can also modify the artistic style, color scheme, and appearance of brush strokes.
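
The multi-level control described above is often illustrated with style mixing: one style vector drives the early (coarse) layers and another drives the later (fine) layers. The following is a minimal sketch of that idea only, not the authors' implementation. The layer count, the crossover index, the vector size, and the function name `mix_styles` are all hypothetical values chosen for illustration.

```python
import random

NUM_LAYERS = 8   # hypothetical number of style inputs in the generator
CROSSOVER = 3    # hypothetical layer index where the style source switches
W_DIM = 4        # toy style-vector size, chosen only for illustration


def mix_styles(w_coarse, w_fine, num_layers=NUM_LAYERS, crossover=CROSSOVER):
    """Return one style vector per layer.

    Layers before `crossover` receive `w_coarse` (overall structure);
    the remaining layers receive `w_fine` (texture, lighting, fine detail).
    """
    if len(w_coarse) != len(w_fine):
        raise ValueError("style vectors must have the same length")
    if not 0 <= crossover <= num_layers:
        raise ValueError("crossover must lie between 0 and num_layers")
    return [
        list(w_coarse) if layer < crossover else list(w_fine)
        for layer in range(num_layers)
    ]


random.seed(0)
w_a = [random.gauss(0, 1) for _ in range(W_DIM)]  # stand-in style for image A
w_b = [random.gauss(0, 1) for _ in range(W_DIM)]  # stand-in style for image B

per_layer_styles = mix_styles(w_a, w_b)
```

In a real generator each entry of `per_layer_styles` would modulate one layer, so moving `CROSSOVER` changes how much of the result is inherited from each source.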

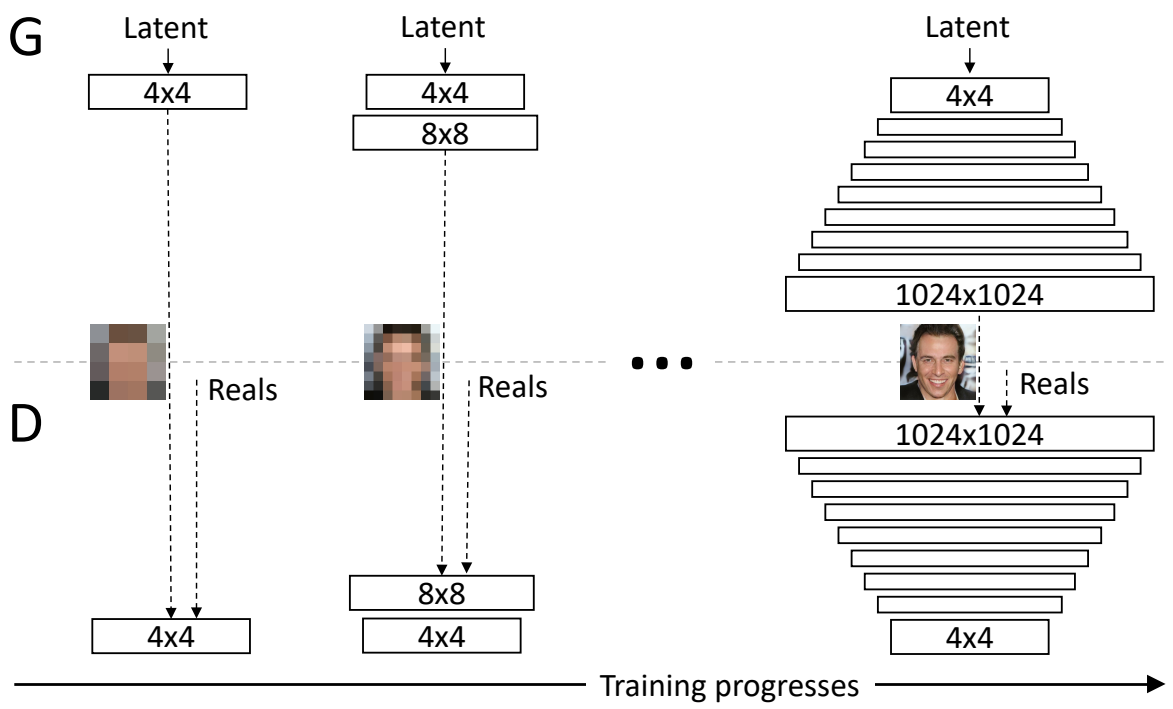

In This Article I Will Compare and Show You the Evolution of StyleGAN

StyleGAN2 addresses specific artifacts and limitations of the original StyleGAN while improving overall image quality and network invertibility. For installation instructions, see Installation and Setup. For specific usage of core components, see Core Components.

StyleGAN is designed as a combination of Progressive GAN with neural style transfer. [18] The key architectural choice of StyleGAN 1 is a progressive growth mechanism, similar to Progressive GAN. Each generated image starts as a constant [note 1] array and is repeatedly passed through style blocks.

Generative adversarial networks (GANs) are a class of generative models that produce realistic images, but a vanilla GAN gives you no control over how the images are generated. A vanilla GAN has two networks: (i) a generator and (ii) a discriminator.

My notes on the overview of StyleGANv2 by Henry AI Labs: I recently started learning more about generative deep learning models for some potential projects and decided to check out this video by Henry AI Labs covering StyleGANv2. Below are some notes I took while watching.
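
To make the style-block description above more concrete (a constant input that is repeatedly modulated by styles), here is a minimal sketch of the adaptive instance normalization (AdaIN) step used in the original StyleGAN. It is a toy under stated assumptions, not the authors' code: real style blocks also contain convolutions, noise inputs, and upsampling, all omitted here. The helper `style_to_scale_bias`, the toy sizes, and the random stand-in for the learned constant are hypothetical.

```python
import math
import random


def adain(feature_maps, scales, biases, eps=1e-8):
    """Normalize each channel to zero mean and unit std, then apply a
    per-channel scale and bias that come from the style."""
    styled = []
    for fmap, scale, bias in zip(feature_maps, scales, biases):
        values = [v for row in fmap for v in row]
        mean = sum(values) / len(values)
        std = math.sqrt(sum((v - mean) ** 2 for v in values) / len(values))
        styled.append(
            [[scale * (v - mean) / (std + eps) + bias for v in row] for row in fmap]
        )
    return styled


def style_to_scale_bias(w, weight, offset):
    """Hypothetical affine layer: maps a style vector w to 2*C numbers,
    interpreted as C scales followed by C biases."""
    style = [sum(a * x for a, x in zip(row, w)) + b for row, b in zip(weight, offset)]
    half = len(style) // 2
    return style[:half], style[half:]


random.seed(0)
CHANNELS, SIZE, W_DIM = 3, 4, 5  # toy sizes, chosen only for illustration

# Stand-in for the constant input (learned in a real model, random here).
const_input = [
    [[random.gauss(0, 1) for _ in range(SIZE)] for _ in range(SIZE)]
    for _ in range(CHANNELS)
]
w = [random.gauss(0, 1) for _ in range(W_DIM)]
weight = [[random.gauss(0, 1) for _ in range(W_DIM)] for _ in range(2 * CHANNELS)]
offset = [1.0] * CHANNELS + [0.0] * CHANNELS  # start scales near 1, biases near 0

scales, biases = style_to_scale_bias(w, weight, offset)
output = adain(const_input, scales, biases)
```

After this step each channel's statistics are set by the style rather than by the input, which is why swapping `w` changes the look of the output while the starting array stays constant.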


The Structure of the StyleGAN2 Generator
