Stacking In Machine Learning Geeksforgeeks

Stacking In Machine Learning Amit Singh Rajawat Tealfeed

Stacking is an ensemble learning technique in which a final model, known as the "stacked model", combines the predictions of multiple base models. The goal is to create a stronger model by combining several different models. Three common techniques for improving the performance of ML models are boosting, bagging, and stacking, and each can be implemented in Python.
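To make the difference between the three techniques concrete, here is a minimal sketch, assuming scikit-learn is installed. The synthetic dataset, the choice of base learners, and all hyperparameters are illustrative assumptions rather than anything prescribed by the source. Bagging fits many copies of one learner on bootstrap samples, boosting fits learners sequentially so each corrects its predecessors, and stacking trains a meta model on the predictions of different learners.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import (
    BaggingClassifier,
    GradientBoostingClassifier,
    StackingClassifier,
)
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsClassifier
from sklearn.tree import DecisionTreeClassifier

# Synthetic binary classification data (an assumption for illustration only).
X, y = make_classification(n_samples=1000, n_features=20, n_informative=8, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.25, random_state=0)

models = {
    # Bagging: copies of one learner, each fit on a bootstrap sample, then combined by voting.
    "bagging": BaggingClassifier(DecisionTreeClassifier(), n_estimators=50, random_state=0),
    # Boosting: learners fit one after another, each focusing on the previous errors.
    "boosting": GradientBoostingClassifier(random_state=0),
    # Stacking: different learners whose predictions become inputs to a meta model.
    "stacking": StackingClassifier(
        estimators=[
            ("tree", DecisionTreeClassifier(random_state=0)),
            ("knn", KNeighborsClassifier()),
        ],
        final_estimator=LogisticRegression(),
    ),
}

scores = {}
for name, model in models.items():
    model.fit(X_train, y_train)
    scores[name] = model.score(X_test, y_test)  # mean accuracy on held-out data
    print(f"{name}: test accuracy = {scores[name]:.3f}")
```

Which method scores best depends on the data and the chosen learners; the sketch does not imply that any one of them always wins.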

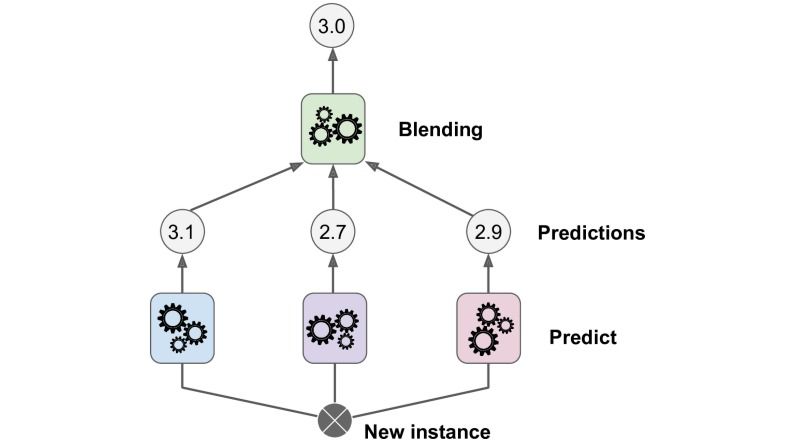

Stacking's defining characteristic is its hierarchical structure: the predictions of the level-0 models (the base models) become the input features of the level-1 model (the meta model), so the meta model learns how best to combine the outputs of the other models. This article introduces the concept of stacking and its benefits, and shows how to implement a stacking classifier on a classification dataset using Python.

This chapter focuses on using H2O for model stacking. H2O provides an efficient implementation of stacking: it can stack existing base learners, stack the models produced by a grid search, and run an automated machine learning (AutoML) search whose results include stacked ensembles.

Stacking is a machine learning technique in which the predictions of multiple models are combined to create a new model that can make better predictions than any individual model. In stacking, we first train several base models (also called first-layer models) on the training data and then train a meta model on their predictions.
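The level-0 to level-1 hierarchy can be built by hand, which makes the data flow explicit. The sketch below assumes scikit-learn and NumPy are available; the breast cancer dataset and the three base models are illustrative choices, not the source's. Level-1 training features come from out-of-fold predictions so that the meta model never learns from a base model's prediction on a row that base model was fitted on.

```python
import numpy as np
from sklearn.datasets import load_breast_cancer
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_predict, train_test_split
from sklearn.naive_bayes import GaussianNB
from sklearn.neighbors import KNeighborsClassifier
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.tree import DecisionTreeClassifier

X, y = load_breast_cancer(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=42, stratify=y
)

# Level 0: a few diverse base models (this particular set is illustrative).
base_models = [
    DecisionTreeClassifier(max_depth=4, random_state=42),
    make_pipeline(StandardScaler(), KNeighborsClassifier(n_neighbors=7)),
    GaussianNB(),
]

# Level-1 training features: out-of-fold positive-class probabilities,
# one column per base model (cross_val_predict fits clones, not the originals).
meta_train = np.column_stack([
    cross_val_predict(m, X_train, y_train, cv=5, method="predict_proba")[:, 1]
    for m in base_models
])

# Level-1 test features: refit each base model on the full training set first.
meta_test = np.column_stack([
    m.fit(X_train, y_train).predict_proba(X_test)[:, 1] for m in base_models
])

# Level 1: the meta model learns how to weight the base models' predictions.
meta_model = LogisticRegression()
meta_model.fit(meta_train, y_train)
stacked_accuracy = meta_model.score(meta_test, y_test)
print(f"stacked test accuracy: {stacked_accuracy:.3f}")
```

scikit-learn's `StackingClassifier` automates these same steps (out-of-fold predictions for the meta model, then refitting the base models on all training data).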

Stacking in machine learning is an ensemble technique that combines multiple models by arranging them in layers. It uses two levels of models: a base layer of base models, which are trained on the original data, and a meta layer containing a meta model, which is trained on the base models' predictions. In this section, we'll dive into the principles and mechanics of stacking, the types of models used, the benefits and challenges associated with the technique, and when it is most beneficial to apply it in your machine learning projects.

Stacking, also known as stacked ensembles or stacked generalization, is a powerful ensemble learning strategy that has gained significant attention in recent years. In this article, we will explore the concept of stacking in machine learning, its benefits, its implementation, and best practices.
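The base layer and meta layer map directly onto scikit-learn's `StackingClassifier`: `estimators` is the base layer and `final_estimator` is the meta layer. The following is a minimal sketch under the assumption that scikit-learn is installed; the dataset, the random forest and SVM base models, and the logistic regression meta model are illustrative choices. It compares each base model alone with the stacked model using 5-fold cross-validation.

```python
from sklearn.datasets import load_breast_cancer
from sklearn.ensemble import RandomForestClassifier, StackingClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

X, y = load_breast_cancer(return_X_y=True)

# Base layer: heterogeneous models, given names so they can be inspected later.
base_layer = [
    ("rf", RandomForestClassifier(n_estimators=100, random_state=0)),
    ("svc", make_pipeline(StandardScaler(), SVC(probability=True, random_state=0))),
]

# Meta layer: a simple linear model trained on the base layer's out-of-fold outputs.
stack = StackingClassifier(
    estimators=base_layer,
    final_estimator=LogisticRegression(),
    cv=5,                          # folds used to build the meta layer's training features
    stack_method="predict_proba",  # pass class probabilities rather than hard labels
)

# Mean 5-fold CV accuracy for each base model alone and for the stacked model.
results = {name: cross_val_score(model, X, y, cv=5).mean() for name, model in base_layer}
results["stack"] = cross_val_score(stack, X, y, cv=5).mean()
for name, score in results.items():
    print(f"{name}: mean CV accuracy = {score:.3f}")
```

A simple meta model such as logistic regression is a common default because it has few parameters to overfit the base layer's outputs; whether the stack beats its best base model should be checked on your own data rather than assumed.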
